IMPROVING IMAGE TAG RECOMMENDATION USING FAVORITE IMAGE CONTEXT

Wonyong Eom, Sihyoung Lee, Wesley De Neve, and Yong Man Ro

Image and Video Systems Lab, Korea Advanced Institute of Science and Technology (KAIST),

Yuseong-gu, Daejeon, Republic of Korea

{ewony, ijiat, wesley.deneve, ymro}@kaist.ac.kr

ABSTRACT

Tag recommendation reduces the amount of user effort

needed to annotate images. Assuming that favorite images and

their associated tags are indicative of the visual and topical

interests of users, this paper proposes a personalized image tag

recommendation technique that makes use of favorite image

context. Specifically, to recommend tags for a newly uploaded

image, we propose to take advantage of the tags assigned to

favorite images of the user who uploaded the image, fusing tag

statistics and visual similarity. Experimental results obtained for

images and tags retrieved from Flickr indicate that the use of

favorite image context for the purpose of tag recommendation is

promising, compared to the use of personal and collective context.

Index Terms: Annotation, favorite image context, tag

recommendation, tagging, Flickr

1. INTRODUCTION

Thanks to easy-to-use multimedia devices, cheap storage and

bandwidth, and an increasing number of people going online, the

number of photos shared on online social network services keeps

growing at a fast rate. To facilitate effective image search and

management, online social network services typically make use of

tags. However, manual tagging of images is labor intensive. As a

result, images shared on online social network services are often

unannotated or only weakly annotated [1][2].

Tag recommendation reduces the amount of user

effort needed to annotate images. In [3], an image tag

recommendation method is proposed that makes use of all images

and tags available on an online social network service (collective

context). That way, a wide range of tags can be suggested.

However, tag recommendation using collective context is not

personalized [4]. Moreover, the use of collective context is

computationally expensive.

In [5], an image tag recommendation method is proposed that

makes use of images and tags previously made available by a user

(personal context). Compared to the use of collective context, the

use of personal context makes it possible to better reflect the

interests of users. In addition, the computational complexity is

lower (only a limited number of already annotated images are

used). However, the effectiveness of using personal context for

image tag recommendation is highly dependent on past tagging

behavior of a user [4]. Moreover, the tags suggested using personal

context are topically limited.

Users of online services for image sharing interact with each

other. Motivated by this observation, the authors of [4] investigate

image tag recommendation using contact and group context.

Whereas contact context is derived from images and tags made

available by the contacts of a user, group context is derived from

images and tags that belong to a pool of groups joined by the user.

The experimental results reported in [4] demonstrate that group

context can be successfully used to boost the effectiveness of

image tag recommendation.

As concluded in [4], additional types of context need to be

investigated. Moreover, the tag recommendation techniques

presented in [4] only make use of tag statistics, and do not take

advantage of visual similarity. This paper addresses both of the

aforementioned challenges, focusing on using a new type of

context for the purpose of image tag recommendation. In particular,

assuming that favorite images and their associated tags are

indicative of the visual and topical interests of a user [6], this paper

proposes to make use of favorite image context in order to realize

personalized image tag recommendation, fusing tag statistics and

visual similarity. Experimental results obtained for images and tags

retrieved from Flickr indicate that the use of favorite image context

for the purpose of tag recommendation is promising, compared to

the use of personal and collective context.

The remainder of this paper is organized as follows. In

Section 2, we introduce our novel method for image tag

recommendation, relying on favorite image context. Experimental

results are discussed in Section 3. Finally, conclusions and

directions for future research are presented in Section 4.

2. PROPOSED TAG RECOMMENDATION METHOD

2.1. Favorite image context

2.1.1. Definition

In this paper, we assume that a set of favorite images and their

associated tags reflect the visual and topical interests of a user

(given that favorite images have been explicitly bookmarked by the

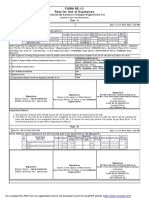

user). Fig. 1 shows the relationship between personal, collective,

and the proposed favorite image context, visualized from the point-

of-view of a user who uploaded an image that needs to be

annotated. A solid line between a user and an image indicates that

the user owns the image, whereas a dotted line between a user and

an image signals that the user bookmarked the image as a favorite

image. Finally, the three bounding boxes denote the three types of

contexts used in this paper.

...

...

personal context

favorite image context

collective context

Fig. 1. Personal, collective, and favorite image context,

visualized from the point-of-view of a user who uploaded a new

image that needs to be annotated.

2.1.2. Favorite images in MIRFLICKR-25000

Similar to the contact and group context in [4], the activity of a

user may have a significant influence on the size of the favorite

image context and on the effectiveness of image tag

recommendation. We therefore investigated the distribution of the

number of favorite images on Flickr for the 9,861 users of the

publicly available MIRFLICKR-25000 image set [7], assuming

that their behavior in terms of image bookmarking is representative

of the whole Flickr population.

Fig. 2 shows the distribution of the number of favorite images

on Flickr for the users of MIRFLICKR-25000. The x axis

represents the 9,861 users, whereas the y axis represents the

number of favorite images using a log scale. We can observe that

455 users did not bookmark any images, while 187 users

bookmarked more than 10,000 favorite images. In addition, about

40% of the users bookmarked at least 500 favorite images (the

median number of images bookmarked by the users of

MIRFLICKR-25000 is 301).
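The summary statistics quoted above (users without favorites, share of users at or above 500 favorites, and the median) can be recomputed from a list of per-user favorite counts. The following Python sketch shows one way to do so. The function name and the sample counts are hypothetical and are not the MIRFLICKR-25000 data.

```python
from statistics import median


def bookmarking_summary(favorite_counts, threshold=500):
    """Summarize per-user favorite counts (one integer per user)."""
    if not favorite_counts:
        raise ValueError("at least one user is required")
    users = len(favorite_counts)
    return {
        "median": median(favorite_counts),
        "users_without_favorites": sum(1 for c in favorite_counts if c == 0),
        "share_at_or_above_threshold": sum(
            1 for c in favorite_counts if c >= threshold
        ) / users,
    }


# Hypothetical counts for five users, for illustration only.
sample_counts = [0, 12, 301, 520, 15000]
print(bookmarking_summary(sample_counts))
```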

2.2. Tag recommendation using favorite image context

The relevance of a set of tags $T_q$ to a query image $q$ is defined as $R(T_q, q)$. If $R(T_q, q)$ is maximal, then we assume that the tags in $T_q$ are correct for $q$. Maximizing the relevance of $T_q$ to $q$ can be expressed as follows:

$$T_q^{*} = \arg\max_{T_q \subseteq T} R(T_q, q). \tag{1}$$

The computation of $R(T_q, q)$ requires evaluating a significant number of tag combinations. Therefore, to reduce the computational complexity, we assume that the tags in $T$ are statistically independent. Tag recommendation can then be described using the following equation:

$$T_q \approx \{\, t \mid t \in T \text{ and } R(t, q) > \theta \,\}, \tag{2}$$

where $t$ is a tag associated with $q$, $R(t, q)$ is the relevance of $t$ to $q$, and $\theta$ is a threshold that determines whether $t$ is correct or noisy with respect to $q$.
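The per-tag selection rule in (2) can be sketched in a few lines of Python. This is a minimal illustration, assuming the relevance values have already been computed; the function name, the scores, and the threshold value are hypothetical.

```python
def recommend_tags(relevance, threshold):
    """Keep tags whose relevance exceeds the threshold, most relevant first."""
    kept = [(tag, score) for tag, score in relevance.items() if score > threshold]
    # Sort by descending relevance; break ties alphabetically for determinism.
    kept.sort(key=lambda pair: (-pair[1], pair[0]))
    return [tag for tag, _ in kept]


# Hypothetical relevance values R(t, q) for one query image.
scores = {"street": 0.42, "bw": 0.31, "city": 0.18, "canon": 0.02}
print(recommend_tags(scores, threshold=0.10))
```

Because each tag is scored independently, the cost grows linearly with the number of candidate tags instead of with the number of tag subsets considered in (1).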

[Fig. 2 plot; x axis: User (1 to 9861); y axis: Number of favorite images (log scale, 1 to 1,000,000)]

Fig. 2. Number of favorite images per MIRFLICKR-25000 user.

The relevance function $R(t, q)$ is modeled by combining the output of two elementary relevance functions, making it possible to suggest tags that are highly ranked by both of the two elementary relevance functions. To combine the output of the two elementary relevance functions, we make use of a weighted summation:

$$R(t, q) = \alpha R_{tag}(t, q) + (1 - \alpha) R_{img}(t, q), \tag{3}$$

where $R_{tag}(t, q)$ is an elementary relevance function that makes use of tag statistics and where $R_{img}(t, q)$ is an elementary relevance function that relies on image similarity. The parameter $\alpha$ represents a normalized weight value, making it possible to trade off the influence of tag statistics against the influence of visual similarity.
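The weighted summation in (3) can be sketched as follows. The sketch assumes that both elementary scores are available as dictionaries and have already been scaled to a comparable range, which the text does not specify. A tag missing from one of the two dictionaries is treated as having a score of zero there. All names and values are hypothetical.

```python
def fuse_relevance(r_tag, r_img, alpha):
    """Combine two per-tag score dictionaries with a normalized weight alpha."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    tags = set(r_tag) | set(r_img)
    return {
        t: alpha * r_tag.get(t, 0.0) + (1.0 - alpha) * r_img.get(t, 0.0)
        for t in tags
    }


# Hypothetical elementary scores for one query image.
tag_scores = {"street": 0.8, "bw": 0.4}
img_scores = {"street": 0.5, "night": 1.0}
fused = fuse_relevance(tag_scores, img_scores, alpha=0.2)
for tag in sorted(fused, key=fused.get, reverse=True):
    print(tag, round(fused[tag], 3))
```

Setting the weight to 1 reduces the fusion to tag statistics only, and setting it to 0 reduces it to visual similarity only.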

First, $R_{tag}(t, q)$ is modeled by making use of the method

proposed in [3]. As this method relies on tag occurrence and tag

co-occurrence statistics, initial tags are needed. Similar to [4], we

randomly select two initial tags from the tags already assigned to

the test image by the user and measure the effectiveness in terms of

how many ground truth tags can be recommended (see Section 3.1).

Given a certain type of context and a set V of initial tags v for q,

the relevance value of each candidate tag t is then modeled as

follows:

$$R_{tag}(t, q) = P(t) \prod_{v \in V} \begin{cases} P(t \mid v), & \text{if } P(t \mid v) > 0, \\ \varepsilon, & \text{otherwise,} \end{cases} \tag{4}$$

where $\varepsilon$ is a non-zero value significantly smaller than the lowest $P(t \mid v)$, used to avoid the zero-probability problem (please see [4] for further details).
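A minimal Python sketch of (4) is given below. It assumes that a context is represented as a list of tag sets (one set per image) and that the occurrence and co-occurrence probabilities are estimated as ratios of image counts within that context, following the definitions in (5) and (6). The function name, the smoothing value, and the toy context are hypothetical.

```python
def tag_relevance(candidate, initial_tags, images, epsilon=1e-6):
    """Score a candidate tag from occurrence and co-occurrence counts.

    images: list of sets, each holding the tags of one image in the context.
    epsilon: hypothetical smoothing value replacing zero co-occurrence.
    """
    total = len(images)
    if total == 0:
        return 0.0
    with_candidate = [tags for tags in images if candidate in tags]
    score = len(with_candidate) / total  # P(t): share of images tagged with t
    for v in initial_tags:
        count_v = sum(1 for tags in images if v in tags)
        count_both = sum(1 for tags in with_candidate if v in tags)
        p_t_given_v = count_both / count_v if count_v else 0.0
        score *= p_t_given_v if p_t_given_v > 0 else epsilon
    return score


# Hypothetical context of four annotated images.
context = [
    {"street", "bw", "city"},
    {"street", "night"},
    {"bw", "city"},
    {"nature", "bird"},
]
print(tag_relevance("city", ["street", "bw"], context))
print(tag_relevance("bird", ["street", "bw"], context))
```

In this toy context, "city" co-occurs with both initial tags and receives a much higher score than "bird", which never co-occurs with them and is therefore scored with the smoothing value.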

The probability of a tag t occurring and the conditional

probability of two tags t and v co-occurring in a certain type of

context are defined as follows:

$$P(t) = \frac{|I_t|}{|I|}, \tag{5}$$

$$P(t \mid v) = \frac{|I_t \cap I_v|}{|I_v|}, \tag{6}$$

where $|I|$ represents the total number of images in a certain type of context, where $|I_t|$ and $|I_v|$ respectively denote the number of images annotated with $t$ and $v$ in the context used, and where $|I_t \cap I_v|$ indicates the number of images annotated with both $t$ and $v$ in the context under consideration.

Second, $R_{img}(t, q)$ is modeled using the method outlined in [8]:

$$R_{img}(t, q) = P(t \mid q, Q) = \frac{P(q \mid t, Q)\, P(t \mid Q)}{p(q \mid Q)}, \tag{7}$$

where $Q$ consists of images visually similar to $q$. The denominator is constant and can be ignored (as the denominator is not a function of $t$).

The first conditional probability in (7) is modeled using a

Gaussian distribution (please see [8] for further details). The

second conditional probability in (7) can be expressed as follows:

$$P(t \mid Q) = \frac{|I_q \cap I_t|}{|I_q|}, \tag{8}$$

where $|I_q|$ represents the number of images in $Q$ and $|I_q \cap I_t|$ denotes the number of images in $Q$ annotated with tag $t$.
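The numerator of (7) can be sketched in Python as follows. The neighbor set is assumed to consist of the k context images closest to the query in feature space, and the second factor follows (8). The Gaussian model of [8] is not detailed in this paper, so the likelihood below is only a hypothetical stand-in (a Gaussian of the mean distance between the query and the neighbors carrying the tag). All names, the value of sigma, and the toy features are assumptions.

```python
import math


def l2(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest_neighbors(query, context, k):
    """Return the k closest context images as (distance, tag_set) pairs."""
    ranked = sorted(
        ((l2(query, feature), tags) for feature, tags in context),
        key=lambda pair: pair[0],
    )
    return ranked[:k]


def image_relevance(tag, neighbors, sigma=1.0):
    """Unnormalized score: likelihood stand-in times the tag share in (8)."""
    if not neighbors:
        return 0.0
    distances = [d for d, tags in neighbors if tag in tags]
    if not distances:
        return 0.0
    prior = len(distances) / len(neighbors)  # share of neighbors tagged with t
    mean_distance = sum(distances) / len(distances)
    # Hypothetical stand-in for the Gaussian model referred to in [8].
    likelihood = math.exp(-(mean_distance ** 2) / (2.0 * sigma ** 2))
    return likelihood * prior


# Hypothetical 2-D features and tags for three context images.
context_images = [
    ([0.0, 0.0], {"street", "bw"}),
    ([1.0, 0.0], {"street"}),
    ([5.0, 5.0], {"nature"}),
]
neighbors = nearest_neighbors([0.0, 0.0], context_images, k=2)
for tag in ("street", "bw", "nature"):
    print(tag, round(image_relevance(tag, neighbors), 4))
```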

3. EXPERIMENTS

3.1. Experimental setup

We assume that users actively bookmark favorite images on Flickr.

Following this assumption, we collected images from users

meeting the following requirements: 1) the users uploaded at least

100 images; 2) the users assigned at least 500 tags; and 3) the users

bookmarked at least 500 favorite images. As a result, using the

Flickr API, we retrieved a total of 387,397 images (on September

30, 2010) from 27 users. The images retrieved are either favorite

images or owned by the 27 users selected. Moreover, the images

are annotated with a total of 4,657,288 tags by 46,686 users.

To study the influence of the number of images in the favorite

image context on the effectiveness of tag recommendation, we

divided the 27 users into four groups according to their

bookmarking activity. These four groups are listed in Table 1. The

average number of favorite images per user in MIRFLICKR-25000

was used to create a group that represents users with average

bookmarking activity (Level 1 in Table 1).

Table 1. Minimum and maximum number of favorite images for

each group of users.

Activity Minimum Maximum

Level 1 849 1,277

Level 2 2,345 2,917

Level 3 4,830 10,001

Level 4 11,441 56,115

For testing purposes, we randomly selected 378 images from

the images uploaded by the 27 users (14 test images per user);

all of the selected test images were annotated with at least

ten tags. Further, we removed images that belong to the same event.

This finally resulted in the use of 342 test images. Experts created

a ground truth by making use of the tags suggested by all of the tag

recommendation methods studied, selecting tags that describe

visual aspects of the test images used.

To calculate the distance between images, we used global and

local image features. The 128-D MPEG-7 Scalable Color

Descriptor (SCD) [9] was used as a global image feature, whereas

local image features were created using the Bag of Visual Words

(BoVW) approach [10]. BoVW used a vocabulary of 500 visual

words, derived from 61,901 training images. Interest points were

detected, described, and clustered using Difference of Gaussians

(DoG), the Scale Invariant Feature Transform (SIFT), and K-

means clustering, respectively. To measure the distance between

two images in feature space, the $L_2$ metric was used for both the

global and local image features.
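The BoVW representation and the distance computation described above can be sketched as follows: each local descriptor is assigned to its nearest visual word, the assignments are counted in a histogram, and two images are compared with the Euclidean distance between their histograms. The sketch uses a hypothetical two-word vocabulary with 2-D descriptors for readability, whereas the paper uses 500 visual words built from SIFT descriptors. Interest point detection and K-means clustering are outside its scope.

```python
import math


def l2_distance(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def bovw_histogram(descriptors, vocabulary):
    """Count how many descriptors fall closest to each visual word."""
    histogram = [0] * len(vocabulary)
    for descriptor in descriptors:
        nearest = min(
            range(len(vocabulary)),
            key=lambda i: l2_distance(descriptor, vocabulary[i]),
        )
        histogram[nearest] += 1
    return histogram


# Hypothetical vocabulary (cluster centers) and descriptors of two images.
vocabulary = [[0.0, 0.0], [10.0, 10.0]]
image_a = [[1.0, 0.0], [0.0, 2.0], [9.0, 9.0]]
image_b = [[10.0, 11.0], [9.0, 10.0]]

hist_a = bovw_histogram(image_a, vocabulary)
hist_b = bovw_histogram(image_b, vocabulary)
print(hist_a, hist_b, l2_distance(hist_a, hist_b))
```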

We measured the effectiveness of tag recommendation using

the following metrics: the precision of the top five recommended

tags (P@5), success among the top five recommended tags (S@5),

and the precision of the top one recommended tag (P@1).
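The three metrics can be computed per test image and then averaged over all test images. The following Python sketch assumes that S@k equals 1 when at least one of the top k recommended tags belongs to the ground truth and 0 otherwise, and that P@k divides by k even when fewer than k tags are recommended. The function names and the example data are hypothetical.

```python
def precision_at_k(ranked_tags, ground_truth, k):
    """Fraction of the top k recommended tags that are in the ground truth."""
    hits = sum(1 for tag in ranked_tags[:k] if tag in ground_truth)
    return hits / k


def success_at_k(ranked_tags, ground_truth, k):
    """1.0 if any of the top k recommended tags is correct, else 0.0."""
    return 1.0 if any(tag in ground_truth for tag in ranked_tags[:k]) else 0.0


def mean_over_images(metric, results, k):
    """Average a metric over (ranked_tags, ground_truth) pairs."""
    return sum(metric(ranked, truth, k) for ranked, truth in results) / len(results)


# Hypothetical recommendations and ground truth for two test images.
results = [
    (["street", "blue", "film", "black", "color"], {"street", "film", "night"}),
    (["italy", "paris", "canon", "people", "milano"], {"silhouette", "shadow"}),
]
print("P@5", mean_over_images(precision_at_k, results, 5))
print("S@5", mean_over_images(success_at_k, results, 5))
print("P@1", mean_over_images(precision_at_k, results, 1))
```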

3.2. Experimental results

3.2.1. Effectiveness of using favorite image context

Our first experiment made use of $R_{tag}(t, q)$ to recommend tags.

Compared to the use of personal and collective context, Table 2 for

instance shows that the use of favorite image context allows for a

relative improvement of 36% and 16%, respectively, in terms of P@5.

Table 2. Tag recommendation using $R_{tag}(t, q)$.

Context P@5 S@5 P@1

Personal 0.158 0.609 0.318

Collective 0.208 0.612 0.373

Favorite 0.247 0.729 0.457

Our second experiment made use of $R_{img}(t, q)$ to recommend

tags (using SCD). Table 3 shows that the use of favorite image

context is more effective than the use of personal and collective

context, irrespective of the metric used. As an example, compared

to personal and collective context, favorite image context

respectively allows for a relative improvement of 36% and 29% in

terms of P@5.

Table 3. Tag recommendation using SCD-based $R_{img}(t, q)$.

Context P@5 S@5 P@1

Personal 0.187 0.629 0.384

Collective 0.208 0.611 0.324

Favorite 0.294 0.813 0.446
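The relative improvements quoted for Tables 2 and 3 can be checked with a short calculation. The percentages in the text appear to be obtained by dividing the P@5 difference by the score of favorite image context. Dividing by the baseline score instead, which is another common convention, yields larger values. The sketch below computes both; the function name and the `reference` parameter are hypothetical.

```python
def relative_gain(favorite, baseline, reference="favorite"):
    """Difference between two scores, relative to the chosen reference score."""
    difference = favorite - baseline
    denominator = favorite if reference == "favorite" else baseline
    return difference / denominator


# P@5 values taken from Tables 2 and 3.
p_at_5 = {
    "Table 2": {"Personal": 0.158, "Collective": 0.208, "Favorite": 0.247},
    "Table 3": {"Personal": 0.187, "Collective": 0.208, "Favorite": 0.294},
}

for table, row in p_at_5.items():
    for context in ("Personal", "Collective"):
        to_favorite = relative_gain(row["Favorite"], row[context], "favorite")
        to_baseline = relative_gain(row["Favorite"], row[context], "baseline")
        print(table, context, round(100 * to_favorite), round(100 * to_baseline))
```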

Our third experiment made use of $R_{img}(t, q)$ to recommend tags

(using BoVW). Table 4 shows that the use of favorite image

context is most effective in terms of P@5 and S@5, while the use

of collective context is most effective in terms of P@1.

Table 4. Tag recommendation using BoVW-based $R_{img}(t, q)$.

Context P@5 S@5 P@1

Personal 0.206 0.697 0.367

Collective 0.309 0.767 0.523

Favorite 0.317 0.813 0.513

3.2.2. Influence of fusion and bookmarking activity

Fig. 3 shows the influence of bookmarking activity on the

effectiveness of tag recommendation when making use of favorite

image context. The following weight values were heuristically

selected for the weight $\alpha$ in (3), optimizing the effectiveness of tag recommendation:

$\alpha$ = 0.45 when using SCD and $\alpha$ = 0.2 when using BoVW. We can

observe that the combined use of tag statistics and visual similarity

is more effective than the separate use of either tag statistics or

visual similarity. Indeed, the combined use of tag statistics and

visual similarity allows suggesting tags that are ranked high by

both of the two elementary relevance functions. Also, the

combined use of tag statistics and BoVW is more effective than the

combined use of tag statistics and SCD.

Fig. 4 shows three example images. For each of these images,

the top 10 recommended tags are provided and sorted according to

their rank. The rank of a recommended tag is determined using

R(t,q). Correct tags (i.e., tags that belong to the ground truth) have

been underlined in Fig. 4. We can observe that fusing tag statistics

and visual similarity allows suggesting more relevant tags than the

separate use of either tag statistics or visual similarity.

[Fig. 4: three example images with their top 10 recommended tags per method]

tag statistics

Image 1: october, street, film, michigan, bw, california, color, architecture, city, japan

Image 2: nature, africa, photo, image, moth, bird, wildlife, birds, macro, Australia

Image 3: sardegna, mare, donna, fitness, street, red, luce, bw, green, light

visual similarity

Image 1: blue, street, film, clouds, night, black, bw, history, rome, color

Image 2: nature, wildlife, macro, birds, africa, flower, animal, flowers, safari, butterfly

Image 3: italy, bw, red, milano, green, street, silhouette, people, paris, canon

tag statistics + visual similarity

Image 1: street, blue, film, black, color, city, night, history, architecture, city

Image 2: nature, wildlife, macro, birds, africa, animal, bird, flower, safari, flowers

Image 3: italy, bw, red, green, street, milano, silhouette, light, sardegna, shadow

Fig. 4. Example images with recommended tags using favorite image context.

[Fig. 3 plot; x axis: Type of user group (Level 1 to Level 4); y axis: P@5 (0 to 0.4); series: tag statistics, visual similarity (SCD), visual similarity (BoVW), tag statistics + visual similarity (SCD), tag statistics + visual similarity (BoVW)]

Fig. 3. P@5 for different levels of bookmarking activity.

4. CONCLUSIONS AND FUTURE WORK

This paper proposed a novel method for image tag

recommendation, making use of favorite image context. Our

experimental results show that, in general, the use of favorite

image context is more effective than the use of personal and

collective context. In addition, fusing tag statistics and visual

similarity allows for a higher effectiveness in terms of P@5,

compared to their separate usage.

Future research will analyze the computational complexity of

the proposed tag recommendation method in more detail. Attention

will also be paid to more advanced weighting schemes.

5. ACKNOWLEDGEMENTS

This research was supported by the Basic Science Research

Program of the National Research Foundation (NRF) of Korea,

funded by the Ministry of Education, Science and Technology

(research grant: 2010-0012495).

6. REFERENCES

[1] X. Li, C. G. M. Snoek, and M. Worring, "Learning Social Tag Relevance by Neighbor Voting," IEEE Transactions on Multimedia, pp. 1310-1322, 2009.

[2] D. Liu, M. Wang, L. Yang, X. Hua, and H. J. Zhang, "Tag Quality Improvement for Social Images," Proc. of ICME, pp. 350-353, 2009.

[3] B. Sigurbjörnsson and R. van Zwol, "Flickr Tag Recommendation based on Collective Knowledge," International Conference on World Wide Web, pp. 327-336, 2008.

[4] A. Rae, B. Sigurbjörnsson, and R. van Zwol, "Improving Tag Recommendation using Social Networks," International Conference on Adaptivity, Personalization and Fusion of Heterogeneous Information, 2010.

[5] N. Garg and I. Weber, "Personalized, Interactive Tag Recommendation for Flickr," ACM Conference on Recommender Systems, pp. 67-74, 2008.

[6] R. van Zwol, A. Rae, and L. G. Pueyo, "Prediction of Favourite Photos using Social, Visual, and Textual Signals," ACM Multimedia, pp. 1015-1018, 2010.

[7] M. J. Huiskes and M. S. Lew, "The MIR Flickr Retrieval Evaluation," ACM International Conference on Multimedia Information Retrieval, pp. 39-43, 2008.

[8] S. Lee, W. De Neve, K. N. Plataniotis, and Y. M. Ro, "MAP-based Image Tag Recommendation using a Visual Folksonomy," Pattern Recognition Letters, pp. 975-982, 2010.

[9] B. S. Manjunath, P. Salembier, and T. Sikora, Introduction to MPEG-7: Multimedia Content Description Interface. John Wiley and Sons, 2002.

[10] P. Tirilly, V. Claveau, and P. Gros, "Language Modeling for Bag-of-Visual Words Image Categorization," International Conference on Content-based Image and Video Retrieval, pp. 249-258, 2008.


- Description-Based Substitution Methods For Emulating Temporal Scalability in State-of-the-Art Video Coding FormatsДокумент4 страницыDescription-Based Substitution Methods For Emulating Temporal Scalability in State-of-the-Art Video Coding FormatsWesley De NeveОценок пока нет

- Shot Boundary Detection Using Macroblock Prediction Type InformationДокумент4 страницыShot Boundary Detection Using Macroblock Prediction Type InformationWesley De NeveОценок пока нет

- On An Evaluation of Transformation Languages in A Fully XML-Driven Framework For Video Content AdaptationДокумент4 страницыOn An Evaluation of Transformation Languages in A Fully XML-Driven Framework For Video Content AdaptationWesley De NeveОценок пока нет

- Adaptive Residual Interpolation: A Tool For Efficient Spatial Scalability in Digital Video CodingДокумент7 страницAdaptive Residual Interpolation: A Tool For Efficient Spatial Scalability in Digital Video CodingWesley De NeveОценок пока нет
