ANALYZING WEB LOGS TO IDENTIFY COMMON ERRORS AND IMPROVE WEB RELIABILITY

Zhao Li and Jeff Tian

Southern Methodist University Dept of Computer Science and Engineering, SMU, Dallas, Texas 75275, USA

ABSTRACT

In this paper, we analyze web access and error logs to identify major error sources and to evaluate web site reliability. Our results show that both error distribution and reliability distribution among different file types are highly uneven, and point to the potential benefit of focusing on specific file types with high concentration of errors and high impact to effectively improve web site reliability and overall user satisfaction.

KEYWORDS

Web errors and problems, reliability, error log, access log, file type and classification.

1. INTRODUCTION

The prevalence of the World Wide Web also spreads intended or unintended problems on an ever larger scale. The problems include various malicious viruses as well as unintended problems caused by communication breakdowns, hardware failures, and software defects. Identifying the root causes for these problems can help us understand their severity and scope. More importantly, such understandings help us derive effective means to deal with the problems and improve web reliability.

In this paper, we focus on the identification, analysis, and characterization of software defects that lead to web problems and affect web reliability. Since the 80:20 rule (Koch, 2000), which states that the majority (say 80%) of the problems can be traced back to a small proportion (say 20%) of the components, has been observed to be generally true for software systems (Porter and Selby, 1990; Tian, 1995), the identification and characterization of these major error sources could also lead us to effective reliability improvement. For web applications, various log files are routinely kept at web servers. In this paper, we extend our previous study on statistical web testing and reliability analysis in (Kallepalli and Tian, 2001) to extract web error and workload information from these log files to support our analyses.

The rest of the paper is organized as follows: Section 2 analyzes the web reliability problems and examines the contents of various web logs. Section 3 presents our error analyses, with common error sources identified and characterized, followed by reliability analyses in Section 4. Conclusions and future directions are discussed in Section 5.

2. WEB PROBLEMS AND WEB LOGS

We next examine the general characteristics of web problems and information concerning web traffic and errors recorded in the web server logs, to set the stage for our analyses of web errors and reliability.

2.1 Characterizing web problems and web site reliability

Key to the satisfactory performance of the web is acceptable reliability. The reliability for web applications can be defined as the probability of failure-free web operation completions. Acceptable reliability can be achieved via prevention of web failures or reduction of chances for such failures. We define web failures as the inability to obtain and deliver information, such as documents or computational results, requested by web users. This definition conforms to the standard definition of failures being the behavioral deviations from user expectations (IEEE, 1990). Based on this definition, we can consider the following failure sources in this process of obtaining and delivering information requested by web users:

- Host or network failures: Host hardware or systems failures and network communication problems may lead to web failures. However, such failures are no different from regular system or network failures, which can be analyzed by existing techniques. Therefore, these failure sources are not the focus of our study.
- Browser failures: These failures can be treated the same way as software product failures, thus existing techniques for software quality assurance and reliability analysis can be used to deal with such problems. Therefore, they are not the focus of our study either.
- Source or content failures: Web failures can also be caused by the information source itself at the server side. We will primarily deal with this kind of web failures in this study.

IADIS International Conference e-Society 2003

The failure information, when used in connection with workload measurement, can be fed to many software reliability models (Lyu, 1995; Musa, 1998) to help us evaluate the web site reliability and the potential for reliability improvement. In this paper, we use the Nelson model (Nelson, 1978), one of the earliest and most widely used input domain reliability models, to assess the web site's current reliability. If a total of f failures are observed for n workload units, the estimated reliability R according to the Nelson model can be obtained as:

$$R = 1 - r = 1 - \frac{f}{n} = \frac{n - f}{n}$$

where r = f/n is the failure rate, a complementary measure to reliability R. The summary reliability measure, mean-time-between-failures (MTBF), can be calculated as:

$$\mathrm{MTBF} = \frac{n}{f} = \frac{1}{r}$$

If discovered defects are fixed over time, the effect on reliability (or reliability growth due to defect removal) can be analyzed by using various software reliability growth models (Lyu, 1995). Both the time domain and input domain information can also be used in tree-based reliability models (Tian, 1995) to identify reliability bottlenecks for focused reliability improvement.
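The Nelson model estimates above reduce to a few lines of arithmetic. The following is a minimal sketch, not the authors' implementation; the function name `nelson_estimates` and the example figures are hypothetical and chosen only for illustration.

```python
def nelson_estimates(failures: int, workload_units: int) -> dict:
    """Estimate failure rate r, reliability R, and MTBF from f failures in n units."""
    if workload_units <= 0:
        raise ValueError("workload_units must be positive")
    if not 0 <= failures <= workload_units:
        raise ValueError("failures must lie between 0 and workload_units")
    r = failures / workload_units          # r = f / n
    return {
        "failure_rate": r,
        "reliability": 1.0 - r,            # R = 1 - r = (n - f) / n
        # MTBF = n / f, expressed in workload units; undefined when f = 0
        "mtbf": workload_units / failures if failures else float("inf"),
    }


# Hypothetical figures: 5 failures observed over 200 workload units.
estimates = nelson_estimates(failures=5, workload_units=200)
print(estimates)
```

Note that MTBF here is measured in workload units (for example, requests) rather than in clock time, because the workload measure n plays the role of time in the model.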

2.2 Web logs and their contents

Two types of log files are commonly used by web servers: individual web accesses, or hits, are recorded in access logs, and related problems are recorded in error logs. Sample entries from such log files for the www.seas.smu.edu web site are given in Figures 1 and 2.

148.233.119.16 - - [16/Aug/1999:03:38:25 -0500] "GET /ce/seas/ HTTP/1.0" 200 10670 "http://www.seas.smu.edu/" "Mozilla/2.0 (compatible; MSIE 3.01; Windows 95)"

Figure 1. A sample entry in an access log.

[Mon Aug 16 00:00:56 1999] [error] [client 129.119.4.17] File does not exist: /users/csegrad2/srinivas/public_html/Image10.jpg

Figure 2. A sample entry in an error log.

A "hit" is registered in the access log if a file corresponding to an HTML page, a document, or other web content is explicitly requested, or if some embedded content, such as graphics or a Java class within an HTML page, is implicitly requested or activated. Most web servers record the following information in their access logs: the requesting computer, the date and time of the request, the file that the client requested, the size of the requested file, and an HTTP status code. In this paper, we use this information together with error information to assess the impact on web reliability by different types of web sources.
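The access log fields listed above can be extracted with a regular expression. This is a minimal sketch that assumes the entry layout shown in Figure 1 (the combined log format); the names `ACCESS_LINE` and `parse_access_line` are hypothetical, and the paper's own utilities were written in Perl, not Python.

```python
import re
from datetime import datetime

# Assumed layout: host ident user [time] "request" status size "referrer" "agent"
ACCESS_LINE = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) '
    r'\[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
    r'(?: "(?P<referrer>[^"]*)" "(?P<agent>[^"]*)")?'
)


def parse_access_line(line: str):
    """Return a dict of fields for one access log entry, or None if it does not match."""
    match = ACCESS_LINE.match(line)
    if match is None:
        return None
    record = match.groupdict()
    record["time"] = datetime.strptime(record["time"], "%d/%b/%Y:%H:%M:%S %z")
    record["status"] = int(record["status"])
    record["size"] = 0 if record["size"] == "-" else int(record["size"])
    parts = record["request"].split()
    record["method"] = parts[0] if parts else None
    record["path"] = parts[1] if len(parts) > 1 else None
    return record


# The sample entry from Figure 1.
SAMPLE_ACCESS_LINE = (
    '148.233.119.16 - - [16/Aug/1999:03:38:25 -0500] "GET /ce/seas/ HTTP/1.0" '
    '200 10670 "http://www.seas.smu.edu/" '
    '"Mozilla/2.0 (compatible; MSIE 3.01; Windows 95)"'
)
record = parse_access_line(SAMPLE_ACCESS_LINE)
print(record["host"], record["path"], record["status"], record["size"])
```

Counting the entries that parse successfully gives the hit count used as the workload measure in the reliability analyses.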


Although access logs also record common HTTP errors, separate error logs are typically used by web servers to record details about the problems encountered. The format of these error logs is simple: a timestamp followed by the error message, such as in Figure 2. Common error types are listed below:

- permission denied
- no such file or directory
- stale NFS file handle
- client denied by server configuration
- file does not exist
- invalid method in request
- invalid URL in request connection
- mod_mime_magic
- request failed
- script not found or unable to start
- connection reset by peer

Notice that most of these errors conform closely to the source or content failures we defined in Section 2.1. We refer to such failures as errors in subsequent discussions to conform to the commonly used terminology in the web community. Questions about error occurrences and distribution, as well as overall reliability of the web site, can be answered by analyzing error logs and access logs.
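Tallying error log entries by type can be sketched as follows. This is an illustration only: it assumes the entry layout of Figure 2, matches a subset of the error types by case-insensitive substring (an assumption about how messages are worded), and uses hypothetical names (`ERROR_LINE`, `classify_error`, `count_error_types`). The second sample line is invented for the example.

```python
import re
from collections import Counter

# Assumed layout: [timestamp] [level] [client address] message
ERROR_LINE = re.compile(
    r'\[(?P<time>[^\]]+)\] \[(?P<level>\w+)\] '
    r'(?:\[client (?P<client>[^\]]+)\] )?(?P<message>.*)'
)

# A subset of the error types named in the text, matched as lowercase substrings.
ERROR_TYPES = [
    "permission denied",
    "no such file or directory",
    "stale nfs file handle",
    "client denied by server configuration",
    "file does not exist",
    "script not found or unable to start",
    "connection reset by peer",
]


def classify_error(line: str):
    """Return the matching error type, 'other', or None for a non-log line."""
    match = ERROR_LINE.match(line)
    if match is None:
        return None
    message = match.group("message").lower()
    for error_type in ERROR_TYPES:
        if error_type in message:
            return error_type
    return "other"


def count_error_types(lines):
    """Count entries per error type, skipping lines that are not log entries."""
    kinds = (classify_error(line) for line in lines)
    return Counter(kind for kind in kinds if kind is not None)


SAMPLE_ERROR_LINE = (
    "[Mon Aug 16 00:00:56 1999] [error] [client 129.119.4.17] "
    "File does not exist: /users/csegrad2/srinivas/public_html/Image10.jpg"
)
# Hypothetical second entry, invented for illustration.
HYPOTHETICAL_LINE = (
    "[Mon Aug 16 00:01:02 1999] [error] [client 10.0.0.1] "
    "Permission denied: /some/restricted/page.html"
)
print(count_error_types([SAMPLE_ERROR_LINE, HYPOTHETICAL_LINE, SAMPLE_ERROR_LINE]))
```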

2.3 Web log analyses for www.seas.smu.edu

In this paper, we analyze the web logs from www.seas.smu.edu, the official web site for the School of Engineering at Southern Methodist University, to demonstrate the viability and effectiveness of our approach. This web site utilizes Apache Web Server (Behlandorf, 1996), a popular choice among many web hosts, and shares many common characteristics of web sites for educational institutions. These features make our observations and results meaningful to many application environments.

We analyzed recent server log data covering 26 consecutive days for this web site. The access log is about 130 megabytes in size, and contains more than 760,000 records. The error log is about 13.5 megabytes in size, and contains more than 30,000 records. These data are large enough for our study to avoid random variations that may lead to severely biased results. On the other hand, because web contents evolve constantly, data covering longer periods call for analyses that take change into consideration, which differ from the analyses we performed in this study.

We previously used some pre-existing log analyzers in (Kallepalli and Tian, 2001) to analyze the access logs. However, these analyzers provide only very limited capability for error analysis. Therefore, we implemented various utility programs in Perl to count the number of errors and the number of hits, and to capture frequently used navigation patterns therein. In this study, we extended these utility programs to support additional information extraction and analyses.

3. WEB ERROR ANALYSIS AND CHARACTERIZATION

With the information extracted from our web server logs, we can perform various error analyses to obtain desired results, as presented in this section.

3.1 Analysis of error types

For the 26 days covered by our web server logs, a total of 30760 errors were recorded. The distribution of these errors by error types is given in Table 1. The most dominant error types are permission denied and file does not exist. The former accounts for unauthorized access to web resources, while the latter accounts for the occasions when the requested file was not found on the system.


Table 1. Error distribution by predefined error types.

| Error type | Errors |
|---|---|
| permission denied | 2079 |
| no such file or directory | 14 |
| stale NFS file handle | 4 |
| client denied by server configuration | 2 |
| file does not exist | 28631 |
| invalid method in request | 0 |
| invalid URL in request connection | 1 |
| mod_mime_magic | 1 |
| request failed | 1 |
| script not found or unable to start | 27 |
| connection reset by peer | 0 |
| Total | 30760 |

There are two scenarios in the denied access situations: The first is for denying unauthorized accesses to restricted resources, which should not be counted as web failures. The second is wrongfully denied accesses to unrestricted resources or to restricted resources with proper access authorization, which should be counted as web failures. Consequently, further information needs to be gathered for this type of error to determine whether to count each occurrence as a failure. Only then can these properly counted failures be used in web reliability analyses.

File-not-found errors usually represent bad links, and should be counted as web failures. They are also called 404 errors because of their error code in the web logs. They are by far the most common type of problem in web usage, accounting for more than 90% of the total errors in this case. Further analyses can be performed to examine the causes for these errors and to assess web site reliability.

3.2 Identifying error sources

For 404 errors, or file does not exist errors, the obvious questions to ask are: "What kind of files are they?" and "How often do they occur?" Fortunately, our log files include information about requested files. From this information, we can group requests leading to 404 errors by the requested file types, to examine which kinds of files are the major sources for such errors. To do this, we can simply classify the errors by the corresponding file extensions, which usually indicate the file types. We need to keep in mind that different variations of file extensions may exist for the same type. For example, .html, .htm, .HTML, etc., all indicate that the requested file is an HTML file or of HTML file type. However, this did not turn out to be a real problem, because there is typically a dominant file extension for each major file type on our web site.
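Grouping 404 errors by file extension can be sketched as below. Several choices here are assumptions rather than details from the study: extensions are lowercased, a small hypothetical alias table (`ALIASES`) folds variants such as .htm into .html, and any path without an extension is counted as a directory.

```python
import posixpath
from collections import Counter

# Hypothetical alias table folding extension variants into one file type.
ALIASES = {".htm": ".html", ".jpeg": ".jpg"}
PREFIX = "File does not exist: "


def file_type(path: str) -> str:
    """Map a requested path to a file type based on its extension."""
    name = posixpath.basename(path.split("?", 1)[0])
    extension = posixpath.splitext(name)[1].lower()
    if not extension:
        return "directory"      # assumption: no extension means a directory request
    return ALIASES.get(extension, extension)


def errors_by_file_type(messages):
    """Count 'File does not exist' messages per file type."""
    counts = Counter()
    for message in messages:
        if message.startswith(PREFIX):
            counts[file_type(message[len(PREFIX):].strip())] += 1
    return counts


messages = [
    "File does not exist: /users/csegrad2/srinivas/public_html/Image10.jpg",
    "File does not exist: /hypothetical/page.HTM",      # invented example path
    "File does not exist: /hypothetical/dir/",           # invented example path
]
print(errors_by_file_type(messages).most_common())
```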

Table 2. File types and corresponding errors caused by such files.

| File type | Errors | % of total |
|---|---|---|
| .gif | 12471 | 43.56% |
| .class | 4913 | 17.16% |
| directory | 4443 | 15.52% |
| .html | 3639 | 12.71% |
| .jpg | 1354 | 4.73% |
| .ico | 849 | 2.97% |
| .pdf | 235 | 0.82% |
| .mp3 | 214 | 0.75% |
| .ps | 209 | 0.73% |
| .doc | 75 | 0.26% |
| Cumulative | 28402 | 99.20% |
| Total | 28631 | 100% |

For our web site, there are more than 100 different file extensions, with most of them accounting for very few 404 errors. We sorted these file extensions by their corresponding 404 errors and give the results for the top 10 in Table 2. These top 10 error sources represent a dominating share (more than 99%) of the overall 404 errors. In fact, only four file types, .gif, .class, directory, and .html, represent close to 90% of all the errors. Our results generally confirm the uneven distribution of problems, or the 80:20 rule mentioned earlier, and point out the potential benefit of identifying and correcting such highly problematic areas.

The top error sources indicate what kind of problems a web user would be most likely to encounter when her request was not completed because of 404 errors. Consequently, fixing these problems would improve the overall web site reliability and thus improve overall user satisfaction. Furthermore, root cause analysis can be carried out to understand the reasons or causes for these high defect file types, and corresponding follow-up actions can be carried out to prevent future problems related to the identified causes.

On the other hand, the effort to fix the problems may not be directly linked to the total number of 404 errors corresponding to each file type, because a few missing files may be requested repeatedly with high frequency, resulting in high error count for the corresponding file type. In fact, the effort to fix the problems is directly proportional to the actual number of such missing files. This number can be obtained from the error log by counting the unique 404 errors, i.e., an error is counted only once at its first observation, but not counted subsequently.
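Counting unique 404 errors alongside total 404 errors amounts to tracking the set of distinct missing paths per file type. A minimal sketch, assuming each error has already been reduced to a (file type, path) pair; the function name and the sample paths are hypothetical.

```python
from collections import defaultdict


def summarize_missing_files(errors):
    """errors: iterable of (file_type, path) pairs, one pair per 404 error.

    Returns, per file type, the total error count and the number of
    distinct missing paths (each path counted once, at first observation).
    """
    total = defaultdict(int)
    seen = defaultdict(set)
    for ftype, path in errors:
        total[ftype] += 1
        seen[ftype].add(path)
    return {t: {"errors": total[t], "unique": len(seen[t])} for t in total}


# Invented data: one missing .class file requested three times,
# and two different missing .html files requested once each.
errors = [
    (".class", "/demo/Applet.class"),
    (".class", "/demo/Applet.class"),
    (".class", "/demo/Applet.class"),
    (".html", "/old/a.html"),
    (".html", "/old/b.html"),
]
print(summarize_missing_files(errors))
```

In this invented data, the .class type has the higher error count but needs only one fix, which is the distinction the unique-error count is meant to expose.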

Table 3. File types and corresponding unique errors or missing files.

| File type | Unique errors | % of total | Errors | % of total |
|---|---|---|---|---|
| directory | 1137 | 40.46% | 4443 | 15.52% |
| .html | 897 | 31.92% | 3639 | 12.71% |
| .gif | 274 | 9.75% | 12471 | 43.56% |
| .ico | 122 | 4.34% | 849 | 2.97% |
| .jpg | 106 | 3.77% | 1354 | 4.73% |
| .ps | 52 | 1.85% | 209 | 0.73% |
| .pdf | 42 | 1.49% | 235 | 0.82% |
| .txt | 25 | 0.89% | 32 | 0.11% |
| .doc | 23 | 0.82% | 75 | 0.26% |
| .class | 21 | 0.75% | 4913 | 17.16% |
| Cumulative | 2699 | 96.05% | 28220 | 98.56% |
| Total | 2810 | 100% | 28631 | 100% |

Table 3 gives the top 10 missing file types and the corresponding numbers of such missing files, with the corresponding total errors also presented for comparison. These top 10 file types are almost identical to those in Table 2, with the exception that .mp3 is replaced by .txt. Similar to Table 2, there is an uneven distribution of missing files, with the top 10 file types representing 96.05% of all the missing files, and three types, directory, .html, and .gif, representing more than 80%. However, the relative ranking is quite different. The most striking difference is for the .class files, where 21 such missing files, which represent only 0.75% of all the missing files, were requested 4913 times (or 17.16% of the 404 errors). A similar difference is also observed for .gif files, with relatively few missing files requested relatively more often. The directories and .html files demonstrate the opposite trend, where relatively more missing files or directories were requested less often. These differences also confirm the usefulness of obtaining unique errors or missing file counts: Information such as that in Table 3 could help us properly allocate development effort to fix missing file problems.
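One way to make the contrast concrete is to divide the 404 errors by the number of missing files for each type. The sketch below uses four rows of Table 3; the ratio itself and the names `TABLE3_ROWS` and `errors_per_missing_file` are illustrative additions, not a measure defined in the paper.

```python
# (unique missing files, total 404 errors) for four rows of Table 3.
TABLE3_ROWS = {
    "directory": (1137, 4443),
    ".html": (897, 3639),
    ".gif": (274, 12471),
    ".class": (21, 4913),
}


def errors_per_missing_file(rows):
    """Average number of 404 errors caused by each missing file, per file type."""
    return {ftype: errors / unique for ftype, (unique, errors) in rows.items()}


ratios = errors_per_missing_file(TABLE3_ROWS)
# List file types from the most to the least frequently requested missing files.
for ftype, ratio in sorted(ratios.items(), key=lambda item: item[1], reverse=True):
    print(f"{ftype:10s} {ratio:8.2f} errors per missing file")
```

A high ratio marks file types where fixing a small number of files removes many errors.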

4. WEB RELIABILITY EVALUATION

The number of errors and the number of unique errors obtained above give us a general indication of the quality of a given web site. However, such error counts are generally more meaningful to the web site owners and maintainers than to the general web users. From a web user's point of view, it doesn't matter how many errors or non-existent files are present in a web site: as long as she can most likely get what she requested, the web site quality is good. In other words, even if there are many 404 errors related to a specific type of file, if the vast majority of requests for files of this type can be found and delivered, it is not much of a problem. Conversely, even if there are just a few 404 errors for a file type, if most of the corresponding requests result in file does not exist, it is a serious problem. Therefore, the number of errors needs to be viewed in relation to the number of requests. This characterization of web quality from the user's perspective is captured in various reliability measures, to be analyzed in this section.


4.1 Assessment of overall web site reliability

As mentioned in Section 2, the reliability of a web site can be defined as the probability of failure-free (or error-free, in the terminology used in the web community) user request completions. Consequently, we also need to characterize the user requests, or the workload for the web site, for web site reliability assessment. This kind of workload characterization and measurement is a common activity for all reliability analyses, where appropriate workload measurement can typically lead to more accurate reliability assessments and predictions (Tian and Palma, 1997).

For web applications, the number of accesses, or the hit count (or hits), directly corresponds to user requests, and can be used to measure the overall workload for a web site (Kallepalli and Tian, 2001). To evaluate the web site reliability, we need to obtain the hit information, in addition to the error information above, and then apply various reliability models. As mentioned in Section 2.1, we can directly use the Nelson model (Nelson, 1978) to obtain the web reliability R and other related reliability measures, such as error rate r and mean-time-between-failures MTBF. For the web site www.seas.smu.edu, the total number of hits over the 26-day period is 763021 and the total number of 404 errors is 28631, giving us the following:

- Error rate r = 0.0375, or 3.75% of the requests will result in a 404 error.
- Reliability R = 0.9625, or the web site is 96.25% reliable against 404 errors.
- MTBF = 26.65, or on average, a user would expect a 404 error for every 26.65 requests.

If we count all the errors (not just 404 errors), the total is 30760, giving us slightly worse reliability numbers, as follows:

- Error rate r = 0.0403, or 4.03% of the requests will result in an error.
- Reliability R = 0.9597, or the web site is 95.97% reliable.
- MTBF = 24.81, or on average, a user would expect a problem for every 24.81 requests.

Table 4. File types and corresponding reliability (error rate).

| File type | Hits | % of total | Errors | % of total | Error rate |
|---|---|---|---|---|---|
| .gif | 438536 | 57.47% | 12471 | 43.56% | 0.0284 |
| .html | 128869 | 16.89% | 3639 | 12.71% | 0.0282 |
| directory | 87067 | 11.41% | 4443 | 15.52% | 0.0510 |
| .jpg | 65876 | 8.63% | 1354 | 4.73% | 0.0199 |
| .pdf | 10784 | 1.41% | 235 | 0.82% | 0.0218 |
| .class | 10055 | 1.32% | 4913 | 17.16% | 0.4886 |
| .ps | 2737 | 0.36% | 209 | 0.73% | 0.0764 |
| .ppt | 2510 | 0.33% | 7 | 0.02% | 0.0028 |
| .css | 2008 | 0.26% | 17 | 0.06% | 0.0085 |
| .txt | 1597 | 0.21% | 32 | 0.11% | 0.0200 |
| .doc | 1567 | 0.21% | 75 | 0.26% | 0.0479 |
| .c | 1254 | 0.16% | 5 | 0.02% | 0.0040 |
| .ico | 849 | 0.11% | 849 | 2.97% | 1.0000 |
| Cumulative (or average rate) | 753709 | 98.78% | 28249 | 98.67% | 0.0375 |
| Total (or average rate) | 763021 | 100% | 28631 | 100% | 0.0375 |

4.2 Reliability by file types and potential for reliability improvement

The above analyses gave us the overall reliability assessment for the web site. However, when a user requests different types of files, she may experience different reliability. To calculate this reliability for individual file types, we can customize the Nelson model (Nelson, 1978) by using the number of errors and the number of hits associated with the specific file types only. This gives us the reliability analysis results in Table 4, ranked by the number of hits for each file type. We only calculated error rate for those file types with relatively large hit counts in order to minimize random variations due to small number of observations.
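Applying the Nelson model per file type means dividing each type's errors by its own hits. The sketch below uses four rows of Table 4; the `min_hits` cutoff is a hypothetical parameter standing in for the "relatively large hit counts" criterion, whose actual threshold the paper does not state.

```python
# (hits, 404 errors) for four rows of Table 4.
TABLE4_SUBSET = {
    ".gif": (438536, 12471),
    ".class": (10055, 4913),
    ".ppt": (2510, 7),
    ".ico": (849, 849),
}


def error_rates(hits_and_errors, min_hits=1):
    """Per-type error rate r = errors / hits, skipping types with too few hits."""
    return {
        ftype: errors / hits
        for ftype, (hits, errors) in hits_and_errors.items()
        if hits >= max(min_hits, 1)
    }


for ftype, rate in error_rates(TABLE4_SUBSET).items():
    print(f"{ftype:8s} r = {rate:.4f}  R = {1 - rate:.4f}")

# With a hypothetical cutoff of 1000 hits, low-traffic types are excluded.
print(sorted(error_rates(TABLE4_SUBSET, min_hits=1000)))
```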


The subsets with extremely low reliability (or high error rate) are the types .ico and .class, where every request for .ico files has resulted in a 404 error, while close to half (48.86%) of the requests for .class files have resulted in 404 errors. Further analysis can be carried out to understand the root causes for these low reliability file types, and corresponding follow-up actions can be carried out to prevent future reliability problems related to the identified causes. Relative to the average error rate of 0.0375, we can categorize these 13 major file types into four categories by their reliability (error rate):

- good reliability category, with error rate r < 0.01, includes .c, .css, and .ppt files.
- above-average reliability category, with error rate r between 0.01 and 0.0375 (average), includes .txt, .html, .gif, .jpg, and .pdf files.
- below-average reliability category, with error rate r between 0.0375 (average) and 0.1, includes directories and .doc and .ps files.
- poor reliability category, with error rate r > 0.1, includes .ico and .class files.
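The four categories can be expressed as a simple threshold function. In this sketch the thresholds 0.01, 0.0375, and 0.1 come from the text, while the treatment of values exactly on a boundary is an assumption, since the text does not specify it; the function name is hypothetical.

```python
AVERAGE_ERROR_RATE = 0.0375


def reliability_category(rate: float, average: float = AVERAGE_ERROR_RATE) -> str:
    """Assign an error rate to one of the four reliability categories."""
    if rate < 0.01:
        return "good"
    if rate <= average:          # boundary handling is an assumption
        return "above-average"
    if rate <= 0.1:              # boundary handling is an assumption
        return "below-average"
    return "poor"


# Error rates for a few file types as listed in Table 4.
for ftype, rate in {".c": 0.0040, ".html": 0.0282, "directory": 0.0510, ".class": 0.4886}.items():
    print(ftype, reliability_category(rate))
```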

This reliability categorization also helps us prioritize effort for reliability improvement: The file types with the poor reliability should be the primary focus for reliability improvement, because they represent the reliability bottleneck. Improvement to their reliability will have a large impact on the overall reliability. In this case, fixing the 122 missing .ico files and 21 .class ones (see Table 3 for unique errors or missing files) would result in reducing 5762 (= 849 + 4913) errors and improving the overall web site reliability from an average error rate r = 0.0375 to r = 0.0300. This significant reliability improvement of 20% can be achieved with relatively low effort, because these 143 (= 122 + 21) missing files only represent a small share (about 5%) of all the 2810 missing files. After fixing these problems, we can focus on the below-average file types, then above-average ones, before we start working on the good ones. This priority scheme gives us a cost effective procedure to improve the overall web site reliability.
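The projected improvement can be checked with a short calculation. This sketch assumes that the total hit count stays the same after the fix and that every previously failing request for the fixed files then succeeds; the function name is hypothetical.

```python
def error_rate_after_fix(total_errors: int, total_hits: int, removed_errors: int) -> float:
    """Projected error rate once the given number of errors no longer occurs."""
    return (total_errors - removed_errors) / total_hits


TOTAL_HITS = 763021
TOTAL_404_ERRORS = 28631
REMOVED = 849 + 4913            # 404 errors from missing .ico and .class files

before = TOTAL_404_ERRORS / TOTAL_HITS
after = error_rate_after_fix(TOTAL_404_ERRORS, TOTAL_HITS, REMOVED)
relative_reduction = REMOVED / TOTAL_404_ERRORS
share_of_missing_files = (122 + 21) / 2810

print(f"error rate before: {before:.4f}, after: {after:.4f}")
print(f"relative error reduction: {relative_reduction:.1%}")
print(f"share of missing files fixed: {share_of_missing_files:.1%}")
```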

5. CONCLUSION

By analyzing the unique problems and information sources for the web environment, we have developed an approach for identifying and characterizing web errors and for assessing and improving web site reliability based on information extracted from existing web logs. This approach has been applied to analyze the log files for the web site at the School of Engineering at Southern Methodist University. Our results demonstrated that the error distribution across different error types and sources is highly uneven. In addition, missing file distribution, workload distribution, as well as reliability distribution for individual types of requested files are all quite uneven. These distributions generally follow the so-called 80:20 rule, where a few components are responsible for most of the problems or effort. Our analysis results can help web site owners to prioritize their web site maintenance and quality assurance effort and to guide further analyses, such as root cause analysis, to identify problem causes and perform preventive and corrective actions. All these focused actions and efforts would lead to better web service and user satisfaction due to the improved web site reliability.

The primary limitation of our study is that our web site may not be representative of many non-academic web sites. Most of our web pages are static ones, with the HTML documents and embedded graphics dominating other types of pages, while in e-commerce and various other business applications, dynamic pages and context-sensitive contents play a much more important role. To overcome this limitation, we plan to analyze some public domain web logs, such as from the Internet Traffic Archive at ita.ee.lbl.gov or the W3C Web Characterization Repository at repository.cs.vt.edu, to cross-validate our general results.
As an immediate follow-up to this study, we plan to analyze web site reliability over time for different types of error sources, and perform related risk identification activities for focused reliability improvement. We also plan to identify better existing tools, develop new tools and utility programs, and integrate them to provide better implementation support for our strategy. All these efforts should lead us to a more practical and effective approach to achieve high quality web service and to maximize user satisfaction.


ACKNOWLEDGEMENT

This research is supported in part by NSF grants 9733588 and 0204345, THECB/ATP grants 003613-0030-1999 and 003613-0030-2001, and Nortel Networks.

REFERENCES

Behlandorf, B., 1996. Running a Perfect Web Site with Apache, 2nd Ed. MacMillan Computer Publishing, New York, USA.

IEEE, 1990. IEEE Standard Glossary of Software Engineering Terminology. STD 610.12-1990. IEEE.

Kallepalli, C. and Tian, J., 2001. Measuring and modeling usage and reliability for statistical web testing. IEEE Trans. on Software Engineering, Vol. 27, No. 11, pp. 1023-1036.

Koch, R., 2000. The 80/20 Principle: The Secret of Achieving More With Less. Nicholas Brealey Publishing, London, UK.

Lyu, M. R., editor, 1995. Handbook of Software Reliability Engineering. McGraw-Hill, New York, USA.

Musa, J. D., 1998. Software Reliability Engineering. McGraw-Hill, New York, USA.

Nelson, E., 1978. Estimating software reliability from test data. Microelectronics and Reliability, Vol. 17, No. 1, pp. 67-73.

Porter, A. A. and Selby, R. W., 1990. Empirically Guided Software Development Using Metric-Based Classification Trees. IEEE Software, Vol. 7, No. 2, pp. 46-54.

Tian, J., 1995. Integrating time domain and input domain analyses of software reliability using tree-based models. IEEE Trans. on Software Engineering, Vol. 21, No. 12, pp. 945-958.

Tian, J. and Palma, J., 1997. Test workload measurement and reliability analysis for large commercial software systems. Annals of Software Engineering, Vol. 4, pp. 201-222.

242

Вам также может понравиться

- Seth Godin Add LipchinДокумент43 страницыSeth Godin Add LipchinMertxe Pasamontes Fitó100% (3)

- Automating Done Done With Team WorkflowДокумент27 страницAutomating Done Done With Team WorkflowSanjeev KumarОценок пока нет

- Effective Metrics For Managing Test EffortsДокумент23 страницыEffective Metrics For Managing Test EffortsSanjeev KumarОценок пока нет

- QSIC 2012 Presentation Automated Testing of WebДокумент27 страницQSIC 2012 Presentation Automated Testing of WebSanjeev KumarОценок пока нет

- Container and Vertical GardeningДокумент14 страницContainer and Vertical GardeningSanjeev Kumar100% (1)

- ISO 24734 Support in PageSenseДокумент18 страницISO 24734 Support in PageSenseSanjeev KumarОценок пока нет

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeОт EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeРейтинг: 4 из 5 звезд4/5 (5794)

- The Little Book of Hygge: Danish Secrets to Happy LivingОт EverandThe Little Book of Hygge: Danish Secrets to Happy LivingРейтинг: 3.5 из 5 звезд3.5/5 (399)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceОт EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceРейтинг: 4 из 5 звезд4/5 (890)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureОт EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureРейтинг: 4.5 из 5 звезд4.5/5 (474)

- The Yellow House: A Memoir (2019 National Book Award Winner)От EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Рейтинг: 4 из 5 звезд4/5 (98)

- Team of Rivals: The Political Genius of Abraham LincolnОт EverandTeam of Rivals: The Political Genius of Abraham LincolnРейтинг: 4.5 из 5 звезд4.5/5 (234)

- Never Split the Difference: Negotiating As If Your Life Depended On ItОт EverandNever Split the Difference: Negotiating As If Your Life Depended On ItРейтинг: 4.5 из 5 звезд4.5/5 (838)

- The Emperor of All Maladies: A Biography of CancerОт EverandThe Emperor of All Maladies: A Biography of CancerРейтинг: 4.5 из 5 звезд4.5/5 (271)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryОт EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryРейтинг: 3.5 из 5 звезд3.5/5 (231)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaОт EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaРейтинг: 4.5 из 5 звезд4.5/5 (265)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersОт EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersРейтинг: 4.5 из 5 звезд4.5/5 (344)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyОт EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyРейтинг: 3.5 из 5 звезд3.5/5 (2219)

- The Unwinding: An Inner History of the New AmericaОт EverandThe Unwinding: An Inner History of the New AmericaРейтинг: 4 из 5 звезд4/5 (45)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreОт EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreРейтинг: 4 из 5 звезд4/5 (1090)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)От EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Рейтинг: 4.5 из 5 звезд4.5/5 (119)

- ALU LTE LB WorkshopДокумент47 страницALU LTE LB WorkshopBelieverОценок пока нет

- HikCentral V1.6 - BrochureДокумент24 страницыHikCentral V1.6 - BrochuredieterchОценок пока нет

- Pioneer AVH X2500BT ManualДокумент96 страницPioneer AVH X2500BT ManualShima SanОценок пока нет

- TCM3.2L La 22PFL1234 D10 32PFL5604 78 32PFL5604 77 42PFL5604 77Документ67 страницTCM3.2L La 22PFL1234 D10 32PFL5604 78 32PFL5604 77 42PFL5604 77castronelsonОценок пока нет

- TW PythonДокумент243 страницыTW Pythonoscar810429Оценок пока нет

- ReadmeДокумент2 страницыReadmeNiraj BhakdeОценок пока нет

- Ug949 Vivado Design MethodologyДокумент243 страницыUg949 Vivado Design MethodologyjivashiОценок пока нет

- Jehovah Shammah Christian Community School (JSCCS) Enrollment SystemДокумент4 страницыJehovah Shammah Christian Community School (JSCCS) Enrollment SystemDarcy HaliliОценок пока нет

- SYSC5603 (ELG6163) Digital Signal Processing Microprocessors, Software and ApplicationsДокумент49 страницSYSC5603 (ELG6163) Digital Signal Processing Microprocessors, Software and ApplicationsgokulphdОценок пока нет

- 2.3.2.2 Embedded Smart CamerasДокумент2 страницы2.3.2.2 Embedded Smart CamerashanuОценок пока нет

- CPLD User Guide From XilinxДокумент24 страницыCPLD User Guide From XilinxSidharth ChaturvedyОценок пока нет

- PRO1 - 03E - Simatic Manager (Compatibility Mode) (Repaired)Документ1 страницаPRO1 - 03E - Simatic Manager (Compatibility Mode) (Repaired)hwhhadiОценок пока нет

- ZigBee RF4CE Specification PublicДокумент101 страницаZigBee RF4CE Specification PublicQasim Ijaz AhmedОценок пока нет

- MP C6000 C7500 Ricoh Sales HandbookДокумент72 страницыMP C6000 C7500 Ricoh Sales HandbookPham Cong ThuОценок пока нет

- 25 06 RFIDWeapArmouryManagementДокумент80 страниц25 06 RFIDWeapArmouryManagementFaizan ShafieОценок пока нет

- Veeam Backup & Replication - User Guide For Hyper-V EnvironmentsДокумент249 страницVeeam Backup & Replication - User Guide For Hyper-V EnvironmentsHayTechОценок пока нет

- Building Management SystemДокумент36 страницBuilding Management SystemFros FrosОценок пока нет

- Bahasa Inggris Pcs & MacsДокумент3 страницыBahasa Inggris Pcs & MacsAgus DanaОценок пока нет

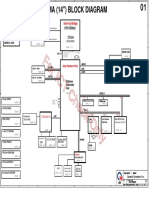

- PCB stack up and block diagram for 14-inch Intel Ivy Bridge laptopДокумент34 страницыPCB stack up and block diagram for 14-inch Intel Ivy Bridge laptopabhilashvaman5542100% (1)

- B0193uc HДокумент68 страницB0193uc HMiguel Angel GiménezОценок пока нет

- Verilog Code For 60s TimerДокумент4 страницыVerilog Code For 60s TimerChandem Bacasmas0% (3)

- Time Sharing SystemsДокумент7 страницTime Sharing SystemsNeeta PatilОценок пока нет

- Emerging Trends, Technologies, AND Applications: BidgoliДокумент49 страницEmerging Trends, Technologies, AND Applications: BidgoliMaheshwaar SОценок пока нет

- I/A Series V6.2 UNIX™ and Windows NT Read Me First: August 14, 2001Документ36 страницI/A Series V6.2 UNIX™ and Windows NT Read Me First: August 14, 2001saratchandranbОценок пока нет

- Widevine DRM Proxy IntegrationДокумент23 страницыWidevine DRM Proxy IntegrationpooperОценок пока нет

- Regarding The Change of Names Mentioned in The Document, Such As Mitsubishi Electric and Mitsubishi XX, To Renesas Technology CorpДокумент37 страницRegarding The Change of Names Mentioned in The Document, Such As Mitsubishi Electric and Mitsubishi XX, To Renesas Technology CorpАнтон ПОценок пока нет

- Chapter 9 ADM Modulator OperationДокумент15 страницChapter 9 ADM Modulator OperationVinita DahiyaОценок пока нет

- Catalogo ZelioДокумент42 страницыCatalogo ZelioROLANDOОценок пока нет

- Toshiba SatelliteProL300 PSLB9A-00H002 Specification Brochure Jan'09Документ2 страницыToshiba SatelliteProL300 PSLB9A-00H002 Specification Brochure Jan'09phatgaz100% (1)

- Address Book in JAVAДокумент18 страницAddress Book in JAVAmelyfony100% (1)