
Statistics & Probability Letters 48 (2000) 205-212

Double-ranked set sampling

M. Fraiwan Al-Saleh (a, *), M. Ali Al-Kadiri (b)

(a) Statistics Department, Yarmouk University, Irbid, Jordan

(b) Islamic Hospital, Amman, Jordan

Received April 1999; received in revised form August 1999

Abstract

As a variation of ranked set sampling (RSS), the double-ranked set sampling (DRSS) technique is introduced and investigated. It is shown that this extension of RSS is more efficient than both RSS and simple random sampling (SRS) in estimating the population mean. It is also shown, using the concept of the degree of distinguishability between sample observations, that ranking in the second stage is easier than ranking in the first stage. © 2000 Elsevier Science B.V. All rights reserved.

Keywords: Ranked set sampling; Double-ranked set sampling; Relative precision; Probability of perfect ranking; Degree of distinguishability

1. Introduction

Ranked set sampling was first introduced by McIntyre (1952). The technique is appropriate when measuring a large number of sampling units is difficult for the observer but visually ranking a few of them is not. The procedure consists of drawing $m$ random samples (sets), each of size $m$, from the population. The $m$ units of each set are ranked visually or by some method of negligible cost. Then $m$ measurements are obtained by quantifying the $i$th visual order statistic from the $i$th set. The whole process may be repeated $n$ times, so that a total of $nm^2$ elements are drawn from the population but only $nm$ of them are measured. These $nm$ measured observations are referred to as the ranked set sample (RSS).
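A minimal simulation sketch of the procedure just described, for one cycle ($n = 1$). It assumes perfect ranking (sorting stands in for visual ranking) and a U(0,1) population; the function names are hypothetical and not taken from the paper. It estimates the ratio of the variance of the SRS mean to that of the RSS mean, which for the uniform distribution should come out close to $(m+1)/2$.

```python
import random
import statistics

def ranked_set_sample(draw, m, rng):
    """One cycle (n = 1) of RSS under perfect ranking: draw m sets of size m
    and keep only the i-th smallest unit of the i-th set."""
    sample = []
    for i in range(m):
        ranked_set = sorted(draw(rng) for _ in range(m))  # "visual" ranking, assumed perfect
        sample.append(ranked_set[i])                       # measure the i-th order statistic
    return sample

def estimated_rp(m, reps=10000, seed=1):
    """Monte Carlo estimate of var(SRS mean) / var(RSS mean) for a U(0, 1) population."""
    rng = random.Random(seed)
    draw = lambda r: r.random()
    srs_means = [statistics.fmean(draw(rng) for _ in range(m)) for _ in range(reps)]
    rss_means = [statistics.fmean(ranked_set_sample(draw, m, rng)) for _ in range(reps)]
    return statistics.variance(srs_means) / statistics.variance(rss_means)

for m in (2, 3):
    print(m, round(estimated_rp(m), 2))
```

Only $nm$ of the $nm^2$ drawn values enter the estimator, which mirrors the point of the method: ranking is cheap, measuring is not.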

Takahasi and Wakimoto (1968) provided the statistical theory for the RSS procedure. Assuming that sampling is from an absolutely continuous distribution, they showed that the mean of the RSS, $\hat{\mu}_{\mathrm{RSS}}$, is an unbiased estimator of the population mean $\mu$ and is more efficient than the mean of an SRS of the same size, $\hat{\mu}_{\mathrm{SRS}}$.

* Correspondence address: Department of Mathematics & Statistics, Sultan Qaboos University, P.O. Box 36, Al Khodh, Oman. E-mail address: malsaleh@squ.edu.om (M.F. Al-Saleh).

0167-7152/00/$ - see front matter © 2000 Elsevier Science B.V. All rights reserved. PII: S0167-7152(99)00206-0

Takahasi and Wakimoto established the following bound for the relative precision (efficiency):

$$1 \le \mathrm{RP} = \frac{\operatorname{var}(\hat{\mu}_{\mathrm{SRS}})}{\operatorname{var}(\hat{\mu}_{\mathrm{RSS}})} \le \frac{m+1}{2},$$

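where the upper bound is attained when the distribution is uniform. Here, RP can be written as

$$\mathrm{RP} = \frac{1}{1 - \frac{1}{m}\sum_{i=1}^{m}\left(\frac{\mu_{(i)} - \mu}{\sigma}\right)^{2}}, \qquad (1)$$

where $\mu_{(i)}$ is the mean of the $i$th order statistic of a sample of size $m$ and $\sigma^2$ is the population variance.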
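As a quick exact check of (1), the sketch below plugs in the U(0,1) order-statistic means $\mu_{(i)} = i/(m+1)$ (a standard fact, assumed here rather than stated in the paper), with $\mu = 1/2$ and $\sigma^2 = 1/12$. Using rational arithmetic, it recovers the upper bound $(m+1)/2$ exactly; the function name is hypothetical.

```python
from fractions import Fraction

def rp_rss_uniform(m):
    """Equation (1) for U(0, 1): mu_(i) = i/(m+1), mu = 1/2, sigma^2 = 1/12."""
    mu, sigma2 = Fraction(1, 2), Fraction(1, 12)
    saving = sum((Fraction(i, m + 1) - mu) ** 2 for i in range(1, m + 1)) / (m * sigma2)
    return 1 / (1 - saving)

for m in range(2, 6):
    print(m, rp_rss_uniform(m))  # equals (m + 1)/2 exactly
```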

Stokes (1980) showed that the RSS variance does not enjoy the same advantage as an estimator of the population variance.

An annotated bibliography of ranked set sampling was provided by Kour et al. (1995). A comparison between RSS and stratified sampling was considered by Kour et al. (1996). Also, Kour et al. (1997) used unequal allocation methods for ranked set sampling with skewed distributions.

The efficiency of $\hat{\mu}_{\mathrm{RSS}}$ relative to $\hat{\mu}_{\mathrm{SRS}}$ is independent of $n$ but increases with $m$. However, because accurate visual ordering is difficult in practice for large $m$, $m$ is usually taken to be 2 or 3.

In this paper we introduce DRSS, a procedure that increases the efficiency of $\hat{\mu}_{\mathrm{DRSS}}$ (the mean of the DRSS) relative to both $\hat{\mu}_{\mathrm{SRS}}$ and $\hat{\mu}_{\mathrm{RSS}}$ without increasing the value of $m$. The DRSS is obtained as follows (assume $n = 1$).

(1) Identify $m^3$ elements from the target population and divide them randomly into $m$ sets, each of size $m^2$.

(2) Apply the usual RSS procedure to each set to obtain $m$ ranked set samples, each of size $m$.

(3) Apply the RSS procedure again to the $m$ samples from step (2) to obtain a DRSS of size $m$.

The procedure is illustrated for the case of m = 3 in the following example.

Example 1. Consider the case $m = 3$. Here we must identify 27 elements (3 sets of 9 elements each). Denote the elements by

$$X^{(1)}_{11}, X^{(1)}_{12}, \ldots, X^{(1)}_{33},\quad X^{(2)}_{11}, \ldots, X^{(2)}_{33},\quad X^{(3)}_{11}, \ldots, X^{(3)}_{33}.$$

After ranking the elements of each set (visually), we obtain, for $k = 1, 2, 3$,

$$\begin{array}{ccc}
X^{(k)}_{(11)} & X^{(k)}_{(12)} & X^{(k)}_{(13)}\\
X^{(k)}_{(21)} & X^{(k)}_{(22)} & X^{(k)}_{(23)}\\
X^{(k)}_{(31)} & X^{(k)}_{(32)} & X^{(k)}_{(33)}
\end{array},$$

so we have three RSSs:

$$S_1 = \{X^{(1)}_{(11)}, X^{(1)}_{(22)}, X^{(1)}_{(33)}\},\quad S_2 = \{X^{(2)}_{(11)}, X^{(2)}_{(22)}, X^{(2)}_{(33)}\}\quad\text{and}\quad S_3 = \{X^{(3)}_{(11)}, X^{(3)}_{(22)}, X^{(3)}_{(33)}\}.$$

Let $Y_1 = \min(S_1)$, $Y_2 = \operatorname{med}(S_2)$ and $Y_3 = \max(S_3)$; then $\{Y_1, Y_2, Y_3\}$ is a DRSS of size 3. For general $m$, we have the $m$ RSSs $\{X^{(i)}_{(11)}, X^{(i)}_{(22)}, \ldots, X^{(i)}_{(mm)}\}$, $i = 1, 2, \ldots, m$. If $Y_i$ is the $i$th smallest of $\{X^{(i)}_{(11)}, X^{(i)}_{(22)}, \ldots, X^{(i)}_{(mm)}\}$, then $\{Y_1, \ldots, Y_m\}$ is a DRSS of size $m$.
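The three steps can be mimicked in a short simulation. This is a sketch under stated assumptions, not the authors' code: ranking is assumed perfect (sorting replaces visual ranking), the population is U(0,1), and the helper names `rss_from_units`, `drss` and `estimated_rp_drss` are hypothetical. Because the $m^3$ draws are i.i.d., slicing them into consecutive blocks is equivalent to dividing them into sets at random.

```python
import random
import statistics

def rss_from_units(units, m):
    """Step (2) on one set of m*m units: split into m subsets of size m and
    keep the i-th smallest unit of subset i (perfect ranking assumed)."""
    subsets = [units[i * m:(i + 1) * m] for i in range(m)]
    return [sorted(s)[i] for i, s in enumerate(subsets)]

def drss(draw, m, rng):
    """One DRSS of size m (n = 1): Y_i is the i-th smallest of the i-th RSS."""
    units = [draw(rng) for _ in range(m ** 3)]                    # step (1): m^3 i.i.d. units
    sets = [units[k * m * m:(k + 1) * m * m] for k in range(m)]  # m sets of size m^2
    samples = [rss_from_units(s, m) for s in sets]                # step (2): m RSSs of size m
    return [sorted(s)[i] for i, s in enumerate(samples)]          # step (3): rank once more

def estimated_rp_drss(m, reps=10000, seed=2):
    """Monte Carlo estimate of var(SRS mean) / var(DRSS mean) for U(0, 1)."""
    rng = random.Random(seed)
    draw = lambda r: r.random()
    srs_means = [statistics.fmean(draw(rng) for _ in range(m)) for _ in range(reps)]
    drss_means = [statistics.fmean(drss(draw, m, rng)) for _ in range(reps)]
    return statistics.variance(srs_means) / statistics.variance(drss_means)

for m in (2, 3):
    print(m, round(estimated_rp_drss(m), 2))
```

For U(0,1) the estimates should land near the DRSS efficiencies the paper reports in Section 4 (1.923 for $m = 2$, 3.026 for $m = 3$), up to Monte Carlo error.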

2. General setup and some basic results

Assume that the variable of interest $X$ has density $f(x)$, absolutely continuous distribution function $F(x)$, mean $\mu$ and variance $\sigma^2$. Let $X_1, \ldots, X_m$ be i.i.d. with density $f(x)$; then the p.d.f. $f_{j:m}$ of the $j$th order statistic $X_{(j)}$ is

$$f_{j:m}(x) = m\binom{m-1}{j-1} f(x)\,[F(x)]^{j-1}\,[1 - F(x)]^{m-j},$$

with mean $\mu_{(j)}$ and variance $\sigma^2_{(j)}$; its c.d.f. is denoted by $F_{j:m}(x)$.

Let $\{Y_1, \ldots, Y_m\}$ be a DRSS and assume that $Y_j \sim g_{j:m}(x)$ with c.d.f. $G_{j:m}(\cdot)$. Note that $g_{j:m}(x)$ is the density of the $j$th order statistic of an RSS, $Z_1, \ldots, Z_m$ say, with $Z_j \sim f_{j:m}(x)$. Let $E(Y_j) = \mu^{*}_{j}$ and $\operatorname{Var}(Y_j) = \sigma^{*2}_{j}$. Clearly, $Y_1, \ldots, Y_m$ are independent but not identically distributed.

The identities in the following lemma are analogues of the Takahasi and Wakimoto (1968) identities for RSS.

Lemma 1. (i) $f(x) = \frac{1}{m}\sum_{j=1}^{m} g_{j:m}(x)$.

(ii) $\mu = \frac{1}{m}\sum_{j=1}^{m} \mu^{*}_{j}$.

(iii) $\sigma^2 = \frac{1}{m}\sum_{j=1}^{m}\sigma^{*2}_{j} + \frac{1}{m}\sum_{j=1}^{m}(\mu^{*}_{j} - \mu)^2$.

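Proof. To prove (i), fix $x$ and let

$$W_j = \begin{cases}1 & \text{if } Z_j \le x,\\ 0 & \text{otherwise},\end{cases}\qquad W = \sum_{j=1}^{m} W_j;$$

then

$$E(W) = \sum_{j=1}^{m} P[Z_j \le x] = \sum_{j=1}^{m} F_{j:m}(x) = mF(x)$$

(by Takahasi and Wakimoto, 1968). On the other hand,

$$\sum_{j=1}^{m} G_{j:m}(x) = \sum_{j=1}^{m} P[Y_j \le x] = \sum_{j=1}^{m} P[\text{at least } j \text{ of } Z_1, \ldots, Z_m \text{ are} \le x] = \sum_{j=1}^{m} P[W \ge j] = E(W) = mF(x),$$

and hence (i) follows by differentiating with respect to $x$. The proofs of (ii) and (iii) follow easily from (i).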
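Because $F_{j:m}$ and $G_{j:m}$ depend on $x$ only through $p = F(x)$, identity (i) can be checked numerically in its c.d.f. form, $\frac{1}{m}\sum_j G_{j:m}(x) = F(x)$, without fixing a distribution. The sketch below (function names hypothetical) computes $G_{j:m}$ exactly as in the proof, as the probability that at least $j$ of the independent $Z_1, \ldots, Z_m$ fall below $x$, using a Poisson-binomial recursion.

```python
from math import comb

def order_stat_cdf(p, k, m):
    """F_{k:m} at a point where F(x) = p: P(at least k of m i.i.d. draws are <= x)."""
    return sum(comb(m, r) * p**r * (1 - p) ** (m - r) for r in range(k, m + 1))

def at_least(probs, j):
    """P(at least j of independent events occur); event k has probability probs[k]."""
    dist = [1.0]  # dist[r] = P(exactly r of the events processed so far occur)
    for q in probs:
        nxt = [0.0] * (len(dist) + 1)
        for r, d in enumerate(dist):
            nxt[r] += d * (1 - q)
            nxt[r + 1] += d * q
        dist = nxt
    return sum(dist[j:])

def drss_cdf(p, j, m):
    """G_{j:m} at a point where F(x) = p, via 'at least j of Z_1..Z_m are <= x'."""
    return at_least([order_stat_cdf(p, k, m) for k in range(1, m + 1)], j)

for m in (2, 3, 5):
    for p in (0.1, 0.37, 0.8):
        average = sum(drss_cdf(p, j, m) for j in range(1, m + 1)) / m
        print(m, p, round(average, 12))  # Lemma 1(i) predicts this equals p
```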

3. Difficulty of ranking in the second stage

For RSS to be advantageous over SRS, judgment ranking should be accurate and of negligible cost. Likewise, for DRSS to be of practical use and more useful than RSS and SRS, the ranking in the second phase must be accurate and almost costless. In this section it is shown, in a certain sense, that ranking in the second stage of obtaining a DRSS is easier than ranking in the first stage (obtaining an RSS). Thus, if perfect ranking can be achieved easily in the first stage, it is even easier to achieve in the second stage. We believe that this is essential for DRSS to be useful.

Table 1
Probabilities of perfect ranking in the first stage, P(1) = $P^{(1)}_m$, and in the second stage, P(2) = $P^{(2)}_m$

Distribution:   U(0,1)                   Exp(1)                   N(0,1)
                c      P(1)     P(2)     c      P(1)     P(2)     c      P(1)     P(2)
(a) m = 2
                0.1    0.8100   0.8667   0.1    0.9048   0.9336   0.1    0.9436   0.9531
                0.5    0.2500   0.3542   0.9    0.4065   0.4328   0.5    0.7236   0.7800
                0.9    0.0100   0.0187   1.5    0.2231   0.2809   1.0    0.4796   0.5650
(b) m = 3
                0.01   0.9427   0.9635   0.1    0.7300   0.8008   0.1    0.8310   0.8737
                0.5    0.5199   0.6000   0.9    0.0661   0.0700   0.9    0.3780   0.4374
                0.9    0.0000   0.001    1.5    0.0071   0.0077   1.5    0.0931   0.1330

Suppose that two units $w_1, w_2$ can be correctly ranked if $|w_1 - w_2| \ge c$ for some preassigned positive number $c$. Takahasi (1970) used this definition to study the degree of difficulty of the ranking needed to obtain an RSS. However, this does not appear to have led to any useful results in the subsequent literature. The following definition is an obvious extension of the above one.

Definition 1. We say that the units $w_1, w_2, \ldots, w_m$ can be perfectly ranked if $\min_{i \ne j} |w_i - w_j| \ge c$ for a prechosen positive number $c$.

If $W_1, W_2, \ldots, W_m$ are random variables, then $P_m = \Pr\{\min_{i \ne j} |W_i - W_j| \ge c\}$ is called the probability of perfect ranking. Let $P^{(1)}_m$ and $P^{(2)}_m$ be, respectively, the probabilities of perfect ranking in the first and the second stage of obtaining a DRSS of size $m$.
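Both probabilities can be estimated by simulation for any $m$ and any distribution one can sample from. In this sketch the helper names are hypothetical, ranking is assumed perfect when the RSS is formed, and the caller supplies only the sampler `draw` and the threshold `c`. For $m = 2$ the estimates can be compared with the closed forms of Example 2.

```python
import random

def perfectly_rankable(values, c):
    """Definition 1: all pairwise gaps are at least c (checking adjacent gaps after sorting suffices)."""
    s = sorted(values)
    return all(b - a >= c for a, b in zip(s, s[1:]))

def perfect_ranking_probs(draw, m, c, reps=20000, seed=3):
    """Monte Carlo estimates of (P^(1)_m, P^(2)_m).

    Stage 1 ranks an i.i.d. sample of size m. Stage 2 ranks an RSS Z_1, ..., Z_m,
    where Z_i is the i-th smallest of its own independent set of m draws."""
    rng = random.Random(seed)
    hits1 = hits2 = 0
    for _ in range(reps):
        srs = [draw(rng) for _ in range(m)]
        rss = [sorted(draw(rng) for _ in range(m))[i] for i in range(m)]
        hits1 += perfectly_rankable(srs, c)
        hits2 += perfectly_rankable(rss, c)
    return hits1 / reps, hits2 / reps

uniform = lambda r: r.random()
exponential = lambda r: r.expovariate(1.0)
print(perfect_ranking_probs(uniform, 2, 0.5))
print(perfect_ranking_probs(exponential, 2, 1.5))
```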

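If $m = 2$, then, using the terminology of the previous section, we have

$$P^{(1)}_2 = 2E[F(X - c)] \quad\text{and}\quad P^{(2)}_2 = E[F_{1:2}(Z_2 - c)] + E[F_{2:2}(Z_1 - c)],$$

where $X \sim F$, and $Z_1 \sim F_{1:2}$ and $Z_2 \sim F_{2:2}$ are independent.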

Example 2. (i) If $X \sim U(0,1)$, then $P^{(1)}_2 = (1 - c)^2$ and $P^{(2)}_2 = 1 - \frac{4}{3}c + \frac{1}{3}c^4$.

(ii) If $X \sim$ exponential ($\theta = 1$), then $P^{(1)}_2 = e^{-c}$ and $P^{(2)}_2 = \frac{4}{3}e^{-c} - \frac{1}{3}e^{-2c}$.

(iii) If $X \sim N(0,1)$, then $P^{(1)}_2 = 2[1 - \Phi(c/\sqrt{2})]$, and $P^{(2)}_2$ can be calculated numerically.
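The closed forms in (i) and (ii) are easy to evaluate directly. The sketch below (function names hypothetical; the standard normal c.d.f. is built from `math.erf`) prints them for a few values of $c$ and makes it easy to confirm $P^{(2)}_2 \ge P^{(1)}_2$ on a grid of $c$ values.

```python
from math import erf, exp, sqrt

def std_normal_cdf(z):
    return 0.5 * (1 + erf(z / sqrt(2)))

# (P^(1)_2, P^(2)_2) from Example 2.
def uniform_p2(c):        # U(0, 1), valid for 0 <= c <= 1
    return (1 - c) ** 2, 1 - 4 * c / 3 + c ** 4 / 3

def exponential_p2(c):    # Exp(1), c >= 0
    return exp(-c), 4 * exp(-c) / 3 - exp(-2 * c) / 3

def normal_p1(c):         # N(0, 1); the second-stage value needs numerical integration
    return 2 * (1 - std_normal_cdf(c / sqrt(2)))

for c in (0.1, 0.5, 0.9):
    p1, p2 = uniform_p2(c)
    print(f"U(0,1) c={c}: P1={p1:.4f} P2={p2:.4f}")
for c in (0.1, 1.5):
    p1, p2 = exponential_p2(c)
    print(f"Exp(1) c={c}: P1={p1:.4f} P2={p2:.4f}")
print(f"N(0,1) c=0.1: P1={normal_p1(0.1):.4f}")
```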

It can be seen in each of the three examples above that $P^{(2)}_2 \ge P^{(1)}_2$. Table 1 contains the values of $P^{(1)}_2$, $P^{(2)}_2$, $P^{(1)}_3$ and $P^{(2)}_3$ for the above distributions. It is clear that $P_m$ depends on the underlying distribution $F$ (and on the choice of $c$), in contrast to the non-parametric character of RSS and DRSS.

Next, we consider another way of measuring the difficulty of ranking, one that does not depend on the underlying distribution.

Definition 2. Assume $W_1, W_2, \ldots, W_m$ is a sample from an absolutely continuous distribution function $F$. The degree of distinguishability between the sample elements is defined as

$$DD_m = \max_{S}\, P(W_{i_1} < W_{i_2} < \cdots < W_{i_m}),$$

where the maximum is taken over the set $S$ of all permutations $(i_1, i_2, \ldots, i_m)$ of the numbers $1, 2, \ldots, m$.

Let $DD^{(1)}_m$ and $DD^{(2)}_m$ be, respectively, the values of $DD_m$ for the first and second stage of ranking. The following properties are almost obvious.

(1) $DD^{(1)}_m = 1/m!$; this holds because in the first stage we rank an i.i.d. sample, so all $m!$ orderings are equally likely.

(2) $DD^{(2)}_m \ge 1/m!$, and $DD^{(2)}_m$ is decreasing in $m$.

(3) If $Z_1, Z_2, \ldots, Z_m$ is an RSS (the sample ranked in the second stage), then $P(Z_{i_1} < Z_{i_2} < \cdots < Z_{i_m}) = E[h(F(X))]$, where $h$ is a polynomial in $F$.

Hence, the value of $DD_m$ does not depend on $F$, and therefore $DD_m$ has a non-parametric character.

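It can be shown, by direct evaluation of the above probability for the RSS elements $Z_1, \ldots, Z_m$ ranked in the second stage, that

$$P(Z_{i_1} < \cdots < Z_{i_m}) = \frac{(m!)^m}{\prod_{k=1}^{m}(i_k - 1)!}\sum_{j_1=0}^{m-i_1}\sum_{j_2=0}^{m-i_2}\cdots\sum_{j_m=0}^{m-i_m}\frac{(-1)^{\sum_{k=1}^{m} j_k}}{\prod_{k=1}^{m}\left[\sum_{r=1}^{k}(j_r + i_r)\right]\prod_{k=1}^{m}\left[j_k!\,(m - i_k - j_k)!\right]}.$$

Hence $DD^{(2)}_m$ can be calculated for any value of $m$. For example, $DD^{(2)}_2 = 0.8333$, $DD^{(2)}_3 = 0.6095$ and $DD^{(2)}_4 = 0.4028$, while $DD^{(1)}_2 = 0.50$, $DD^{(1)}_3 = 0.1667$ and $DD^{(1)}_4 = 0.0417$.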
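The ordering probability can be evaluated exactly with rational arithmetic. The sketch below is a transcription of the formula under the reading given above (function names hypothetical). Besides maximizing over permutations to obtain $DD^{(2)}_m$, it lets one check that the probabilities of all $m!$ orderings sum to 1, a useful guard against transcription errors; for $m = 2$ it gives exactly $5/6 \approx 0.8333$.

```python
from fractions import Fraction
from itertools import permutations, product
from math import factorial

def prob_order(order, m):
    """P(Z_{i_1} < ... < Z_{i_m}) for an RSS Z_1..Z_m with Z_i ~ f_{i:m};
    `order` is the permutation (i_1, ..., i_m)."""
    prefactor = Fraction(factorial(m) ** m)
    for i in order:
        prefactor /= factorial(i - 1)
    total = Fraction(0)
    for js in product(*(range(m - i + 1) for i in order)):  # j_k = 0, ..., m - i_k
        denom, partial = 1, 0
        for i, j in zip(order, js):
            partial += j + i                                  # sum_{r<=k} (j_r + i_r)
            denom *= partial * factorial(j) * factorial(m - i - j)
        total += Fraction((-1) ** sum(js), denom)
    return prefactor * total

def dd_second_stage(m):
    """DD^(2)_m: the largest ordering probability over all m! permutations."""
    return max(prob_order(p, m) for p in permutations(range(1, m + 1)))

for m in (2, 3, 4):
    print(m, round(float(dd_second_stage(m)), 4), round(1 / factorial(m), 4))  # DD^(2)_m, DD^(1)_m
```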

Hence, the degree of distinguishability between the elements of an RSS (which are ranked to obtain a DRSS) is much higher than that between the elements of an SRS (which are ranked to obtain an RSS). In this sense, ranking in the second stage is much easier than ranking in the first stage.

4. Efficiency of the method

To see how efficient this method is compared with SRS and RSS, we use $\{Y_1, Y_2, \ldots, Y_m\}$ to estimate the population mean $\mu$. Let $\hat{\mu}_{\mathrm{DRSS}} = \frac{1}{m}\sum_{i=1}^{m} Y_i$ be the DRSS estimator, $\hat{\mu}_{\mathrm{SRS}} = \frac{1}{m}\sum_{i=1}^{m} X_i$ the SRS estimator, and $\hat{\mu}_{\mathrm{RSS}}$ the RSS estimator. Clearly, $\operatorname{var}(\hat{\mu}_{\mathrm{SRS}}) = \sigma^2/m$ (assuming that we are sampling from an infinite population).

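Lemma 3. (i) $E(\hat{\mu}_{\mathrm{DRSS}}) = \mu$.

(ii) $\operatorname{var}(\hat{\mu}_{\mathrm{DRSS}}) = \frac{1}{m}\left[\sigma^2 - \frac{1}{m}\sum_{i=1}^{m}(\mu^{*}_{i} - \mu)^2\right] = \frac{1}{m^2}\sum_{i=1}^{m}\sigma^{*2}_{i}$, where $\mu^{*}_{i} = E(Y_i)$ and $\sigma^{*2}_{i} = \operatorname{var}(Y_i)$.

(iii) The RP of $\hat{\mu}_{\mathrm{DRSS}}$ with respect to $\hat{\mu}_{\mathrm{SRS}}$ is

$$\mathrm{RP} = \frac{1}{1 - \frac{1}{m}\sum_{i=1}^{m}\left[(\mu^{*}_{i} - \mu)/\sigma\right]^2}.$$

(iv) $\operatorname{var}(\hat{\mu}_{\mathrm{DRSS}}) \le \operatorname{var}(\hat{\mu}_{\mathrm{RSS}})$.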

Proof. (i) and (ii) can easily be proved using Lemma 1 and the independence of $Y_1, Y_2, \ldots, Y_m$.

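To prove (iii), we have

$$\mathrm{RP} = \frac{\operatorname{var}(\hat{\mu}_{\mathrm{SRS}})}{\operatorname{var}(\hat{\mu}_{\mathrm{DRSS}})} = \frac{\sigma^2/m}{(1/m^2)\sum_{i=1}^{m}\sigma^{*2}_{i}} = \frac{\sigma^2/m}{(1/m)\left[\sigma^2 - (1/m)\sum_{i=1}^{m}(\mu^{*}_{i} - \mu)^2\right]} = \frac{1}{1 - \frac{1}{m}\sum_{i=1}^{m}\left[(\mu^{*}_{i} - \mu)/\sigma\right]^2}. \qquad (2)$$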

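Clearly, $\mathrm{RP} \ge 1$.

The quantity $\frac{1}{m}\sum_{i=1}^{m}[(\mu^{*}_{i} - \mu)/\sigma]^2$ can be shown to equal $[\operatorname{var}(\hat{\mu}_{\mathrm{SRS}}) - \operatorname{var}(\hat{\mu}_{\mathrm{DRSS}})]/\operatorname{var}(\hat{\mu}_{\mathrm{SRS}})$. For this reason some authors call this quantity the relative saving (RS). Thus, $\mathrm{RP} = 1/(1 - \mathrm{RS})$.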
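The identity for RS is a one-line consequence of Lemma 3(ii) and $\operatorname{var}(\hat{\mu}_{\mathrm{SRS}}) = \sigma^2/m$:

$$\begin{aligned}
\frac{\operatorname{var}(\hat{\mu}_{\mathrm{SRS}}) - \operatorname{var}(\hat{\mu}_{\mathrm{DRSS}})}{\operatorname{var}(\hat{\mu}_{\mathrm{SRS}})}
&= \frac{\sigma^2/m - (1/m)\left[\sigma^2 - (1/m)\sum_{i=1}^{m}(\mu^{*}_{i} - \mu)^2\right]}{\sigma^2/m}\\
&= \frac{(1/m^2)\sum_{i=1}^{m}(\mu^{*}_{i} - \mu)^2}{\sigma^2/m}
= \frac{1}{m}\sum_{i=1}^{m}\left[\frac{\mu^{*}_{i} - \mu}{\sigma}\right]^2 .
\end{aligned}$$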

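To prove (iv), let $Z_1, Z_2, \ldots, Z_m$ be an RSS, with order statistics $Z_{(1)} \le \cdots \le Z_{(m)}$. Then

$$\begin{aligned}
\operatorname{var}(\hat{\mu}_{\mathrm{RSS}}) &= \operatorname{var}\left(\frac{1}{m}\sum_{i=1}^{m} Z_i\right) = \operatorname{var}\left(\frac{1}{m}\sum_{i=1}^{m} Z_{(i)}\right)\\
&= \frac{1}{m^2}\sum_{i=1}^{m}\operatorname{var}(Z_{(i)}) + \frac{1}{m^2}\sum_{i \ne j}\operatorname{cov}(Z_{(i)}, Z_{(j)})\\
&= \operatorname{var}(\hat{\mu}_{\mathrm{DRSS}}) + \frac{1}{m^2}\sum_{i \ne j}\operatorname{cov}(Z_{(i)}, Z_{(j)}),
\end{aligned}$$

since $\operatorname{var}(Y_i) = \operatorname{var}(Z_{(i)})$ for all $i$.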

Now, if $(T_i, W_j)$ and $(T'_i, W'_j)$ are two independent vectors having the same joint distribution as $(Z_{(i)}, Z_{(j)})$, then, similarly to Lehmann (1966) for the i.i.d. case, we have

$$2\operatorname{cov}(Z_{(i)}, Z_{(j)}) = 2\operatorname{cov}(T_i, W_j) = 2[E(T_i W_j) - E(T_i)E(W_j)] = E[(T_i - T'_i)(W_j - W'_j)].$$

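Let

$$I(T_i, u) = \begin{cases}1, & T_i \le u,\\ 0, & \text{otherwise}.\end{cases}$$

Then, since $T_i - T'_i = \int [I(T'_i, u) - I(T_i, u)]\,du$ and similarly for $W_j - W'_j$,

$$E[(T_i - T'_i)(W_j - W'_j)] = E\iint [I(T_i, u) - I(T'_i, u)][I(W_j, t) - I(W'_j, t)]\,du\,dt = 2\iint \left[P(Z_{(i)} \le u, Z_{(j)} \le t) - P(Z_{(i)} \le u)\,P(Z_{(j)} \le t)\right] du\,dt.$$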

Esary et al. (1967) showed that if $V_1, \ldots, V_n$ are independent random variables and $S_1, \ldots, S_k$ are non-decreasing functions of $V_1, \ldots, V_n$, then

$$P(S_1 \le s_1, \ldots, S_k \le s_k) \ge \prod_{i=1}^{k} P(S_i \le s_i).$$

Let $k = 2$, $S_1 = Z_{(i)}$ and $S_2 = Z_{(j)}$; then $S_1$ and $S_2$ are non-decreasing in each of $Z_1, \ldots, Z_m$. Thus,

$$P[Z_{(i)} \le u, Z_{(j)} \le t] \ge P[Z_{(i)} \le u]\,P[Z_{(j)} \le t].$$

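Therefore, $\operatorname{cov}(Z_{(i)}, Z_{(j)}) \ge 0$, and hence $\operatorname{var}(\hat{\mu}_{\mathrm{RSS}}) \ge \operatorname{var}(\hat{\mu}_{\mathrm{DRSS}})$; i.e., the DRSS estimator is at least as efficient as the RSS estimator.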

Example 3. If $m = 2$, then

$$F_1(x) = 1 - [1 - F(x)]^2,\qquad F_2(x) = F^2(x),$$
$$G_1(x) = 1 - [1 - F_1(x)][1 - F_2(x)],\qquad G_2(x) = F_1(x)F_2(x),$$

where $F_j$ and $G_j$ abbreviate $F_{j:m}$ and $G_{j:m}$. If $m = 3$, then

$$F_1(x) = 1 - [1 - F(x)]^3,\qquad F_3(x) = F^3(x),\qquad F_2(x) = 3F(x) - F_1(x) - F_3(x),$$
$$G_1(x) = 1 - [1 - F_1(x)][1 - F_2(x)][1 - F_3(x)],\qquad G_3(x) = F_1(x)F_2(x)F_3(x),\qquad G_2(x) = 3F(x) - G_1(x) - G_3(x).$$

We now calculate the efficiencies of the RSS and DRSS estimators with respect to the SRS estimator for the uniform, exponential and normal distributions.
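A numerical sketch of these calculations for general $m$, written under our own assumptions rather than taken from the paper. $F_{j:m}$ is computed from the binomial tail. $G_{j:m}$ is computed as the probability that at least $j$ of the independent $Z_1, \ldots, Z_m$ fall below $x$ (the argument in the proof of Lemma 1, which reduces to the Example 3 formulas for $m = 2, 3$). Means come from $E(Y) = a + \int_a^b [1 - G(x)]\,dx$ with composite Simpson's rule, the exponential support is truncated at 40, and RP then follows from (1) and (2). All function names are hypothetical.

```python
from math import comb, exp

def order_stat_cdf(p, k, m):
    """F_{k:m}(x) at a point where F(x) = p (binomial tail)."""
    return sum(comb(m, r) * p**r * (1 - p) ** (m - r) for r in range(k, m + 1))

def at_least(probs, j):
    """P(at least j of independent events occur); event k has probability probs[k]."""
    dist = [1.0]  # dist[r] = P(exactly r of the events seen so far occur)
    for q in probs:
        nxt = [0.0] * (len(dist) + 1)
        for r, d in enumerate(dist):
            nxt[r] += d * (1 - q)
            nxt[r + 1] += d * q
        dist = nxt
    return sum(dist[j:])

def rss_cdf(p, j, m):
    """c.d.f. of the j-th RSS unit: F_{j:m}."""
    return order_stat_cdf(p, j, m)

def drss_cdf(p, j, m):
    """c.d.f. of the j-th DRSS unit: G_{j:m} = P(at least j of Z_1..Z_m are <= x)."""
    return at_least([order_stat_cdf(p, k, m) for k in range(1, m + 1)], j)

def simpson(g, a, b, n=4000):
    """Composite Simpson's rule; n must be even."""
    h = (b - a) / n
    inner = sum((4 if i % 2 else 2) * g(a + i * h) for i in range(1, n))
    return (g(a) + g(b) + inner) * h / 3

def relative_precision(stage_cdf, F, a, b, mu, var, m):
    """RP w.r.t. SRS via (1)/(2), using E(Y_j) = a + integral_a^b [1 - G_j(x)] dx."""
    means = [a + simpson(lambda x: 1 - stage_cdf(F(x), j, m), a, b) for j in range(1, m + 1)]
    saving = sum((mj - mu) ** 2 for mj in means) / (m * var)
    return 1 / (1 - saving)

UNIFORM = dict(F=lambda x: x, a=0.0, b=1.0, mu=0.5, var=1 / 12)
EXPONENTIAL = dict(F=lambda x: 1 - exp(-x), a=0.0, b=40.0, mu=1.0, var=1.0)  # support truncated at 40

for name, dist in (("U(0,1)", UNIFORM), ("Exp(1)", EXPONENTIAL)):
    for m in (2, 3):
        rp_rss = relative_precision(rss_cdf, m=m, **dist)
        rp_drss = relative_precision(drss_cdf, m=m, **dist)
        print(f"{name}  m={m}:  RSS {rp_rss:.3f}  DRSS {rp_drss:.3f}")
```

With these settings the DRSS values for $m = 2, 3$ should agree with Sections 4.1 and 4.2 (about 1.923 and 3.026 for U(0,1), 1.516 and 2.024 for Exp(1)). The normal case would need quadrature over an unbounded support in both directions and is not attempted here.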


Table 2

Eciencies of RSS and DRSS with respect to SRS

Distribution Method m = 2 m = 3 m = 4 m = 5

U(0,1) RSS 1.500 2.000 2.500 3.000

DRSS 1.923 3.026 4.711 5.816

Exp(1) RSS 1.330 1.640 1.990 2.728

DRSS 1.516 2.024 2.374 3.375

N(0,1) RSS 1.470 1.914 2.347 2.770

DRSS 1.648 2.558 3.198 4.459

4.1. Uniform distribution, U(0, 1)

Using the above formulas, we have for $m = 2$

$$(\mu_{(1)}, \mu_{(2)}) = \left(\tfrac{1}{3}, \tfrac{2}{3}\right) \quad\text{and}\quad (\mu^{*}_{1}, \mu^{*}_{2}) = \left(\tfrac{3}{10}, \tfrac{7}{10}\right).$$

Hence, the efficiencies of the RSS and DRSS estimators with respect to SRS are 1.5 and 1.923, respectively (using (1) and (2)).
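As a worked check of these two numbers, with $\mu = 1/2$, $\sigma^2 = 1/12$, RSS means $1/3, 2/3$ and DRSS means $3/10, 7/10$:

$$\frac{1}{2}\sum_{i=1}^{2}\left(\frac{\mu_{(i)} - \mu}{\sigma}\right)^2 = \frac{12}{2}\left[\left(\tfrac{1}{6}\right)^2 + \left(\tfrac{1}{6}\right)^2\right] = \frac{1}{3}, \qquad \mathrm{RP} = \frac{1}{1 - 1/3} = 1.5,$$

$$\frac{1}{2}\sum_{i=1}^{2}\left(\frac{\mu^{*}_{i} - \mu}{\sigma}\right)^2 = \frac{12}{2}\left[\left(\tfrac{1}{5}\right)^2 + \left(\tfrac{1}{5}\right)^2\right] = 0.48, \qquad \mathrm{RP} = \frac{1}{0.52} \approx 1.923.$$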

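For $m = 3$ we have

$$(\mu_{(1)}, \mu_{(2)}, \mu_{(3)}) = \left(\tfrac{1}{4}, \tfrac{1}{2}, \tfrac{3}{4}\right) \quad\text{and}\quad (\mu^{*}_{1}, \mu^{*}_{2}, \mu^{*}_{3}) = \left(\tfrac{59}{280}, \tfrac{1}{2}, \tfrac{221}{280}\right),$$

and hence the corresponding efficiencies are 2 and 3.026.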

4.2. Exponential distribution, Exp(1)

Using the above formulas, we have for $m = 2$

$$(\mu_{(1)}, \mu_{(2)}) = \left(\tfrac{1}{2}, \tfrac{3}{2}\right) \quad\text{and}\quad (\mu^{*}_{1}, \mu^{*}_{2}) = \left(\tfrac{5}{12}, \tfrac{19}{12}\right).$$

Hence, the efficiencies of the RSS and DRSS estimators with respect to SRS are 1.33 and 1.516, respectively (using (1) and (2)).

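For $m = 3$ we have

$$(\mu_{(1)}, \mu_{(2)}, \mu_{(3)}) = \left(\tfrac{1}{3}, \tfrac{5}{6}, \tfrac{11}{6}\right) \quad\text{and}\quad (\mu^{*}_{1}, \mu^{*}_{2}, \mu^{*}_{3}) = \left(\tfrac{131}{504}, \tfrac{983}{1260}, \tfrac{4939}{2520}\right),$$

and hence the corresponding efficiencies are 1.640 and 2.024.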

4.3. Normal distribution, N(0,1)

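For $m = 2$ we have $(\mu_{(1)}, \mu_{(2)}) = (-1/\sqrt{\pi}, 1/\sqrt{\pi})$, and hence the efficiency of $\hat{\mu}_{\mathrm{RSS}}$ with respect to $\hat{\mu}_{\mathrm{SRS}}$ is $\pi/(\pi - 1) \approx 1.47$. The efficiency of $\hat{\mu}_{\mathrm{DRSS}}$ with respect to $\hat{\mu}_{\mathrm{SRS}}$ is found to be 1.648 (using numerical integration).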

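For $m = 3$, $(\mu_{(1)}, \mu_{(2)}, \mu_{(3)}) = (-3/(2\sqrt{\pi}), 0, 3/(2\sqrt{\pi}))$, and hence the efficiency of $\hat{\mu}_{\mathrm{RSS}}$ with respect to $\hat{\mu}_{\mathrm{SRS}}$ is $2\pi/(2\pi - 3) = 1.914$. The corresponding efficiency of $\hat{\mu}_{\mathrm{DRSS}}$ with respect to $\hat{\mu}_{\mathrm{SRS}}$ is 2.558 (using numerical integration).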

For higher values of $m$ ($m = 4, 5$), the efficiencies are calculated numerically. Table 2 summarizes the values.

It can be seen from Table 2 that there is a significant improvement in efficiency when DRSS is used rather than RSS or SRS. Also, the improvement in efficiency for the two symmetric distributions (normal and uniform) is higher than that for the skewed distribution (exponential).


5. Concluding remarks

It has been seen that the DRSS estimator of the population mean is more efficient than both the SRS and RSS estimators. Thus, a gain in efficiency is obtained using DRSS without increasing the set size $m$. Hence, DRSS is a more representative sample of the population than RSS and SRS. It also appears, using the concept of degree of distinguishability, that no extra effort is needed to obtain accurate ranking in the second stage of obtaining DRSS.

It is believed that this work is the first step towards the study of multi-stage RSS.

Acknowledgements

Our sincere thanks are due to the referee, who provided many critical comments and helpful suggestions which significantly improved the original version of the paper.

References

Esary, J., Proschan, F., Walkup, D., 1967. Association of random variables, with applications. Ann. Math. Statist. 38, 1466-1474.

Kour, A., Patil, G., Sinha, A., Taillie, C., 1995. Ranked set sampling: an annotated bibliography. Environ. Ecol. Statist. 2, 25-54.

Kour, A., Patil, G., Shirk, S.J., Taillie, C., 1996. Environmental sampling with a concomitant variable: a comparison between ranked set sampling and stratified simple random sampling. J. Appl. Statist. 23 (2 and 3), 231-255.

Kour, A., Patil, G., Taillie, C., 1997. Unequal allocation models for ranked set sampling with skewed distributions. Biometrics 53, 123-130.

McIntyre, G., 1952. A method for unbiased selective sampling, using ranked sets. Austral. J. Agric. Res. 3, 385-390.

Stokes, L., 1980. Estimation of variance using judgment ordered ranked set samples. Biometrics 36, 35-42.

Takahasi, K., 1970. Practical note on estimation of population mean based on samples stratified by means of ordering. Ann. Inst. Statist. Math. 22, 421-428.

Takahasi, K., Wakimoto, K., 1968. On unbiased estimates of the population mean based on the sample stratified by means of ordering. Ann. Inst. Statist. Math. 20, 1-31.

Вам также может понравиться

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeОт EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeРейтинг: 4 из 5 звезд4/5 (5794)

- Contribution of Obesity and Abdominal Fat Mass To Risk of Stroke and TransientДокумент8 страницContribution of Obesity and Abdominal Fat Mass To Risk of Stroke and TransientLubna AmrОценок пока нет

- Bachelor Project PowerpointДокумент26 страницBachelor Project PowerpointLubna AmrОценок пока нет

- A Nonparametric Test For Symmetry Using RSS PDFДокумент19 страницA Nonparametric Test For Symmetry Using RSS PDFLubna AmrОценок пока нет

- The Yellow House: A Memoir (2019 National Book Award Winner)От EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Рейтинг: 4 из 5 звезд4/5 (98)

- WHO's Drinking Water Standards 1993Документ1 страницаWHO's Drinking Water Standards 1993Lubna Amr100% (1)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceОт EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceРейтинг: 4 из 5 звезд4/5 (895)

- Koti and Pau PDFДокумент14 страницKoti and Pau PDFLubna AmrОценок пока нет

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersОт EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersРейтинг: 4.5 из 5 звезд4.5/5 (344)

- Question 1 Matlab CodeДокумент3 страницыQuestion 1 Matlab CodeLubna AmrОценок пока нет

- The Little Book of Hygge: Danish Secrets to Happy LivingОт EverandThe Little Book of Hygge: Danish Secrets to Happy LivingРейтинг: 3.5 из 5 звезд3.5/5 (399)

- APA Citation StyleДокумент13 страницAPA Citation StyleCamille FernandezОценок пока нет

- Q2) Gaussian Elimination: FunctionДокумент3 страницыQ2) Gaussian Elimination: FunctionLubna AmrОценок пока нет

- The Emperor of All Maladies: A Biography of CancerОт EverandThe Emperor of All Maladies: A Biography of CancerРейтинг: 4.5 из 5 звезд4.5/5 (271)

- Minitab f1&2Документ42 страницыMinitab f1&2Lubna AmrОценок пока нет

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaОт EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaРейтинг: 4.5 из 5 звезд4.5/5 (266)

- Ecoflam Burners 2014 enДокумент60 страницEcoflam Burners 2014 enanonimppОценок пока нет

- Never Split the Difference: Negotiating As If Your Life Depended On ItОт EverandNever Split the Difference: Negotiating As If Your Life Depended On ItРейтинг: 4.5 из 5 звезд4.5/5 (838)

- University of Cambridge International Examinations General Certificate of Education Advanced LevelДокумент4 страницыUniversity of Cambridge International Examinations General Certificate of Education Advanced LevelHubbak KhanОценок пока нет

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryОт EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryРейтинг: 3.5 из 5 звезд3.5/5 (231)

- Factors That Affect College Students' Attitudes Toward MathematicsДокумент17 страницFactors That Affect College Students' Attitudes Toward MathematicsAnthony BernardinoОценок пока нет

- Class VI (Second Term)Документ29 страницClass VI (Second Term)Yogesh BansalОценок пока нет

- Disc Brake System ReportДокумент20 страницDisc Brake System ReportGovindaram Rajesh100% (1)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureОт EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureРейтинг: 4.5 из 5 звезд4.5/5 (474)

- 1.project FullДокумент75 страниц1.project FullKolliparaDeepakОценок пока нет

- Team of Rivals: The Political Genius of Abraham LincolnОт EverandTeam of Rivals: The Political Genius of Abraham LincolnРейтинг: 4.5 из 5 звезд4.5/5 (234)

- Microprocessor I - Lecture 01Документ27 страницMicroprocessor I - Lecture 01Omar Mohamed Farag Abd El FattahОценок пока нет

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyОт EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyРейтинг: 3.5 из 5 звезд3.5/5 (2259)

- Chap005 3Документ26 страницChap005 3Anass BОценок пока нет

- Epson WorkForce Pro WF-C878-879RДокумент8 страницEpson WorkForce Pro WF-C878-879Rsales2 HARMONYSISTEMОценок пока нет

- Serie W11 PDFДокумент2 страницыSerie W11 PDFOrlandoОценок пока нет

- Thermal Breakthrough Calculations To Optimize Design of Amultiple-Stage EGS 2015-10Документ11 страницThermal Breakthrough Calculations To Optimize Design of Amultiple-Stage EGS 2015-10orso brunoОценок пока нет

- Synthesis of Glycerol Monooctadecanoate From Octadecanoic Acid and Glycerol. Influence of Solvent On The Catalytic Properties of Basic OxidesДокумент6 страницSynthesis of Glycerol Monooctadecanoate From Octadecanoic Acid and Glycerol. Influence of Solvent On The Catalytic Properties of Basic OxidesAnonymous yNMZplPbVОценок пока нет

- The Unwinding: An Inner History of the New AmericaОт EverandThe Unwinding: An Inner History of the New AmericaРейтинг: 4 из 5 звезд4/5 (45)

- Famous MathematicianДокумент116 страницFamous MathematicianAngelyn MontibolaОценок пока нет

- 21 API Functions PDFДокумент14 страниц21 API Functions PDFjet_mediaОценок пока нет

- Grade-9 (3rd)Документ57 страницGrade-9 (3rd)Jen Ina Lora-Velasco GacutanОценок пока нет

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreОт EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreРейтинг: 4 из 5 звезд4/5 (1090)

- Chapter 1 IntroductionДокумент49 страницChapter 1 IntroductionGemex4fshОценок пока нет

- Lab 3.1 - Configuring and Verifying Standard ACLsДокумент9 страницLab 3.1 - Configuring and Verifying Standard ACLsRas Abel BekeleОценок пока нет

- TitleДокумент142 страницыTitleAmar PašićОценок пока нет

- Biztalk and Oracle IntegrationДокумент2 страницыBiztalk and Oracle IntegrationkaushiksinОценок пока нет

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)От EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Рейтинг: 4.5 из 5 звезд4.5/5 (121)

- Sample Chapter - Oil and Gas Well Drilling Technology PDFДокумент19 страницSample Chapter - Oil and Gas Well Drilling Technology PDFDavid John100% (1)

- PRD Doc Pro 3201-00001 Sen Ain V31Документ10 страницPRD Doc Pro 3201-00001 Sen Ain V31rudybestyjОценок пока нет

- The Number MysteriesДокумент3 страницыThe Number Mysterieskothari080903Оценок пока нет

- HANA OverviewДокумент69 страницHANA OverviewSelva KumarОценок пока нет

- Student - The Passive Voice Without AnswersДокумент5 страницStudent - The Passive Voice Without AnswersMichelleОценок пока нет

- OK Flux 231 (F7AZ-EL12) PDFДокумент2 страницыOK Flux 231 (F7AZ-EL12) PDFborovniskiОценок пока нет

- 003pcu3001 Baja California - JMH - v4 PDFДокумент15 страниц003pcu3001 Baja California - JMH - v4 PDFEmir RubliovОценок пока нет

- DocuДокумент77 страницDocuDon'tAsK TheStupidOnesОценок пока нет

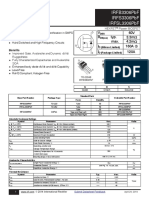

- Irfb3306Pbf Irfs3306Pbf Irfsl3306Pbf: V 60V R Typ. 3.3M: Max. 4.2M I 160A C I 120AДокумент12 страницIrfb3306Pbf Irfs3306Pbf Irfsl3306Pbf: V 60V R Typ. 3.3M: Max. 4.2M I 160A C I 120ADirson Volmir WilligОценок пока нет

- Workshop 2 Electrical Installations Single PhaseДокумент3 страницыWorkshop 2 Electrical Installations Single PhaseDIAN NUR AIN BINTI ABD RAHIM A20MJ0019Оценок пока нет