
Cloud Data Protection for Masses

A thesis submitted in partial fulfillment of the requirements

for the award of the degree of

Bachelor of Technology

in

Computer Science and Engineering

Submitted by

M.moulika davi (106F1A0526)

N.sowjanya (106F1A0528)

k.sushmitha (106F1A0520)

k.kanaka durga (106F1A0517)

Under the esteemed guidance

Department of Computer Science and Engineering

Sai Ganapati Engineering College

(Approved by AICTE, Affiliated to JNTU, Kakinada)

Gidajala Village, Anandhapuram, Visakhapatnam-73

2013-2014

Sai Ganapati Engineering College

(Approved by AICTE, Affiliated to JNTU, Kakinada)

Gidajala Village, Anandhapuram, Visakhapatnam-73

DEPARTMENT OF COMPUTER SCIENCE AND ENGINEERING

CERTIFICATE

This is to certify that the project entitled "Cloud Data Protection for Masses"

is submitted by

M.moulika davi (106F1A0526)

N.sowjanya (106F1A0528)

K.susmitha (106F1A0520)

K.kanaka durga (106F1A0517)

in partial fulfillment of the requirement for the award of the degree of Bachelor of Technology in Computer Science and Engineering from Jawaharlal Nehru Technological University, Kakinada, Visakhapatnam, for the academic year 2013-2014.

Signature of the Internal Guide

Date:

Mrs. N.R.G.K.PRASAD

Assistant Professor

Internal Guide

Signature of the HOD

Date:

Mrs. D.MANINDRA SAI

H.O.D

Head of the Department

External Examiner:

ABSTRACT

Offering strong data protection to cloud users while enabling rich applications is a

challenging task. We explore a new cloud platform architecture called Data Protection

as a Service, which dramatically reduces the per-application development effort

required to offer data protection, while still allowing rapid development and

maintenance.

EXISTING SYSTEM

Cloud computing promises lower costs, rapid scaling, easier maintenance, and service availability anywhere, anytime. A key challenge is how to ensure, and build confidence, that the cloud can handle user data securely. A recent Microsoft survey found that 58 percent of the public and 86 percent of business leaders are excited about the possibilities of cloud computing, but more than 90 percent of them are worried about the security, availability, and privacy of their data as it rests in the cloud.

PROPOSED SYSTEM

We propose a new cloud computing paradigm, data protection as a service (DPaaS): a suite of security primitives offered by a cloud platform that enforces data security and privacy and offers evidence of privacy to data owners, even in the presence of potentially compromised or malicious applications. These primitives include securing data through encryption, logging, and key management.
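As an illustration of the encryption primitive named above, the following is a minimal sketch of how a platform might seal one data unit before storing it. The class name `DataUnitCipher`, the choice of AES-GCM, and the layout "IV followed by ciphertext" are assumptions made for this example; the report does not specify the cipher or storage format actually used.

```java
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;

/** Hypothetical helper (not from the project code): encrypts one data unit before storage. */
final class DataUnitCipher {
    private static final int IV_BYTES = 12;   // nonce size commonly used with GCM
    private static final int TAG_BITS = 128;  // authentication tag length
    private static final SecureRandom RANDOM = new SecureRandom();

    /** Generates a fresh 128-bit AES key, e.g. one per data owner (an assumption). */
    static SecretKey newKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(128);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Returns the random IV followed by the ciphertext and its authentication tag. */
    static byte[] encrypt(SecretKey key, byte[] plaintext) {
        try {
            byte[] iv = new byte[IV_BYTES];
            RANDOM.nextBytes(iv);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, iv));
            byte[] sealed = cipher.doFinal(plaintext);
            byte[] stored = Arrays.copyOf(iv, iv.length + sealed.length);
            System.arraycopy(sealed, 0, stored, iv.length, sealed.length);
            return stored;
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Reverses encrypt(); fails if the stored bytes were modified or the key is wrong. */
    static byte[] decrypt(SecretKey key, byte[] stored) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, key,
                    new GCMParameterSpec(TAG_BITS, stored, 0, IV_BYTES));
            return cipher.doFinal(stored, IV_BYTES, stored.length - IV_BYTES);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("data unit failed authentication", e);
        }
    }

    public static void main(String[] args) {
        SecretKey key = newKey();
        byte[] stored = encrypt(key, "user record".getBytes(StandardCharsets.UTF_8));
        System.out.println(new String(decrypt(key, stored), StandardCharsets.UTF_8));
    }
}
```

An authenticated mode is used here so that the same primitive covers both privacy and integrity of the stored unit; a real platform would also need the key management and logging primitives, which this sketch leaves out.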

MODULE DESCRIPTION:

1. Cloud Computing

2. Trusted Platform Module

3. Third Party Auditor

4. User Module

1. Cloud Computing

Cloud computing is the provision of dynamically scalable and often virtualized resources as a service over the Internet. Users need not have knowledge of, expertise in, or control over the technology infrastructure in the "cloud" that supports them. Cloud computing represents a major change in how we store information and run applications. Instead of hosting apps and data on an individual desktop computer, everything is hosted in the "cloud", an assemblage of computers and servers accessed via the Internet.

Cloud computing exhibits the following key characteristics:

1. Agility improves with users' ability to re-provision technological infrastructure resources.

2. Multi-tenancy enables sharing of resources and costs across a large pool of users, thus allowing for utilization and efficiency improvements for systems that are often only 10-20% utilized.

3. Reliability is improved if multiple redundant sites are used, which makes well-designed cloud computing suitable for business continuity and disaster recovery.

4. Performance is monitored, and consistent, loosely coupled architectures are constructed using web services as the system interface.

5. Security could improve due to centralization of data, increased security-focused resources, etc., but concerns can persist about loss of control over certain sensitive data, and the lack of security for stored kernels. Security is often as good as or better than in traditional systems, in part because providers are able to devote resources to solving security issues that many customers cannot afford. However, the complexity of security is greatly increased when data is distributed over a wider area or a greater number of devices, and in multi-tenant systems that are shared by unrelated users. In addition, user access to security audit logs may be difficult or impossible. Private cloud installations are in part motivated by users' desire to retain control over the infrastructure and avoid losing control of information security.

6. Maintenance of cloud computing applications is easier, because they do not need to be installed on each user's computer and can be accessed from different places.

2. Trusted Platform Module

Trusted Platform Module (TPM) is both the name of a published specification detailing a secure cryptoprocessor that can store cryptographic keys that protect information, and the general name of implementations of that specification, often called the "TPM chip" or "TPM Security Device". The TPM specification is the work of the Trusted Computing Group.

Disk encryption is a technology that protects information by converting it into unreadable code that cannot be deciphered easily by unauthorized people. It uses disk encryption software or hardware to encrypt every bit of data that goes on a disk or disk volume, and so prevents unauthorized access to data storage. The term "full disk encryption" (or whole disk encryption) is often used to signify that everything on a disk is encrypted, including the programs that can encrypt bootable operating system partitions. Such programs must still leave the master boot record (MBR), and thus part of the disk, unencrypted. There are, however, hardware-based full disk encryption systems that can truly encrypt the entire boot disk, including the MBR.
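The idea of a protected key that in turn protects other keys can be sketched in software. The example below is only an illustration: it uses an ordinary AES key as a stand-in for a key held inside a TPM, and the standard Java `AESWrap` cipher to seal a data key under it. The class and method names are hypothetical, and no real TPM interface is involved.

```java
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import java.security.GeneralSecurityException;
import java.util.Arrays;

/** Hypothetical sketch: a software storage key stands in for a TPM-protected key. */
final class KeySealingSketch {

    /** Creates a 128-bit AES key. */
    static SecretKey newAesKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(128);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Wraps (encrypts) the data key under the storage key so it can be kept on disk. */
    static byte[] seal(SecretKey storageKey, SecretKey dataKey) {
        try {
            Cipher cipher = Cipher.getInstance("AESWrap");
            cipher.init(Cipher.WRAP_MODE, storageKey);
            return cipher.wrap(dataKey);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Recovers the data key; fails if the storage key is wrong or the blob was altered. */
    static SecretKey unseal(SecretKey storageKey, byte[] sealed) {
        try {
            Cipher cipher = Cipher.getInstance("AESWrap");
            cipher.init(Cipher.UNWRAP_MODE, storageKey);
            return (SecretKey) cipher.unwrap(sealed, "AES", Cipher.SECRET_KEY);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("sealed key could not be recovered", e);
        }
    }

    public static void main(String[] args) {
        SecretKey storageKey = newAesKey(); // assumption: would live inside the TPM
        SecretKey dataKey = newAesKey();    // key that actually encrypts user data
        byte[] sealed = seal(storageKey, dataKey);
        SecretKey recovered = unseal(storageKey, sealed);
        System.out.println(Arrays.equals(dataKey.getEncoded(), recovered.getEncoded()));
    }
}
```

With a hardware TPM the storage key would never leave the chip; the sketch only shows the wrap and unwrap steps that such a design relies on.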

3. Third Party Auditor

In this module, the auditor views all user data, verifies it, and identifies any data that has been changed. The auditor views user data directly, without a key, under permission granted by the admin. After auditing, the data is stored in the cloud.
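One way an auditor could detect changed data is to compare each record against a digest recorded when it was first stored. The sketch below assumes this digest-comparison approach and SHA-256; the report does not say how the auditor module actually verifies data, and the names `AuditSketch` and `changedRecords` are hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical auditor check: flags records whose content no longer matches its digest. */
final class AuditSketch {

    /** SHA-256 digest of a record's bytes. */
    static byte[] sha256(byte[] data) {
        try {
            return MessageDigest.getInstance("SHA-256").digest(data);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Returns the sorted names of records that are missing or whose content changed. */
    static List<String> changedRecords(Map<String, byte[]> recordedDigests,
                                       Map<String, byte[]> currentData) {
        List<String> changed = new ArrayList<>();
        for (Map.Entry<String, byte[]> recorded : recordedDigests.entrySet()) {
            byte[] current = currentData.get(recorded.getKey());
            boolean intact = current != null
                    && MessageDigest.isEqual(recorded.getValue(), sha256(current));
            if (!intact) {
                changed.add(recorded.getKey());
            }
        }
        Collections.sort(changed);
        return changed;
    }

    public static void main(String[] args) {
        Map<String, byte[]> data = new HashMap<>();
        data.put("report.txt", "original".getBytes(StandardCharsets.UTF_8));
        Map<String, byte[]> digests = new HashMap<>();
        digests.put("report.txt", sha256(data.get("report.txt")));

        data.put("report.txt", "edited".getBytes(StandardCharsets.UTF_8));
        System.out.println(changedRecords(digests, data));
    }
}
```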

4. User Module

The user stores large amounts of data in the cloud and accesses it using a secure key. The admin provides the secure key after the data has been encrypted; the data is encrypted using the TPM. The user's data is stored after the auditor has viewed and verified it, including any changed data. When the user views the data again, the admin sends the user a message reporting only the data that has changed.

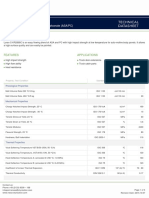

System Configuration:-

H/W System Configuration:-

Processor : Pentium III

Speed : 1.1 GHz

RAM : 256 MB(min)

Hard Disk : 20 GB

Floppy Drive : 1.44 MB

Key Board : Standard Windows Keyboard

Mouse : Two or Three Button Mouse

Monitor : SVGA

S/W System Configuration:-

Operating System : Windows XP

Application Server : Tomcat 5.0/6.x

Front End : HTML, Java, JSP

Scripts : JavaScript

Server-side Script : Java Server Pages

Database : MySQL

Database Connectivity : JDBC

CONCLUSION

As private data moves online, the need to secure it properly becomes

increasingly urgent. The good news is that the same forces concentrating data in

enormous datacenters will also aid in using collective security expertise more

effectively. Adding protections to a single cloud platform can immediately benefit

hundreds of thousands of applications and, by extension, hundreds of millions of

users. While we have focused here on a particular, albeit popular and privacy-sensitive, class of applications, many other applications also need solutions.

ACKNOWLEDGEMENT

I wish to express my deep sense of gratitude to __________________________, Assistant Professor, Computer Science & Engineering Department, for his wholehearted co-operation, unfailing inspiration and valuable guidance. Throughout the project work, his useful suggestions and constant encouragement have given the right direction and shape to our learning.

I thank Dr. V. RANGA RAO GARU, Principal, for extending his utmost support and co-operation in providing all the provisions for the successful completion of the project.

I consider it my privilege to express my deepest gratitude to D. MANINDRA SAI, Head of the Department, for his valuable support.

I sincerely thank all the members of the staff in the Department of Computer

Science & Engineering for their sustained help in our pursuits. I thank all those who

directly or indirectly helped in successfully carrying out this work.

M.moulika davi (106F1A0526)

N.sowjanya (106F1A0528)

K.sushmitha (106F1A0520)

K.kanaka durga (106F1A0517)

CONTENTS

Abstract

List of Figures

List of Tables

Chapter 1: Introduction
1.1 Motivation
1.2 Background
1.3 Scope of the project
1.3 Objective

Chapter 2: Literature Review
2.1 Introduction
2.1 a. Technology used
2.1 b. Database used
2.2 Techniques: Algorithm

Chapter 3: Problem Specification
1 Existing system

Chapter 4: Proposed Scheme
4.1 Proposed system
4.2 System models
4.3 Requirements specification
4.4 Algorithm
4.5 Algorithm for HAPPY architecture
4.6 System Analysis
4.6.1 Introduction
4.6.2 Identifying objects
4.6.3 Mapping use case with sequence diagram
4.6.4 Class diagram
4.6.5 Modeling behavior with state chart diagram
4.6.6 Modeling behavior with activity diagram
4.7 System Design
4.7.1 Introduction
4.7.2 Purpose of the system
4.7.3 Identifying design goals
4.7.4 Proposed software architecture
4.7.5 Identifying subsystem decomposition
4.7.6 Architecture
4.7.7 Database design
4.7.7 DFD
4.8 Object design
4.8.1 Introduction
4.8.2 Identifying missing attributes and operations
4.8.3 Type, Signature and Visibility
4.8.4 Specifying constraints
4.8.5 Realizing associations
4.8.6 UML Diagrams

Chapter 5: Implementation
5.1 Introduction
5.2 Hardware-Enhanced Association Rule Mining with Hashing and Pipelining
5.3 Code for admin trimming
5.4 Code for admin page
5.5 Code for vendor edit products

Chapter 6: Testing
6.1 Introduction
6.2 Unit testing
6.3 Integration testing
6.4 Validation testing
6.5 System testing
6.6 Test Cases

Chapter 7: Appendices and Results
7.1 Difference with Traditional Mining
7.2 Output Screens & Reports
7.3 Performance analysis

Chapter 8: Conclusion and Future Work
8.1 Conclusion
8.1.1 Limitations
8.2 Future work

OPERATIONAL MANUAL/USER MANUAL

REFERENCES

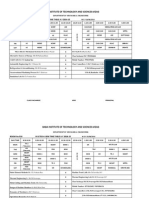

LIST OF TABLES

| S.No | Table No | Name of Table |
|---|---|---|
| 1 | 4.1 | Performance criteria |
| 2 | 4.2 | Design criteria |
| 3 | 4.3 | Design criteria |
| 4 | 4.4 | Maintenance Criteria |
| 5 | 6.1 | TestcaseId: HAPPI_TC_adm_001 |
| 6 | 6.2 | TestcaseId: HAPPI_TC_ven_002 |
| 7 | 6.3 | TestcaseId: HAPPI_TC_cus_003 |
| 8 | 6.4 | TestcaseId: add Product_004 |
| 9 | 6.5 | TestcaseId: buy Product_005 |
| 10 | 6.6 | TestcaseId: transactiontrimming_006 |

LIST OF FIGURES

| S.No | Fig No | Name of the Figure |
|---|---|---|
| 1 | 4.1 | Sequence diagram for view results |
| 2 | 4.2 | Sequence diagram for Add product |
| 3 | 4.3 | Sequence diagram for Buy product |
| 4 | 4.4 | Class Diagrams |
| 5 | 4.5 | Modeling Behavior with State Chart Diagram |
| 6 | 4.6 | Modeling Behavior with Activity Diagram |
| 7 | 4.7 | Deployment diagram |
| 8 | 4.8 | Collaboration diagram |
| 9 | 4.9 | Component diagram |
| 10 | 4.10 | Activity diagram |
| 11 | 4.11 | Realizing Associations |

1. INTRODUCTION

Cloud computing promises lower costs, rapid scaling, easier maintenance, and

services that are available anywhere, anytime. A key challenge in moving to the cloud

is to ensure and build confidence that user data is handled securely in the cloud. A recent Microsoft survey [10] found that "...58% of the public and 86% of business leaders are excited about the possibilities of cloud computing. But, more than 90% of them are worried about security, availability, and privacy of their data as it rests in the cloud."

There is tension between user data protection and rich computation in the cloud.

Users want to maintain control of their data, but also want to benefit from rich

services provided by application developers using that data. At present, there is little

platform-level support and standardization for verifiable data protection in the cloud.

On the other hand, user data protection while enabling rich computation is

challenging. It requires specialized expertise and a lot of resources to build, which

may not be readily available to most application developers. We argue that it is highly

valuable to build in data protection solutions at the platform layer: The platform can

be a great place to achieve economy of scale for security, by amortizing the cost of

maintaining expertise and building sophisticated security solutions across different

applications and their developers.

Target Applications

There is a real danger in trying to solve security and privacy for the cloud,

because the cloud means too many different things to admit any one solution. To

make any actionable statements, we must constrain ourselves to a particular domain.

We choose to focus on an important class of widely used applications, which includes email, personal financial management, social networks, and business applications such as word processors and spreadsheets. More precisely, we focus on deployments that meet the following criteria:

- applications that provide services to a large number of distinct end users, as opposed to bulk data processing or workflow management for a single entity;

- applications whose data model consists mostly of sharable data units, where all data objects have ACLs consisting of one or more end users (or may be designated as public); and

- developers who write applications to run on a separate computing platform, which encompasses the physical infrastructure, job scheduling, user authentication, and the base software environment, rather than implementing the platform themselves.
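The data model in the second criterion, a sharable data unit carrying an ACL of end users or a public flag, can be sketched as a small class. The names `SharableDataUnit`, `mayRead`, and `read` are assumptions chosen for this illustration; the report does not define a concrete data structure.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashSet;
import java.util.Set;

/** Hypothetical sharable data unit: a payload plus the end users allowed to read it. */
final class SharableDataUnit {
    private final byte[] payload;
    private final Set<String> allowedUsers;
    private final boolean isPublic;

    SharableDataUnit(byte[] payload, Set<String> allowedUsers, boolean isPublic) {
        if (!isPublic && allowedUsers.isEmpty()) {
            // The text requires one or more end users unless the unit is public.
            throw new IllegalArgumentException("a private unit needs at least one user in its ACL");
        }
        this.payload = payload.clone();
        this.allowedUsers = Collections.unmodifiableSet(new HashSet<>(allowedUsers));
        this.isPublic = isPublic;
    }

    /** True if the unit is public or the user appears in the ACL. */
    boolean mayRead(String userId) {
        return isPublic || allowedUsers.contains(userId);
    }

    /** Returns a copy of the payload, or refuses if the ACL does not admit the user. */
    byte[] read(String userId) {
        if (!mayRead(userId)) {
            throw new SecurityException(userId + " is not on the ACL");
        }
        return payload.clone();
    }

    public static void main(String[] args) {
        SharableDataUnit unit = new SharableDataUnit(
                "shared spreadsheet".getBytes(StandardCharsets.UTF_8),
                new HashSet<>(Arrays.asList("alice", "bob")),
                false);
        System.out.println(unit.mayRead("alice")); // alice is on the ACL
        System.out.println(unit.mayRead("carol")); // carol is not
    }
}
```

Keeping the ACL attached to each unit, rather than inside application logic, is what lets a platform enforce access without trusting the application code.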

Data Protection and Usability Properties

A primary challenge in designing a platform-layer solution useful to many applications is allowing rapid development and maintenance. Overly rigid security will be as detrimental to a cloud service's value as inadequate security. Developers do not want their security problems solved by losing their users! To ensure a practical solution, we consider goals relating to data protection as well as ease of development and maintenance.

Integrity: The user's private (including shared) data is stored faithfully, and will not be corrupted.

Privacy: The user's private data will not be leaked to any unauthorized person.

Access transparency: It should be possible to obtain a log of accesses to data indicating who or what performed each access.

Ease of verification: It should be possible to offer some level of transparency to the users, such that they can to some extent verify what platform or application code is running. Users may also wish to verify that their privacy policies have been strictly enforced by the cloud.

Rich computation: The platform allows most computations on sensitive user data, and can run those computations efficiently.

Development and maintenance support: Any developer faces a long list of challenges: bugs to find and fix, frequent software upgrades, continuous change of usage patterns, and users' demand for high performance. Any credible data protection approach must grapple with these issues, which are often overlooked in the literature on the topic.
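The access transparency property above calls for a log that records who or what performed each access. The following sketch shows one possible shape for such a log, with each entry chained to the previous one by a SHA-256 hash so that later alteration can be detected. The hash chaining, the field names, and the `|` separator are all assumptions of this example, not something the report prescribes.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical hash-chained access log; not part of the original project code. */
final class AccessLogSketch {

    /** One logged access: who touched which data unit, and how. */
    static final class Entry {
        final String actor;
        final String dataId;
        final String action;
        final String previousHash;
        final String hash;

        Entry(String actor, String dataId, String action, String previousHash) {
            this.actor = actor;
            this.dataId = dataId;
            this.action = action;
            this.previousHash = previousHash;
            this.hash = entryHash(previousHash, actor, dataId, action);
        }
    }

    /** Appends an entry linked to the hash of the current last entry. */
    static void append(List<Entry> log, String actor, String dataId, String action) {
        String previous = log.isEmpty() ? "" : log.get(log.size() - 1).hash;
        log.add(new Entry(actor, dataId, action, previous));
    }

    /** Recomputes the chain; returns false if any entry was altered, replaced, or reordered. */
    static boolean verify(List<Entry> log) {
        String previous = "";
        for (Entry e : log) {
            String expected = entryHash(previous, e.actor, e.dataId, e.action);
            if (!e.previousHash.equals(previous) || !e.hash.equals(expected)) {
                return false;
            }
            previous = e.hash;
        }
        return true;
    }

    /** Simplification: fields are joined with '|', so they must not contain that character. */
    static String entryHash(String previousHash, String actor, String dataId, String action) {
        String text = previousHash + "|" + actor + "|" + dataId + "|" + action;
        try {
            byte[] digest = MessageDigest.getInstance("SHA-256")
                    .digest(text.getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        List<Entry> log = new ArrayList<>();
        append(log, "alice", "doc-1", "read");
        append(log, "auditor", "doc-1", "verify");
        System.out.println(verify(log));
    }
}
```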

Literature survey

A literature survey is the most important step in the software development process. Before developing the tool, it is necessary to determine the time factor, the economy, and the company's strength. Once these are satisfied, the next step is to determine which operating system and language can be used for developing the tool. Once the programmers start building the tool, they need a lot of external support, which can be obtained from senior programmers, from books, or from websites. These considerations are taken into account before building the proposed system.

SYSTEM DESIGN

Data Flow Diagram / Use Case Diagram / Flow Diagram

The DFD is also called a bubble chart. It is a simple graphical formalism that can be used to represent a system in terms of the input data to the system, the various processing carried out on these data, and the output data generated by the system.

[Figures: system design diagrams for Admin, User, and Auditor; use case diagram; class diagram; activity diagram]

IMPLEMENTATION

Implementation is the stage of the project when the theoretical design is turned into a working system. It can thus be considered the most critical stage in achieving a successful new system and in giving the user confidence that the new system will work and be effective.

The implementation stage involves careful planning, investigation of the existing system and its constraints on implementation, design of methods to achieve changeover, and evaluation of changeover methods.


6. SYSTEM TESTING

The purpose of testing is to discover errors. Testing is the process of trying to discover every conceivable fault or weakness in a work product. It provides a way to check the functionality of components, sub-assemblies, assemblies and/or a finished product. It is the process of exercising software with the intent of ensuring that the software system meets its requirements and user expectations and does not fail in an unacceptable manner. There are various types of tests, each addressing a specific testing requirement.

TYPES OF TESTS

Unit testing

Unit testing involves the design of test cases that validate that the internal program logic is functioning properly, and that program inputs produce valid outputs. All decision branches and internal code flow should be validated. It is the testing of individual software units of the application, and it is done after the completion of an individual unit, before integration. This is structural testing that relies on knowledge of the unit's construction and is invasive. Unit tests perform basic tests at component level and test a specific business process, application, and/or system configuration. Unit tests ensure that each unique path of a business process performs accurately to the documented specifications and contains clearly defined inputs and expected results.

Integration testing

Integration tests are designed to test integrated software components to

determine if they actually run as one program. Testing is event driven

and is more concerned with the basic outcome of screens or fields.

Integration tests demonstrate that although the components were individually satisfactory, as shown by successful unit testing, the combination of components is correct and consistent. Integration testing

is specifically aimed at exposing the problems that arise from the

combination of components.

Functional test

Functional tests provide systematic demonstrations that functions tested

are available as specified by the business and technical requirements,

system documentation, and user manuals.

Functional testing is centered on the following items:

Valid Input: identified classes of valid input must be accepted.

Invalid Input: identified classes of invalid input must be rejected.

Functions: identified functions must be exercised.

Output: identified classes of application outputs must be exercised.

Systems/Procedures: interfacing systems or procedures must be invoked.

Organization and preparation of functional tests is focused on requirements, key functions, or special test cases. In addition, systematic coverage of identified business process flows, data fields, predefined processes, and successive processes must be considered for testing. Before functional testing is complete, additional tests are identified and the effective value of current tests is determined.

System Test

System testing ensures that the entire integrated software system meets requirements. It tests a configuration to ensure known and predictable results. An example of system testing is the configuration-oriented system integration test. System testing is based on process descriptions and flows, emphasizing pre-driven process links and integration points.

White Box Testing

White Box Testing is testing in which the software tester has knowledge of the inner workings, structure and language of the software, or at least its purpose. It is used to test areas that cannot be reached from a black box level.

Black Box Testing

Black Box Testing is testing the software without any knowledge of the inner workings, structure or language of the module being tested. Black box tests, like most other kinds of tests, must be written from a definitive source document, such as a specification or requirements document. It is testing in which the software under test is treated as a black box: you cannot see into it. The test provides inputs and responds to outputs without considering how the software works.

6.1 Unit Testing:

Unit testing is usually conducted as part of a combined code and

unit test phase of the software lifecycle, although it is not uncommon for

coding and unit testing to be conducted as two distinct phases.

Test strategy and approach

Field testing will be performed manually and functional tests will

be written in detail.

Test objectives

All field entries must work properly.

Pages must be activated from the identified link.

The entry screen, messages and responses must not be delayed.

Features to be tested

Verify that the entries are of the correct format

No duplicate entries should be allowed

All links should take the user to the correct page.
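The first two features to be tested, correct entry format and no duplicate entries, can be expressed as a small validator with a few checks around it. This is a minimal sketch: the class name `EntryValidator` and the format rule (3 to 20 letters, digits, or underscores) are hypothetical, since the report does not state the actual field formats.

```java
import java.util.HashSet;
import java.util.Set;
import java.util.regex.Pattern;

/** Hypothetical field validator used to illustrate the unit test objectives. */
final class EntryValidator {
    // Assumed format for an entry: 3 to 20 letters, digits, or underscores.
    private static final Pattern ENTRY_FORMAT = Pattern.compile("[A-Za-z0-9_]{3,20}");

    private final Set<String> accepted = new HashSet<>();

    /** Accepts an entry only if it has the correct format and was not entered before. */
    boolean accept(String entry) {
        if (entry == null || !ENTRY_FORMAT.matcher(entry).matches()) {
            return false; // wrong format
        }
        return accepted.add(entry); // false when the entry is a duplicate
    }

    public static void main(String[] args) {
        EntryValidator validator = new EntryValidator();
        check(validator.accept("user_01"), "valid entry is accepted");
        check(!validator.accept("user_01"), "duplicate entry is rejected");
        check(!validator.accept("bad entry!"), "badly formatted entry is rejected");
        System.out.println("all checks passed");
    }

    private static void check(boolean condition, String description) {
        if (!condition) {
            throw new AssertionError("failed: " + description);
        }
    }
}
```

Each check corresponds to one class of input from the list above, which mirrors the valid input and invalid input categories used for functional testing.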

CONCLUSION

As private data moves online, the need to secure it properly becomes increasingly

urgent. The good news is that the same forces concentrating data in enormous

datacenters will also aid in using collective security expertise more effectively.

Adding protections to a single cloud platform can immediately benefit hundreds of

thousands of applications and, by extension, hundreds of millions of users. While we

have focused here on a particular, albeit popular and privacy-sensitive, class of

applications, many other applications also need solutions.

REFERENCES

[1] http://www.mydatacontrol.com.

[2] The need for speed. http://www.technologyreview.com/files/54902/GoogleSpeed

charts.pdf.

[3] C. Dwork. The differential privacy frontier. In TCC, 2009.

[4] C. Gentry. Fully Homomorphic Encryption Using Ideal Lattices. In STOC, pages 169-178, 2009.

[5] A. Greenberg. IBM's Blindfolded Calculator. Forbes, June 2009. Appeared in the July 13, 2009 issue of Forbes magazine.

[6] P. Maniatis, D. Akhawe, K. Fall, E. Shi, S. McCamant, and D. Song. Do You Know Where Your Data Are? Secure Data Capsules for Deployable Data Protection. In HotOS, 2011.

[7] S. McCamant and M. D. Ernst. Quantitative information flow as network flow capacity. In PLDI, pages 193-205, 2008.

[8] M. S. Miller. Towards a Unified Approach to Access Control and Concurrency Control. PhD thesis, Johns Hopkins University, Baltimore, Maryland, USA, May 2006.

[9] A. Sabelfeld and A. C. Myers. Language-Based Information-Flow Security. IEEE Journal on Selected Areas in Communications, 21(1):5-19, 2003.

[10] L. Whitney. Microsoft Urges Laws to Boost Trust in the Cloud.

http://news.cnet.com/ 8301-1009 3-10437844-83.html.

Sites Referred:

http://java.sun.com

http://www.sourcefordgde.com

http://www.networkcomputing.com/

http://www.roseindia.com/

http://www.java2s.com/

Вам также может понравиться

- 41 Killing of Kamsa 2Документ11 страниц41 Killing of Kamsa 2Kirti DuttОценок пока нет

- Structural Drafting Structural Symbols and Conventions GeneralДокумент10 страницStructural Drafting Structural Symbols and Conventions GeneralKirti DuttОценок пока нет

- Qty Sell Price Total Validity Scrip Qty Buy Price Total Qty Buyprice Total Validity Stocks Purchased VTD Sell Order VTD Buy OrderДокумент1 страницаQty Sell Price Total Validity Scrip Qty Buy Price Total Qty Buyprice Total Validity Stocks Purchased VTD Sell Order VTD Buy OrderKirti DuttОценок пока нет

- AbbreviationsДокумент36 страницAbbreviationsamitjaguriОценок пока нет

- Career ObjectiveДокумент2 страницыCareer ObjectiveKirti DuttОценок пока нет

- AbbreviationsДокумент36 страницAbbreviationsamitjaguriОценок пока нет

- Nad DeoДокумент10 страницNad DeoKirti DuttОценок пока нет

- Class Time Tables NewДокумент4 страницыClass Time Tables NewKirti DuttОценок пока нет

- Books AuthorsДокумент16 страницBooks AuthorsGerald GarciaОценок пока нет

- Telugu PoetsДокумент4 страницыTelugu PoetsKirti DuttОценок пока нет

- Sai Ganapati Engineering College 1st Year BTech 1st Semester Department-Wise TimetablesДокумент5 страницSai Ganapati Engineering College 1st Year BTech 1st Semester Department-Wise TimetablesKirti DuttОценок пока нет

- Qty Sell Price Total Validity Scrip Qty Buy Price Total Qty Buyprice Total Validity Stocks Purchased VTD Sell Order VTD Buy OrderДокумент1 страницаQty Sell Price Total Validity Scrip Qty Buy Price Total Qty Buyprice Total Validity Stocks Purchased VTD Sell Order VTD Buy OrderKirti DuttОценок пока нет

- DDR3 ReportNEW Bala (1) FДокумент76 страницDDR3 ReportNEW Bala (1) FKirti DuttОценок пока нет

- DocumentationДокумент41 страницаDocumentationKirti DuttОценок пока нет

- VisakhapatnamДокумент28 страницVisakhapatnamKirti DuttОценок пока нет

- SailgsgsfgsfgsgsfgsfgsgsgsfgsfДокумент1 страницаSailgsgsfgsfgsgsfgsfgsgsgsfgsfKirti DuttОценок пока нет

- RCC Sleeper Laying Machine Design RequirementsДокумент1 страницаRCC Sleeper Laying Machine Design RequirementsKirti DuttОценок пока нет

- Common Expressions: Arc WeldingДокумент3 страницыCommon Expressions: Arc WeldingKirti DuttОценок пока нет

- Higher Algebra - Hall & KnightДокумент593 страницыHigher Algebra - Hall & KnightRam Gollamudi100% (2)
