Petroleum Science and Technology, 26:1993-2008, 2008

Copyright Taylor & Francis Group, LLC

ISSN: 1091-6466 print/1532-2459 online

DOI: 10.1080/10916460701399493

Predicting Bubble-point Pressure and Formation-volume Factor of Nigerian Crude Oil System for Environmental Sustainability

E. O. Obanijesu¹ and D. O. Araromi¹

¹ Chemical Engineering Department, Ladoke Akintola University of Technology, Ogbomoso, Nigeria

Abstract: This paper presents a model for predicting the bubble-point pressure (P_b) and oil formation-volume-factor at bubble-point (B_ob) for crude samples collected from some producing wells in the Niger-Delta region of Nigeria. The model was developed using artificial neural networks with 542 experimentally obtained Pressure-Volume-Temperature (PVT) data sets. The model accurately predicts the P_b and B_ob as functions of the solution gas-oil ratio, the gas relative density, the oil specific gravity, and the reservoir temperature. In order to obtain a generalized accurate model, backpropagation with momentum for error minimization was used. The accuracy of the developed model in this study was compared with some published correlations. Apart from its accuracy, this model takes a shorter time to predict the PVT properties when compared with empirical correlations. The immediate reason for this may have to do with the non-linear nature of the empirical correlations.

Keywords: environmental sustainability, neural network, Niger-Delta, PVT

INTRODUCTION

The bubble point pressure (P_b), which is an important Pressure-Volume-Temperature (PVT) property, determines the oil-water flow ratio during hydrocarbon production. If it is too high, the quantity of produced water obtained at the surface may be higher than that of oil, production will be reduced, and well efficiency will be low. The produced water, also known as brine (SPE, 2006), is the water associated with oil and gas reservoirs that is produced along with the oil and gas. Produced water contains aromatic heterocycles and pyrogenics (CMI, 2002); trace (or heavy) metals such as cadmium (Cd), chromium (Cr), lead (Pb), zinc (Zn), copper (Cu), and nickel (Ni) (Obanijesu et al., 2004); and inorganic solutes like sulfur compounds, all of which could be transported to air through evaporation. The volatile organic compounds (VOCs), which are major components of the aromatic compounds, are primary contributors to the formation of photochemical oxidants (Mohammed et al., 2002) and increase cancer risk in humans at certain levels of exposure (Guo et al., 2004); hence the need to treat produced water before disposal. The disposal of the concentrate at the end of treatment equally constitutes a pressing technological problem. Dumping of the concentrate, which is the common method of disposal in Nigeria, may result in the accumulation of heavy metals in soils (Howari, 2004), and these toxic metals may pose an enormous threat to soil, water, and air (Baba and Deniz, 2004). At certain concentrations, exposure to heavy metals can result in human death (Obanijesu et al., 2004).

Address correspondence to E. O. Obanijesu, Chemical Engineering Department, Ladoke Akintola University of Technology, Ogbomoso, Nigeria. E-mail: emmanuel 257@yahoo.com

The conventional way of obtaining the required PVT data is either through an experimental setup early in the production life of the reservoir or through empirical correlations developed by researchers. Oftentimes, experimental data are very difficult and expensive to obtain, while most of the empirical correlations are complex in composition and highly non-linear.

Before the advent of artificial neural networks (ANN), various empirical correlations were in use for prediction (Gharbi and Elsharkawy, 2003; Petrosky and Farshad, 1993). They are essentially based on the assumption that the bubble point pressure normally increases with an increase in solution gas-oil ratio and reservoir temperature and with a decrease in oil API gravity and gas gravity. A further assumption is that B_ob increases with an increase in R_s, T, gas gravity, and oil gravity. A major shortcoming of most of these correlations is that the different crude oil and gas systems used to develop them exhibit regional trends in chemical and physical properties; the crudes are classified as paraffinic, naphthenic, or aromatic. This has made it impossible to use correlations developed from samples of certain regions to give satisfactory predictions of fluid properties for other regions.

Neural network-based modeling has been extensively used in process engineering in the last decade (Gharbi and Elsharkawy, 1997; Elsharkawy, 1998; Mujtaba and Hussain, 2001). An ANN is a computer-based algorithm which learns the behavior of a data population by self-tuning its parameters in such a way that the trained ANN matches the employed data accurately. For a given set of inputs, the ANN is able to produce a corresponding set of outputs according to mapping relationships that are encoded into the network structure during a period of training (also called learning). The output depends on the parameters of the network, that is, its weights and biases.

Importantly, if the data used are sufficiently descriptive, the ANN provides a rapid and confident prediction as soon as a new case, which has not been seen by the model during the training phase, is presented. Also, an ANN has the ability to discover patterns in data that are so obscure as to be imperceptible to normal observation and standard statistical methods.

Most of the PVT properties predicted have a very low mean relative error of 0.5-2.5%, with no data set having a relative error in excess of 5%.

Osman et al. (2001) used 803 data sets from the Middle East, Malaysia,

Colombia, and the Gulf of Mexico to develop an ANN model with 402 of

these data sets used to train it, 201 sets to cross validate the relationship

established during the training process, and the remaining 200 sets used to

test the model for accuracy evaluation.

Gharbi and Elsharkawy (2003) developed a neural network to predict P_b and B_ob for crude oil samples collected from North and South America, the North Sea, South East Asia, the Middle East, and Africa. In all, 5,200 experimentally obtained PVT data sets were used to train the model, while an additional 234 PVT data sets were used to investigate its effectiveness.

Since the petroleum industry in Nigeria is interested both in hydrocarbon production and in avoiding the problems that result from brine production, there is a dire need to develop a predictive model for Nigerian crude based on its peculiarities when compared to oil from other regions. This will assist in solving some of the emerging communal problems faced by the operating companies in the Niger-Delta area of the country due to environmental degradation.

METHODOLOGY

This work develops a predictive model for Nigerian crude oil PVT properties using an artificial neural network (ANN). This involves mapping the PVT data of Nigerian crude oil systems using a generalized neural network model, using statistical tools to analyze and compare the accuracy of the neural network predictions with published PVT correlations, and using the developed model to estimate the bubble point pressure (P_b) and oil formation volume factor (B_ob) for the Nigerian crude oil system.

The values of bubble point pressure (P_b) and oil formation volume factor (B_ob) were predicted. These two variables correspond to the ANN output vector (indicated by Y). The inputs to the ANN represent the information that the network needs in order to produce the output vector. The choice of inputs is made using engineering judgment. Too many inputs would overload the network and introduce unnecessary correlations among the data, and can therefore disrupt the network performance (Greaves et al., 2003). On the other hand, too few inputs could lead to inaccurate predictions.

From previous work, it has been established that P_b and B_ob are strong functions of the solution gas-oil ratio R_s, the reservoir temperature T, the gas specific gravity γ_g, and the oil specific gravity γ_o. These variables constituted the input vector for the networks. A Marquardt-Levenberg feedforward backpropagation ANN topology is used due to its robustness and efficient performance (Hussain and Ho, 2004). The network structure adopted for this work is a multilayer perceptron (MLP) consisting of an input layer with four neurons (T, R_s, γ_g, and γ_o) and several hidden layers with 50 neurons each (Figure 1). Inputs to the perceptron are individually weighted and summed. The perceptron computes the output as a function φ of the sum. The activation function φ is needed to introduce non-linearities into the network. This makes the multilayer network a powerful representation of non-linear systems. The output from the perceptron is given as

$$y(k) = \varphi\left(w^{T}(k)\,x(k)\right) \tag{1}$$

The weights are dynamically updated using the backpropagation algorithm. The difference between the target output T and the actual output y (the error e) is calculated as

$$e(k) = T(k) - y(k) \tag{2}$$

The errors are backpropagated through the layers and weight changes are made. The formula for adjusting the weights is given by

$$w(k+1) = w(k) + \mu\,x(k)\,e(k) \tag{3}$$

Once the weights are adjusted, the feedforward process is repeated.
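As an illustration of Equations (1)-(3), the following Python sketch trains a single perceptron on one input pattern. It is a minimal sketch and not the authors' MATLAB implementation: the tanh activation, the learning rate mu, the sample values, and the function names are all assumptions made for the example, and the weight change is taken to be scaled by the input as in the standard delta rule.

```python
import numpy as np

def perceptron_output(w, x, phi=np.tanh):
    """Eq. (1): weighted sum of the inputs passed through an activation."""
    return phi(np.dot(w, x))

def update_weights(w, x, target, mu=0.1, phi=np.tanh):
    """Eqs. (2) and (3): compute the error, then adjust the weights."""
    y = perceptron_output(w, x, phi)
    e = target - y                  # Eq. (2)
    return w + mu * e * x, e        # Eq. (3), delta-rule form (assumed)

# Hypothetical, already-scaled values standing in for (T, R_s, gamma_g, gamma_o).
x = np.array([0.5, -1.0, 0.25, 0.8])
w = np.zeros(4)
target = 0.6

errors = []
for _ in range(50):                 # repeat the feedforward/update cycle
    w, e = update_weights(w, x, target)
    errors.append(abs(e))
```

The list `errors` shrinks from one pass to the next, which is the behavior the text describes: each adjustment moves the perceptron output closer to the target.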

The training is intended to gradually update the connection weights to minimize the mean square error E through

$$E = \sum_{k=1}^{m}\sum_{i=1}^{n}\left(T_i^{(k)} - y_i^{(k)}\right)^{2} \tag{4}$$

The weights are adjusted according to the gradient descent rule, so that the actual output of the MLP moves closer to the desired output.
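The error measure in Equation (4) can be evaluated directly from arrays of targets and network outputs. The Python sketch below is illustrative only; the function name and the numbers are made up, with rows standing for data patterns and columns for the output neurons (here two, as for P_b and B_ob). As printed, Equation (4) is a plain double sum; dividing by the number of terms would give a mean.

```python
import numpy as np

def sum_squared_error(targets, outputs):
    """Eq. (4): squared differences summed over all patterns and outputs."""
    targets = np.asarray(targets, dtype=float)
    outputs = np.asarray(outputs, dtype=float)
    return float(np.sum((targets - outputs) ** 2))

# Two hypothetical patterns (rows) and two output neurons (columns).
targets = np.array([[1.0, 2.0],
                    [3.0, 4.0]])
outputs = np.array([[1.5, 2.0],
                    [2.0, 4.5]])

E = sum_squared_error(targets, outputs)   # 0.25 + 0.0 + 1.0 + 0.25
mean_E = E / targets.size                 # mean over all m*n terms
```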

The training algorithm and the network architecture were implemented using the MATLAB program. The algorithm is designed to approach second-order training speed without computing the Hessian matrix. Because the performance function has the form of a sum of squares, the Hessian matrix was approximated as

$$H = J^{T}J \tag{5}$$

and the gradient was computed as

$$g = J^{T}e \tag{6}$$

where J is the Jacobian matrix that contains the first derivatives of the network errors with respect to the weights and biases, and e is a vector of network errors.

Figure 1. Diagram of the perceptron model.

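The Jacobian matrix is computed through a standard backpropagation technique, and the approximated Hessian matrix is then used in the quasi-Newton update, thus,

$$x_{k+1} = x_k - \left[J^{T}J + \mu I\right]^{-1}J^{T}e \tag{7}$$

where x_k is the vector of weights and biases at iteration k, μ is a scalar damping parameter, and I is the identity matrix.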
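To make Equations (5)-(7) concrete, the following Python sketch applies the update to a small linear least-squares problem rather than to a neural network. It is a sketch under stated assumptions: the toy data, the fixed damping value mu (practical Levenberg-Marquardt implementations adapt it during training), and the names lm_step, residual, and jacobian are all illustrative and do not come from the authors' MATLAB code.

```python
import numpy as np

def lm_step(x, residual_fn, jacobian_fn, mu):
    """One update of Eq. (7) using the approximations in Eqs. (5) and (6)."""
    e = residual_fn(x)                       # vector of errors
    J = jacobian_fn(x)                       # d(errors)/d(parameters)
    H = J.T @ J                              # Eq. (5)
    g = J.T @ e                              # Eq. (6)
    return x - np.linalg.solve(H + mu * np.eye(x.size), g)   # Eq. (7)

# Toy problem (hypothetical): recover a and b in target = a*t + b.
t = np.array([0.0, 1.0, 2.0, 3.0])
target = 2.0 * t + 1.0

def residual(p):
    # Error defined as target minus model output, as in Eq. (2).
    return target - (p[0] * t + p[1])

def jacobian(p):
    # Derivative of the error with respect to (a, b).
    return -np.column_stack([t, np.ones_like(t)])

p = np.zeros(2)
for _ in range(20):
    p = lm_step(p, residual, jacobian, mu=1e-3)
```

With a small mu the step approaches a Gauss-Newton step, and with a large mu it approaches a short gradient-descent step, which is why the method is often described as a blend of the two.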

During the training phase, the weights are adjusted according to the generalized rule. To obtain accurate models for predicting P_b and B_ob as functions of the four input variables, the number of neurons was systematically varied to obtain a good fit to the data. Training was completed when the network was able to predict the given output.

Of the 542 data sets obtained from some wells in the Niger-Delta region of Nigeria, 264 sets were used to train the model, 142 sets were used to cross-validate the relationship established during the training process, and the remaining 136 sets were used to test the model for accuracy evaluation. Each data set contains the reservoir temperature T, solution gas-oil ratio R_s, gas specific gravity γ_g, oil API gravity γ_o, bubble point pressure P_b, and oil formation volume factor B_ob (Table 1).
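The paper does not state how the 542 data sets were assigned to the three subsets. As one possible illustration, the Python sketch below shuffles the record indices at random and cuts them into groups of 264, 142, and 136. The random assignment, the seed, and the function name split_indices are assumptions made for this example.

```python
import numpy as np

def split_indices(n_total, n_train, n_val, seed=0):
    """Shuffle record indices and cut them into train/validation/test."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n_total)
    train = idx[:n_train]
    val = idx[n_train:n_train + n_val]
    test = idx[n_train + n_val:]          # whatever remains
    return train, val, test

train_idx, val_idx, test_idx = split_indices(542, 264, 142)
```

Keeping the three index sets disjoint ensures that the test records play no part in training or in cross-validation.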

For comparison purposes, P_b and B_ob for some existing empirical models, namely those of Standing, Glasø, Labedi, and Elsharkawy, were calculated from their respective equations.

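For the Standing model, P_b and B_ob are respectively given as

$$P_b = \left[\left(\frac{R_s}{\gamma_g}\right)\operatorname{antilog}_{10}\left(0.00091T - 0.0142511\right) - 1.4\right] \tag{8}$$

$$B_{ob} = 0.972 + 1.47\times10^{-4}\left[R_s\left(\frac{\gamma_g}{\gamma_o}\right)^{0.5} + 1.25T\right]^{1.175} \tag{9}$$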
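Equation (9) is straightforward to evaluate numerically. The Python sketch below reads it as B_ob = 0.972 + 1.47×10^-4 [R_s (γ_g/γ_o)^0.5 + 1.25T]^1.175, with T in °F and γ_o the oil specific gravity. The function names and sample inputs are hypothetical, and the API-to-specific-gravity conversion is the standard definition rather than something stated in the paper.

```python
def api_to_specific_gravity(api):
    """Standard conversion from degrees API to oil specific gravity."""
    return 141.5 / (api + 131.5)

def standing_bob(rs, gamma_g, gamma_o, temp_f):
    """Eq. (9): Standing bubble-point oil formation volume factor (RB/STB)."""
    f = rs * (gamma_g / gamma_o) ** 0.5 + 1.25 * temp_f
    return 0.972 + 1.47e-4 * f ** 1.175

# Hypothetical sample within the ranges of Table 1.
bob = standing_bob(rs=500.0, gamma_g=0.8, gamma_o=0.85, temp_f=180.0)
```

For these inputs the result is about 1.30 RB/STB, and the value rises with R_s and T, in line with the trends noted in the Introduction.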

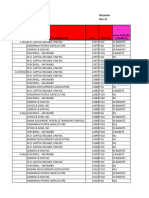

Table 1. Range of PVT data used for training

Bubble point pressure (psia) 953,660

Bubble point oil formation volume factor (RB/STB) 1.03101.659

Solution gas oil ratio (SCF/STB) 221,234

Gas specic density (air D 1) 0.66901.1510

Stock tank oil gravity (

API) 16.350.8

Reservoir temperature 108220

1998 E. O. Obanijesu and D. O. Araromi

For the Glasø model,

$$B_{ob} = 1.0 + 10^{\,-6.58511 + 2.91329\log(B_b) - 0.27683\left(\log(B_b)\right)^{2}} \tag{10}$$

$$B_b = R_s\left(\frac{\gamma_g}{\gamma_o}\right)^{0.526} + 0.968T \tag{11}$$

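while the B_ob values for Labedi and Elsharkawy were respectively calculated as

$$B_{ob} = 0.9897 + 0.0001364\left[R_s\left(\frac{\gamma_g}{\gamma_o}\right)^{0.5} + 1.25T\right]^{1.175} \tag{12}$$

$$B_{ob} = 1.0 + 40.428\times10^{-5}R_s + 63.802\times10^{-5}(T - 60) + 0.0780\times10^{-5}\left[R_s(T - 60)\left(\frac{\gamma_g}{\gamma_o}\right)\right] \tag{13}$$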
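The B_ob correlations in Equations (10)-(13) can be compared side by side in a few lines of code. The Python sketch below is illustrative only: it assumes base-10 logarithms in Equation (10), T in °F, and γ_o as oil specific gravity, and the function names and sample inputs are hypothetical.

```python
import math

def glaso_bob(rs, gamma_g, gamma_o, temp_f):
    """Eqs. (10) and (11): Glaso correlation."""
    b_b = rs * (gamma_g / gamma_o) ** 0.526 + 0.968 * temp_f       # Eq. (11)
    log_b = math.log10(b_b)
    exponent = -6.58511 + 2.91329 * log_b - 0.27683 * log_b ** 2
    return 1.0 + 10.0 ** exponent                                  # Eq. (10)

def labedi_bob(rs, gamma_g, gamma_o, temp_f):
    """Eq. (12): Labedi correlation."""
    f = rs * (gamma_g / gamma_o) ** 0.5 + 1.25 * temp_f
    return 0.9897 + 0.0001364 * f ** 1.175

def elsharkawy_bob(rs, gamma_g, gamma_o, temp_f):
    """Eq. (13): Elsharkawy correlation."""
    dt = temp_f - 60.0
    return (1.0 + 40.428e-5 * rs + 63.802e-5 * dt
            + 0.0780e-5 * (rs * dt * gamma_g / gamma_o))

# Hypothetical sample within the ranges of Table 1.
sample = dict(rs=500.0, gamma_g=0.8, gamma_o=0.85, temp_f=180.0)
results = {
    "Glaso": glaso_bob(**sample),
    "Labedi": labedi_bob(**sample),
    "Elsharkawy": elsharkawy_bob(**sample),
}
```

For this single sample the three correlations give values of roughly 1.27 to 1.32 RB/STB, so even on identical inputs they differ by several percent, which is the kind of spread the statistical comparison is meant to quantify.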

For comparative performance evaluation, the average relative percent error, average absolute percent error, minimum and maximum absolute percent error, standard deviation, and correlation coefficient were used as statistical tools for comparison between the developed Neural Network (NN) model and these existing empirical models.

The average percent relative error, which is the relative deviation of the estimated values from the experimental data, was calculated as

$$E_r = \frac{1}{n}\sum_{i=1}^{n}E_i \tag{14}$$

where

$$E_i = \left[\frac{y_i - \hat{y}_i}{y_i}\right]\times 100, \qquad i = 1, \ldots, n \tag{15}$$

The average absolute percent relative error, which measures the relative absolute deviation of the estimated values from the experimental values, was calculated as

$$E_a = \frac{1}{n}\sum_{i=1}^{n}\left|E_i\right| \tag{16}$$

The minimum and maximum absolute percent relative errors, which define the range of error for each correlation, are respectively given by

$$E_{\min} = \min_{i=1}^{n}\left|E_i\right| \tag{17}$$

$$E_{\max} = \max_{i=1}^{n}\left|E_i\right| \tag{18}$$
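The error measures in Equations (14)-(18) can be computed together from paired experimental and estimated values. The Python sketch below is a minimal illustration: it reads Equation (15) as E_i = 100 (y_i - ŷ_i)/y_i with y_i the experimental value and ŷ_i the estimate, which is an assumption about the notation, and the function names and numbers are made up.

```python
import numpy as np

def percent_relative_errors(y_exp, y_est):
    """Eq. (15): percent relative error of each estimate."""
    y_exp = np.asarray(y_exp, dtype=float)
    y_est = np.asarray(y_est, dtype=float)
    return (y_exp - y_est) / y_exp * 100.0

def error_summary(y_exp, y_est):
    """Eqs. (14) and (16)-(18) collected in one dictionary."""
    e = percent_relative_errors(y_exp, y_est)
    abs_e = np.abs(e)
    return {
        "E_r": float(e.mean()),        # Eq. (14)
        "E_a": float(abs_e.mean()),    # Eq. (16)
        "E_min": float(abs_e.min()),   # Eq. (17)
        "E_max": float(abs_e.max()),   # Eq. (18)
    }

# Hypothetical experimental and estimated values.
summary = error_summary([100.0, 200.0, 400.0], [110.0, 190.0, 400.0])
```

Here the individual errors are -10%, 5%, and 0%, so the signed average is about -1.67% while the absolute average is 5%. Positive and negative errors cancel in E_r, which is why E_a is the more informative measure of accuracy.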


The standard deviation, which is a measure of the spread or dispersion of the data distribution, was calculated as

$$\sigma = \sqrt{\frac{\sum_{i=1}^{n}\left(x_i - \bar{x}\right)^{2}}{n - 1}} \tag{19}$$

while the correlation coefficient, which represents the degree of success in reducing the standard deviation by regression analysis, was calculated as

$$r = \sqrt{1 - \frac{\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^{2}}{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^{2}}} \tag{20}$$

where

$$\bar{y} = \frac{1}{n}\sum_{i=1}^{n}y_i \tag{21}$$
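The standard deviation and correlation coefficient of Equations (19)-(21) can be sketched in Python as follows. The example assumes the usual squared deviations in both formulas and takes y_i as the experimental value and ŷ_i as the estimate. The function names and data are hypothetical.

```python
import numpy as np

def sample_std(x):
    """Eq. (19): standard deviation with an n - 1 denominator."""
    x = np.asarray(x, dtype=float)
    return float(np.sqrt(np.sum((x - x.mean()) ** 2) / (x.size - 1)))

def correlation_coefficient(y_exp, y_est):
    """Eqs. (20) and (21): r from residual and total sums of squares."""
    y_exp = np.asarray(y_exp, dtype=float)
    y_est = np.asarray(y_est, dtype=float)
    ss_res = np.sum((y_exp - y_est) ** 2)
    ss_tot = np.sum((y_exp - y_exp.mean()) ** 2)   # uses the mean of Eq. (21)
    return float(np.sqrt(1.0 - ss_res / ss_tot))

# Hypothetical experimental and estimated values.
y_exp = [1.0, 2.0, 3.0, 4.0]
y_est = [1.1, 1.9, 3.2, 3.8]

sigma = sample_std(y_exp)
r = correlation_coefficient(y_exp, y_est)
```

The closer the estimates track the experimental values, the smaller the residual sum of squares and the closer r comes to 1. The square root is only defined while the residual sum does not exceed the total sum of squares.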

RESULTS AND DISCUSSION

The training plots of the network, which show that the performance goal is met, are displayed in Figures 2 and 3. The accuracy of the ANN developed in this study is evaluated against the correlations developed by Standing, Glasø, Elsharkawy, and Labedi because of their global acceptability. The statistical results of the comparison for both P_b and B_ob are given in Tables 2 and 3. As shown in the tables, the proposed model shows high accuracy in predicting both the P_b and B_ob values, and achieves the lowest minimum error, lowest maximum error, lowest standard deviation, and highest correlation coefficient. The model achieves correlation coefficients of 99.2% and 98.9% for P_b and B_ob, respectively, which are the highest when compared with the existing correlations (Figures 4-6; summarized as Figures 7 and 8). Absolute percent error was used to test the accuracy of the models, and the results were compared with those of the other correlations. The ANN model has the lowest average absolute percent error for both properties: 4.36% for bubble point pressure (Figure 9) and 1.73% for oil formation volume factor (Figure 10). The scatter plots in Figures 11-15 depict the predicted B_ob versus experimental B_ob values. These cross plots indicate the degree of agreement between the experimental and the predicted values. If the agreement were perfect, all points would lie on the 45° line. The best plot is obtained for the ANN data, as shown in Figure 11, and the most scattered points are shown in Figure 13 representing Standing correlation, indicating its poor performance for this set of data.

Figure 2. ANN training plot for B_ob.

Figure 3. ANN training plot for P_b.

Table 2. Statistical parameters for the B_ob correlations

| Statistic | ANN | Standing | Glasø | Elsharkawy | Labedi |
|---|---|---|---|---|---|
| Average relative error (%) | 0.67 | 25.28 | 18.35 | 1.26 | 15.57 |
| Average absolute relative error (%) | 1.73 | 25.28 | 18.35 | 4.65 | 15.66 |
| Minimum absolute relative error (%) | 0.29 | 5.72 | 1.45 | 1.89 | 0.39 |
| Maximum absolute relative error (%) | 5.90 | 41.41 | 34.06 | 12.30 | 31.24 |
| Standard deviation (%) | 2.49 | 12.12 | 10.84 | 6.36 | 10.55 |

Correlation coefficient: 0.989 0.881 0.880 0.889

Table 3. Statistical parameters for the P_b correlations

| Statistic | ANN | Standing | Glasø |
|---|---|---|---|
| Average relative error (%) | 2.68 | 12.59 | 23.08 |
| Average absolute relative error (%) | 4.36 | 24.71 | 23.17 |
| Minimum absolute relative error (%) | 0.68 | 0.91 | 0.36 |
| Maximum absolute relative error (%) | 15.18 | 80.00 | 86.56 |
| Standard deviation (%) | 6.14 | 15.64 | 27.46 |
| Correlation coefficient | 0.992 | 0.864 | 0.868 |

Figure 4. Cross plot of P_b for ANN model.

Figure 5. Cross plot of P_b for Standing correlation.

Figure 6. Cross plot of P_b for Glasø correlation.

Figure 7. Comparison of P_b correlation coefficient for different correlations.

Figure 8. Comparison of B_ob correlation coefficient for different correlations.

Figure 9. Comparison of P_b average absolute percent relative error (AAPRE) for different correlations.

Figure 10. Comparison of B_ob average absolute percent relative error (AAPRE) for different correlations.

Figure 11. Cross plot of B_ob for artificial neural network model.

Figure 12. Cross plot of B_ob for Standing correlation.

Figure 13. Cross plot of B_ob for Glasø correlation.

Figure 14. Cross plot of B_ob for Elsharkawy correlation.

Figure 15. Cross plot of B_ob for Labedi correlation.

CONCLUSION

The developed model provides better predictions and higher accuracy for P_b and B_ob values, and achieves the lowest minimum error, lowest standard deviation, and highest correlation coefficient when compared to the empirical correlations developed by earlier researchers. Since it successfully predicts the bubble point pressure and oil formation volume factor for data falling within the range of data used in this study, which were specifically acquired from the Niger-Delta region of Nigeria, this model is recommended for prediction of the reservoirs within the region.

REFERENCES

Baba, A., and Deniz, O. (2004). Effect of warfare waste on soil. Int. J. Environ. Pollution 22:657-675.

CMI. (2002). Biodegradation of aromatic heterocycles from petroleum, produced water, and pyrogenic sources in marine sediments: Transformation pathway studies and evaluation of remediation approaches. Coastal Marine Institute, Louisiana State University, Catallo, USA. Available from http://cmi.lsu.edu/showReports.asp?table=Q400. Accessed on April 12, 2006.

Elsharkawy, A. M. (1998). Modeling the properties of crude oil and gas systems using RBF network. Paper SPE 49961, SPE Asia Pacific Oil and Gas Conference, Perth, Australia, October 12-14.

Gharbi, R. B., and Elsharkawy, A. M. (1997). Neural network model for estimating the PVT properties of Middle East crude oils. Paper SPE 37695, SPE Middle East Oil Show and Conference, Manama, Bahrain, March 15-17.

Gharbi, R. B., and Elsharkawy, A. M. (2003). Predicting the bubble point pressure and formation-volume-factor of worldwide crude oil systems. J. Pet. Sci. Tech. 21:53-79.

Greaves, M. A., Mujtaba, I. M., Barolo, M., Trotta, A., and Hussain, M. A. (2003). Neural Network Approach to Dynamic Optimization of Batch Distillation Application to a Middle-Vessel Column, Vol. 81(3).

Guo, H., Lee, S. C., Chan, L. Y., and Li, W. M. (2004). Risk assessment of exposure to volatile organic compounds in different indoor environments. Environ. Res. 94:57-66.

Howari, F. M. (2004). Heavy metal speciation around landfill in UAE. Int. J. Environ. Pollution 22:721-731.

Hussain, M. A., and Ho, P. Y. (2004). Adaptive sliding mode control with neural network based hybrid models. J. Process Control 14:157-176.

Mohammed, M. F., Kang, D., and Aneja, V. P. (2002). Volatile organic compounds in some urban locations in United States. Chemosphere 47:863-882.

Mujtaba, I. M., and Hussain, M. A. (2001). Application of Neural Networks and Other Learning Technologies in Process Engineering. London: Imperial College Press.

Obanijesu, E. O., Bello, O. O., Osinowo, F. A. O., and Macaulay, S. R. A. (2004). Development of a packed-bed reactor for the recovery of metals from industrial wastewaters. Int. J. Environ. Pollution 22:701-709.

Osman, E. A., Abdel-Wahhab, O. A., and Al-Marhoun, M. A. (2001). Prediction of oil PVT properties using neural networks. Paper SPE 68233, SPE Middle East Oil Show, Manama, Bahrain, March 17-20.

Petrosky, G. E., and Farshad, F. (1993). Pressure volume temperature for the Gulf of Mexico. Paper SPE 26644, SPE Annual Technical Conference and Exhibition, Houston, Texas, October 3-6.

SPE. (2006). Glossary of Industry Terms. Society of Petroleum Engineers. Available from http://www.spe.org/spe/jsp/basic/01104_1710,00.html. Accessed on April 3, 2006.

NOMENCLATURE

API: oil API gravity

R_s: solution gas oil ratio

P_b: bubble point pressure (psia)

P_s: separator pressure

T: reservoir temperature (°F)

T_r: reservoir temperature (°R)

T_s: separator temperature (°F)

T_k: reservoir temperature (K)

γ_o: oil specific gravity (air = 1.0)

γ_g: gas specific gravity (air = 1.0)

γ_gs: separator gas specific gravity (air = 1.0)

B_ob: bubble point oil formation volume factor (RB/STB)

T_i^(k): the target value of the i th output neuron for the given kth data pattern

y_i^(k): the prediction for the i th output neuron given the kth data pattern

M: number of training data patterns

N: number of neurons in the output layer
