Adam Kawa

Data Engineer @ Spotify

Apache Hadoop

In Theory And Practice

Get insights to offer a better product

More data usually beats better algorithms

Get insights to make better decisions

Avoid guesstimates

Gain a competitive advantage

Why Data?

Store data reliably

Analyze data quickly

Cost-effective way

Use an expressive, high-level language

What Is Challenging?

A big system of machines, not a big machine

Failures will happen

Move computation to data, not data to computation

Write complex code only once, but right

Fundamental Ideas

A system of multiple animals

Open-source Java software

Storing and processing of very large data sets

Clusters of commodity machines

A simple programming model

Apache Hadoop

Two main components:

HDFS - a distributed file system

MapReduce - a distributed processing layer

Apache Hadoop

Many other tools belong to Apache Hadoop Ecosystem

Component

HDFS

Store large datasets in a distributed, scalable and fault-tolerant way

High throughput

Very large files

Streaming reads and writes (no edits)

Write once, read many times

The Purpose Of HDFS

It is like a big truck

to move heavy stuff

(not a Ferrari)

Do NOT use, if you have

Low-latency requests

Random reads and writes

Lots of small files

Then it is better to consider

RDBMS,

File servers,

HBase or Cassandra...

HDFS Mis-Usage

Split a very large file into smaller (but still large) blocks

Store them redundantly on a set of machines

Splitting Files And Replicating Blocks

The default block size is 64MB

Minimize the overhead of a disk seek operation (less than 1%)

A file is just sliced into chunks after each 64MB (or so)

It does NOT matter whether it is text/binary, compressed or not

It does matter later (when reading the data)

Splitting Files Into Blocks

Today, 128MB or 256MB is recommended
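
The slicing described on this slide is purely byte-based, so it can be sketched in a few lines. This is only an illustration: `split_into_blocks` is a hypothetical helper, not an HDFS API, and the 64MB default is the value quoted on the slide.

```python
MB = 1024 * 1024

def split_into_blocks(file_size, block_size=64 * MB):
    """Return (offset, length) pairs, one per block, for a file of file_size bytes."""
    blocks = []
    offset = 0
    while offset < file_size:
        # The last block is simply shorter; it is not padded to block_size.
        length = min(block_size, file_size - offset)
        blocks.append((offset, length))
        offset += length
    return blocks

# A 200MB file becomes three full 64MB blocks plus one 8MB block.
blocks = split_into_blocks(200 * MB)
print(len(blocks), blocks[-1][1] // MB)
```

The content of the file never enters the calculation, which is why it does not matter at write time whether the data is text, binary or compressed.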

The default replication factor is 3

It can be changed per file or per directory

It can be increased for hot datasets (temporarily or permanently)

Trade-off between

Reliability, availability, performance

Disk space

Replicating Blocks

The Master node keeps and manages all metadata information

The Slave nodes store blocks of data and serve them to the client

Master And Slaves

Master node (called NameNode)

Slave nodes (called DataNodes)

*no NameNode HA, no HDFS Replication

Classical* HDFS Cluster

Manages metadata

Does some housekeeping operations for NameNode

Stores and retrieves blocks of data

Performs all the metadata-related operations

Keeps information in RAM (for fast lookup)

The filesystem tree

Metadata for all files/directories (e.g. ownership, permissions)

Names and locations of blocks

Metadata (not all) is additionally stored on disk(s) (for reliability)

The filesystem snapshot (fsimage) + editlog (edits) files

HDFS NameNode

Stores and retrieves blocks of data

Data is stored as regular files on a local filesystem (e.g. ext4)

e.g. blk_-992391354910561645 (+ checksums in a separate file)

A block itself does not know which file it belongs to!

Sends a heartbeat message to the NN to say that it is still alive

Sends a block report to the NN periodically

HDFS DataNode

NOT a failover NameNode

Periodically merges a prior snapshot (fsimage) and editlog(s) (edits)

Fetches current fsimage and edits files from the NameNode

Applies edits to fsimage to create the up-to-date fsimage

Then sends the up-to-date fsimage back to the NameNode

We can configure the frequency of this operation

Reduces the NameNode startup time

Prevents edits from becoming too large

HDFS Secondary NameNode
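
The checkpoint step (apply edits to fsimage to get an up-to-date fsimage) can be illustrated with a toy model. This is a sketch under strong assumptions: the snapshot is a plain dict and the edit log is a list of made-up `(operation, path, value)` tuples, which is not the real on-disk format.

```python
def apply_edits(fsimage, edits):
    """Replay edit-log operations on top of a snapshot and return the new snapshot."""
    image = dict(fsimage)  # work on a copy, the old snapshot stays untouched
    for op, path, value in edits:
        if op == "create":
            image[path] = value
        elif op == "delete":
            image.pop(path, None)
        elif op == "rename":
            image[value] = image.pop(path)  # value holds the new path
        else:
            raise ValueError(f"unknown operation: {op}")
    return image

fsimage = {"/logs/a.txt": {"owner": "kawaa"}}
edits = [
    ("create", "/logs/b.txt", {"owner": "kawaa"}),
    ("rename", "/logs/a.txt", "/logs/old.txt"),
    ("delete", "/logs/b.txt", None),
]
checkpoint = apply_edits(fsimage, edits)
```

After the merge the edit list can start empty again, which is what keeps it from growing without bound and shortens the replay needed at NameNode startup.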

hadoop fs -ls -R /user/kawaa

hadoop fs -cat /toplist/2013-05-15/poland.txt | less

hadoop fs -put logs.txt /incoming/logs/user

hadoop fs -count /toplist

hadoop fs -chown kawaa:kawaa /toplist/2013-05-15/poland.avro

Example HDFS Commands

It is distributed, but it gives you a beautiful abstraction!

Block data is never sent through the NameNode

The NameNode redirects a client to an appropriate DataNode

The NameNode chooses a DataNode that is as close as possible

Reading A File From HDFS

$ hadoop fs -cat /toplist/2013-05-15/poland.txt

Block locations

Lots of data comes from DataNodes to a client

Network topology defined by an administrator in a supplied script

Converts an IP address into a path to a rack (e.g. /dc1/rack1)

A path is used to calculate distance between nodes

HDFS Network Topology

Image source: Hadoop: The Definitive Guide by Tom White
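
One way to turn such paths into a distance is to count the hops up to the closest common ancestor and back down. The sketch below assumes node paths of the form `/dc/rack/node`; the function name and the node names are hypothetical.

```python
def distance(path_a, path_b):
    """Number of tree hops between two nodes given as /dc/rack/node paths."""
    a = path_a.strip("/").split("/")
    b = path_b.strip("/").split("/")
    common = 0
    for x, y in zip(a, b):
        if x != y:
            break
        common += 1
    # Hops from each node up to the closest common ancestor.
    return (len(a) - common) + (len(b) - common)

print(distance("/dc1/rack1/n1", "/dc1/rack1/n1"))  # same node
print(distance("/dc1/rack1/n1", "/dc1/rack1/n2"))  # same rack
print(distance("/dc1/rack1/n1", "/dc1/rack2/n3"))  # same data center
print(distance("/dc1/rack1/n1", "/dc2/rack5/n9"))  # different data centers
```

With three-level paths this yields 0, 2, 4 and 6 for the four cases, so "as close as possible" simply means the smallest value.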

Pluggable (default in BlockPlacementPolicyDefault.java)

1st replica on the same node where a writer is located

Otherwise a random (but not too busy or almost full) node is used

2nd and the 3rd replicas on two different nodes in a different rack

The rest are placed on random nodes

No DataNode with more than one replica of any block

No rack with more than two replicas of the same block (if possible)

HDFS Block Placement
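
The rules on this slide can be approximated as follows. This is a simplified sketch, not the logic of BlockPlacementPolicyDefault.java: `choose_targets` and the node names are hypothetical, the "too busy or almost full" checks are omitted, and the two-replicas-per-rack limit is not enforced for replicas beyond the third.

```python
import random

def choose_targets(writer, nodes, replication=3, rng=random):
    """Pick DataNodes for one block.

    nodes maps a node name to its rack path; writer is the client's node
    name (or None when the client runs outside the cluster).
    """
    # 1st replica: the writer's own node, otherwise a random node.
    first = writer if writer in nodes else rng.choice(sorted(nodes))
    targets = [first]
    # 2nd and 3rd replicas: two different nodes in one other rack.
    remote_racks = sorted({r for r in nodes.values() if r != nodes[first]})
    if replication >= 2 and remote_racks:
        rack = rng.choice(remote_racks)
        candidates = sorted(n for n, r in nodes.items() if r == rack)
        targets += rng.sample(candidates, min(replication - 1, 2, len(candidates)))
    # Remaining replicas: random nodes that do not hold this block yet.
    rest = sorted(n for n in nodes if n not in targets)
    targets += rng.sample(rest, max(0, min(replication - len(targets), len(rest))))
    return targets

nodes = {
    "n1": "/dc1/rack1", "n2": "/dc1/rack1",
    "n3": "/dc1/rack2", "n4": "/dc1/rack2",
    "n5": "/dc1/rack3", "n6": "/dc1/rack3",
}
targets = choose_targets("n1", nodes, rng=random.Random(0))
```

Because every target is drawn from nodes not yet chosen, no DataNode ever receives more than one replica of the block.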

Moves blocks from over-utilized DNs to under-utilized DNs

Stops when HDFS is balanced

Maintains the block placement policy

HDFS Balancer

the utilization of every DN differs from the utilization of the cluster by no more than a given threshold
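
The definition of "balanced" can be written down directly. The sketch below is an assumption-laden illustration: `classify` is a hypothetical helper, utilization is computed as used bytes over capacity, and the threshold of 10 percentage points is only an example value.

```python
def classify(datanodes, threshold=10.0):
    """datanodes maps name -> (used_bytes, capacity_bytes).

    Returns the cluster utilization (%) and the over- and under-utilized DNs.
    """
    used = sum(u for u, _ in datanodes.values())
    capacity = sum(c for _, c in datanodes.values())
    cluster_util = 100.0 * used / capacity
    over, under = [], []
    for name, (u, c) in sorted(datanodes.items()):
        util = 100.0 * u / c
        if util > cluster_util + threshold:
            over.append(name)
        elif util < cluster_util - threshold:
            under.append(name)
    return cluster_util, over, under

cluster_util, over, under = classify(
    {"dn1": (90, 100), "dn2": (50, 100), "dn3": (10, 100)}
)
```

The cluster counts as balanced once both lists are empty; until then blocks would be moved from the `over` nodes to the `under` nodes.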

Questions

HDFS

Question

Why does a block itself NOT know

which file it belongs to?

HDFS Block

Answer

Design decision: simplicity, performance

Filename, permissions, ownership etc. might change

It would require updating all block replicas that belong to a file

HDFS Block

Question

Why does the NN NOT store information

about block locations on disk?

HDFS Metadata

Answer

Design decision: simplicity

They are sent by DataNodes as block reports periodically

Locations of block replicas may change over time

A change in IP address or hostname of DataNode

Balancing the cluster (moving blocks between nodes)

Moving disks between servers (e.g. failure of a motherboard)

HDFS Metadata

Question

How many files represent a block replica in HDFS?

HDFS Replication

Answer

Actually, two files:

The first file for data itself

The second file for the block's metadata

Checksums for the block data

The block's generation stamp

HDFS Replication

by default less than 1% of the actual data

Question

Why does the default block placement strategy NOT take the disk

space utilization (%) into account?

HDFS Block Placement

It only checks if a node

a) has enough disk space to write a block, and

b) does not serve too many clients ...

Answer

Some DataNodes might become overloaded by incoming data

e.g. a newly added node to the cluster

HDFS Block Placement

Facts

HDFS

Runs on the top of a native file system (e.g. ext3, ext4, xfs)

HDFS is simply a Java application that uses a native file system

HDFS And Local File System

HDFS detects corrupted blocks

When writing

Client computes the checksums for each block

Client sends checksums to a DN together with data

When reading

Client verifies the checksums when reading a block

If verification fails, NN is notified about the corrupt replica

Then a DN fetches a different replica from another DN

HDFS Data Integrity
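
The write-time and read-time checks can be sketched with a per-chunk CRC. The details here are assumptions made for the example: one CRC-32 (via Python's `zlib.crc32`) per 512-byte chunk, and the helper names `checksums` and `verify` are hypothetical.

```python
import zlib

BYTES_PER_CHECKSUM = 512  # assumed chunk size for this sketch

def checksums(block: bytes):
    """Writer side: one CRC-32 value per chunk of the block."""
    return [
        zlib.crc32(block[i:i + BYTES_PER_CHECKSUM])
        for i in range(0, len(block), BYTES_PER_CHECKSUM)
    ]

def verify(block: bytes, expected):
    """Reader side: recompute the checksums and compare with the stored ones."""
    return checksums(block) == expected

data = bytes(2048)                 # four chunks of zero bytes
stored = checksums(data)           # kept next to the block in a separate file
corrupted = b"\x01" + data[1:]     # a single damaged byte

print(verify(data, stored), verify(corrupted, stored))
```

Under these assumptions the checksum data amounts to 4 bytes per 512 bytes of block data, roughly 0.8%.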

Stats based on Yahoo! Clusters

An average file ≈ 1.5 blocks (block size = 128 MB)

An average file ≈ 600 bytes in RAM (1 file object and 2 block objects)

100M files ≈ 60 GB of metadata

1 GB of metadata ≈ 1 PB of physical storage (but usually less*)

*Sadly, based on practical observations, the block to file ratio tends to decrease during the lifetime of the cluster

Dekel Tankel, Yahoo!

HDFS NameNode Scalability
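
The arithmetic behind these figures can be reproduced from the per-file numbers on the slide. The second function additionally assumes that every block is full and that the replication factor is 3; both are assumptions of this sketch, which makes the result an upper bound.

```python
BYTES_PER_FILE = 600  # 1 file object + 2 block objects, from the slide

def metadata_bytes(num_files, bytes_per_file=BYTES_PER_FILE):
    """NameNode RAM needed to hold the metadata of num_files average files."""
    return num_files * bytes_per_file

def physical_storage_bytes(metadata, blocks_per_file=1.5,
                           block_size=128 * 2**20, replication=3):
    """Upper bound on raw disk space described by `metadata` bytes of RAM,
    assuming every block is completely full."""
    files = metadata / BYTES_PER_FILE
    return files * blocks_per_file * block_size * replication

print(metadata_bytes(100_000_000) / 10**9)     # GB of metadata for 100M files
print(physical_storage_bytes(10**9) / 10**15)  # PB of storage per GB of metadata
```

Real blocks are often only partly filled, which is one reason the slide adds "but usually less".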

Read/write operations throughput limited by one machine

~120K read ops/sec

~6K write ops/sec

MapReduce tasks are also HDFS clients

Internal load increases as the cluster grows

More block reports and heartbeats from DataNodes

More MapReduce tasks sending requests

Bigger snapshots transferred from/to Secondary NameNode

HDFS NameNode Performance

Single NameNode

Keeps all metadata in RAM

Performs all metadata operations

Becomes a single point of failure (SPOF)

HDFS Main Limitations

Introduce multiple NameNodes in the form of:

HDFS Federation

HDFS High Availability (HA)

HDFS Main Improvements

Find More:

http://slidesha.re/15zZlet

In practice

HDFS

Problem

DataNode cannot start on a server for some reason

Usually it means some kind of disk failure

$ ls /disk/hd12/

ls: reading directory /disk/hd12/: Input/output error

org.apache.hadoop.util.DiskChecker$DiskErrorException:

Too many failed volumes - current valid volumes: 11,

volumes configured: 12, volumes failed: 1, Volume failures tolerated: 0

org.apache.hadoop.hdfs.server.datanode.DataNode: SHUTDOWN_MSG:

Increase dfs.datanode.failed.volumes.tolerated

to avoid expensive block replication when a disk fails

(and just monitor failed disks)

It was exciting to see stuff breaking!

In practice

HDFS

Problem

A user cannot run resource-intensive Hive queries

It happened immediately after expanding the cluster

Description

The queries are valid

The queries are resource-intensive

The queries run successfully on a small dataset

But they fail on a large dataset

Surprisingly, they run successfully through other user accounts

The user has the right permissions to HDFS directories and Hive tables

The NameNode is throwing thousands of warnings and exceptions

14592 times in only 8 min (4768/min at peak)

Normally

Hadoop is a very trusting elephant

The username comes from the client machine (and is not verified)

The groupname is resolved on the NameNode server

Using the shell command ''id -Gn <username>''

If a user does not have an account on the NameNode server

An ExitCodeException is thrown

Possible Fixes

Create a user account on the NameNode server (dirty, insecure)

Use AD/LDAP for user-group resolution

hadoop.security.group.mapping.ldap.* settings

If you also need full authentication, deploy Kerberos

Our Fix

We decided to use LDAP for user-group resolution

However, LDAP settings in Hadoop did not work for us

Because posixGroup is not a supported filter group class in

hadoop.security.group.mapping.ldap.search.filter.group

We found a workaround using nsswitch.conf

Lesson Learned

Know who is going to use your cluster

Know who is abusing the cluster (HDFS access and MR jobs)

Parse the NameNode logs regularly

Look for FATAL, ERROR, Exception messages

Especially before and after expanding the cluster
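
A regular log scan of this kind could look roughly like the sketch below. The helper name `count_problems` and the sample log lines are made up for illustration; real NameNode log lines have a different layout.

```python
import re
from collections import Counter

# Either a log level worth attention or any word ending in "Exception".
PATTERN = re.compile(r"\b(FATAL|ERROR)\b|(\w*Exception)")

def count_problems(lines):
    """Count FATAL/ERROR levels and exception names across log lines."""
    counts = Counter()
    for line in lines:
        for level, exception in PATTERN.findall(line):
            counts[level or exception] += 1
    return counts

sample = [  # hypothetical log lines
    "INFO namenode: all good",
    "ERROR security: ExitCodeException: id: bob: No such user",
    "ERROR security: ExitCodeException: id: bob: No such user",
    "FATAL namenode: disk failure",
]
counts = count_problems(sample)
print(counts.most_common())
```

Comparing such counts before and after a change, for example a cluster expansion, makes a sudden spike easy to spot.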

Component

MapReduce

Programming model inspired by functional programming

map() and reduce() functions processing <key, value> pairs

Useful for processing very large datasets in a distributed way

Simple, but very expressive

MapReduce Model

Map And Reduce Functions

Map And Reduce Functions - Counting Words

MapReduce Job

Input data is divided into splits and converted into <key, value> pairs

Invokes map() function multiple times

Keys are sorted, values not (but could be)

Invokes reduce() function multiple times

MapReduce Example: ArtistCount

Artist, Song, Timestamp, User

Key is the offset of the line from the beginning of the file

We could specify which artist goes to which reducer

(HashPartitioner is the default one)

map(Integer key, EndSong value, Context context):
    context.write(value.artist, 1)

reduce(String key, Iterator<Integer> values, Context context):
    int count = 0
    for each v in values:
        count += v
    context.write(key, count)

MapReduce Example: ArtistCount

Pseudo-code in a non-existing language ;)
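
For readers who prefer something executable, the same job can be simulated in a single Python process. This is only a sketch of the model, not Hadoop code: `run_job` is a hypothetical driver, the record index stands in for the byte offset, and the sample records are invented.

```python
from collections import defaultdict

def map_fn(offset, record):
    """Emit <artist, 1> for every played song."""
    artist, song, timestamp, user = record
    yield artist, 1

def reduce_fn(artist, counts):
    """Sum all the ones emitted for a single artist."""
    yield artist, sum(counts)

def run_job(records):
    # Map phase, followed by grouping the values by key (the "shuffle").
    groups = defaultdict(list)
    for offset, record in enumerate(records):
        for key, value in map_fn(offset, record):
            groups[key].append(value)
    # Reduce phase: keys are visited in sorted order, one call per key.
    output = []
    for key in sorted(groups):
        output.extend(reduce_fn(key, groups[key]))
    return output

records = [  # hypothetical input: Artist, Song, Timestamp, User
    ("artist_b", "song_1", 1, "user_1"),
    ("artist_a", "song_2", 2, "user_2"),
    ("artist_a", "song_3", 3, "user_1"),
]
result = run_job(records)
print(result)
```

In a real cluster the map and reduce calls run on different machines; only the two small functions at the top are what a programmer would write.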

MapReduce Combiner

Make sure that the Combiner

combines fast and enough

(otherwise it only adds overhead)

Data Locality in HDFS and MapReduce

Ideally, Mapper code is sent to a node that has the replica of this block

By default, three replicas should be available somewhere on the cluster

Batch processing system

Automatic parallelization and distribution of computation

Fault-tolerance

Deals with all messy details related to distributed processing

Relatively easy to use for programmers

Java API, Streaming (Python, Perl, Ruby ...)

Apache Pig, Apache Hive, (S)Crunch

Status and monitoring tools

MapReduce Implementation

Classical MapReduce Daemons

Keeps track of TTs, schedules jobs and task executions

Runs map and reduce tasks, reports to JobTracker

Manages the computational resources

Available TaskTrackers, map and reduce slots

Schedules all user jobs

Schedules all tasks that belong to a job

Monitors task executions

Restarts failed tasks and speculatively runs slow ones

Calculates job counter totals

JobTracker Responsibilities

Runs map and reduce tasks

Reports to JobTracker

Heartbeats saying that it is still alive

Number of free map and reduce slots

Task progress, status, counters etc

TaskTracker Responsibilities

It can consist of 1, 5, 100 or 4000 nodes

Apache Hadoop Cluster

MapReduce Job Submission

Image source: Hadoop: The Definitive Guide by Tom White

Tasks are started in a separate JVM to isolate user code from Hadoop code

They are copied with a higher replication factor (by default, 10)

MapReduce: Sort And Shuffle Phase

Other map tasks

Other reduce tasks

Map phase, Reduce phase

Image source: Hadoop: The Definitive Guide by Tom White

Specifies which Reducer should get a given <key, value> pair

Aim for an even distribution of the intermediate data

Skewed data may overload a single reducer

And make a job run much longer

MapReduce: Partitioner
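
A hash-based partitioner and the effect of skew can be sketched as follows. `zlib.crc32` is used here only as a stand-in hash that is stable across runs (Hadoop's HashPartitioner relies on the key's Java `hashCode()`); the helper names and the sample keys are hypothetical.

```python
import zlib
from collections import Counter

def partition(key, num_reducers):
    """Map a key to a reducer index; equal keys always land on the same reducer."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers

def reducer_load(keys, num_reducers):
    """Count how many <key, value> pairs each reducer would receive."""
    return Counter(partition(k, num_reducers) for k in keys)

# 98 pairs share one very popular key, so one reducer gets nearly everything.
keys = ["hot_artist"] * 98 + ["artist_a", "artist_b"]
load = reducer_load(keys, num_reducers=4)
print(sorted(load.values(), reverse=True))
```

No choice of hash function fixes this kind of skew, because all pairs with the same key must reach the same reducer; a combiner or a custom partitioning scheme is the usual remedy.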

Scheduling a redundant copy of the remaining, long-running task

The output from the one that finishes first is used

The other one is killed, since it is no longer needed

An optimization, not a feature to make jobs run more reliably

Speculative Execution

Enable if tasks often experience external problems, e.g. hardware degradation (disk, network card), system problems, memory unavailability...

Otherwise

Speculative execution can reduce overall throughput

Redundant tasks run with similar speed as non-redundant ones

Might help one job, all the others have to wait longer for slots

Redundantly running reduce tasks will

transfer over the network all intermediate data

write their output redundantly (for a moment) to a directory in HDFS

Speculative Execution

Facts

MapReduce

Very customizable

Input/Output Format, Record Reader/Writer,

Partitioner, Writable, Comparator

Unit testing with MRUnit

HPROF profiler can give a lot of insights

Reuse objects (especially keys and values) when possible

Split String efficiently, e.g. StringUtils instead of String.split

More about Hadoop Java API

http://slidesha.re/1c50IPk

Java API

Tons of configuration parameters

Input split size (~implies the number of map tasks)

Number of reduce tasks

Available memory for tasks

Compression settings

Combiner

Partitioner

and more...

MapReduce Job Configuration

Questions

MapReduce

Question

Why is each line in a text file, by default, converted to

<offset, line> instead of <line_number, line>?

MapReduce Input <Key, Value> Pairs

Answer

If your lines are not fixed-width,

you need to read the file from the beginning to the end

to find the line_number of each line (thus it cannot be parallelized).

MapReduce Input <Key, Value> Pairs
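
The point becomes concrete when a reader starts in the middle of a file: the byte offset of each line is known immediately, while the line number is not. The sketch below is a simplified reader written for this explanation (`read_split` is a hypothetical name); it skips a partial first line and reads one line past its end so that adjacent splits neither lose nor duplicate lines.

```python
def read_split(data: bytes, start: int, end: int):
    """Yield (offset, line) for the lines that belong to the split [start, end)."""
    pos = start
    if start != 0:
        # Skip the first (possibly partial) line; the previous split reads it.
        newline = data.find(b"\n", pos)
        pos = len(data) if newline == -1 else newline + 1
    while pos <= end and pos < len(data):
        newline = data.find(b"\n", pos)
        line_end = len(data) if newline == -1 else newline
        yield pos, data[pos:line_end].decode()
        pos = line_end + 1

data = b"aa\nbbbb\nc\ndd\n"
first = list(read_split(data, 0, 5))           # could run on one machine
second = list(read_split(data, 5, len(data)))  # could run on another machine
print(first, second)
```

Neither call needs to know how many lines precede its split, so the two splits can be processed independently.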

In practice

MapReduce

I noticed that a bottleneck seems to be coming from the map tasks.

Is there any reason that we can't open any of the allocated reduce slots

to map tasks?

Regards,

Chris

How to hard-code the number of map and reduce slots efficiently?

We initially started with 60/40

But today we are closer to something like 70/30

Occupied Map And Reduce Slots

Time Spent In Occupied Slots

This may change again soon ...

We are currently introducing a new feature to Luigi

Automatic settings of

Maximum input split size (~implies the number of map tasks)

Number of reduce tasks

More settings soon (e.g. size of map output buffer)

The goal is for each task to run 5-15 minutes on average

Because even a perfect manual setting may become outdated because input size grows

The current PoC ;)

It should help in extreme cases

short-living maps

short-living and long-living reduces

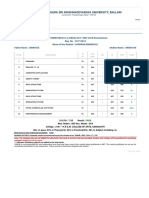

| type | # map | # reduce | avg map time | avg reduce time | job execution time |
|---|---|---|---|---|---|
| old_1 | 4826 | 25 | 46sec | 1hrs, 52mins, 14sec | 2hrs, 52mins, 16sec |
| new_1 | 391 | 294 | 4mins, 46sec | 8mins, 24sec | 23mins, 12sec |
| old_2 | 4936 | 800 | 7mins, 30sec | 22mins, 18sec | 5hrs, 20mins, 1sec |
| new_2 | 4936 | 1893 | 8mins, 52sec | 7mins, 35sec | 1hrs, 18mins, 29sec |
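
The improvement in job execution time can be computed from the durations reported above. The parsing helper is a hypothetical convenience written for this duration format only.

```python
import re

UNIT_SECONDS = {"hrs": 3600, "mins": 60, "sec": 1}

def to_seconds(text):
    """Convert strings such as '2hrs, 52mins, 16sec' to seconds."""
    return sum(
        int(number) * UNIT_SECONDS[unit]
        for number, unit in re.findall(r"(\d+)(hrs|mins|sec)", text)
    )

def speedup(old, new):
    """How many times faster the new configuration finished."""
    return to_seconds(old) / to_seconds(new)

print(round(speedup("2hrs, 52mins, 16sec", "23mins, 12sec"), 1))       # job 1
print(round(speedup("5hrs, 20mins, 1sec", "1hrs, 18mins, 29sec"), 1))  # job 2
```

With these inputs the first job finishes roughly 7.4 times faster and the second roughly 4.1 times faster.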

In practice

MapReduce

Problem

Surprisingly, Hive queries are running extremely long

Thousands of tasks are constantly being killed

Only 1 task failed, 2x more tasks were killed than were completed

Logs show that the JobTracker gets a request to kill the tasks

Who can actually send a kill request?

User (using e.g. mapred job -kill-task)

JobTracker (a speculative duplicate, or when a whole job fails)

Fair Scheduler

Diplomatically, it's called preemption

Key Observations

Killed tasks came from ad-hoc and resource-intensive Hive queries

Tasks are usually killed quickly after they start

Surviving tasks are running fine for a long time

Hive queries are running in their own Fair Scheduler pool

Eureka!

FairScheduler has a license to kill!

Preempt the newest tasks

in an over-share pool

to forcibly make some room

for starving pools
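
The preemption rule can be illustrated with a toy model. This is a deliberately simplified sketch and not the Fair Scheduler's implementation: it ignores minimum shares and preemption timeouts, and `pick_tasks_to_preempt`, the pool names and the task ids are all hypothetical.

```python
def pick_tasks_to_preempt(pools, slots_needed):
    """Choose tasks to kill so that starving pools can get slots.

    pools maps a pool name to {"fair_share": int, "tasks": [(task_id, start_time), ...]}.
    """
    victims = []
    for name, pool in sorted(pools.items()):
        over = len(pool["tasks"]) - pool["fair_share"]
        if over <= 0:
            continue  # pools at or under their share are never touched
        # Newest tasks first: they have done the least work so far.
        newest_first = sorted(pool["tasks"], key=lambda t: t[1], reverse=True)
        for task_id, _ in newest_first[:over]:
            if len(victims) == slots_needed:
                return victims
            victims.append(task_id)
    return victims

pools = {
    "hive": {"fair_share": 2, "tasks": [("t1", 10), ("t2", 20), ("t3", 30), ("t4", 40)]},
    "etl": {"fair_share": 4, "tasks": [("e1", 5)]},
}
victims = pick_tasks_to_preempt(pools, slots_needed=3)
print(victims)
```

Even though three slots are requested, only the two tasks above the hive pool's share are chosen, and they are the ones that started most recently, which matches the observation that tasks are usually killed shortly after they start.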

Hive pool was running over its minimum and fair shares

Other pools were running under their minimum and fair shares

So that

Fair Scheduler was (legally) killing Hive tasks from time to time

Fair Scheduler can kill to be KIND...

Possible Fixes

Disable the preemption

Tune minimum shares based on your workload

Tune preemption timeouts based on your workload

Limit the number of map/reduce tasks in a pool

Limit the number of jobs in a pool

Switch to Capacity Scheduler

Lessons Learned

A scheduler should NOT be treated as a ''black box''

It is so easy to implement a long-running Hive query

More About

Hadoop Adventures

http://slidesha.re/1ctbTHT

In reality

Hadoop is fun!

Questions?

Stockholm and Sweden

Check out spotify.com/jobs or @Spotifyjobs

for more information

kawaa@spotify.com

HakunaMapData.com

Want to join the band?

Thank you!
