Robt 205 Signals and Sensing with Lab Lab Manual Fall 2014

Lab 06 Sampling and FIR filtering

Lab Report: You need to write a lab report for all parts of this session (starting from Section 4.3). Include all code, resulting figures, and answers to the questions in your lab report.

1. Objectives

a. To become familiar with digital image manipulation in Matlab

b. To investigate sampling, aliasing and reconstruction principles

c. To investigate convolution and FIR filtering

2. Equipment

a. Personal Computer with the Windows 7 Operating System

b. Matlab and SPFirst toolbox

3. Introduction

The objective in the first part of this lab is to introduce digital images as a useful signal type. We will

show how the A-to-D sampling and the D-to-A reconstruction processes are carried out for digital

images. In particular, we will show a commonly used method of image zooming (reconstruction) that gives poor results; a later lab will revisit this issue and do a better job.

In the second part of this lab, you will learn how to implement FIR filters in MATLAB, and then study the

response of FIR filters to various signals, and speech. As a result, you should learn how filters can create

interesting effects such as echoes. In addition, we will use FIR filters to study the convolution operation

and properties such as linearity and time-invariance.

In the experiments of this lab, you will use firfilt(), or conv(), to implement 1-D filters and

conv2() to implement two-dimensional (2-D) filters. The 2-D filtering operation actually consists of

1-D filters applied to all the rows of the image and then all the columns.

4. Digital images

In this lab we introduce digital images as a signal type for studying the effect of sampling, aliasing and

reconstruction. An image can be represented as a function $x(t_1, t_2)$ of two continuous variables representing the horizontal ($t_2$) and vertical ($t_1$) coordinates of a point in space¹. For monochrome images, the signal $x(t_1, t_2)$ would be a scalar function of the two spatial variables, but for color images the function $x(\cdot, \cdot)$ would have to be a vector-valued function of the two variables². Moving images (such as TV) would add a time variable to the two spatial variables.

Monochrome images are displayed using black and white and shades of gray, so they are called grayscale

images. In this lab we will consider only sampled gray-scale still images. A sampled gray-scale still

image would be represented as a two-dimensional array of numbers of the form

$$x[m, n] = x(mT_1, nT_2), \qquad 1 \le m \le M \ \text{and} \ 1 \le n \le N$$

where $T_1$ and $T_2$ are the sample spacings in the vertical and horizontal directions, respectively. Typical values of M and N are 256 or 512; e.g., a 512 × 512 image, which has nearly the same resolution as a standard TV image. In MATLAB we can represent an image as a matrix, so it would consist of M rows and N columns. The matrix entry at (m, n) is the sample value x[m, n], called a pixel (short for picture element).

An important property of light images such as photographs and TV pictures is that their values are always non-negative and finite in magnitude; i.e.,

$$0 \le x[m, n] \le X_{\max} < \infty$$

This is because light images are formed by measuring the intensity of reflected or emitted light, which must always be a positive finite quantity. When stored in a computer or displayed on a monitor, the values of x[m, n] have to be scaled relative to a maximum value $X_{\max}$. Usually an eight-bit integer representation is used. With 8-bit integers, the maximum value (in the computer) would be $X_{\max} = 2^8 - 1 = 255$, and there would be $2^8 = 256$ different gray levels for the display, from 0 to 255.

¹ The variables $t_1$ and $t_2$ do not denote time; they represent spatial dimensions. Thus, their units would be inches or some other unit of length.

² For example, an RGB color system needs three values at each spatial location: one for red, one for green and one for blue.

4.1. Displaying Images

As you will discover, the correct display of an image on a gray-scale monitor can be tricky, especially after some processing has been performed on the image. We have provided the function show_img.m in the SP First Toolbox to handle most of these problems³, but it will be helpful if the following points are noted:

1. All image values must be non-negative for the purposes of display. Filtering may introduce negative

values, especially if differencing is used (e.g., a high-pass filter).

2. The default format for most gray-scale displays is eight bits, so the pixel values x[m, n] in the image must be converted to integers in the range $0 \le x[m, n] \le 255 = 2^8 - 1$.

3. The actual display on the monitor is created with the show_img function⁴. The show_img

function will handle the color map and the true size of the image. The appearance of the image can

be altered by running the pixel values through a color map. In our case, we want grayscale

display where all three primary colors (red, green and blue, or RGB) are used equally, creating what

is called a gray map. In MATLAB the gray color map is set up via

colormap(gray(256))

which gives a 256 × 3 matrix where all 3 columns are equal. The function colormap(gray(256))

creates a linear mapping, so that each input pixel amplitude is rendered with a screen intensity

proportional to its value (assuming the monitor is calibrated). For our lab experiments, non-linear

color mappings would introduce an extra level of complication, so they will not be used.

4. When the image values lie outside the range [0,255], or when the image is scaled so that it only occupies a small portion of the range [0,255], the display may have poor quality. In this lab, we will use show_img.m to automatically rescale the image. This requires a linear mapping of the pixel values⁵:

$$x_s[m, n] = \mu\, x[m, n] + \beta$$

The scaling constants $\mu$ and $\beta$ can be derived from the min and max values of the image, so that all pixel values are recomputed via:

$$x_s[m, n] = \left\lfloor 255.999 \left( \frac{x[m, n] - x_{\min}}{x_{\max} - x_{\min}} \right) \right\rfloor$$

where $\lfloor x \rfloor$ is the floor function, i.e., the greatest integer less than or equal to x.
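The rescaling formula in item 4 can be tried on a small matrix. The following is a minimal sketch, not the source code of show_img; the toy matrix x and the variable names are assumptions chosen for illustration, and the sketch assumes the image is not constant (otherwise the denominator would be zero).

```matlab
% Sketch of the linear rescaling in item 4 (not the show_img implementation).
x = [-0.5 0.0; 0.25 1.5];      % toy "image" that contains a negative value
xmin = min(x(:));              % smallest pixel value
xmax = max(x(:));              % largest pixel value (assumed different from xmin)
xs = floor(255.999 * (x - xmin) / (xmax - xmin));   % integers in the range 0..255
disp(xs)
```

The smallest input value maps to 0 and the largest to 255, so the full range of gray levels is used regardless of the original scale of x.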

4.2. MATLAB Function to Display Images

You can load the images needed for this lab from *.mat files. Any file with the extension *.mat is in MATLAB format and can be loaded via the load command. To find some of these files, look for *.mat in the SP First toolbox or in the MATLAB directory called toolbox/matlab/demos. Some of the image files are named echart.mat and zone.mat, but there are others within MATLAB's demos. The default size is 256 × 256, but alternate versions are available as 512 × 512 images under names such as zone512.mat. After loading, use the command whos to determine the name of the variable that holds the image and its size.

³ If you have the MATLAB Image Processing Toolbox, then the function imshow.m can be used instead.

⁴ If the MATLAB function imagesc.m is used to display the image, two features will be missing: (1) the color map may be incorrect because it will not default to gray, and (2) the size of the image will not be a true pixel-for-pixel rendition of the image on the computer screen.

⁵ The MATLAB function show_img has an option to perform this scaling while making the image display.

Although MATLAB has several functions for displaying images on the CRT of the computer, we have

written a special function show_img() for this lab. It is the visual equivalent of soundsc(), which

we used when listening to speech and tones; i.e., show_img() is the D-to-C converter for images.

This function handles the scaling of the image values and allows you to open up multiple image display

windows.

Here is the help on show_img:

function [ph] = show_img(img, figno, scaled, map)

%SHOW_IMG display an image with possible scaling

% usage: ph = show_img(img, figno, scaled, map)

% img = input image

% figno = figure number to use for the plot

% if 0, re-use the same figure

% if omitted a new figure will be opened

% optional args:

% scaled = 1 (TRUE) to do auto-scale (DEFAULT)

% not equal to 1 (FALSE) to inhibit scaling

% map = user-specified color map

% ph = figure handle returned to caller

%----

Notice that unless the input parameter figno is specified, a new figure window will be opened.

4.3. Get Test Images

In order to probe your understanding of image display, do the following simple displays:

(a) Load and display the 326 × 426 lighthouse image from lighthouse.mat. This image can be found in the MATLAB files link. The command load lighthouse will put the sampled image into the array ww. Use whos to check the size of ww after loading. When you display the image it might be necessary to set the colormap via colormap(gray(256)).

(b) Use the colon operator to extract the 200th row of the lighthouse image, and make a plot of that row as a 1-D discrete-time signal.

ww200 = ww(200,:);

Observe that the range of signal values is between 0 and 255. Which values represent white and which ones black? Can you identify the region where the 200th row crosses the fence?

4.4. Synthesize a Test Image

In order to probe your understanding of the relationship between MATLAB matrices and image display,

you can generate a synthetic image from a mathematical formula. Include the code, results, and

answers to the questions in your lab report.

a) Generate a simple test image in which all of the columns are identical by using the following outer

product:

xpix = ones(256,1)*cos(2*pi*(0:255)/16);

Display the image and explain the gray-scale pattern that you see. How wide are the bands in number

of pixels? How can you predict that width from the formula for xpix?

b) In the previous part, which data value in xpix is represented by white? Which one by black?

c) Explain how you would produce an image with bands that are horizontal. Give the formula that would create a 400 × 400 image with 5 horizontal black bands separated by white bands. Write the MATLAB code to make this image and display it.

4.5. Printing Multiple Images on One Page

The phrase "what you see is what you get" can be elusive when dealing with images. It is VERY TRICKY to print images so that the hard copy matches exactly what is on the screen, because there is usually some interpolation being done by the printer or by the program that is handling the images. One way to think about this in signal processing terms is that the screen is one kind of D-to-A and the printer is another kind, but they use different (D-to-A) reconstruction methods to get the continuous-domain (analog) output image that you see.

Furthermore, if you try to put two images of different sizes into subplots of the same MATLAB figure, it won't work because MATLAB wants to force them to be the same size. Therefore, you should display your images in separate MATLAB Figure windows. In order to get a printout with MULTIPLE IMAGES ON THE SAME PAGE, use the following procedure:

1. In MATLAB, use show_img and trusize to put your images into separate figure windows at the

correct pixel resolution.

2. Use the Windows program called PAINT to assemble the different images onto one page. This

program can be found under Accessories.

3. For each MATLAB figure window, press ALT and the PRINT-SCREEN key at the same time, which

will copy the active window contents to the clipboard.

4. After each window capture in step 3, paste the clipboard contents into PAINT⁶.

5. Arrange the images so that you can make a comparison for your lab report.

6. Print the assembled images from PAINT to a printer.

4.6. Sampling of Images

Images that are stored in digital form on a computer have to be sampled images because they are stored in an M × N array (i.e., a matrix). The sampling rate in the two spatial dimensions was chosen at the time the image was digitized (in units of samples per inch if the original was a photograph). For example, the image might have been sampled by a scanner where the resolution was chosen to be 300 dpi (dots per inch)⁷. If we want a different sampling rate, we can simulate a lower sampling rate by simply throwing away samples in a periodic way. For example, if every other sample is removed, the sampling rate will be halved (in our example, the 300 dpi image would become a 150 dpi image). Usually this is called sub-sampling or down-sampling⁸.

Down-sampling throws away samples, so it will shrink the size of the image. This is what is done by the

following scheme:

wp = ww(1:p:end,1:p:end);

when we are downsampling by a factor of p.
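To see what this indexing does without needing the lighthouse file, the same scheme can be applied to a synthetic image. This is only a sketch under stated assumptions: the test image ww_test, its spatial frequency of 0.4 cycles/pixel, and the variable alias_err are illustrative choices, not part of the lab data.

```matlab
% Synthetic image: vertical bands at 0.4 cycles/pixel (assumed test value)
f0 = 0.4;
n = 0:99;
ww_test = ones(8,1) * cos(2*pi*f0*n);   % 8 x 100 image, every row identical
p = 2;
wp = ww_test(1:p:end, 1:p:end);         % keep every p-th row and column
disp(size(wp))                          % the image shrinks to 4 x 50

% After down-sampling, the bands sit at p*f0 = 0.8 cycles/sample. That is
% above 0.5, so they are indistinguishable from 1 - 0.8 = 0.2 cycles/sample.
k = 0:size(wp,2)-1;
alias_err = max(abs(wp(1,:) - cos(2*pi*0.2*k)));
disp(alias_err)                         % close to zero (round-off only)
```

The down-sampled rows match a much slower cosine exactly, which is the numerical signature of aliasing: no new values were created, yet the apparent band spacing has changed.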

One potential problem with down-sampling is that aliasing might occur. This can be illustrated in a dramatic fashion with the lighthouse image. Load the lighthouse.mat file, which has the image stored in a variable called ww. When you check the size of the image, you'll find that it is not square. Now down-sample the lighthouse image by a factor of 2. What is the size of the down-sampled image? Notice the aliasing in the down-sampled image, which is surprising since no new values are being created by the down-sampling process. Describe how the aliasing appears visually⁹. Which parts of the image show the aliasing effects most dramatically?

4.7. Lab Exercises: Sampling, Aliasing and Reconstruction

4.7.1. Down-Sampling

For the lighthouse picture, downsampled by two in the previous section:

a) Describe how the aliasing appears visually. Compare the original to the downsampled image.

Which parts of the image show the aliasing effects most dramatically?

b) This part is challenging: explain why the aliasing happens in the lighthouse image by using a "frequency domain" explanation. In other words, estimate the frequency of the features that are being aliased. Give this frequency as a number in cycles per pixel. (Note that the fence provides a sort of "spatial chirp" where the spatial frequency increases from left to right.) Can you relate your frequency estimate to the Sampling Theorem?

You might try zooming in on a very small region of both the original and downsampled images.

⁶ An alternative is to use the free program called IRFANVIEW, which can do image editing and also has screen capture capability. It can be obtained from http://www.irfanview.com/english.htm.

⁷ For this example, the sampling periods would be $T_1 = T_2 = 1/300$ inches.

⁸ The Sampling Theorem applies to digital images, so there is a Nyquist Rate that depends on the maximum spatial frequency in the image.

⁹ One difficulty with showing aliasing is that we must display the pixels of the image exactly. This almost never happens because most monitors and printers will perform some sort of interpolation to adjust the size of the image to match the resolution of the device. In MATLAB we can override these size changes by using the function truesize, which is part of the Image Processing Toolbox. In the SP First Toolbox, an equivalent function called trusize.m is provided.

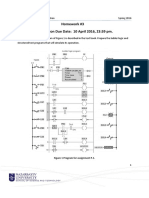

4.7.2. Reconstruction of Images

When an image has been sampled, we can fill in the missing samples by doing interpolation. For images,

this would be analogous to the examples shown in Chapter 4 for sine-wave interpolation which is part of

the reconstruction process in a D-to-A converter. We could use a square pulse or a triangular pulse or

other pulse shapes for the reconstruction.

Figure 1: 2-D Interpolation broken down into row and column operations: the gray dots indicate repeated

data values created by a zero-order hold; or, in the case of linear interpolation, they are the interpolated

values.

For these reconstruction experiments, use the lighthouse image, down-sampled by a factor of 3. You will have to generate this by loading the image from lighthouse.mat, which places it in the array ww (see Section 4.3; use whos to confirm the name). A down-sampled lighthouse image should be created and stored in the variable xx3. The objective will be to reconstruct an approximation to the original lighthouse image, which is 326 × 426, from the smaller down-sampled image.

a) The simplest interpolation would be reconstruction with a square pulse, which produces a zero-order hold. Here is a method that works for a one-dimensional signal (i.e., one row or one column of the image), assuming that we start with a row vector xr1, and the result is the row vector xr1hold.

xr1 = (-2).^(0:6);

L = length(xr1);

nn = ceil((0.999:1:4*L)/4); %<-- Round up to the integer part

xr1hold = xr1(nn);

Plot the vector xr1hold to verify that it is a zero-order hold version derived from xr1. Explain

what values are contained in the indexing vector nn. If xr1hold is treated as an interpolated

version of xr1, then what is the interpolation factor?

b) Now return to the down-sampled lighthouse image, and process all the rows of xx3 to fill

in the missing points. Use the zero-order hold idea from part (a), but do it for an interpolation

factor of 3. Call the result xholdrows. Display xholdrows as an image, and compare it to

the downsampled image xx3; compare the size of the images as well as their content.

c) Now process all the columns of xholdrows to fill in the missing points in each column and

call the result xhold. Compare the result (xhold) to the original image lighthouse.

d) Linear interpolation can be done in MATLAB using the interp1 function (that's "interp-one"). Its default mode is linear interpolation, which is equivalent to using the '*linear' option, but interp1 can also do other types of polynomial interpolation. Here is an example on a 1-D signal:

n1 = 0:6;

xr1 = (-2).^n1;

tti = 0:0.1:6; %-- locations between the n1 indices

xr1linear = interp1(n1,xr1,tti); %-- function is INTERP-ONE

stem(tti,xr1linear)

For the example above, what is the interpolation factor when converting xr1 to xr1linear?

e) In the case of the lighthouse image, you need to carry out a linear interpolation operation on both the rows and columns of the down-sampled image xx3. This requires two calls to the interp1 function, because one call will only process all the columns of a matrix¹⁰. Name the interpolated output image xxlinear. Include your code for this part in the lab report.

f) Compare xxlinear to the original image lighthouse. Comment on the visual appearance

of the reconstructed image versus the original; point out differences and similarities. Can the

reconstruction (i.e., zooming) process remove the aliasing effects from the down-sampled

lighthouse image?

g) Compare the quality of the linear interpolation result to the zero-order hold result. Point out

regions where they differ and try to justify this difference by estimating the local frequency

content. In other words, look for regions of low-frequency content and high-frequency

content and see how the interpolation quality is dependent on this factor.

A couple of questions to think about: Are edges low frequency or high frequency features? Are

the fence posts low frequency or high frequency features? Is the background a low frequency or

high frequency feature?
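The row-then-column mechanics used in parts (b) through (e) can be checked on a tiny matrix before applying them to the lighthouse image. The sketch below is only an illustration under assumed names (xsmall, xhold_test, xlin_test) and an assumed 2 × 2 input; it shows the indexing trick from part (a) applied in both directions, and the transpose between two interp1 calls.

```matlab
xsmall = [1 4; 7 10];                  % toy 2 x 2 "down-sampled image"
p = 3;                                 % interpolation factor
[R, C] = size(xsmall);

% Zero-order hold: repeat each index p times along rows and columns
rows = ceil((0.999:1:p*R)/p);          % 1 1 1 2 2 2
cols = ceil((0.999:1:p*C)/p);          % 1 1 1 2 2 2
xhold_test = xsmall(rows, cols);       % 6 x 6 result

% Linear interpolation: interp1 works down the columns of a matrix,
% so a transpose is used between the two calls
ti_r = linspace(1, R, p*(R-1)+1);      % 1, 4/3, 5/3, 2
ti_c = linspace(1, C, p*(C-1)+1);
tmp = interp1(1:R, xsmall, ti_r);      % interpolate down the columns -> 4 x 2
xlin_test = interp1(1:C, tmp', ti_c)'; % then along the rows -> 4 x 4
disp(xlin_test)
```

Note that the hold version repeats values in blocks, while the linear version ramps between the original samples; neither creates information that the down-sampling discarded.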

5. Sampling, Convolution, and FIR Filtering

The goal of this part of the lab is to learn how to implement FIR filters in MATLAB, and then study the

response of FIR filters to various signals, including images and speech. As a result, you should learn how

filters can create interesting effects such as blurring and echoes. In addition, we will use FIR filters to

study the convolution operation and properties such as linearity and time-invariance.

In the experiments of this lab, you will use firfilt( ), or conv(), to implement 1-D filters and

conv2() to implement two-dimensional (2-D) filters. The 2-D filtering operation actually consists of

1-D filters applied to all the rows of the image and then all the columns.

5.1. Two GUIs

This lab involves the use of two MATLAB GUIs: one for sampling and aliasing and one for convolution.

1. con2dis: GUI for sampling and aliasing. An input sinusoid and its spectrum are tracked through A/D and D/A converters.

2. dconvdemo: GUI for discrete-time convolution. The convolution it performs is exactly the same operation as the one carried out by the MATLAB functions conv() and firfilt() used to implement FIR filters.

5.2. Overview of Filtering

For this lab, we will define an FIR filter as a discrete-time system that converts an input signal x[n] into

an output signal y[n] by means of the weighted summation:

$$y[n] = \sum_{k=0}^{M} b_k\, x[n-k] \qquad (1)$$

Equation (1) gives a rule for computing the nth value of the output sequence from certain values of the input sequence. The filter coefficients $\{b_k\}$ are constants that define the filter's behavior. As an example, consider the system for which the output values are given by

$$y[n] = \tfrac{1}{3}x[n] + \tfrac{1}{3}x[n-1] + \tfrac{1}{3}x[n-2] = \tfrac{1}{3}\{x[n] + x[n-1] + x[n-2]\} \qquad (2)$$

This equation states that the nth value of the output sequence is the average of the nth value of the input sequence x[n] and the two preceding values, $x[n-1]$ and $x[n-2]$. For this example the $b_k$'s are $b_0 = 1/3$, $b_1 = 1/3$, and $b_2 = 1/3$.

¹⁰ Use a matrix transpose in between the interpolation calls. The transpose will turn rows into columns.

MATLAB has a built-in function, filter( ), for implementing the operation in (1), but we have also

supplied another M-file firfilt( ) for the special case of FIR filtering. The function filter

implements a wider class of filters than just the FIR case. Technically speaking, the firfilt function

implements the operation called convolution. The following MATLAB statements implement the three-

point averaging system of (2):

nn = 0:99; %<--Time indices

xx = cos( 0.08*pi*nn ); %<--Input signal

bb = [1/3 1/3 1/3]; %<--Filter coefficients

yy = firfilt(bb, xx); %<--Compute the output

In this case, the input signal xx is a vector containing a cosine function. In general, the vector bb

contains the filter coefficients {bk} needed in (1). These are loaded into the bb vector in the following

way:

bb = [b0, b1, b2, ... , bM].

In MATLAB, all sequences have finite length because they are stored in vectors. If the input signal has, for

example, L samples, we would normally only store the L samples in a vector, and would assume that x[n]

= 0 for n outside the interval of L samples; i.e., we do not have to store any zero samples unless it suits

our purposes. If we process a finite-length signal through (1), then the output sequence y[n] will be longer

than x[n] by M samples. Whenever firfilt( ) implements (1), we will find that

length(yy) = length(xx)+length(bb)-1

In the experiments of this lab, you will use firfilt( ) to implement FIR filters and begin to

understand how the filter coefficients define a digital filtering algorithm. In addition, this lab will

introduce examples to show how a filter reacts to different frequency components in the input.
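The length relationship and the averaging rule in (2) can be verified numerically. The sketch below uses the built-in conv in place of firfilt, on the assumption (consistent with this lab's statement that either function may be used) that both compute the same convolution; the variable y5 is introduced only for the check.

```matlab
nn = 0:99;                      % time indices
xx = cos(0.08*pi*nn);           % input signal, L = 100 samples
bb = [1/3 1/3 1/3];             % three-point averager, M = 2
yy = conv(bb, xx);              % built-in conv, used here instead of firfilt
disp(length(yy))                % length(xx) + length(bb) - 1

% Check one output sample against equation (2): y[5] is stored in yy(6)
% because MATLAB indexing starts at 1
y5 = (xx(6) + xx(5) + xx(4)) / 3;
disp(abs(yy(6) - y5))           % round-off level difference
```

The first and last M output samples are computed with only part of the averaging window overlapping the input, which is why the output is longer than the input by M samples.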

5.3. Run the GUIs

The first objective of this part of the lab is to demonstrate usage of the two GUIs. First of all, you must

download the ZIP files for each and install them. Each one installs as a directory containing a number of

files. You can put the GUIs on the matlabpath, or you can run the GUIs from their home directories.

5.4. Sampling and Aliasing Demo

In this demo, you can change the frequency of an input signal that is a sinusoid, and you can change the

sampling frequency. The GUI will show the sampled signal, x[n], its spectrum and also the reconstructed

output signal, y(t) with its spectrum. Figure 2 shows the interface for the con2dis GUI. In order to see

the entire GUI, you must select Show All Plots under the Plot Options menu.

Perform the following steps with the con2dis GUI:

(a) Set the input to $x(t) = \cos(40\pi t)$.

(b) Set the sampling rate to $f_s = 24$ samples/sec.

(c) Determine the locations of the spectrum lines for the discrete-time signal, x[n], found in the middle panels. Click the Radian button to change the axis from $\hat{f}$ to $\hat{\omega}$.

(d) Determine the formula for the output signal, y(t) shown in the rightmost panels. What is the output

frequency in Hz?
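The values shown by the GUI can be cross-checked with a short calculation. The following sketch assumes an ideal D-to-A converter that reconstructs the principal alias, and it uses illustrative numbers (7 Hz sampled at 10 samples/sec) rather than the values of this exercise; all variable names are hypothetical.

```matlab
f0 = 7;                              % input frequency in Hz (illustrative value)
fs = 10;                             % sampling rate in samples/sec (illustrative value)
fhat = f0 / fs;                      % normalized frequency in cycles/sample
fhat_alias = fhat - round(fhat);     % principal alias, between -0.5 and 0.5
fout = abs(fhat_alias) * fs;         % frequency of the reconstructed sinusoid in Hz
what = 2*pi*[-1 1]*abs(fhat_alias);  % spectrum line locations in radians
disp(fout)                           % 3 Hz for these values
disp(what)
```

Because 7 Hz exceeds half the sampling rate, the samples are identical to those of a 3 Hz cosine, and that is the frequency an ideal reconstruction produces.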

Figure 2. Continuous to discrete demo interface.

5.5. Discrete-Time Convolution Demo

In this demo, you can select an input signal x[n], as well as the impulse response of the filter h[n]. Then

the demo shows the flipping and shifting used when a convolution is computed. This corresponds to the

sliding window of the FIR filter. Figure 3 shows the interface for the dconvdemo GUI.

Perform the following steps with the dconvdemo GUI.

(a) Click on the Get x[n] button and set the input to a finite-length pulse: $x[n] = u[n] - u[n-10]$.

(b) Set the filter to a three-point averager by using the Get h[n] button to create the correct impulse response for the three-point averager. Remember that the impulse response is identical to the $b_k$'s for an FIR filter. Also, the GUI allows you to modify the length and values of the pulse.

(c) Use the GUI to produce the output signal.

(d) When you move the mouse pointer over the index n below the signal plot and do a click-hold, you

will get a hand tool that allows you to move the n-pointer. By moving the pointer horizontally you

can observe the sliding window action of convolution. You can even move the index beyond the

limits of the window and the plot will scroll over to align with n.

5.6. Filtering via Convolution

You can perform the same convolution as done by the dconvdemo GUI by using the MATLAB function

firfilt, or conv. The preferred function is firfilt.

a) Do the filtering with a 3-point averager. The filter coefficient vector for the 3-point averager is

defined via:

bb = 1/3*ones(1,3);

Use firfilt to process an input signal that is a length-10 pulse:

$$x[n] = \begin{cases} 1 & \text{for } n = 0, 1, 2, 3, 4, 5, 6, 7, 8, 9 \\ 0 & \text{elsewhere} \end{cases}$$

NOTE: In MATLAB, indexing can be confusing. Our mathematical signal definitions start at n = 0, but MATLAB starts its indexing at 1. Nevertheless, we can ignore the difference and pretend that MATLAB is indexing from zero, as long as we don't try to write x[0] in MATLAB. Thus we can generate the length-10 pulse and put it inside of a longer vector via xx = [ones(1,10),zeros(1,5)].

Figure 3. Interface for discrete-time convolution GUI.

(b) To illustrate the filtering action of the 3-point averager, it is informative to make a plot of the input

signal and output signals together. Since x[n] and y[n] are discrete-time signals, a stem plot is

needed. One way to put the plots together is to use subplot(2,1,*) to make a two-panel

display:

nn = first:last; %--- use first=1 and last=length(xx)

subplot(2,1,1);

stem(nn,xx(nn))

subplot(2,1,2);

stem(nn,yy(nn),'filled') %--Make black dots

xlabel('Time Index (n)')

This code assumes that the output from firfilt is called yy. Try the plot with first equal to

the beginning index of the input signal, and last chosen to be the last index of the input. In other

words, the plotting range for both signals will be equal to the length of the input signal (which was

padded with five extra zero samples).

(c) Explain the filtering action of the 3-point averager by comparing the plots in the previous part. This

filter might be called a smoothing filter. Note how the transitions in x[n] from zero to one, and from

one back to zero, have been smoothed.
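The smoothing at the edges of the pulse is easy to see in the numbers themselves. This is a minimal sketch that uses the built-in conv as a stand-in for firfilt (an assumption, in line with the statement above that either function performs the same convolution) and prints the output values instead of plotting them.

```matlab
bb = 1/3*ones(1,3);                 % 3-point averager coefficients
xx = [ones(1,10), zeros(1,5)];      % length-10 pulse padded with five zeros
yy = conv(bb, xx);                  % built-in conv used in place of firfilt
disp(length(yy))                    % 15 + 3 - 1 = 17
disp(yy(1:12))                      % 1/3, 2/3, eight ones, 2/3, 1/3
```

The jump from zero to one in the input becomes a three-step ramp (1/3, 2/3, 1) in the output, and the falling edge is spread out in the same way.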

5.7. Sampling and Aliasing

Use the con2dis GUI to do the following problem:

(a) Input frequency is 12 Hz.

(b) Sampling frequency is 15 Hz.

(c) Determine the frequency of the reconstructed output signal.

(d) Determine the locations in $\hat{\omega}$ of the lines in the spectrum of the discrete-time signal. Give numerical values.

(e) Change the sampling frequency to 12 Hz, and explain the appearance of the output signal.

5.8. Discrete-Time Convolution

In this section, you will generate filtering results needed in a later section. Use the discrete-time

convolution GUI, dconvdemo, to do the following:

(a) Set the input signal to be $x[n] = (0.9)^n (u[n] - u[n-10])$. Use the "Exponential" signal type within Get x[n].

(b) Set the impulse response to be $h[n] = \delta[n] - 0.9\,\delta[n-1]$. Once again, use the "Exponential" signal type within Get h[n].

(c) Illustrate the output signal y[n] and explain why it is zero for almost all points. Compute the

numerical value of the last point in y[n], i.e., the one that is negative and non-zero.

5.9. Loading Data

In order to exercise the basic filtering function firfilt, we will use some real data. In MATLAB you

can load data from a file called labdat.mat by using the load command as follows:

load labdat

The data file labdat.mat contains two filters and three signals, stored as separate MATLAB variables:

x1: a stair-step signal such as one might find in one sampled scan line from a TV test pattern image.

xtv: an actual scan line from a digital image.

x2: a speech waveform ("oak is strong") sampled at fs = 8000 samples/second.

h1: the coefficients for a FIR discrete-time filter of the form of (1).

h2: coefficients for a second FIR filter.

After loading the data, use the whos function to verify that all five vectors are in your MATLAB

workspace.

5.10. Filtering a Signal

You will now use the signal vector x1 as the input to an FIR filter.

(a) For the warm-up, you should do the filtering with a 5-point averager. The filter coefficient vector for

the 5-point averager is defined via:

bb = 1/5*ones(1,5);

Use firfilt to process x1. How long are the input and output signals?

(b) To illustrate the filtering action of the 5-point averager, you must make a plot of the input signal and output signal together. Since $x_1[n]$ and $y_1[n]$ are discrete-time signals, a stem plot is needed. One way to put the plots together is to use subplot(2,1,*) to make a two-panel display:

nn = first:last;

subplot(2,1,1);

stem(nn,x1(nn))

subplot(2,1,2);

stem(nn,y1(nn),'filled') %--Make black dots

xlabel('Time Index (n)')

This code assumes that the output from firfilt is called y1. Try the plot with first equal to

the beginning index of the input signal, and last chosen to be the last index of the input. In other

words, the plotting range for both signals will be equal to the length of the input signal, even though

the output signal is longer.

(c) Since the previous plot is quite crowded, it is useful to show a small part of the signals. Repeat the

previous part with first and last chosen to display 30 points from the middle of the signals.

(d) Explain the filtering action of the 5-point averager by comparing the plots from parts (b) and (c). This

filter might be called a smoothing filter. Note how the transitions from one level to another have

been smoothed. Make a sketch of what would happen with a 2-point averager.

5.11. Filtering Images: 2-D Convolution

One-dimensional FIR filters, such as running averagers and first-difference filters, can be applied to one-dimensional signals such as speech or music. These same filters can be applied to images if we regard each row (or column) of the image as a one-dimensional signal. For example, the 50th row of an image is the N-point sequence xx[50,n] for $1 \le n \le N$, so we can filter this sequence with a 1-D filter using the conv or firfilt operator.

One objective of this lab is to show how simple 2-D filtering can be accomplished with 1-D row and

column filters. It might be tempting to use a for loop to write an M-file that would filter all the rows.

This would create a new image made up of the filtered rows:

$$y_1[m, n] = x[m, n] - x[m, n-1]$$

However, this image y_1[m, n] would only be filtered in the horizontal direction. Filtering the columns

would require another for loop, and finally you would have the completely filtered image:

$$y_2[m, n] = y_1[m, n] - y_1[m-1, n]$$

In this case, the image y_2[m, n] has been filtered in both directions by a first-difference filter. These

filtering operations involve a lot of conv calculations, so the process can be slow. Fortunately, MATLAB

has a built-in function conv2( ) that will do this with a single call. It performs a more general filtering

operation than row/column filtering, but since it can do these simple 1-D operations it will be very helpful

in this lab.
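The equivalence between the row-by-row for loop and a single conv2( ) call can be checked on a small example. This is a minimal sketch: the matrix xx below is an arbitrary stand-in (an assumption), not one of the lab images.

```matlab
% Small synthetic "image" (assumption: stands in for a real image matrix)
xx = magic(6);
bdiffh = [1, -1];                 % first-difference coefficients

% Row-by-row filtering with a for loop
[nrows, ncols] = size(xx);
yloop = zeros(nrows, ncols + length(bdiffh) - 1);
for m = 1:nrows
    yloop(m, :) = conv(xx(m, :), bdiffh);
end

% Same operation with one conv2 call (a row-vector kernel filters along rows)
y1 = conv2(xx, bdiffh);

maxdiff = max(abs(yloop(:) - y1(:)));   % largest disagreement between the two
```

Both results have one more column than the input, because each row grows by the filter length minus one.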

(a) Load in the image echart.mat with the load command (it will create the variable echart

whose size is 257 × 256). We can filter all the rows of the image at once with the conv2( )

function. To filter the image in the horizontal direction using a first-difference filter, we form a row

vector of filter coefficients and use the following MATLAB statements:

bdiffh = [1, -1];

yy1 = conv2(echart, bdiffh);

In other words, the filter coefficients bdiffh for the first-difference filter are stored in a row vector

and will cause conv2( ) to filter all rows in the horizontal direction. Display the input image

echart and the output image yy1 on the screen at the same time. Compare the two images and

give a qualitative description of what you see.

(b) Now filter the eye-chart image echart in the vertical direction with a first-difference filter to

produce the image yy2. This is done by calling yy2 = conv2(echart,bdiffh') with a

column vector of filter coefficients. Display the image yy2 on the screen and describe in words how

the output image compares to the input.
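To see what the row-vector and column-vector calls do before trying them on echart, the sketch below applies both to a small synthetic image. The 8 × 8 test image is an assumption made for illustration; it is not part of the lab data.

```matlab
% Synthetic test image: a bright square on a dark background
% (assumption: used here because echart.mat may not be at hand)
img = zeros(8, 8);
img(3:6, 3:6) = 255;

bdiffh = [1, -1];
yy1 = conv2(img, bdiffh);     % row vector: differences along each row
yy2 = conv2(img, bdiffh');    % column vector: differences along each column

sz1 = size(yy1);              % one extra column
sz2 = size(yy2);              % one extra row

% Nonzero outputs mark edges: vertical edges in yy1, horizontal edges in yy2
cols_with_edges = find(any(yy1 ~= 0, 1));
rows_with_edges = find(any(yy2 ~= 0, 2))';
```

The outputs contain negative values (a bright-to-dark transition gives -255), so they may need rescaling before they are displayed as images.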

6. Lab Exercises: FIR Filters

In the following sections we will study how a filter can produce the following special effects:

1. Echo: FIR filters can produce echoes and reverberations because the filtering formula (1) contains

delay terms. In an image, such phenomena would be called ghosts.

2. Deconvolution: one FIR filter can (approximately) undo the effects of another. We will investigate a

cascade of two FIR filters that distort and then restore an image. This process is called deconvolution.

6.1. Deconvolution Experiment for 1-D Filters

Use the function firfilt( ) to implement the following FIR filter

$$w[n] = x[n] - 0.9\,x[n-1] \qquad (3)$$

on the input signal x[n] defined via the MATLAB statement: xx = 256*(rem(0:100,50)<10); In

MATLAB you must define the vector of filter coefficients bb needed in firfilt.

(a) Plot both the input and output waveforms x[n] and w[n] on the same figure, using subplot. Make

the discrete-time signal plots with MATLAB's stem function, but restrict the horizontal axis to the

range 1 ≤ n ≤ 75. Explain why the output appears the way it does by figuring out (mathematically)

the effect of the filter coefficients in (3).

(b) Note that w[n] and x[n] are not the same length. Determine the length of the filtered signal w[n], and

explain how its length is related to the length of x[n] and the length of the FIR filter.
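A minimal sketch of this experiment is given below, assuming conv as a stand-in for firfilt so that it runs without the SPFirst toolbox. The input is the one defined in the lab statement; the variable names ww, Lx and Lw are chosen here for illustration.

```matlab
xx = 256*(rem(0:100,50)<10);   % input from the lab statement
bb = [1, -0.9];                % coefficients of w[n] = x[n] - 0.9x[n-1]
ww = conv(bb, xx);             % conv used in place of firfilt

Lx = length(xx);               % number of input samples
Lw = length(ww);               % Lx + length(bb) - 1

% MATLAB index k corresponds to time index n = k-1
disp(ww(1:12));
```

In the displayed samples, the leading edge of the pulse passes at full height, the flat part of the pulse drops to one tenth of its height (256 - 0.9·256), and the trailing edge produces a negative spike.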

6.1.1. Restoration Filter

The following FIR filter

$$y[n] = \sum_{\ell=0}^{M} r^{\ell}\, w[n-\ell] \qquad \text{(FIR FILTER-2)}$$

can be used to undo the effects of the FIR filter in the previous section (see the block diagram in Fig. 4).

It performs restoration, but it only does this approximately. Use the following steps to show how well it

works when r = 0.9 and M = 22.

a. Process the signal w[n] from (3) with FILTER-2 to obtain the output signal y[n].

b. Make stem plots of w[n] and y[n] using a time-index axis n that is the same for both signals. Put the

stem plots in the same window for comparison, using a two-panel subplot.

c. Since the objective of the restoration filter is to produce a y[n] that is almost identical to x[n], make a

plot of the error (difference) between x[n] and y[n] over the range 1 ≤ n ≤ 50.
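The steps above can be sketched as follows. This is an illustrative outline only: conv replaces firfilt, the names bb2, yy and err are chosen here, and the range is taken as MATLAB indices 1 to 50.

```matlab
xx = 256*(rem(0:100,50)<10);     % input signal from Section 6.1
ww = conv([1, -0.9], xx);        % FILTER-1 output, equation (3)

r = 0.9;  M = 22;
bb2 = r.^(0:M);                  % FILTER-2 coefficients: r^l for l = 0..M
yy = conv(bb2, ww);              % restored signal, longer than ww by M samples

% Error over the first 50 samples (MATLAB indices 1..50)
nn = 1:50;
err = yy(nn) - xx(nn);
```

Plotting err with stem(nn, err) shows where the restoration fails. The error stays at round-off level for the first M+1 samples and becomes visible only after that.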

6.1.2. Worst-Case Error

a) Evaluate the worst-case error by doing the following: use MATLAB's max() function to find the

maximum of the difference between y[n] and x[n] in the range 1 ≤ n ≤ 50.

b) What do the error plot and worst-case error tell you about the quality of the restoration of x[n]?

How small do you think the worst-case error has to be so that it cannot be seen on a plot?
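One way to compute the worst-case error is sketched below. Taking abs() before max() is a choice made here so that negative errors are counted too. The value named predicted assumes the cascade leaves a single leftover term of size r^(M+1), which is worth confirming by hand.

```matlab
xx = 256*(rem(0:100,50)<10);
r = 0.9;  M = 22;
yy = conv(r.^(0:M), conv([1, -r], xx));   % FILTER-1 followed by FILTER-2

nn = 1:50;                                % MATLAB indices of the comparison range
worst = max(abs(yy(nn) - xx(nn)));        % abs() so negative errors count too
predicted = 256 * r^(M+1);                % assumed size of the leftover echo term
fprintf('worst-case error = %.4f, predicted = %.4f\n', worst, predicted);
```

Without abs(), max() would report a value near zero here, because the error in this range is negative wherever it is not negligible.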

6.1.3. An Echo Filter

The following FIR filter can be interpreted as an echo filter.

$$y_1[n] = x_1[n] + r\,x_1[n-P] \qquad (4)$$

Explain why this is a valid interpretation by working out the following:

a. You have an audio signal sampled at f_s = 8000 Hz and you would like to add a delayed version of the

signal to simulate an echo. The time delay of the echo should be 0.2 seconds, and the strength of the

echo should be 90% of the original. Determine the values of r and P in (4); make P an integer.

b. Describe the filter coefficients of this FIR filter, and determine its length.

c. Implement the echo filter in (4) with the values of r and P determined in part (a). Use the speech

signal in the vector x2 found in the file labdat.mat. Listen to the result to verify that you have

produced an audible echo.

d. Implement an echo filter and apply it to your synthesized music from Lab #4. You will have to

change the calculation of P if you used f_s = 11025 Hz. Reduce the echo time (from 0.2 secs. down to

zero) and try to determine the shortest echo time that can be perceived by human hearing.
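The echo filter in (4) can be sketched as below. The delay in samples is taken as the delay time multiplied by the sampling rate. The input is a synthetic click standing in for the speech vector x2 (an assumption, since labdat.mat may not be available), and conv again replaces firfilt.

```matlab
fs = 8000;             % sampling rate in Hz
tdelay = 0.2;          % echo delay in seconds
r = 0.9;               % echo strength (90% of the original)
P = round(tdelay*fs);  % delay in samples, forced to an integer

bb = [1, zeros(1, P-1), r];   % y1[n] = x1[n] + r*x1[n-P]
Lb = length(bb);              % P + 1 coefficients, only two of them nonzero

% Synthetic stand-in for the speech vector x2 (assumption): a single click
x2 = [1, zeros(1, 3999)];
y2 = conv(bb, x2);            % conv used in place of firfilt

echo_positions = find(y2 ~= 0);   % indices of the click and of its echo
```

With a real speech or music vector in place of the click, the same bb applies. For a signal sampled at a different rate, only fs needs to change, and P follows from it.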

6.2. Cascading Two Systems

More complicated systems are often made up from simple building blocks. In the system of Fig. 4 two

FIR filters are connected in cascade. For this section, assume that the filters in Fig. 4 are described by

the two equations:

$$w[n] = x[n] - q\,x[n-1] \qquad \text{(FIR FILTER-1)}$$

$$y[n] = \sum_{\ell=0}^{M} r^{\ell}\, w[n-\ell] \qquad \text{(FIR FILTER-2)}$$

Figure 4. Cascading two FIR filters: the second filter attempts to deconvolve the distortion introduced

by the first.

6.2.1. Overall Impulse Response

a. Implement the system in Fig. 4 using MATLAB to get the impulse response of the overall cascaded

system for the case where q = 0.9, r = 0.9 and M = 22. Use two calls to firfilt(). Plot the

impulse response of the overall cascaded system.

b. Work out the impulse response h[n] of the cascaded system by hand to verify that your MATLAB result

in part (a) is correct.

c. In a deconvolution application, the second system (FIR FILTER-2) tries to undo the convolutional

effect of the first. Perfect deconvolution would require that the cascade combination of the two

systems be equivalent to the identity system: y[n] = x[n]. If the impulse responses of the two systems

are h_1[n] and h_2[n], state the condition on h_1[n] * h_2[n] to achieve perfect deconvolution.[11]
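A minimal sketch of part (a) is shown below, with conv standing in for the two firfilt() calls. The impulse length of 31 samples and the 1e-12 threshold used to discard round-off are choices made here for illustration.

```matlab
q = 0.9;  r = 0.9;  M = 22;
h1 = [1, -q];            % impulse response of FILTER-1
h2 = r.^(0:M);           % impulse response of FILTER-2

% Two filtering passes applied to a unit impulse (conv in place of firfilt)
imp = [1, zeros(1, 30)];
h = conv(h2, conv(h1, imp));

% Keep only the samples that are not numerically zero
idx = find(abs(h) > 1e-12);    % MATLAB indices; time index n = idx - 1
vals = h(idx);
```

Because q = r, the intermediate terms cancel and only two samples survive: the one at n = 0 and a small negative one at n = M+1. The second sample is why the cascade is not a perfect identity system.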

6.2.2. Distorting and Restoring Images

If we pick q to be a little less than 1.0, then the first system (FIR FILTER-1) will cause distortion when

applied to the rows and columns of an image. The objective in this section is to show that we can use the

second system (FIR FILTER-2) to undo this distortion (more or less). Since FIR FILTER-2 will try to undo

the convolutional effect of the first, it acts as a deconvolution operator.

a. Load in the image echart.mat with the load command. It creates a matrix called echart.

b. Pick q = 0.9 in FILTER-1 and filter the image echart in both directions: apply FILTER-1 along the

horizontal direction and then filter the resulting image along the vertical direction also with FILTER-1.

Call the result ech90.

c. Deconvolve ech90 with FIR FILTER-2, choosing M = 22 and r = 0.9. Describe the visual

appearance of the output, and explain its features by invoking your mathematical understanding of the

cascade filtering process. Explain why you see ghosts in the output image, and use some previous

calculations to determine how big the ghosts (or echoes) are, and where they are located. Evaluate the

worst-case error in order to say how big the ghosts are relative to black-white transitions, which are

0 to 255.

[11] Note: the cascade of FIR FILTER-1 and FILTER-2 does not perform perfect deconvolution.
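The distort-then-restore procedure can be tried first on a synthetic image, as sketched below. The 64 × 64 test image is an assumption standing in for echart, and the names h1, h2, restored and worst are chosen here for illustration.

```matlab
% Synthetic image with 0/255 transitions (assumption: stands in for echart)
img = zeros(64, 64);
img(20:40, 10:30) = 255;

q = 0.9;  r = 0.9;  M = 22;
h1 = [1, -q];            % FILTER-1 coefficients
h2 = r.^(0:M);           % FILTER-2 coefficients

% FILTER-1 along rows, then along columns
ech90 = conv2(conv2(img, h1), h1');

% FILTER-2 along rows, then along columns
restored = conv2(conv2(ech90, h2), h2');

% Compare on the region covered by the original image
[nr, nc] = size(img);
err = restored(1:nr, 1:nc) - img;
worst = max(abs(err(:)));
```

In this sketch the nonzero entries of err are shifted copies of the bright square, displaced by M+1 pixels horizontally, vertically, or both. These copies are the ghosts the exercise asks about.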

6.2.3. A Second Restoration Experiment

a) Now try to deconvolve ech90 with several different FIR filters for FILTER-2. You should set r =

0.9 and try several values for M such as 11, 22 and 33. Pick the best result and explain why it is

the best. Describe the visual appearance of the output, and explain its features by invoking your

mathematical understanding of the cascade filtering process. HINT: determine the impulse

response of the cascaded system and relate it to the visual appearance of the output image.

Hint: you can use dconvdemo to generate the impulse responses of the cascaded systems, like

you did in the Warm-up.

b) Furthermore, when you consider that a gray-scale display has 256 levels, how large is the worst-case

error (from the previous part) in terms of number of gray levels? Do this calculation for each

of the three filters in part (a). Think about the following question: Can your eyes perceive a gray

scale change of one level, i.e., one part in 256? Include all images and plots for the previous two

parts to support your discussions in the lab report.
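For a quick comparison of the three filter lengths, the sketch below computes the 1-D cascade impulse response for each M and converts its last sample into gray levels. It assumes q = r = 0.9 and a full 0-to-255 transition. It is meant as a cross-check on the hand calculation, not as a replacement for the image experiment.

```matlab
r = 0.9;  q = 0.9;
Mvals = [11, 22, 33];
ghost = zeros(size(Mvals));

for k = 1:length(Mvals)
    M = Mvals(k);
    h = conv([1, -q], r.^(0:M));      % cascade impulse response, length M+2
    ghost(k) = 255 * abs(h(end));     % ghost size for a 0-to-255 transition
    fprintf('M = %2d: ghost at shift %d, about %.1f gray levels\n', ...
            M, M+1, ghost(k));
end
```

A larger M pushes the ghost farther from the original feature and makes it fainter, at the cost of a longer filter.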
