Morphology

DOI 10.1007/s11525-014-9234-z

Grammaticality, acceptability, possible words and large corpora

Laurie Bauer

Received: 14 February 2013 / Accepted: 27 February 2014

© Springer Science+Business Media Dordrecht 2014

Abstract The related notions of possible word, actual word and productivity are

difficult to work with because of the difficulty with the notion of actual word. When

large corpora are used as a source of data, there are some benefits for the practising

morphologist, but the notion of actual word becomes even more difficult. This is

because it rapidly becomes clear that in corpora there may be more than one form for

the same morphosemantic complex, so that rules may have multiple outputs. One of

the factors that may help determine the output of a variable rule in morphology is the

productivity of the process involved. If that is the case, the notion of productivity has

to be reevaluated.

Keywords Actual word · Possible word · Productivity · Grammaticality · Corpus linguistics

1 Introduction

Notions of possible words, actual words and productivity have become well established in recent morphological studies, and these notions are entangled with each

other.1 If you cannot tell what words are actual words, you cannot recognize new

words and therefore cannot tell whether something is or is not productive. The notion

of new word and the notion of productivity both require that there be a notion of an

actual word. The notion of an actual word, though, is incredibly fraught. The proposal from Halle (1973) that all words which are the output of the grammar should

be marked with a value for a feature [± lexical insertion] these days seems impossibly naïve. Part of the difficulty here is that Halle is working with the notion of an

ideal speaker-listener (Chomsky 1965); these days there is much more focus on an

individual speaker in a speech community. Also, these days, we have access to large

corpora of text which repeatedly show that the individual linguist has very little idea

about what might be a possible word of the language. Part of the topic of this paper

is a consideration of what happens when large corpora are used to provide data for

morphologists.

1 An earlier version of this paper was presented at the conference on Data-Rich Approaches to English

Morphology, held in Wellington, New Zealand, in July 2012. I should like to thank attendees at the conference, Liza Tarasova and Natalia Beliaeva, and referees for Morphology for feedback on earlier versions,

and Jonathan Newton for the example in (2). The research for this paper was supported by a grant from

the Royal Society of New Zealand through its Marsden Fund to the author.

L. Bauer (✉)

Victoria University, Wellington, New Zealand

e-mail: Laurie.bauer@vuw.ac.nz

It would no doubt be possible to remove all the problems to which these notions

give rise by defining a synchronic state of a language or variety so narrowly that

no neologism or exposure to unfamiliar words is possible. But to do this would be

contrary to all the beliefs about the productivity of the language system that have been

in vogue since before the onset of the generative period in linguistics. If we cannot

produce or meet new words, then we have no need for rules at all because everything

can be listed. At the same time, we lose all ability to account for the fact that there is

a well-attested ability for real speakers to create words to which they have never been

exposed.

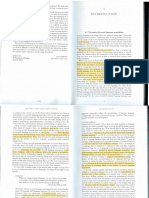

In this paper I begin by returning to such well-worn notions as grammaticality and

acceptability and the way they apply in morphology; I then look at the notions of

possible words and productivity; in the core of the paper, I look at the way in which

the data available from large corpora influence the study of such phenomena, and the

benefits and problems such devices give rise to; and finally, I return to the notion of

productivity in the light of such observations.

2 Grammaticality and acceptability

Since Chomsky (1957), linguists have been familiar with the notions of grammaticality and acceptability. Grammaticality is defined by what a particular grammar

can have as its output, while acceptability is speaker-oriented and depends upon

what speakers will consider appropriate. The two need not match, as is shown by

Chomsky's (1957:15) celebrated example in (1), which is presumed to be grammatical but not acceptable, while (2), a quotation from Paul McCartney ("Live and Let

Die", 1973), is presumably acceptable (the structure may be heard relatively frequently

from speakers) but not grammatical (because the same preposition is both maintained

in its original position and stranded; on the construction in (2), see further Radford

et al. 2012).

(1) Colorless green ideas sleep furiously.

(2) But if this ever changing world in which we live in
    Makes you give in and cry
    Say live and let die

While the distinction between grammaticality and acceptability is an important

one, it is clear that in general terms it is expected that acceptability will follow

from grammaticality (always assuming that the pragmatics associated with the lexical

items chosen to fill the relevant syntactic slots is appropriate). Sentence (1) is unacceptable because (among other things) sleeping is not something which can pragmatically be felicitously described as being done furiously. It is the pragmatics which is

odd. If a grammar of English produced sequences like (3), on the other hand, it would

be taken that the grammar did not reflect the way the language was used by speakers,

and should be corrected, because here it is the fundamental order of elements which

is wrong, not just the pragmatics.

(3) Furiously ideas colorless sleep green.

That is, the distinction between grammaticality and acceptability is not necessarily

a comment on the vagaries of human perception, but a comment on how far linguists

are expected to make their grammars approximate the kind of output speakers/writers

actually produce. An alternative formulation is that linguists expect their grammars

to overgenerate (that is, to produce output sequences which real speakers would not

use), but will accept this as a criticism of their grammars only if certain, ill-defined,

boundaries are overstepped. For some discussion of this problem in terms of selectional restrictions, see Chomsky (1965:148–163).

This notion of grammaticality was carried forward into morphological studies by,

for instance, Aronoff (1976). In another famous passage, which has set the tone for

much morphological work, Aronoff (1976:17–18) says:

The simplest task of a morphology, the least we demand of it, is the enumeration of the class of possible words of a language.

This, in effect, keeps the Chomskyan focus on grammaticality: the notion of enumeration is not obviously different from the Chomskyan use of generation. It also

aligns morphology (perhaps, more specifically, word-formation) with syntax in implicitly claiming that just as we cannot list the actual sentences of a language, so

we cannot list the actual words, but we can provide a statement by which we can

determine whether a given form can be expected to be admissible as a word of the

language provided that it is pragmatically adequate. I have previously (Bauer 1983:

Sect. 4.2) argued in favor of this position, in a way that I would no longer wish to

argue.

Enumerating the possible words of the language has generally been seen as a matter of stating the relevant rules of word-formation (which Aronoff terms WFRs, or

Word Formation Rules). The notion of a rule as it has developed within generative

grammar has typically demanded some form or structure which is affected by the rule

(the input), some process which has an effect on that input, and, by implication, the

output form created by the rule. When we consider morphology, this means that rules

(or, specifically, WFRs) create new derivatives from bases which are simpler words

or obligatorily bound bases.2 This is, in essence, not greatly different from what we

might characterize as a pre-generative structuralist position, except in that the rule

notation is formulated as a rule of creation, while the structuralists would probably

have seen their formulae as being pieces of analysis. Given that it is a commonplace

of generative theory that rules are not directional, but work equally for production

and perception (Lyons 1991:43), it is not clear just how much importance should be

given to this difference.

2 In the case of conversion I assume that this still applies, in that the simpler word has not undergone the

identity operation which creates the derivative. Clearly, an alternative position would be possible, in which

case the characterization of a rule given here would have to be modified slightly.

There are, of course, now alternatives to such an explicit statement of rules; in particular, connectionism eschews any explicit statement of relationship between input

and output, although it maintains the input and output. The same might be said for

Optimality Theoretic formulations, where the optimal output emerges from the set of

prioritized constraints. Nonetheless, even in such cases, there is an input (typically a

word) and an output (a word) linked by some, presumably generalized, procedure.

The precise status of the output is not necessarily clear. Is the output of any such

rule an actual word (and if so, is the rule a once-only rule: Aronoff 1976:22), or is it

a possible word (aka a potential word)? To some extent, this is the problem that was

solved by Halle's [± lexical insertion] feature, although it was never clear how the

feature marking (and the status it attempted to encode) was supposed to be changed

from minus to plus.

Difficulties begin to emerge with these notions before we go any further. The status

of potential words is not consistent in the eyes of real speakers. Some potential words

are not real words (4), (6), (8) or are words which have just been made up (5);

others, if we follow Aronoff (1976), are (because of the great productivity of the

processes involved) so automatic as to be unnoticeable to the speaker in context,

and cannot be blocked by actual words. On the other hand, some words which have

currency in the community may not have been noticed by individual speakers, and

so may not function as actual words (4), (7). At the very least, the status of the

individual speaker and the community need some clarification if a coherent view of

this aspect of linguistic structure is to be properly understood.

(4) She . . . pulled on another pair of disposable gloves. Gemma wondered if there
    was a proper name for a glovophiliac. (Lord, Gabrielle 2002. Baby did a bad
    bad thing. Sydney: Hodder, p. 272.)

(5) "They're moist and cinnamony and . . . is that a word?"
    "Is what a word?"
    "Cinnamony."
    Hannah laughed. "If it's not, it ought to be." (Fluke, Joanne 2003. Meringue
    pie murder. New York: Kensington.)

(6) "He knew he wasn't going to get a penny out of Bairn, so he sent his chief
    crucifixionist to make an example."
    Loudermilk wiped the legs of his glasses. "I don't think that's a word."
    (Estleman, Loren D. 2007. American detective. New York: Doherty, p. 125.)

(7) "I'd need an enforcement arm. For my benignity, I mean. If that's a word."
    "It should be," Tamara said. (Lescroart, John 2012. The hunter. New York:
    Dutton, p. 139.)

(8) She had always been one of the popular kids – not the leader, not the
    trendsetter – just . . . a belonger, she thought, knowing that wasn't a real
    word. It should have been. (Hoag, Tami 2013. The 9th girl. New York: Dutton,
    p. 151.)

3 Possible words and productivity

If the output of a WFR is a potential word, we see why productivity is important for

rule-based systems. There are plenty of actual words of any natural language which

are not potential words. Mice, an actual plural of mouse, is a potential phonological

word of English, but not a potential plural of mouse, in the sense that there is no WFR

in current English which could produce mice with this meaning. Length, an actual

nominalization from long is not a potential nominalization (we will need to come

back to this claim). Thus actual words are not a subset of possible words (perhaps

more strictly, are not a subset of the words which could be formed by current WFRs

for the expression of the relevant morphological categories). Terminology in this area

has varied (Bauer 1983:48), but is generally now carried on in terms of certain forms

being lexicalized, while some rules are productive. Productive rules (the only ones

that a generative linguist of Aronoff's persuasion is presumably concerned with) are

those rules which can produce possible words. The implications of such a view are not

always welcome: some linguists such as Jackendoff (1975) maintain non-productive

rules in their descriptions, naming them redundancy rules; there may or may not be a

firm distinction made between redundancy rules and productive morphological rules.

But if the existence of a (non-redundancy) rule shows that there is productivity

(sensu availability), it says nothing about productivity (sensu profitability) (Corbin

1987; translation of the terms from Carstairs-McCarthy 1992:37). Availability says

merely that the rule can be used; profitability says something about how likely it is

that the rule will be used. There is quite a lot of debate in the literature as to how

much profitability is a matter of linguistics and how much it is a matter of pragmatic

need for the word-formation process in question (for different reasons why it might

not be linguistic, see Harris 1951:255 and Koefoed 1992:16 and discussion in Bauer

2001:28). Only if it is linguistic can it be built into a rule notation like the generative

one.

There is much in the descriptions of morphological processes to suggest that some

degree of profitability is caused by linguistic factors. Domains are often specified in

linguistic terms. For example, the English de-adjectival -en]V (as in blacken) is often

said to attach to bases ending in an obstruent; the de-verbal -al]N (as in arrival) requires bases ending with a stressed syllable; -able]A (as in extendable) applies freely

to transitive verbs; and so on. In other approaches to productivity, it is claimed that

productive affixes are more parsable than non-productive ones, with the base being

more frequent than the derivative, the semantics of the whole being transparent, and

the phonology indicating clearly the presence of a boundary between base and affix

(Hay 2003). While frequency is not strictly a linguistic notion, relating to use rather

than to structure, the other factors here are linguistic.
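The domain conditions just listed can be written down as simple predicates over bases. The following is only a minimal sketch under stated assumptions: the `Base` record, its fields, the informal phoneme symbols and the obstruent inventory are all hypothetical conveniences introduced for illustration, and the three functions encode nothing more than the conditions as they are commonly stated in the literature.

```python
from dataclasses import dataclass

# Illustrative obstruent inventory in informal symbols (an assumption of this
# sketch, not an analysis of English phonology).
OBSTRUENTS = {"p", "b", "t", "d", "k", "g", "f", "v",
              "s", "z", "θ", "ð", "ʃ", "ʒ", "tʃ", "dʒ"}


@dataclass(frozen=True)
class Base:
    """Hypothetical record for a base; every field is supplied by hand."""
    form: str            # orthographic form
    category: str        # "A" for adjective, "V" for verb
    final_segment: str   # last phoneme of the base
    final_stress: bool   # True if the last syllable is stressed
    transitive: bool = False


def allows_en(base: Base) -> bool:
    # De-adjectival -en]V: adjectival bases ending in an obstruent.
    return base.category == "A" and base.final_segment in OBSTRUENTS


def allows_al(base: Base) -> bool:
    # De-verbal -al]N: verbal bases ending in a stressed syllable.
    return base.category == "V" and base.final_stress


def allows_able(base: Base) -> bool:
    # -able]A: transitive verbal bases.
    return base.category == "V" and base.transitive


black = Base("black", "A", "k", True)
green = Base("green", "A", "n", True)
arrive = Base("arrive", "V", "v", True)
contrive = Base("contrive", "V", "v", True, transitive=True)
extend = Base("extend", "V", "d", True, transitive=True)
```

On this sketch `allows_al(contrive)` succeeds just as `allows_al(arrive)` does: conditions of this kind state where a process is available, and say nothing about which of the forms they license become actual words.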

But even where there are linguistic constraints on the application of WFRs, it

seems undeniable that there is still a certain haphazardness to their application. There

are, for instance, many final-stressed verbs in English for which there is no corresponding -al]N nominalization current in the relevant speech communities. Verbs

such as conduct, contrive (contrast arrive), demand, imply, impose (contrast propose), maintain (contrast retain, according to the OED3), prefer (contrast refer), rely

(contrast deny) are among those for which the OED lists no current -al]N nominalization. That being the case, either there must be some other constraint on the suffix

-al]N, or else the set of verbs which take the suffix is fundamentally unpredictable,

despite the formal restrictions which can be stated to modify the application of the

rules. That is, the formal constraints do not delimit a set of acceptable words, but a

much larger set, some of which may be unacceptable or ungrammatical. Similar comments can be made in regard to -able adjectivalizations. Here, though, the process is

rather more profitable in current English, and the unattested or new forms with -able

seem rather less outlandish. Forms with transitive verb bases not listed in the OED

include disgustable (listed as obsolete), Googleable, grillable (boilable and roastable

are listed), inculcatable, parkable, pedestrianizable, roquetable, spyable. Even if it

is, in principle, predictable that something should be possible, it is not predictable

from general principles which items will have become actual words. At this level at

least, the profitability of WFRs is variable. This affects acceptability for many speakers, for whom an acceptable word may be defined as being somewhere in the set of

item-familiar words and those created by extremely productive processes.

3 OED. The Oxford English dictionary on-line. www.oed.com.

The parallel from syntax would suggest that this is irrelevant: most sentences do

not occur and the job of a generative grammar is to say whether a string is a sentence or not, rather than whether a sentence occurs or not. In morphology it is less

clear to what extent this is true, unless by fiat. First, there is a lay prejudice in favor of

words existing rather than being created on-line (but see also Di Sciullo and Williams

1987:14: "Most of the words are listed."). We might dismiss this as lay ignorance.

But there is a fair amount of evidence that derivational productivity is rather difficult

for speakers (at least in some language types and some formation-types), and may

be avoided (see e.g. Bauer 1996). Secondly, sentences are maximally productive because they are indefinitely extendable.4 In purely practical terms, words cannot be

indefinitely extended: their function is to name entities, actions and states, etc. and an

infinite name (or even a name which took several minutes to say) would not function

appropriately in the language system. Even in languages which allow apparently free

recursion in compounds (like German, for instance), it appears that compounds over

four elements long are very rare (Fleischer and Barz 2007:98). It is not that compounds cannot be longer (they can); it is that in general terms they are not. One of the

things we learn from Fabb (1988) is that far fewer sequences of affixes in English are

found than appear theoretically possible. If we look at the number of concatenated

affixes attested in any given word, it is typically (at least in an Indo-European language like English) relatively small (Ljung 1970 gives figures of three prefixes and

four suffixes for English, which seems slightly short in the light of words such as

sensationalization (OED), but which makes the point; it may also be more realistic

where the base is not lexicalized). While there are places where affixes are added to

phrasal or sentential bases, and there is no reason to presume that such bases cannot

contain syntactic recursion (Cat-in-the-hattish seems perfectly possible5), this is

not clearly morphological recursion. Repetition of the same morph in a single word

can be found in morphological systems, as illustrated below from Labrador Eskimo

(Miller 1993:47) in (9), from Japanese (Miyagawa 1999:257) in (10) and from English in (11). It is, however, always the exceptional pattern in morphology, never the

rule, and is usually tightly constrained.6

4 Referees for Morphology rightly query the use of the term "infinite" in this context, yet Chomsky

(1975:78) talks of an infinite set of grammatical utterances, Fromkin et al. (1999:9) of an infinite set of

new sentences and Carnie (2007:16) says "Language is a productive (probably infinite) system", including in his reasoning that sentences can always be extended by the addition of an extra sub-part. Perhaps

words cannot always be so extended. I find Matthews (1979:24) helpful: "A generativist says that the

speaker's mind controls an infinite set of sentences. But this is not a statement of observed fact. It is part

of a theory."

(9) Taku-jau-tit-tau-gasigi-jau-juk
    see-PASS-CAUS-PASS-believe-PASS-3sS
    'S/he was believed to have been made to be seen'

(10) Taroo-ga Hanoko-ni Ziroo-o Mitiko-ni aw-ase-sase-ru
     Taro-NOM Hanoko-DAT Jiro-ACC Michiko-DAT meet-CAUS-CAUS-PRES
     'Taro will cause (make/let) Hanoko to cause Jiro to meet Michiko'

(11) Meta-meta-rules, pre-preseason (both COCA)

To some extent the same is true of syntactic rules: no living person has the time to

utter or write a sentence of more than very limited length. It must be acknowledged,

though, that the extent of recursion is greater in syntax, the length of sentences is

(almost by definition) greater than the length of words, and the extent to which one

extra constituent may be freely added to sentences is greater than the extent to which

that can be done to words. So the productivity of morphological rules is far more constrained than that of syntactic rules. It is further constrained by the fact that affixes

are (on the whole) grammatical elements, and stringing grammatical elements is not

necessarily any easier in morphology than it is in syntax. Despite the possibility of

sentences such as "What did you bring the book that I didn't want to be read to out

of down for?" (at least as a joke showing the necessity for preposition stranding), sequences of prepositions, pronouns, conjunctions and the like are severely limited, and

sequences of affixes are, in most language types, also severely limited, presumably

for similar reasons, namely that it makes little sense to specify a number of functions

unless the things to which those functions apply are also specified.

The question is whether words with longer affixal strings are unacceptable or ungrammatical (or neither of those two options). I suspect that such a question is not

easily answerable, if at all: it is not clear what would count as evidence. But the fact

that the question is worth asking suggests that morphological productivity is not just

the same as syntactic productivity.

So we must conclude that the division between grammaticality and acceptability,

which was very important in the development of the notion of linguistic rules, is not

as easily made in morphology as it is in syntax, and that while availability might be

accounted for in terms of such rules, profitability remains unaccounted for.

If it is hard to distinguish between grammaticality and acceptability in word-formation, and we cannot necessarily trust notions of acceptability, then we might

need some alternative way to get at the same underlying notion. Accordingly, at this

point, I turn to consider the contribution of large corpora to morphological research.

5 And can be attested at http://qahatesyou.com/wordpress/category/philosophy/ (accessed 9 Jan 2013).

6 Indeed, even the Japanese example below may not stand up to close scrutiny, since Miyagawa notes a

slightly different function for the two causative markers, despite their shared form and shared meaning.

4 Large corpora

There are places where large corpora can be extremely useful for the morphologist,

and places where large corpora raise practical problems for the morphologist. In this

section, I shall consider each of these cases in turn, before returning to the implications of what corpora have to tell us for productivity and possible words.

4.1 Benefits of large corpora

Payne and Huddleston (2002:449) make the claim that although it is perfectly acceptable to have various London schools and colleges (presumably derived from an

underlying or implicit various London schools and London colleges), it is not possible

to have *ice-lollies and creams corresponding to ice-lollies and ice-creams (the asterisk is theirs). This, they claim, is because we are dealing with two different types of

construction here: ice-cream is a compound, and does not allow coordination within

it, while London college is a phrasal construction, and does allow coordination within

it. I have elsewhere (Bauer 1998) queried the logic of such an approach to the distinction between phrases and compounds, and shall not repeat that here. What I want

to say here is that the intuitions which tell them that ice-lollies and creams cannot be

part of English are clearly faulty, because when we look at large enough corpora (in

this case, when we look at what we can find via Google) we find examples like those

repeated below.

(12) Living on the broken dreams of ice lollies and creams
     http://www.melodramatic.com/node/70347?page=1 (accessed 12 Jan 2011)

     Far too many ice-lollies and creams had been consumed but we were all happy little campers
     http://yacf.co.uk/forum/index.php?topic=33253.120 (accessed 16 Jan 2011)

     These nine months were filled with dripping ice lollies and creams, spilt soft drinks and lost maltesers
     http://keeptrackkyle.blogspot.com/2006/07/tidying-up.html (accessed 16 Jan 2011)

     to play with their buckets and spades, to paddle in the water, and to suck lots of ice lollies and creams
     http://www.governessx.com/Introduction/IntroductionLibrary/GovernessXLibraryABSissCDictionaryS.htm (accessed 16 Jan 2011)

     Wooden Toy Ice Creams And Lollies With Crate
     http://www.jlrtoysandleisure.co.uk/wooden-creams-lollies-with-crate-p-293.html (accessed 16 Jan 2011)

     Ice Creams and Lollies
     http://blog.annabelkarmel.com/recipes/ice-creams-and-lollies.html (accessed 16 Jan 2011)

It is true that some of the examples in (12) (and the same is true in other examples

cited later) are headlines, and the grammar of headlines may not be exactly the same

as the grammar of other structures; nevertheless, there are sufficient examples here to

show that the type that is predicted not to occur does actually occur in a large enough

corpus.

If it is the case that, presented with sufficient data, we can find examples of precisely the type that are predicted not to occur, then the theoretical position supported

by the predictions loses credibility. In this case, the firm distinction between compounds and phrases has to be less secure than is claimed.

To take another example, Jensen (1990:119) cites a claim from Zwicky (1969) that

genitive forms of ablaut plurals that end in /s/ are impossible: *geese's, *mice's. Such

forms are certainly rare (there are no hits in COCA, for instance), but that does not

mean that they are impossible. The examples in (13) are also found via Google.

(13) Newborn Mice's Hearts Can Heal Themselves
     http://www.nytimes.com/2011/03/01/science/01obmice.html?_r=0 (accessed 19 Oct 2012)

     Without B-Raf and C-Raf proteins mice's fur turns white
     http://www.news-medical.net/news/20121006/Without-B-Raf-and-C-Rafproteins-mices-fur-turns-white.aspx (accessed 19 Oct 2012)

     Male Birth Control Possible? JQ1 Compound Decreases Mice's Sperm Count, Quality
     http://www.huffingtonpost.com/2012/08/16/male-birth-control-jq1-spermcount_n_1784361.html (accessed 19 Oct 2012)

     Researchers Plant Short-Term Memories into Mice's Brains. Read more at
     http://www.medicaldaily.com/articles/12018/20120910/researchers-plantshort-term-memories-mices-brains.htm#s5d6fwt4hEfE1uW4.99 (accessed 19 Oct 2012)

     Forget me not: scientists trigger mice's memories with light
     http://www.smartplanet.com/blog/science-scope/forget-me-not-scientiststrigger-mices-memories-with-light/12518 (accessed 19 Oct 2012)

     What can I use for my mice's bedding?
     http://www.guineapigcages.com/forum/others/43989-mice-beddingalternative-what-can-i-use-my-mices-bedding.html (accessed 19 Oct 2012)

     FLYING WITH GEESE'S EYES ON NEW YORK CITY
     http://www.feeldesain.com/earthflight-flying-with-geeses-eyes-on-newyork-city.html (accessed 19 Oct 2012)

     I saw that my geese's wings are tinged brown at the edges and turned in
     http://au.answers.yahoo.com/question/index?qid=20120623005124AA04aSv (accessed 19 Oct 2012)

As a third and final example, consider the affixation of the prefix un- to certain

implicitly negative adjectives. The claim that such affixation is impossible lies at least as far back as Jespersen

(1917), but is connected in particular with the work of Zimmer (1964). In the forty

years since that work appeared, any number of linguists have repeated the example,

apparently convinced that it represents a real constraint on un- prefixation (despite

what Zimmer himself says). However, Bauer et al. (2013) cite the examples in (14)

from large corpora. Clearly the intuitions of many linguists have been unreliable, and

there is no such absolute restriction on un- prefixation.

(14) unafraid, unangry, unanxious, un-bald, unbare, unbitter, unbogus, uncoy, uncrazy, uncruel, undead, unevil, unfake, unfraught, unhostile, unhumid, unjealous, unlame, unlazy, unmad, unpoor, unsick, unsordid, unsurly, untimid, unugly, unvulgar, unweary

One tactic available to linguists who make claims about the impossibility of all

these forms is to assert that the examples in (12), (13) and (14) are ungrammatical

and errors. I have personally been given such a response by a referee for an article

submitted for publication to a well-known journal. The trouble with such claims in

the face of evidence like that cited here is that it is hard to see how they can be justified

except circularly. It may be that there are different dialects of English, some of which

allow and others of which do not allow the constructions illustrated, but a simpler

explanation is just that we do not need to discuss things which several geese own all

that often, and that such usages are correspondingly rare. As a result, we can find

such examples only when we look at very large corpora, and the relatively low

number of hits even in such corpora does not indicate ungrammaticality, but simply

rarity.

4.2 Difficulties presented by large corpora

Not only do we find really useful examples like those cited above to help us formulate

true generalizations about the way in which morphological structure is used, we also

find unhelpful examples. To begin with an isolated example, consider the text in (15).

(15) Could that polish have been tainted with cyanide? Could Susan have been the
     tainterer? Was there such a word as tainterer? Maybe she was a tainteress?
     (Strohmeyer, Sarah 2004. Bubbles: a broad. New York: Dutton, p. 73.)

The book (as is clear from its title) is intended to be humorous, and this passage

could be intended to be non-serious; on the face of it, however, we have an unfamiliar

derivative, tainterer, formed with double affixation of -er, and then another derivative,

tainteress, formed without any resyllabification of the final <r>; that is, we do not

find taintress (contrast actress). There is an argument to be made that either of these

leads to an ungrammatical form.7

So how are examples like those in (15) to be interpreted? Do we take them as being

indicators of a new pattern in English? Do we take them to be the continuation of an

old pattern (tainterer can be found in Google with reference to nineteenth-century

professions, presumably denoting a dyer)? Do we take them to be ungrammatical

7 There is some variability as to the resyllabification of the final <r> in English: manageress shows no

resyllabification, and this is not simply a matter of whether we are dealing with -er or -or, since we find

doctoress, mayoress and waitress. The -erer sequence can be found in forms like adulterer (where only

one of the -er sequences is an independent morph) or in fruiterer, which is unusual in its structure, and not

obviously productive.

and unacceptable (errors, perhaps)? If they are isolated (as this example is), do we

just ignore them?

A more complex, and more worrying, example is provided by adjectives with in- to be found in COCA (Davies 2008) and the BNC (British National Corpus 2007).

Among the thousands of tokens of words beginning with the letters <in>, <im>, <il>,

<ir> in COCA and the BNC, there are several hundred types which represent negative

adjectives, and appear to have the affix to be seen in inconclusive, indirect, inordinate,

etc. Among these instances of the negative prefix, we find a handful of forms which

appear to indicate the productivity of the prefix, in that these words do not appear to

be in general usage in the community and are not, for instance, in the OED. Examples

are given in (16).

(16)

immedical, inactual, inadult, inattentional, incompoundable, inconservative,

indescriptible, indominable, inexorcizable, inexplicatable, inextractable, injuvenile, intesticular

This is interesting in its own right, since we might expect to find that un- is the productive negative prefix in English, and that in- is not productive or at best marginally

so (as suggested by Marchand 1969:170; Bauer 1983:219; though contrast Baayen

and Lieber 1991). Perhaps rather more interesting, however, are those forms where

the in- prefix is found where some other prefix is established in the community. Some

examples are given in (17).

(17)

inadapted, inapparent (O), inappeasable (O), inarguable (O), inartful (O),

inartistic (O), inassimilable (O), incivil (O), indemonstrable (O), inequal (O),

infathomable, infavorable, ingenerous, inimaginable, inintelligent, ininteresting, instable (O), intenable

Those words marked with (O) in (17) are listed in the OED, even though other

forms seem to be more usual today. There are, for instance, 20k hits for inappeasable

on Google, but over 150k for unappeasable. Not all the cases are that clear-cut, but

the examples in (17) appear to provide evidence that in- is productive enough to

take over items which are well-established with some other, apparently synonymous,

affix.

This is interesting on two fronts. First, it suggests that in- is more productive than

even words like those in (16) would indicate: it is not only found in new words, it has

the power to oust old (presumably item-familiar) words. In general terms, this is the

behavior associated with productive processes: plural -s and past tense -ed spread to

new bases much more easily than ablaut or the pattern seen in catch-caught.

The second point is that data such as that illustrated in (17) appears to contradict everything we are told about blocking. Blocking (Aronoff 1976; Rainer 1988) is

supposed to prevent the coining (or possibly only the establishment) of new words

which have the same meaning as actual listed words (or possibly only actual listed

words which use the same bases). Negative prefixes in English provide considerable

evidence that this is not the case (see Bauer et al. 2013 for more discussion). Even in

the OED we find sets such as those in (18).

(18)

ahistorical, anhistorical, unhistorical
alogical, illogical, unlogical
apolitical, impolitical, unpolitical
impolite, unpolite
improper, unproper
irredeemable, unredeemable
atypical, untypical

Even though blocking is not the main focus of this paper, it is noteworthy that

large corpora will often give apparent evidence of the failure of blocking, and again

it can be hard to interpret such evidence as is provided. In the case of the negative

prefixes, my own opinion is that the evidence is overwhelmingly against there being

any general principle of blocking, though I do not know why negative adjectives

should be so open to multiple, synonymous affixation patterns (if, indeed, this is not

merely a misleading impression).

Among the negated adjectives which might have been listed in (17), I should like

to draw particular attention to a small set illustrated in (19).

(19)

inbearable, inbelievable, inmodest, inpracticable, inpenetrable, inprescribable

There are not very many instances like those in (19), and most of them are hapaxes

in the corpora, but even in (19) we seem to have a recurring pattern. We have evidence

here of a number of instances of the prefix in- occurring in the default allomorph in- rather than the expected allomorph im- before a bilabial. The obvious, immediate

conclusion is that the rules of allomorphy in English are not quite as automatic as

linguists tend to consider them to be, and that users are writing an unassimilated

form or perhaps a morphophonemic representation. Unfortunately, there are other

possibilities, which mean that we have to be careful in rushing to that conclusion.

On the standard QWERTY keyboard, <m> and <n> are adjacent keys: it is therefore

possible that all of these (a very small number in the thousands of tokens of words

with initial <in> or <im>) are simply typographical errors. It is also the case that <i>

and <u> are adjacent keys, and so any of these might be a typographical error for a

form with initial un-, and if that is the case, there is no *um- allomorph, and the <n>

would be expected. Given examples of the type shown in (17), alternation between

in- and un- prefixed negatives cannot be unexpected, and thus even unmodest must be

considered a possible form. So how are we supposed to interpret evidence like that in

(19), and what would it take to convince us that we need to move to a new analysis?

It seems to me that we can go some way toward answering this question. At the

very least we would want to find a number of independent occurrences of the same

form in places where the text is clearly serious rather than ludic. I think the examples

cited in (19) would be more convincing if it were not the case that there were two

alternative routes to them by simple error. Even though we find similar spellings

before labials in other parts of speech, the number of cases we have attested is not yet

enough to indicate conclusively that the end of in- allomorphy is a linguistic change

in progress; it is, however, enough to make us aware of the possibility that something

is happening in this area of language.
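The two error routes mentioned above (an <n>/<m> slip and an <i>/<u> slip) can be checked mechanically before an attested spelling is taken as linguistic evidence. The following is a minimal sketch only: the adjacency table covers just the two key pairs discussed in the text, and the list of candidate target forms is a hypothetical one chosen for illustration, not a claim about which forms are established.

```python
# Minimal sketch: could an attested spelling be a one-key slip for another form?
# ADJACENT_KEYS is an assumption covering only the key pairs discussed in the text.
ADJACENT_KEYS = {
    "n": {"m"},  # <n> and <m> are neighbours on a QWERTY keyboard
    "m": {"n"},
    "i": {"u"},  # <i> and <u> are neighbours on a QWERTY keyboard
    "u": {"i"},
}


def one_key_slips(attested: str) -> set[str]:
    """Return every string differing from `attested` by one adjacent-key substitution."""
    slips = set()
    for pos, char in enumerate(attested):
        for neighbour in ADJACENT_KEYS.get(char, ()):
            slips.add(attested[:pos] + neighbour + attested[pos + 1:])
    return slips


def error_routes(attested: str, candidates: set[str]) -> set[str]:
    """Candidate forms reachable from `attested` by a single adjacent-key slip."""
    return one_key_slips(attested) & candidates


# Hypothetical list of possible intended forms, for illustration only.
CANDIDATES = {"immodest", "unmodest", "unbearable", "unbelievable", "impenetrable"}

for form in ["inmodest", "inbearable", "inpenetrable"]:
    print(form, "->", sorted(error_routes(form, CANDIDATES)))
```

A form such as inmodest, which is one keystroke away from two different candidates, would on this reasoning need more independent attestations than a form with no such error route.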

However, the real point here is that a large corpus can provide us with data that

we cannot interpret. If it is dangerous to assume that the examples are an accurate

reflection of competence, it is equally dangerous to assume that they are not. More

widely, such examples seem to require us to review the notion of a possible word for a

new generation of researchers who standardly have this kind of data-source available

to them.

4.3 Grammar and corpora

All this raises questions about notions of grammaticality (and hence notions of what

is in the grammar) and what we find in a corpus. Matthews (1979) discusses such

matters by using an analogy with a map. A valid motorist's map of Paris might show

an undifferentiated shaded area, surrounded by the boulevard périphérique; another

map might show streets including Place Pigalle and Place du Tertre; yet another might

show the difference in height above sea level between those two streets by means of

contour lines; but maps do not usually differentiate between three-storey buildings

and ten-storey buildings lining the streets. At some level, the detail is deliberately left

off the map without invalidating the map. Do we do the same with our grammars? Do

corpora inevitably bring us face-to-face with the question of building heights, when

all we want to know is how to drive to Montmartre?

If that is the case, it seems to me, then uses of corpora like those in Sect. 4.1 are

fully justified because they are meeting specific claims with data that is on the same

degree of abstraction as the question that was introduced by the original claimants.

Uses like those in Sect. 4.2, on the other hand, may be irrelevant because they may

introduce a whole new level of evidence and a different notion of relevance. But that

seems unhelpful for linguistics. Part of the value of a paper such as Hundt (2013)

is that by searching corpora it discovers that a grammatical construction BE + Past

Participle as perfect is not an error, but a systematically used way of marking the

perfect in much of the English-speaking world. (See also Britain 2000 for another

surprising feature that is more general than might have been thought.) That is, an

example like that in (20) from the BNC is in use (acceptable? grammatical?) among a

range of English speakers.

(20)

both Martin and Ian are been having a similar trauma

So part of the role of corpora is to expand our notion of what might be acceptable

or grammatical and challenge our preconceptions about errors. That means that we

cannot rule out the uses of corpora in Sect. 4.2 after all, even if we are unsure about

the weight to give examples that we find. An alternative might be to say that there

is some kind of threshold which corpus studies must cross before their findings are

accepted as mainstream usage. The uses discussed by Hundt (2013) are above that

threshold, while the negative prefixes in Sect. 4.2 are below it. That makes intuitive

sense, but we have no way of operationalizing that threshold. How do we quantify

the number of hits required in a corpus of size n for the construction to be taken

as part of what linguists should be accounting for? There does not seem to be any

non-controversial way of progressing.

5 Back to possible words and productivity

5.1 Variable outputs

We now need to return to the notion of possible word, both in the light of the discussion above and in the light of some modern discussions of the ways in which WFRs

need to be formulated.

Krott (2001; Krott et al. 2002, 2007) argues in some detail that linking elements

in Dutch and German compounds are best predicted not by rules which give a single possible output for any input, but by a model in which the linking elements are

determined in some analogical way, which can only be modeled with some statistical process to determine the outcome. Such a solution is not at this stage universally

adopted (Dressler et al. 2001; Neef 2009) but raises the notion of the output form of a

word being a probability rather than a well-defined unique form. Similar conclusions

are drawn within the kind of prosodic morphology discussed by Lappe (2007). Various factors may play a role in constraining the output of morphological processes,

and they do not always agree on what the output will be. In some instances, the output

will, indeed, be variable. Lappe cites, for example, variable infixation in Tagalog loan

words (Orgun and Sprouse 1999, who provide the examples in (21)).

(21)

gradwet    grumadwet ~ gumradwet    'to graduate'
plantsa    plumantsa ~ pumlantsa    'to iron'
preno      pumreno ~ prumeno        'to brake'

Thornton (2012) shows for Italian that even in inflectional morphology, cell-mates

(alternative forms representing the same grammatical word) may persist for centuries.

In English, too, we find occasional alternative outputs to morphological problems,

such as the co-existence of un-fucking-believable and unbe-fucking-lievable,8 orient

and orientate as the verb corresponding to orientation, or deduce and deduct as the

verb corresponding to deduction, and it may be fair to add at least some of the forms

in (18).9 We can also add the verbs of English that have alternative past tense and

past participle forms (sometimes in different varieties, but not always), forms that

show variable fricative-voicing before plural marking (path may have a plural /paːθs/

or /paːðz/), and the many other places where there is variability in morphological

realization of the same slot in some lexical paradigm. In a world where such forms are

not uniquely specified, the notion of possible word becomes rather more awkward

to deal with, and corpus-use brings us face to face, in a way that has not previously

been the case, with the notion that such outputs are variable.

8 Unfuckingremarkable is apparently (Google) less remarkable than unrefuckingmarkable, despite claims

in the literature that the placement of expletives in such words is largely prosodically determined.

9 The distinction between e.g. deduct and deduce as corresponding to deduction (in the Sherlock Holmes

sense of deduction, not the arithmetical one) is usually discussed as formation versus back-formation. This

assumes a model where speakers always go from the morphologically simpler to the morphologically more

complex when coining words. Speakers make a large number of what would normatively be called errors

in the stress patterns on verbs because they try to retain the stress pattern from a morphologically more

complex derivative in the morphologically simpler verb. That is, we hear forms like propagáte rather than

the expected própagate, presumably influenced by propagátion. At the very least this calls into question

the way in which real speakers operate.

There have, of course, been many ways of presenting variable material in grammars, and there seems little point in running through them or trying to evaluate

them against each other (see, for example, Labov 1972: Chap. 8; Skousen 1989;

Bender 2006; Albright 2009); there are also a number of publications which have

pointed to variable degrees of productivity in different (social or linguistic) environments (see, for example, Plag et al. 1999; Baayen 2009). The point here is not really

how to model variable behavior, but how to conceptualize the underlying categories

when we have variable outputs.

At the very least we need a way of stating the variable outputs of rules. Now we

face the problems that have always faced the notion of variable rule in sociolinguistics. If this is done simply by allowing two or more rules to specify different outcomes

from the same input, we are leaving a lot to the interpretation of the rules. If it is done

with some kind of formula we cannot necessarily predict the outcome on any given

occasion (in fact, given how little we know about the various factors influencing the

outcome, it might be safer to say that this is likely always to be true). Selecting forms

from among stored exemplars does not require a rule of the same kind at all, but

does not explain the same phenomena. Using stored exemplars to predict new forms,

possibly as opposed to actual words, begins with a denial that there is a single input

form. Rather multiple factors may be important (including the frequency of the bases

involved, degrees of phonological similarity with other bases, semantic content, pragmatic value). Among these other factors may be degree of productivity of the affixes

concerned. That is, part of the reason that we do not (or, probably more accurately,

rarely) find bluth as the nominalization from blue, is that -th is not productive (available) in English. Part of the reason we are unlikely to find a new word in -ment is that

it is of low productivity, even though it is not necessarily of low frequency (intendment in the BNC is listed as obsolete in the OED, and provides a rare instance of an

apparently innovative form using this suffix). If that is the case, productivity takes on

a whole new importance as being part of what indicates the degree of possibility of a

word.

The implication here is that the moment we move away from the standard notion

of rule with a defined input and output, and instead consider something that has an

aleatoric component in the creation of the output, the notion of a possible word is

changed. How important that change is may remain unclear. But it is certainly the

case that we cannot predict a unique output for all inputs, and this must influence our

notion of blocking, since a rule may permit multiple possible outputs. This suggests

that any view of blocking as pre-emption of a paradigm slot by a particular form must

be made more nuanced if it is to continue to have any credibility.

Such a change also has an impact on our view of productivity. Productivity no

longer provides a single appropriate output for any given morphological problem, but

a range of more or less likely outputs. To the extent that these outputs' likelihood can

be influenced by local context (the words in the preceding environment, for instance,

as seems to be the case with tainteress in (15)) productivity is probably undecidable.

Tainterer may be an entirely appropriate output as the agent noun corresponding

to the verb taint; it just so happens that it is one that comes relatively low on some

hierarchy of probabilities for potential words. If this is the case, then arguing from

the non-existence of particular morphological patterns becomes theoretically suspect

in its own right.
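The idea of a rule with several more or less likely outputs can be made concrete with a toy model. The sketch below is illustrative only: the candidate forms include tainter, which is not discussed in the text, and the weights are invented placeholders rather than estimates from any corpus. The point is simply that a low-ranked output is still a possible output.

```python
import random

# Hypothetical candidates and weights, invented purely for illustration;
# they are NOT estimates derived from COCA, the BNC or any other source.
AGENT_NOUN_CANDIDATES = {
    "tainter": 0.90,
    "taintress": 0.06,
    "tainteress": 0.03,
    "tainterer": 0.01,
}


def rank_outputs(candidates: dict[str, float]) -> list[tuple[str, float]]:
    """Order candidate outputs from most to least likely."""
    return sorted(candidates.items(), key=lambda item: item[1], reverse=True)


def coin(candidates: dict[str, float], rng: random.Random) -> str:
    """Draw one output form at random, in proportion to its weight."""
    forms = list(candidates)
    weights = list(candidates.values())
    return rng.choices(forms, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so that repeated runs give the same draws
for form, weight in rank_outputs(AGENT_NOUN_CANDIDATES):
    print(f"{form:12s} {weight:.2f}")
print("sampled coinages:", [coin(AGENT_NOUN_CANDIDATES, rng) for _ in range(5)])
```

Under such a model, the absence of a form from a sample says little about whether it is possible: a form with a small weight will simply be drawn rarely.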

5.2 Two examples

It might be easier to think about the problem and how to resolve it if we consider

some actual examples. Here I shall consider two, the example of nominal -th and the

example of negative prefixation which has already been introduced.

5.2.1 Nominal -th

It is one of the most accepted findings of studies of English word-formation that

suffixation of -th (as we find in words like warmth, truth, depth, and so on) is no

longer productive (neither profitable nor available; e.g. Plag 2003:44). On the other

hand, various sources point to the fact that coolth occurs from time to time, apparently

in defiance of the lack of productivity of the affix.

For people brought up in the generative tradition, the answer is simple. Coolth

is an established word, first noted in English in the sixteenth century (OED) and

available to speakers ever since, and present in the linguistic community though rarely

used. Even if occurrences in corpora are extremely rare, the argument would run, the

average speaker hears so much more than is present in any corpus, that most speakers

will have experience of the form and be aware of it. That is how it has survived.

Others argue that even -th, the posterboy of unproductive morphology, remains

marginally productive, and the occasional occurrences of coolth, like that from Elizabeth Peters in (22), cited from the OED, are instances of the productivity of the

affix.

(22)

Hear it we did, in the coolth of the evening, as twilight spread her violet veils

across the garden. [1991]

These two views are associated with rather different theoretical standpoints (or

potential theoretical standpoints). In the first view, we can talk of the production of

coolth as being made possible by a once-only rule for the speech community, and

coolth having become a property of that community, to be exploited by later users.

It is compatible with a process of affixation having become unavailable. The second

view is rather different (although what the real implications are may remain unclear).

Each speaker has an individual lexicon, which overlaps in large part with the lexica

of other speakers in the community. When the speaker coins a word (or the listener

hears a new word) it becomes part of the individual's lexicon, and may be put there

on the basis of a once-only rule which applies only to the output of the individual

speaker. Thus, many speakers may individually coin the same word, which may or

may not then become shared with the speech community as a whole.

These two views have very different implications for blocking (Aronoff 1976,

though see my earlier comments on this notion and Bauer et al. 2013). In the first

view, blocking may only affect the registration of a word as part of the established

vocabulary of a community; in the second view, blocking succeeds or fails at the

level of the individual, which may have implications for the community as a whole,

depending on how much the community ignores individuals' creations which are not

item-familiar.

Do we have any evidence on these two views? In this particular case, it seems to

me, the evidence is in favor of the first view. This is because only coolth seems to

turn up as a new or innovative use of -th. Bluth is in the Urban Dictionary

(http://www.urbandictionary.com/) but as a verb and a contraction of BlueTooth. Greenth

occurs a number of times in Google, but is also found in the OED from the eighteenth century. Gloomth, used today mainly as a trade name, is also an eighteenth

century creation. Bluth and Brownth and Lowth are found as proper names. Highth is

a Middle English word that is largely replaced by height, but has a few remnants in

dictionaries. Smellth and spoilth get a number of hits on Google, of which many are

intended as representations of third person singular verbs, and few are unambiguous

nominalizations. Cheapth, usually a proper noun or the result of a typographical error

on Google, gets a few uninterpretable hits where it appears to be an adjective, not a

noun. Given what I have said earlier, I cannot rule out the possibility that there is a

larger pattern here of genuine neologisms using -th, but I see no evidence for it. The

repetition of a few words which are continually recoined does not seem to count as

sufficient evidence for marginal productivity.

5.2.2 Negative prefixation

I shall ignore here the problem posed by the orthography <in> before a bilabial, which

was covered earlier. The larger questions are the apparent productivity of in-, and the

general pattern of apparently synonymous prefixes.

The main difficulty with the productivity of in- at the expense of un- is that each

apparent instance could easily be a typographical error, <i> for <u>, which are adjacent keys. At some

point, though, this excuse fails to hold. What we do not know is what that threshold

should be for accepting that there is a visible trend here. Renouf (2013) gives ample

evidence that well-established words may have an occurrence of 0.5 in a million

words of text. Here we would not necessarily expect the individual items to have

comparable frequency in texts if they are new (Renouf 2013), but we might want to

postulate a level of something like one occurrence of the pattern in a million words

of running text as a measure of productivity (though this will be reconsidered below).

In Sect. 4.2 I cited thirty examples of the pattern, but may not have found all of the

relevant examples. Thirty examples in a corpus which was, at the time the sample was

taken, 400m words of running text is well under one attestation per million words.

I do not believe that this rules out this pattern as a productive one, but I would suggest

that if these figures are at all accurate (and they can be verified by other researchers)

they are not yet sufficient to indicate a trend in English word-formation. They can

be no more than suggestive of trends to watch for.
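The arithmetic behind this comparison can be spelled out. The following is a minimal sketch, assuming the figures given in the text (thirty attested forms in a corpus of 400 million words) and the tentative threshold of one occurrence per million words; the function and constant names are illustrative only.

```python
def per_million(attestations: int, corpus_tokens: int) -> float:
    """Normalise a raw count to occurrences per million words of running text."""
    if corpus_tokens <= 0:
        raise ValueError("corpus_tokens must be positive")
    return attestations * 1_000_000 / corpus_tokens


# Tentative working threshold proposed in the text: one occurrence per million words.
THRESHOLD_PER_MILLION = 1.0

# Figures from the text: thirty examples, corpus of 400 million words.
rate = per_million(30, 400_000_000)

print(f"rate: {rate:.3f} per million words")
print("below threshold:", rate < THRESHOLD_PER_MILLION)
```

On these figures the pattern reaches under a tenth of the proposed threshold, which is why it can be treated as suggestive at most.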

The same cannot be claimed of the patterns with contrasting negative prefixes.

That so many of these are established in the lexicographical tradition as well as in the

corpus-based evidence seems to imply some stability to a pattern of synonymy. Of

course, in individual cases there may be other factors at play. It may be that untypical

is gradually replacing atypical as the default negative of typical (better evidence is required), and there has been a long normative attempt to distinguish between immoral

and amoral on semantic grounds, that is they are claimed not to be synonymous at all

(that is often difficult to confirm from the citations in the corpus). Nonetheless synonymy appears to be the rule, though we still lack good evidence on factors such as

register, age, ethnicity, etc. COCA examples of competing prefixes are given in (23).

(23)

works of the sort are generally viewed as inartistic, one-dimensional, tendentious, and, at the extreme, propagandistic.

Well, that's an unartistic idea about dancing. It's a plebeian, low-class idea.

My friends and I were the most unpolitical people in the world

Nobody is apolitical, but Rick is about as apolitical as a guy you can find [sic]

a perpetual damnation by some unappeasable figure of authority

inappeasable Society would have him, and had got him.

This is an ahistorical position that serves as a justification of a status quo

But a broader historical truthfulness mitigates such unhistorical contrivances.

Networks may be dishistorical, but they have a schematic shape

We're very unsimilar in the family ties. She hates children, I want 10.

girls and boys are deeply dissimilar creatures from day one.

Synonymy may or may not be a more general tendency across English, but at

least in this area it is more pervasive than one might expect, and there seems to be

sufficient evidence of coining in the face of synonymy that we cannot simply dismiss

it as some kind of peripheral phenomenon. This implies that contrasting affixes have

some degree of productivity in parallel contexts, which is to say that morphological

processes compete for the same space and that there may be more than one answer

for a particular slot.

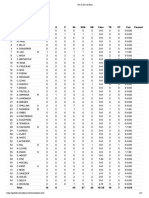

Using Baayen's measure of productivity, Baayen and Lieber (1991) report that

there is little difference between the productivity of un- and in-, giving values

of 0.0005 and 0.0004 respectively (compared with a figure of 0.0001 for simplex

adjectives; this compares with 0.005 for -ish, for instance). Intuitively, this is an

unexpected result, which they have some difficulty in explaining.
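For readers unfamiliar with the measure, the productivity index used by Baayen and Lieber (1991) is standardly given as P = n1 / N, where n1 is the number of types with the relevant affix that occur exactly once in the corpus (hapax legomena) and N is the total number of tokens with that affix. The sketch below assumes that definition; the token list is invented purely to show the calculation and has nothing to do with the values reported above.

```python
from collections import Counter


def productivity_p(tokens: list[str]) -> float:
    """Hapax-based productivity P = n1 / N for the tokens of one affix.

    n1 = number of types occurring exactly once; N = total number of tokens.
    """
    if not tokens:
        raise ValueError("need at least one token")
    counts = Counter(tokens)
    n1 = sum(1 for count in counts.values() if count == 1)
    return n1 / len(tokens)


# Invented toy sample of un- tokens (not corpus data): N = 10, two hapaxes.
toy_un_tokens = ["unhappy"] * 5 + ["unkind"] * 3 + ["unmodest", "unsimilar"]

print(f"P = {productivity_p(toy_un_tokens):.2f}")
```

Because N is in the denominator, an affix with many high-frequency established words can receive a low P even when it yields many hapaxes in absolute terms, which is one reason to look at raw hapax counts as well.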

A small experiment indicates the problem. Relevant adjectives in in- and un- which

occur just once in the BNC (100 million words of running text) were extracted, and

those in which the last level of word-formation did not involve the negative prefix

were deleted. The remaining words were checked in the COD (Pearsall 2002), and

those listed in the dictionary were excluded. The remaining words act as a proxy for

relevant neologisms in the BNC. (In fact, some of the items not in the COD were

item-familiar; but there will have been some words which were genuine neologisms

with more than one occurrence in the corpus.) The list of words in in- has 35 members (with an extra eight if all the other allomorphs of in- are included), while the list

of words in un- contains 1019 members. This represents a huge difference in usage

in potentially neologistic environments. The experimental method is, of course, not

without its problems (Would a larger corpus have found a more equal distribution,

though the BNC is larger than the corpus used by Baayen and Lieber? Is it true that

neologisms are likely to appear once only? How far can any corpus represent what

the native speaker is exposed to? Was the COD the best dictionary to choose for

this purpose?), but it suggests genuine differences in profitability, with in- not even

showing up as productive according to the one per million test I proposed above (see

also note 10 below). But if in- is to be counted as productive at all, it is much less

so than un- (on the figures here). My suggestion is that the reason it is less used in

neologisms is that there is a more frequent pattern of new or rare forms with un-,

which is thus more conceptually available to speakers. That is, the profitability of un-

is one of the factors in explaining that un- is more profitable than in-. Phrased less

circularly, the speaker's experience of the profitability of an affix in the immediate past

is one of the factors that a speaker uses in determining the outcome of competition

between morphological processes. That being the case, profitability is an input criterion in judging matters of productiveness, not just an output. The precise nature of the

mechanism whereby this works (assuming that it does hold) ought to be available

to experimental observation.
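The procedure of the small experiment described above (take the hapaxes, keep those in which the negative prefix is the last step of word-formation, discard anything listed in the dictionary) can be sketched as follows. This is an approximation under stated assumptions: the manual morphological check is represented by a caller-supplied predicate, and the token list, dictionary and hand annotation are tiny invented stand-ins for the BNC, the COD and the author's own judgements.

```python
from collections import Counter
from collections.abc import Callable, Iterable


def neologism_proxies(
    tokens: Iterable[str],
    prefixes: tuple[str, ...],
    dictionary: set[str],
    is_relevant: Callable[[str], bool],
) -> list[str]:
    """Hapaxes with one of `prefixes` that pass the manual check and are unlisted.

    `is_relevant` stands in for the manual step of deleting words in which the
    last level of word-formation does not involve the negative prefix.
    """
    counts = Counter(tokens)
    return sorted(
        word
        for word, count in counts.items()
        if count == 1
        and word.startswith(prefixes)
        and is_relevant(word)
        and word not in dictionary
    )


# Invented stand-ins for the corpus, the dictionary and the hand annotation.
toy_tokens = ["unhappy", "unhappy", "inadult", "unmodest", "indirect", "inside", "under"]
toy_dictionary = {"unhappy", "indirect", "inside", "under"}
hand_checked_negatives = {"unhappy", "inadult", "unmodest", "indirect"}

in_list = neologism_proxies(toy_tokens, ("in",), toy_dictionary, hand_checked_negatives.__contains__)
un_list = neologism_proxies(toy_tokens, ("un",), toy_dictionary, hand_checked_negatives.__contains__)
print(in_list, un_list)
```

Comparing the lengths of the two resulting lists gives the kind of raw contrast reported in the text; the caveats listed there (corpus size, the once-only assumption, choice of dictionary) apply equally to any implementation along these lines.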

Beyond that, however, the examples discussed above seem to indicate that even

marginal cases of profitability can be important in providing viable alternative possible words. However the example of -th is interpreted, some of the prefixes which

show up in negatives seem to be of very low profitability (the one per million level

suggested above as a working indicator of productivity now seems far too high to

be reasonable) and yet are available when the conditions demand them. I have come

very little closer to determining what those conditions might be, but have, I think,

indicated how difficult it is to determine what might be and what is not a potential

word.

5.2.3 Outcomes

We can summarize some of this and say that forms which could be typographical errors need a higher level of support than others; that with any new pattern, we need to

set a moderate threshold below which we will not consider a pattern (here the figure

of one occurrence of the pattern per million words of running text has been proposed,

but other figures could be, and have been, suggested10); that ideally any discussion

of the reality of productivity of patterns would have to take into consideration questions such as register, variety and diachronic development. Other factors are already

canvassed in the literature, e.g. words that occur only in headlines or in poetic diction should probably be discounted (Bauer 2001:57), or at least counted as being in a

register of their own. Words in overtly humorous contexts should probably be treated

circumspectly (see also above with reference to tainterer). Schultink (1961) wants

to exclude all words which are consciously formed, but not only does this seem too

restrictive, it is not operationalizable (Plag 1999:14; Bauer 2001:68).

6 Conclusion

The notion of actual word is a highly fraught one, although it seems absolutely basic

to any study of morphological productivity. When the evidence that is provided by

large corpora is brought to bear on the problem, the issues with the notion seem to

get worse rather than better. Attested may not imply acceptable; acceptable may not

imply grammatical; attested may or may not imply actual.

When we are considering word-formation, arguments from asterisks may be inadvisable from a practical point of view, but there is also evidence from variability

10 Baayen and Lieber have the frequency of new morphologically simplex words as the baseline, above

which things count as productive, which is a much better justified level and rather more inclusive than this

proposal, which is really just included to allow for the argument.

of morphological outcomes that they are likely to be theoretically inadequate. This

conclusion arises if we take a point of view that there is some statistical element

involved in determining the potentiality of an unfamiliar word. In other words, we

assume, along with a growing number of scholars, that word-formation rules do

not necessarily have a single possible output but may have several. Such a notion

raises questions about our traditional views of productivity, since we may no longer

be able to decide definitely in any given set of circumstances what is or is not a

possible word. Our view of productivity is also changed if we see the degree of productivity of any particular morphological process as being part of the input (one of

the conditioning factors) in a word-formation rule, and not simply the result of the

use of that word-formation rule. Apparently, even very low productivity levels have

an effect.

Clearly, conclusions like this should be controversial: they have many implications

for the form of the morphological component and the nature of morphology. Thus this

paper is intended to open up discussion in this area, and provoke responses about the

nature of productivity, the form of word-formation rules, and the nature of possible

words.

References

Albright, A. (2009). Modeling analogy as probabilistic grammar. In J. P. Blevins & J. Blevins (Eds.),

Analogy in grammar: form and acquisition (pp. 185–204). Oxford: Oxford University Press.

Aronoff, M. (1976). Word formation in generative grammar. Cambridge: MIT Press.

Baayen, R. H. (2009). Corpus linguistics in morphology: morphological productivity. In A. Luedeling

& M. Kytö (Eds.), Corpus linguistics: an international handbook (pp. 900–919). Berlin: Mouton

De Gruyter.

Baayen, H., & Lieber, R. (1991). Productivity and English derivation: a corpus-based study. Linguistics,

29, 801–843.

Bauer, L. (1983). English word-formation. Cambridge: Cambridge University Press.

Bauer, L. (1996). Is morphological productivity non-linguistic? Acta Linguistica Hungarica, 43, 19–31.

Bauer, L. (1998). When is a sequence of two nouns a compound in English? English Language and Linguistics, 2, 65–86.

Bauer, L. (2001). Morphological productivity. Cambridge: Cambridge University Press.

Bauer, L., Lieber, R., & Plag, I. (2013). The Oxford reference guide to English morphology. Oxford:

Oxford University Press.

Bender, E. M. (2006). Variation and formal theories of language: HPSG. In K. Brown (Ed.), Encyclopedia

of language and linguistics (2nd ed., Vol. 13, pp. 326–329). Oxford: Elsevier.

Britain, D. (2000). As far as analysing grammatical variation and change in New Zealand English with

relatively few tokens <is concerned/Ø>. In A. Bell & K. Kuiper (Eds.), Focus on New Zealand English

(pp. 198–220). Amsterdam: Benjamins.

Carnie, A. (2007). Syntax (2nd ed.). Malden: Blackwell.

Carstairs-McCarthy, A. (1992). Current morphology. London: Routledge.

Chomsky, N. (1957). Syntactic structures. Paris: Mouton.

Chomsky, N. (1965). Aspects of the theory of syntax. Cambridge: MIT Press.

Chomsky, N. (1975). The logical structure of linguistic theory. New York: Plenum.

Corbin, D. (1987). Morphologie dérivationnelle et structuration du lexique (Vols. 1, 2). Tübingen:

Niemeyer.

Davies, M. (2008). The Corpus of Contemporary American English (COCA): 400+ million words, 1990–present. http://www.americancorpus.org/.

Di Sciullo, A. M., & Williams, E. (1987). On the definition of word. Cambridge: MIT Press.

Dressler, W. U., Libben, G., Stark, J., Pons, C., & Jarema, G. (2001). The processing of interfixed German

compounds. In Yearbook of morphology 1999 (pp. 185–220).

Fabb, N. (1988). English suffixation is constrained only by selectional restrictions. Natural Language &

Linguistic Theory, 6, 527–539.

Fleischer, W., & Barz, I. (2007). Wortbildung der deutschen Gegenwartssprache. Tübingen: Niemeyer.

Fromkin, V., Blair, D., & Collins, P. (1999). An introduction to language (4th Australian ed.). Sydney:

Harcourt.

Halle, M. (1973). Prolegomena to a theory of word formation. Linguistic Inquiry, 4, 3–16.

Harris, Z. S. (1951). Structural linguistics. Chicago: University of Chicago Press.

Hay, J. (2003). Causes and consequences of word structure. New York: Routledge.

Hundt, M. (2013). Error, feature, or (incipient) change? In Englishes today conference, Vigo, 18–19 October 2013.

Jackendoff, R. (1975). Morphological and semantic regularities in the lexicon. Language, 51, 639–671.

Jensen, J. T. (1990). Morphology. Amsterdam: Benjamins.

Jespersen, O. (1917). Negation in English and other languages. Copenhagen: Bianco Lunos Bogtrykkeri.

Koefoed, G. A. T. (1992). Analogie is geen taalverandering. Forum der Letteren, 33, 11–17.

Krott, A. (2001). Analogy in morphology. Doctoral dissertation, Katholieke Universiteit Nijmegen.

Krott, A., Schreuder, R., & Baayen, R. H. (2002). Linking elements in Dutch noun-noun compounds:

constituent families as analogical predictors for response latencies. Brain and Language, 81, 723–735.

Krott, A., Schreuder, R., Baayen, R. H., & Dressler, W. U. (2007). Analogical effects on linking elements

in German compounds. Language and Cognitive Processes, 22, 25–57.

Labov, W. (1972). Sociolinguistic patterns. Philadelphia: University of Pennsylvania Press.

Lappe, S. (2007). English prosodic morphology. Dordrecht: Springer.

Ljung, M. (1970). English denominal adjectives. Lund: Acta Universitatis Gothoburgensis.

Lyons, J. (1991). Chomsky (3rd ed.). London: Fontana.

Marchand, H. (1969). The categories and types of present-day English word-formation (2nd ed.). Munich:

Beck.

Matthews, P. H. (1979). Generative grammar and linguistic competence. London: Allen & Unwin.

Miller, D. G. (1993). Complex verb formation. Amsterdam: Benjamins.

Miyagawa, S. (1999). Causatives. In N. Tsujimura (Ed.), The handbook of Japanese linguistics

(pp. 236–268). Malden: Blackwell.

Neef, M. (2009). IE, Germanic German. In R. Lieber & P. Štekauer (Eds.), The Oxford handbook of

compounding (pp. 386–399). Oxford: Oxford University Press.

Orgun, C. O., & Sprouse, R. L. (1999). From MParse to control: deriving ungrammaticality. Phonology,

16, 191–224.

Payne, J., & Huddleston, R. (2002). Nouns and noun phrases. In R. Huddleston & G. K. Pullum (Eds.),

The Cambridge grammar of the English language (pp. 323–523). Cambridge: Cambridge University

Press.

Pearsall, J. (Ed.) (2002). The concise Oxford dictionary (10th ed., revised). Oxford: Oxford University

Press.

Plag, I. (1999). Morphological productivity. Berlin: Mouton de Gruyter.

Plag, I. (2003). Word-formation in English. Cambridge: Cambridge University Press.

Plag, I., Dalton-Puffer, C., & Baayen, H. (1999). Morphological productivity across speech and writing.

English Language and Linguistics, 3, 209–228.

Radford, A., Felser, C., & Boxell, O. (2012). Preposition copying and pruning in present-day English.

English Language and Linguistics, 16, 403–426.

Rainer, F. (1988). Towards a theory of blocking: the case of Italian and German quality nouns. In Yearbook

of Morphology (pp. 155–185).

Renouf, A. (2013). A finer definition of neology in English: the life-cycle of a word. In H. Hasselgård, J.

Ebeling, & S. O. Ebeling (Eds.), Corpus perspectives on patterns of lexis (pp. 177–207). Amsterdam:

Benjamins.

Skousen, R. (1989). Analogical modeling of language. Dordrecht: Kluwer Academic.

The British National Corpus, version 3 (BNC XML Edition) (2007). Distributed by Oxford University

Computing Services on behalf of the BNC Consortium. http://www.natcorp.ox.ac.uk/.

Thornton, A. M. (2012). Reduction and maintenance of overabundance: a case study on Italian verb

paradigms. Word Structure, 5, 183–207.

Zimmer, K. E. (1964). Affixal negation in English and other languages: an investigation of restricted

productivity. Word, 20, 21–45.

Zwicky, A. M. (1969). Phonological constraints in syntactic descriptions. Papers in Linguistics, 1, 411–463.
