
RESEARCH IN CLOUD COMPUTING

Andrew Weiss

Michael Bluvshteyn

A Look into Cloud Infrastructure Technology

This paper aims to give IT professionals additional insight into cloud computing platforms and service models. The research focuses on contrasting the most popular closed-source platform, VMware, with OpenStack, an open-source platform that is becoming a preferred choice for infrastructure cloud deployments.

Research in Cloud Computing

CONTENTS

ABOUT THE AUTHORS
WHO IS THIS PAPER GEARED TOWARDS?
HOW HAS THIS PAPER BEEN ORGANIZED?
A BACKGROUND IN CLOUD COMPUTING
THE PROBLEM AT HAND
RESEARCH METHODOLOGY
WHAT IS OPENSTACK
LOGICAL PLATFORM SEGMENTATION
BUILDING AN OPENSTACK ENVIRONMENT
TROUBLESHOOTING OPENSTACK
WHAT IS VMWARE
TYPICAL DEPLOYMENT SCENARIO
BUILDING THE VMWARE ENVIRONMENT
CLOUD SCALABILITY
CLOUD MANAGEABILITY
CLOUD INTEROPERABILITY
IS THE CLOUD A VIABLE SOLUTION?
WHICH SOLUTION IS BETTER FOR THE GIVEN SCENARIO?
CONCLUSIONS DRAWN FROM THIS RESEARCH
WHERE TO FROM HERE?
GLOSSARY
REFERENCES


ABOUT THE AUTHORS

Andrew Weiss

Andrew is currently finishing his undergraduate degree in Computer and Information Technology at Purdue University with a concentration in network engineering. As an active participant in the IT community, he is well aware of the challenges and trends IT professionals face. His strong interest in technology has helped him gain knowledge in topics that include cloud computing, systems administration, and social media strategy. Beginning in the summer of 2012, he will work as a consultant for Microsoft, providing enterprise-level clients with cloud and infrastructure expertise.

Michael Bluvshteyn

Michael is currently an undergraduate student at Purdue University finishing his B.S. in Computer and Information Technology with a concentration in network engineering. He has always been interested in virtualization and cloud computing. He believes that cloud computing can help organizations of all sizes achieve more with less while reducing the cost and environmental footprint of IT.

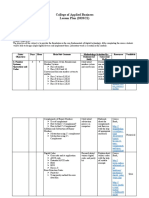

WHO IS THIS PAPER GEARED TOWARDS?

This paper has been written for those interested in learning more about cloud technologies and how they are evolving. CIOs, IT administrators, and project managers looking for ways to adopt the cloud in their own organizations can benefit from this research. While this paper is not a step-by-step guide to deploying OpenStack, it does provide a general outline of the platform's various components. Technical jargon has been kept to a minimum so that both technical and non-technical readers can be comfortable with the concepts explored in this report.

HOW HAS THIS PAPER BEEN ORGANIZED?

The paper begins by introducing cloud computing and the research methodology followed. It then explains both OpenStack and VMware and describes how each was deployed. The section describing the research findings covers both technologies, along with any overlap discovered between them.


A LOOK INTO CLOUD INFRASTRUCTURE TECHNOLOGY

A BACKGROUND IN CLOUD COMPUTING

Cloud computing encompasses a broad spectrum of technologies, resources, and services. Companies around the world are looking to the cloud to provide infrastructure stability for their businesses. According to a recent article published in Network World, 2012 alone is expected to see tremendous growth in cloud activity (Henderson, 2012). Service models that include infrastructure-as-a-service (IaaS), platform-as-a-service (PaaS), and software-as-a-service (SaaS) will be expanded to offer new management and security options. The number of mobile devices in the workplace has also been increasing rapidly, resulting in additional cloud-based usage. The rapid onset of new cloud technologies brings a need for knowledge in the areas of deployment and implementation.

Back to the basics

What really is cloud computing? Why has the demand for cloud deployment skyrocketed over the last few years? To answer these questions, it is important to go back to the basics, to the idea of a mainframe. Think of the cloud as a massive mainframe computer capable of processing hundreds of thousands of transactions based on various inputs and outputs. End-users connect to this mainframe via a fabric of internetworks that converge on one or more central locations. Their data and applications are stored in a central repository that can be accessed from anywhere network connectivity can be established.

The cloud is really no different: users can access various services maintained and delivered by a web of computing devices. These devices interact with each other to provide platforms to work from, deliver applications, and supply functional infrastructure. While this web of devices could be in one physical location, high-speed networks allow most clouds to be spread across multiple geographic locations.

THE PROBLEM AT HAND

When describing the cloud, the word "broad" is an understatement. Information technology advocates debate various meanings of the term "cloud computing." While it has been officially defined by the National Institute of Standards and Technology (NIST), there is still a wealth of knowledge yet to be discovered. Organizations are beginning to ask the question, "What can the cloud do for us?" Since there is no single right answer, it is important to provide information on technologies that can be leveraged to harness the cloud. Concerns about manageability and security continue to circulate throughout the community. This research will dive further into different cloud solutions that aim to address these concerns.


RESEARCH METHODOLOGY

In order to examine the issues discussed in more detail, it is important to follow a methodology that resembles

common cloud computing deployment scenarios. To gather the appropriate data, a fully functional cloud

environment is required.

Overview

Typical enterprise IT architectures consist of multi-site data centers that contain core operating infrastructure components. Large corporations use multiple, geographically dispersed data centers for redundancy and disaster recovery. This research will attempt to emulate these data centers by creating multiple server nodes in a laboratory environment. Nodes will be interconnected via simulated wide area network (WAN) links.

The Scenario

The study will recreate the IT environment of a medium-sized business. The business is large enough to have IT professionals dedicated to specific fields and an IT department with both developers and systems engineers. The business's primary systems are Microsoft-based (e.g., Active Directory, Exchange). Some core systems run Linux/Unix (e.g., Oracle, web services), and in-house expertise is used to run both platforms. The company has three primary sites: its corporate office in Chicago, IL, and engineering/sales offices in Los Angeles and New York. The IT department resides in Chicago; however, both sales offices have virtualization nodes for redundancy and for handling local requests. All remote users and smaller remote sites connect directly to the corporate office for all IT services.
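This paper does not state how the simulated WAN links between sites were built. One common approach on Linux, offered here only as an assumption and not as the method used in this research, is the netem queueing discipline configured through the `tc` utility. The sketch below generates such commands for the Chicago links in the scenario; the interface names and the delay, jitter, and loss figures are hypothetical placeholders, not measurements.

```python
import shlex

# Hypothetical WAN characteristics for the links from the Chicago corporate
# office to the two remote offices. Interface names and impairment values
# are illustrative assumptions only, not measurements from this research.
WAN_LINKS = {
    ("Chicago", "New York"): {
        "iface": "eth1", "delay_ms": 20, "jitter_ms": 2, "loss_pct": 0.1,
    },
    ("Chicago", "Los Angeles"): {
        "iface": "eth2", "delay_ms": 50, "jitter_ms": 5, "loss_pct": 0.1,
    },
}


def netem_command(iface: str, delay_ms: int, jitter_ms: int, loss_pct: float) -> str:
    """Return a `tc` command that attaches a netem qdisc to one interface.

    netem impairs egress traffic only, so each direction of a link is
    normally shaped on the interface that sends it.
    """
    args = [
        "tc", "qdisc", "add", "dev", iface, "root", "netem",
        "delay", f"{delay_ms}ms", f"{jitter_ms}ms",
        "loss", f"{loss_pct}%",
    ]
    return shlex.join(args)


if __name__ == "__main__":
    for (site_a, site_b), link in WAN_LINKS.items():
        print(f"# {site_a} <-> {site_b}")
        print(netem_command(**link))
```

The printed commands would be run as root on the host routing between sites; `tc qdisc del dev <iface> root` removes the emulation afterward.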

The Objective

The study aims to answer five main questions.

- Is the cloud a viable solution?
- Which virtualization solution is better for the given scenario?
- What are the scalability concerns?
- What are the interoperability concerns?
- What are the manageability requirements?

Is the cloud a viable solution?

With all of the hype surrounding cloud computing, it is easy to assume that it is a solution for every

environment. The main goal of this section is to decide whether or not cloud computing is worthwhile for the

business depicted in the scenario.

What are the scalability concerns?

Organizations need the ability to scale their IT resources to support future growth. This research aims to learn more about how each platform accomplishes this. While the research environment is limited to a small-scale deployment, the concepts explored can be applied to larger cloud deployments.


What are the interoperability concerns?

Interoperability is key to providing a dynamic and robust environment. This research will look at the benefits and difficulties of using both products with third-party solutions.

What are the manageability requirements?

Administrators want to be able to manage their environments with minimal maintenance and troubleshooting. This research examines the management capabilities that OpenStack and VMware offer and explains how easy or difficult each platform is to manage.

Which cloud solution is better for the scenario?

In this study, the focus is primarily on two cloud solutions, VMware and OpenStack. This section will detail

which solution (if any) is better suited for the company depicted in the scenario. Categories will include cost,

performance, ease of implementation, and support.

A Dive into the OpenStack Cloud

Andrew Weiss

WHAT IS OPENSTACK

Overview

OpenStack is an open-source cloud computing platform originally developed jointly by the hosting company Rackspace and NASA. The project allows organizations to deploy cloud computing services within their existing infrastructure. Scalability and elasticity are the two primary goals of the OpenStack project. Since its first release in the fall of 2010, OpenStack has grown considerably, with over 100 corporations participating at the time of this writing.

History of OpenStack

In October 2010, the initial release of OpenStack (code-name Austin) was made available to the public. Since then, OpenStack has come a long way in both its capabilities and its community support. The two original projects were OpenStack Compute and OpenStack Object Storage. The Bexar release in February 2011 introduced the OpenStack Image Service project. In April 2011, OpenStack's Cactus release was introduced with improvements to all three projects. The latest stable release of OpenStack is Diablo, released in September 2011.

Two projects that have recently left incubation, OpenStack Identity and OpenStack Dashboard, will be included in the next official release of OpenStack, code-name Essex, which is due in April 2012. Both components were at Release Candidate status at the time of this writing and were included in the research.

OpenStack Projects

Currently, OpenStack is made up of five foundational projects: OpenStack Compute (code-name Nova),

OpenStack Object Storage (code-name Swift), OpenStack Image Service (code-name Glance),

OpenStack Identity (code-name Keystone), and OpenStack Dashboard (code-name Horizon).


FIGURE 1 - OPENSTACK COMPONENTS

Each component of OpenStack is designed to provide specific functionality that revolves around the following

key elements:

- Virtual machine provisioning (Compute)
- Hypervisor management (Compute)
- Network management (Compute)
- Virtual machine image management (Image Service)
- Storage (Object Storage)
- Authentication (Identity)
- User interface (Dashboard)


OpenStack Compute (code-name Nova)

OpenStack Compute represents the critical services required for creating and maintaining a cloud. It is responsible for provisioning networks, managing hypervisors, and managing virtual machine instances. Compute, more commonly known as Nova, has been divided into a set of APIs for both internal communication between services and external communication with other projects such as OpenStack Image Service. This API is referred to as the Nova API, and its inner workings are shown in Figure 2 below.

FIGURE 2 - NOVA API DIAGRAM
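To give a feel for what talking to the Nova API involves, the sketch below builds (without sending) a request that lists a tenant's instances. It assumes the Compute API's v2 `GET /servers` call, the conventional nova-api port 8774, and a token and tenant-scoped endpoint already obtained from Keystone; the address, tenant ID, and token are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical values; in a real deployment both come from Keystone.
COMPUTE_ENDPOINT = "http://192.168.10.1:8774/v2/TENANT_ID"
AUTH_TOKEN = "EXAMPLE_TOKEN"


def list_servers_request(endpoint: str, token: str) -> urllib.request.Request:
    """Build, but do not send, a Compute API request that lists instances."""
    return urllib.request.Request(
        url=f"{endpoint.rstrip('/')}/servers",
        headers={"X-Auth-Token": token, "Accept": "application/json"},
        method="GET",
    )


def server_names(response_body: bytes) -> list[str]:
    """Extract instance names from a `GET /servers` JSON response body."""
    return [server["name"] for server in json.loads(response_body)["servers"]]


if __name__ == "__main__":
    request = list_servers_request(COMPUTE_ENDPOINT, AUTH_TOKEN)
    print(request.get_method(), request.full_url)
    # Against a live cloud, the request would be sent with:
    # with urllib.request.urlopen(request) as response:
    #     print(server_names(response.read()))
```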

OpenStack Image Service (code-name Glance)

OpenStack Image Service is responsible for managing pre-created virtual machine images. Administrators typically customize operating system images for their specific needs and then use Glance to upload these images to an image store. This store can be either a simple local file store or an OpenStack Object Storage container (see OpenStack Object Storage (code-name Swift)), while the metadata describing each image is kept in Glance's registry database. As with Nova, Glance is made up of its own APIs that are responsible for working with other OpenStack services. A detailed diagram highlighting Glance's APIs is shown below (Figure 3).


FIGURE 3 - GLANCE API DIAGRAM
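As an illustration of the upload step, the sketch below builds a request against the Glance v1 API, which accepted the raw image bytes in a `POST /v1/images` call with the image's metadata carried in `x-image-meta-*` headers. The endpoint (9292 is Glance's conventional API port), token, image name, and formats are assumptions chosen for the example.

```python
import urllib.request

GLANCE_ENDPOINT = "http://192.168.10.1:9292"  # hypothetical controller address


def upload_image_request(endpoint: str, token: str, name: str, image: bytes,
                         disk_format: str = "qcow2",
                         container_format: str = "bare",
                         public: bool = False) -> urllib.request.Request:
    """Build, but do not send, a Glance v1 image upload request."""
    headers = {
        "X-Auth-Token": token,
        "Content-Type": "application/octet-stream",
        # Image metadata travels in headers; the body is the image itself.
        "x-image-meta-name": name,
        "x-image-meta-disk-format": disk_format,
        "x-image-meta-container-format": container_format,
        "x-image-meta-is-public": "true" if public else "false",
    }
    return urllib.request.Request(
        url=f"{endpoint.rstrip('/')}/v1/images",
        data=image,
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # A few placeholder bytes stand in for a customized OS image file.
    request = upload_image_request(GLANCE_ENDPOINT, "EXAMPLE_TOKEN",
                                   "ubuntu-12.04-custom", b"\x00" * 16)
    print(request.get_method(), request.full_url)
```

A real image is often several gigabytes, so in practice it would be streamed from disk rather than held in memory as it is here.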

OpenStack Object Storage (code-name Swift)

OpenStack Object Storage, also referred to as Swift, is designed to provide massive data storage capabilities. Its main purpose is to deliver scalable data services with failover capabilities. One of the main advantages of Swift is its ability to eliminate the numerous complex layers of storage technologies and replace them with a simple concept: storing data in the form of objects. These objects are placed into containers, which are similar to folders in a Windows or Linux OS. Swift also has its own built-in authentication system for deployments that do not require centralized authentication. The APIs that make up Swift are shown in the diagram below (Figure 4):


FIGURE 4 - SWIFT API DIAGRAM
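Swift's object and container model maps directly onto URLs. In the Swift API, an account's storage URL (returned at authentication time) is followed by a container name and then an object name, and a `PUT` to those paths creates a container or stores an object. The sketch below builds such requests; the proxy address, account name, and token are hypothetical, and port 8080 is simply a common choice for the Swift proxy.

```python
import urllib.parse
import urllib.request

STORAGE_URL = "http://192.168.10.3:8080/v1/AUTH_demo"  # hypothetical account URL


def container_url(storage_url: str, container: str) -> str:
    """URL of a container; container names may not contain "/"."""
    return f"{storage_url.rstrip('/')}/{urllib.parse.quote(container, safe='')}"


def object_url(storage_url: str, container: str, obj: str) -> str:
    """URL of an object; "/" is kept because clients use it as pseudo-folders."""
    return f"{container_url(storage_url, container)}/{urllib.parse.quote(obj)}"


def create_container_request(storage_url: str, token: str,
                             container: str) -> urllib.request.Request:
    """Build, but do not send, a request that creates a container."""
    return urllib.request.Request(container_url(storage_url, container),
                                  headers={"X-Auth-Token": token}, method="PUT")


def put_object_request(storage_url: str, token: str, container: str, obj: str,
                       data: bytes) -> urllib.request.Request:
    """Build, but do not send, a request that stores one object."""
    return urllib.request.Request(
        object_url(storage_url, container, obj),
        data=data,
        headers={"X-Auth-Token": token, "Content-Type": "application/octet-stream"},
        method="PUT",
    )


if __name__ == "__main__":
    print(object_url(STORAGE_URL, "backups", "2012/march report.txt"))
```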

OpenStack Identity (code-name Keystone)

One of the new features included in the upcoming release of OpenStack is the Identity authentication service. Keystone provides a way for each project to authenticate when making requests. Authentication calls are made whenever requests are passed from users to services and from services to other services. A token is passed with each such request so that its validity can be checked. Keystone can separate administrators from standard users. It also groups resources into tenants, which are then assigned to the appropriate user(s). OpenStack Keystone is often used to let users log in to a management dashboard (see the OpenStack Dashboard section). The APIs that make up Keystone are shown in Figure 5 below:

FIGURE 5 - KEYSTONE API DIAGRAM
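The token exchange can be sketched against Keystone's v2.0 API, the version current for the Essex release: a client posts its credentials and tenant name to `/v2.0/tokens` and receives a token ID plus a service catalog of endpoint URLs. The token is then sent in the `X-Auth-Token` header of later requests to other services. The Keystone address (5000 is its conventional public port), the credentials, and the trimmed sample response are hypothetical.

```python
import json
import urllib.request

KEYSTONE_URL = "http://192.168.10.1:5000"  # hypothetical controller address


def token_request(keystone_url: str, username: str, password: str,
                  tenant_name: str) -> urllib.request.Request:
    """Build, but do not send, a Keystone v2.0 password authentication request."""
    body = {
        "auth": {
            "tenantName": tenant_name,
            "passwordCredentials": {"username": username, "password": password},
        }
    }
    return urllib.request.Request(
        url=f"{keystone_url.rstrip('/')}/v2.0/tokens",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )


def parse_access(response_body: bytes) -> tuple[str, dict[str, str]]:
    """Return the token ID and a {service type: public URL} map."""
    access = json.loads(response_body)["access"]
    endpoints = {
        service["type"]: service["endpoints"][0]["publicURL"]
        for service in access.get("serviceCatalog", [])
        if service.get("endpoints")
    }
    return access["token"]["id"], endpoints


# Trimmed, hypothetical example of a v2.0 token response.
SAMPLE_RESPONSE = json.dumps({
    "access": {
        "token": {"id": "EXAMPLE_TOKEN", "tenant": {"name": "demo"}},
        "serviceCatalog": [
            {"type": "compute", "name": "nova",
             "endpoints": [{"publicURL": "http://192.168.10.1:8774/v2/TENANT_ID"}]},
            {"type": "image", "name": "glance",
             "endpoints": [{"publicURL": "http://192.168.10.1:9292/v1"}]},
        ],
    }
}).encode("utf-8")

if __name__ == "__main__":
    token, endpoints = parse_access(SAMPLE_RESPONSE)
    print(token, endpoints["compute"])
```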


OpenStack Dashboard (code-name Horizon)

The OpenStack Dashboard provides a graphical user interface from which administrators can manage their OpenStack cloud. Much of OpenStack's command-line functionality has been incorporated into an easy-to-use management console. Horizon is built on Django, the open-source Python web framework, which allows companies to customize the dashboard to suit their needs.

FIGURE 6 - OPENSTACK DASHBOARD LOGIN

OpenStack Logical Architecture

When the API diagrams shown above are combined, the entire OpenStack cloud can be visualized from the ground up (Figure 7).


FIGURE 7 - OPENSTACK LOGICAL ARCHITECTURE

Development Framework

OpenStack has been built primarily in Python, which has allowed the design and development of a highly scalable cloud technology with minimal resource consumption. The project is also open to contributions from anyone interested in the platform. With freely available APIs, companies have been developing their own OpenStack-based solutions.


LOGICAL PLATFORM SEGMENTATION

While OpenStack has been designed for a multitude of deployment scenarios, the most popular method has

been to separate the projects into three distinct segments:

- Cloud controller
- Compute node(s)
- Storage node(s)

In the planning process, it is necessary to determine how these projects will be distributed, both logically and physically. This depends on the environment in which OpenStack will be used. Smaller organizations may not require the high availability and redundancy that larger enterprises with data centers need. As a result, the amount of hardware required for each segment will vary.

The Cloud Controller

The cloud controller contains the core services necessary to manage an OpenStack cloud. These services

typically include: Compute, Image Service, Identity, and Dashboard.
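The three-segment layout lends itself to being written down as a simple role plan before any software is installed. The sketch below records which services run on which node and checks that nothing required has been left unassigned. The service labels, and the placement of the Swift proxy on the storage node, are assumptions for illustration; running the compute service on the cloud controller mirrors the setup used in this research.

```python
# Hypothetical role plan for the three logical segments.
PLAN: dict[str, set[str]] = {
    "cloud-controller": {
        "mysql", "keystone", "nova-api", "nova-scheduler", "nova-network",
        "nova-compute", "glance", "horizon",
    },
    "compute-node": {"nova-compute", "nova-network"},
    "storage-node": {"swift-proxy", "swift-storage"},
}

# Services the cloud cannot operate without (illustrative list).
REQUIRED = {
    "mysql", "keystone", "nova-api", "nova-scheduler", "nova-compute",
    "glance", "horizon", "swift-proxy", "swift-storage",
}


def unassigned(plan: dict[str, set[str]], required: set[str]) -> set[str]:
    """Return required services that no node in the plan runs."""
    assigned: set[str] = set().union(*plan.values())
    return required - assigned


def hosts_running(plan: dict[str, set[str]], service: str) -> list[str]:
    """Return the sorted names of nodes that run a given service."""
    return sorted(host for host, services in plan.items() if service in services)


if __name__ == "__main__":
    print("unassigned:", unassigned(PLAN, REQUIRED) or "none")
    print("nova-compute runs on:", hosts_running(PLAN, "nova-compute"))
```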

Compute Node(s)

Each virtual machine host is also known as a compute node. Compute nodes run the Nova Compute service and share identical configurations. OpenStack has a built-in compute scheduler that allows the administrator to control how virtual machine instances are allocated across hosts. For the purposes of this research, simple scheduling was configured, which load-balanced instances across hosts based on resource consumption. The cloud controller also had the compute service installed, allowing instances to be provisioned across multiple hosts.
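The idea behind this kind of load-based placement can be sketched in a few lines: filter out hosts that cannot fit the request, then place the instance on the least-loaded remaining host. This is a generic illustration, not Nova's scheduler code; the host capacities and the tie-breaking rule (fewest used vCPUs, then most free memory) are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    total_vcpus: int
    total_ram_mb: int
    used_vcpus: int = 0
    used_ram_mb: int = 0

    def fits(self, vcpus: int, ram_mb: int) -> bool:
        """True if the host still has room for the requested resources."""
        return (self.used_vcpus + vcpus <= self.total_vcpus
                and self.used_ram_mb + ram_mb <= self.total_ram_mb)


def schedule(hosts: list[Host], vcpus: int, ram_mb: int) -> str:
    """Place one instance on the least-loaded host that can fit it."""
    candidates = [h for h in hosts if h.fits(vcpus, ram_mb)]
    if not candidates:
        raise RuntimeError("no host has enough free capacity")
    # Fewest used vCPUs first; ties go to the host with the most free RAM,
    # then to the alphabetically first name so the result is deterministic.
    best = min(candidates,
               key=lambda h: (h.used_vcpus, -(h.total_ram_mb - h.used_ram_mb), h.name))
    best.used_vcpus += vcpus
    best.used_ram_mb += ram_mb
    return best.name


if __name__ == "__main__":
    # Hypothetical capacities for the two hosts that ran the compute service.
    hosts = [Host("cloud-controller", total_vcpus=4, total_ram_mb=2048),
             Host("compute-node", total_vcpus=4, total_ram_mb=4096)]
    print([schedule(hosts, vcpus=1, ram_mb=512) for _ in range(3)])
```

With these capacities the three placements alternate between hosts, starting with the node that has more free memory.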

Storage Node(s)

Storage nodes run the required Swift storage services and are kept synchronized by Swift's replication processes. When multiple storage nodes are used, a reverse proxy server directs storage requests to the appropriate nodes. A multi-node environment allows for highly available data deployments.
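In Swift, the proxy decides which storage nodes hold an object by consulting a "ring": the object's path is hashed with MD5, the top bits of the hash select a partition, and a precomputed table maps each partition to the nodes holding its replicas. The sketch below shows that idea in simplified form. It omits the real ring's per-cluster hash salt, device weights, and zone-aware replica placement; the partition power, node names, and round-robin assignment are assumptions. With the single storage node used in this research, every partition would simply map to that one node.

```python
import hashlib

PART_POWER = 8  # 2**8 = 256 partitions (illustrative; production rings use more)


def partition_for(account: str, container: str, obj: str,
                  part_power: int = PART_POWER) -> int:
    """Map an object path to a partition using the top bits of its MD5 hash."""
    path = f"/{account}/{container}/{obj}".encode("utf-8")
    first_word = int.from_bytes(hashlib.md5(path).digest()[:4], "big")
    return first_word >> (32 - part_power)


def build_partition_map(nodes: list[str], replicas: int,
                        part_power: int = PART_POWER) -> list[list[str]]:
    """Assign each partition to `replicas` distinct nodes, round-robin."""
    if replicas > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return [[nodes[(p + r) % len(nodes)] for r in range(replicas)]
            for p in range(2 ** part_power)]


def nodes_for(partition_map: list[list[str]], account: str, container: str,
              obj: str, part_power: int = PART_POWER) -> list[str]:
    """Return the nodes that should hold a given object."""
    return partition_map[partition_for(account, container, obj, part_power)]


if __name__ == "__main__":
    # Hypothetical three-node cluster keeping two replicas of every object.
    ring = build_partition_map(["storage1", "storage2", "storage3"], replicas=2)
    print(nodes_for(ring, "AUTH_demo", "backups", "2012/march.tar"))
```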

BUILDING AN OPENSTACK ENVIRONMENT

The first requirement for conducting this research was to design and build a functional OpenStack testing environment. Considerable time was initially spent learning as much as possible about the platform and its deployment requirements. Since this is not meant to be a step-by-step deployment guide, only a simplified overview of how OpenStack is commonly deployed has been included. Those interested in detailed instructions can refer to a separate deployment guide entitled "OpenStack Deployment - A Step-by-Step Essex Install Guide".

Knowledge area requirements

Since OpenStack is an open source platform, one must have a solid level of understanding of various systems

and software. The ability to troubleshoot and debug is critical when dealing with open source software.

While OpenStack is a stable platform, it is essential that the deployment be conducted in a controlled

environment. Testing should be completed in a development build prior to deploying for production purposes.


Below is a set of required technical knowledge items that administrators should possess prior to building

OpenStack in their own environments.

- Linux operating system fundamentals
- SQL
- Python fundamentals
- Shell scripting
- Networking fundamentals
- KVM virtualization

Hardware requirements

As outlined in the Research Methodology section, it was important to emulate a production enterprise environment as closely as possible. OpenStack requires relatively few resources, so there was no need for expensive hardware. Many of the OpenStack components could run simultaneously on the same server, which allowed additional consolidation of equipment.

The environment was separated into the three logical components previously described: the cloud controller, compute node, and storage node. The specifications of the hardware used are as follows:

Cloud controller specifications

- Dell OptiPlex 745
- Intel Core 2 Duo Processor with VT support
- Two Gigabit Ethernet network interface cards (onboard and PCI-E add-in card)
- 4GB of memory
- 80GB internal hard drive

Compute node specifications

- Dell OptiPlex 745
- Intel Core 2 Duo Processor with VT support
- Two Gigabit Ethernet network interface cards (onboard and PCI-E add-in card)
- 6GB of memory
- 80GB internal hard drive

Storage node specifications

- Dell OptiPlex 745
- Intel Core 2 Duo Processor
- Two Gigabit Ethernet network interface cards (onboard and PCI-E add-in card)
- 2GB of memory
- Two 80GB internal hard drives

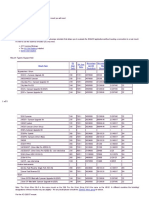

Networking requirements

A proper network implementation is a critical aspect of OpenStack. For the purposes of this research, three distinct networks were created: a public network, a private network, and a virtual machine network. Refer to Table 1 for the specifications of each network.

| Network Type | Addressing |
|---|---|
| Public | 10.129.1.0/24 |
| Private | 192.168.10.0/24 |
| Virtual Machine | 172.16.0.0/24 |

TABLE 1 - NETWORK SPECIFICATIONS

Each physical machine had two network interface cards installed, one for the public network and the other for

the private network. The public network was located on the Purdue Computer and Information Technology

(CIT) network and allowed for external communications. The private network was used for communication

between the hosts themselves. A bridged interface was created on the cloud controller to allow for

communication between the virtual machine network and the public network.
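The addressing plan in Table 1 can be sanity-checked with Python's standard `ipaddress` module: the three networks must not overlap, and each /24 leaves 254 usable host addresses, which bounds how many hosts or instances a network can hold. The sample addresses in the example are hypothetical.

```python
import ipaddress
from itertools import combinations

# Addressing from Table 1.
NETWORKS = {
    "public": ipaddress.ip_network("10.129.1.0/24"),
    "private": ipaddress.ip_network("192.168.10.0/24"),
    "virtual machine": ipaddress.ip_network("172.16.0.0/24"),
}


def overlapping_pairs(networks):
    """Return every pair of network names whose address ranges overlap."""
    return [(a, b)
            for (a, net_a), (b, net_b) in combinations(networks.items(), 2)
            if net_a.overlaps(net_b)]


def usable_hosts(network) -> int:
    """Addresses left after excluding the network and broadcast addresses."""
    return network.num_addresses - 2


def network_of(address: str, networks):
    """Return the name of the network an address belongs to, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in networks.items():
        if ip in network:
            return name
    return None


if __name__ == "__main__":
    print("overlaps:", overlapping_pairs(NETWORKS) or "none")
    for name, network in NETWORKS.items():
        print(f"{name}: {network} ({usable_hosts(network)} usable hosts)")
    print(network_of("192.168.10.20", NETWORKS))  # a hypothetical host address
```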

Building the Cloud Controller

Ubuntu 12.04 (LTS) Beta 1 was chosen as the base operating system due to its support for the latest OpenStack Essex packages. Upon completing the OS installation, the machine was configured with addresses on both the public and private networks described in Table 1. The OpenStack community maintains a set of packages designed for Ubuntu, making it easier to deploy the required components without compiling the code from source. The latest release candidate packages for the OpenStack Essex build were installed on the cloud controller (Table 2).

| Component | Package |
|---|---|
| Identity | keystone 2012.1~rc1 |
| Compute | nova 2012.1~rc1 |
| Image Service | glance 2012.1~rc1 |
| Dashboard | horizon 2012.1~rc1 |

TABLE 2 - CLOUD CONTROLLER PACKAGES

The recommended order of installation and configuration of the cloud controller was followed, as indicated

below:

1. MySQL database server
2. Keystone
3. Nova
4. Glance
5. Horizon

A database server is required to store the metadata and tables generated by the OpenStack components. Keystone is typically the first OpenStack service installed because each of the other components requires authentication before they can communicate with one another. The services were configured according to documentation obtained from various sources (refer to the References section).
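The reasoning behind this order, that each service is installed only after the services it depends on, amounts to a topological sort of a dependency graph. The sketch below uses `graphlib.TopologicalSorter` from Python's standard library (3.9+). The dependency edges are assumptions inferred from the description above, for example that Horizon needs Nova and Glance to be available; with these edges Nova and Glance have no ordering constraint between them, so either may come first.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each service lists what must be in place first.
DEPENDS_ON = {
    "mysql": set(),
    "keystone": {"mysql"},
    "nova": {"mysql", "keystone"},
    "glance": {"mysql", "keystone"},
    "horizon": {"keystone", "nova", "glance"},
}


def install_order(depends_on):
    """Return an order in which every service follows its dependencies.

    Raises graphlib.CycleError if the dependencies are circular.
    """
    return list(TopologicalSorter(depends_on).static_order())


def respects_dependencies(order, depends_on) -> bool:
    """Check that every dependency appears before the service that needs it."""
    position = {service: i for i, service in enumerate(order)}
    return all(position[dep] < position[service]
               for service, deps in depends_on.items() for dep in deps)


if __name__ == "__main__":
    print(" -> ".join(install_order(DEPENDS_ON)))
```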

Building the Compute Node

Prior to deploying additional compute nodes, it is essential to have a working cloud controller. The compute host for this research used Ubuntu 12.04 (LTS) Beta 1 as its operating system, and its networking stack was configured similarly to that of the cloud controller. Once the core Nova services were correctly installed and configured on the cloud controller, the Nova Compute and network services were installed on the additional host and the configuration files were copied over. Nova automatically detected the new compute node and included it as an available resource.

Building the Storage Node

While a traditional OpenStack storage environment would include multiple storage hosts, this research was conducted on a single node. Ubuntu 12.04 (LTS) Beta 1 was installed and configured for both public and private networking. The swift 2012~rc1 package was installed and set up appropriately.

TROUBLESHOOTING OPENSTACK

Troubleshooting became an important skill throughout this research on OpenStack. Fortunately, each package creates its own log files from which relevant debugging information can be parsed. Each component was configured with full debug logging so that commands and their outputs could be analyzed. Since no formal troubleshooting approach had been defined prior to this research, the following procedure was developed:

- Analyze the appropriate log files
- Ensure the configuration files are correct and error-free
- Make sure the required services are running
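The first two steps of this procedure lend themselves to simple automation. The sketch below counts error-level lines in a log and records where each level first appears, and checks that an INI-style configuration file parses and defines a list of expected options. The log line format, the option names, and feeding it files such as those under /var/log/nova/ are assumptions; the third step, checking that services are running, is left to the operating system's service tools.

```python
import configparser
import re
from collections import Counter

# Matches the level field in lines such as
# "2012-03-20 10:15:03 ERROR nova.compute.manager [-] ..." (format assumed).
LEVEL_RE = re.compile(r"\b(CRITICAL|ERROR|WARNING|TRACE)\b")


def summarize_log(lines):
    """Count problem-level lines and record each level's first line number."""
    counts = Counter()
    first_seen = {}
    for lineno, line in enumerate(lines, start=1):
        match = LEVEL_RE.search(line)
        if match:
            level = match.group(1)
            counts[level] += 1
            first_seen.setdefault(level, lineno)
    return counts, first_seen


def config_problems(text, required_options, section="DEFAULT"):
    """Return a list of problems found in an INI-style configuration file."""
    parser = configparser.ConfigParser(interpolation=None)
    try:
        parser.read_string(text)
    except configparser.Error as exc:
        return [f"parse error: {exc}"]
    return [f"missing option: {option}" for option in required_options
            if not parser.has_option(section, option)]


if __name__ == "__main__":
    sample_log = [
        "2012-03-20 10:15:02 INFO nova.service [-] Starting compute node",
        "2012-03-20 10:15:03 ERROR nova.compute.manager [-] Instance failed to spawn",
        "2012-03-20 10:15:03 TRACE nova Traceback (most recent call last):",
    ]
    print(summarize_log(sample_log))
    # Option names here are hypothetical placeholders.
    print(config_problems("[DEFAULT]\nverbose = True\n", ["verbose", "sql_connection"]))
```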

A Dive into the VMware Cloud

Michael Bluvshteyn

WHAT IS VMWARE

Overview

VMware is a global company providing virtualization software for home computing as well as the enterprise.

VMware has quickly become the global leader in virtualization and cloud infrastructure with more than

300,000 customers and 25,000 partners. VMware also has a large software portfolio, allowing it to provide

solutions for individuals and companies of any size.

History of VMware

VMware was founded in 1998 by Diane Greene, Mendel Rosenblum, Scott Devine, Edward Wang, and Edouard Bugnion. VMware's most notable product, and the one that helped shape the company into what it is today, was the first Type 2 hypervisor. This hypervisor was eventually used in VMware's first product, VMware Workstation. That hypervisor, along with the company's Type 1 hypervisor, forms the basis of all VMware-based cloud products today. VMware was acquired by EMC in January 2004 and continues to operate as a majority-owned subsidiary of EMC. EMC sold approximately 10% of VMware's shares in an initial public offering in August 2007.

VMware Products

VMware has a large portfolio of products; however, the three that were focused on for this paper were

VMware vSphere, vCenter, and vCloud Director.

vSphere

vSphere, the successor to VMware Infrastructure 3, is VMware's cloud computing and virtualization operating system. The key component of vSphere is VMware ESXi, the platform on which all of VMware's enterprise virtualization and cloud solutions are built.

vCenter

vCenter, formerly known as VirtualCenter, is a centralized management tool for vSphere.

vCloud Director

vCloud Director is a cloud computing management platform. vCloud Director interfaces with virtualized resources, allowing users to gain self-service access to them through a services catalogue. vCloud Director allows tasks that previously would have required significant IT involvement to be completed automatically.


VMware Cloud Components

FIGURE 8 - VCLOUD DIRECTOR DIAGRAM

Each of the products used in our VMware cloud provides the following components:

VMware ESXi (vSphere)

- Hypervisor management
- Network management
- Storage (a SAN is required to use all features of VMware ESXi and vCenter)

vCenter

- Virtual machine provisioning
- Virtual machine image management
- Authentication

vCloud Director

vCloud Director provides additional functionality, including the automatic provisioning of virtual machines as well as entire IaaS clouds. vCloud Director allows complex business rules to be created for the distribution of virtual machines and networks.

- Virtual machine provisioning
- Virtual machine image management
- Authentication


vSphere Client

vSphere Client is used to interface with both ESXi and vCenter.

- User interface

vCloud Director GUI Interface

The vCloud Director GUI can be used to provision and create new virtual machines and networks, as well as to create logical pools of resources (virtual datacenters, or VDCs) that can then be consumed by internal and external clients. vCloud Director also allows resources to be over-committed between pools and virtual machines. Internal and external clients can be assigned to VDCs using a subscription (dedicated) or on-demand (pay-as-you-go) model. Users access their assigned VDCs through a self-service web portal. The vCloud portal can include custom applications or libraries, which contain approved operating system templates and complete application stacks created by the administrator. Users can then quickly deploy from any library to which they have access.

- User interface
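The effect of over-committing resources comes down to simple arithmetic: if a pool backed by P GB of physical memory is allowed an over-commit ratio r, virtual machines may be allocated up to P × r GB in total. The sketch below models one VDC this way. It is a generic illustration, not vCloud Director's allocation logic; the 12 GB figure simply combines the two 6GB ESXi hosts listed under Hardware requirements, the 1.5 ratio is an assumption, and hypervisor memory overhead is ignored.

```python
from dataclasses import dataclass


@dataclass
class VirtualDatacenter:
    name: str
    physical_ram_gb: float
    overcommit_ratio: float = 1.0  # 1.0 means no over-commitment
    allocated_ram_gb: float = 0.0

    @property
    def capacity_gb(self) -> float:
        """Total memory that may be allocated to virtual machines."""
        return self.physical_ram_gb * self.overcommit_ratio

    def can_allocate(self, ram_gb: float) -> bool:
        return self.allocated_ram_gb + ram_gb <= self.capacity_gb

    def allocate(self, ram_gb: float) -> None:
        if not self.can_allocate(ram_gb):
            raise ValueError(
                f"{self.name}: {ram_gb} GB exceeds the remaining "
                f"{self.capacity_gb - self.allocated_ram_gb} GB")
        self.allocated_ram_gb += ram_gb


if __name__ == "__main__":
    # Hypothetical VDC: 12 GB of physical memory with a 1.5 over-commit ratio.
    vdc = VirtualDatacenter("engineering", physical_ram_gb=12, overcommit_ratio=1.5)
    deployed = 0
    while vdc.can_allocate(2):
        vdc.allocate(2)
        deployed += 1
    print(f"{deployed} VMs of 2 GB fit in {vdc.capacity_gb} GB")
```

Without over-commitment the same pool would hold six such VMs; the 1.5 ratio raises that to nine, at the risk of memory contention if all of them are busy at once.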

TYPICAL DEPLOYMENT SCENARIO

While VMware can be deployed in various scenarios, a typical deployment would include one or more ESXi

nodes, one stand-alone server running a 64-bit version of Windows Server (cannot be a domain controller),

and one or more SANs depending on the storage and redundancy requirements of the organization. A

configured Active Directory environment is also required for vCenter to be installed.

It is necessary to take great care in the planning process to ensure the designed VMware solution meets the hardware and redundancy needs of the organization. VMware can be configured to provide high reliability and failover if required. If a SAN is used, VMware can also be configured to automatically balance VM loads across available ESXi nodes.

BUILDING THE VMWARE ENVIRONMENT

As with any enterprise software deployment, the first requirement was planning. It was critical to determine which requirements and features were needed, as this would affect the way the environment was built. Since this paper is not meant to be a step-by-step deployment guide, this section provides a general overview of the environment. Further information, including best practices and installation guides, can be found on VMware's website at www.vmware.com.

Knowledge area requirements

All of the VMware products in this environment were installed according to industry standards. VMware has gone to great lengths to make the installation as easy as possible. Even so, a solid understanding of both Windows and Linux fundamentals is still required. Below is a set of technical knowledge items that administrators should be familiar with before proceeding with the installation.

- Linux operating system fundamentals
- Microsoft SQL Server fundamentals
- Microsoft Active Directory
- Networking fundamentals
- Storage and SAN concepts

Hardware requirements

It was important that the lab environment emulate a production environment as closely as possible, within the hardware constraints that had to be met. VMware requires relatively few resources to run properly, so the hardware limitations affected only the performance and the number of virtual machines that could be instantiated. In the end, four Dell OptiPlex 745 machines were used in the following configurations. (Note: in this scenario, the domain controller required by vCenter was virtualized, which is NOT a recommended practice.)

Machine 1: Windows Server 2008 R2 64-bit (vSphere)

- Dell OptiPlex 745
- Intel Core 2 Duo processor with VT support
- 1 Gigabit Ethernet network interface card
- 4GB of memory
- 80GB internal hard drive

Machines 2 and 3: ESXi

- Dell OptiPlex 745
- Intel Core 2 Duo processor with VT support
- 1 Gigabit Ethernet network interface card
- 6GB of memory
- 150GB internal hard drive

Machine 4: CentOS 5 (vCloud Director)

- Dell OptiPlex 745
- Intel Core 2 Duo processor with VT support
- 1 Gigabit Ethernet network interface card
- 4GB of memory
- 80GB internal hard drive
- 150GB internal hard drive


Software Requirements

It is important to use enterprise-level hardware that meets the processing and memory needs of the machines being virtualized. This section lists the minimum hardware and software requirements needed to get a VMware cloud solution running in a lab environment.

VMware ESXi

- 64-bit processor with VT enabled
- 2GB RAM minimum
- One or more Gigabit or 10Gb Ethernet controllers
- SCSI, SAS, or SATA internal storage

VMware vCenter 5

- Microsoft Windows Server 2008 or 2008 R2, 64-bit
- 4GB RAM minimum
- Microsoft SQL Server 2008 R2 Express (minimum)
- One or more Gigabit or 10Gb Ethernet controllers
- Active Directory

vCloud Director

- vCenter 4, 4.1, or 5
- ESX/ESXi 4, 4.1, or 5
- vCenter networks used for network pools must be available to all hosts in the cluster
- vCenter must trust the ESX/ESXi hosts
- RHEL 5
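As a quick sanity check, the lab machines described under Hardware requirements can be compared against the minimum RAM figures listed above. This is a minimal sketch with three limitations:

- The `Machine` class and `meets_minimum` function are hypothetical.
- Only RAM and the 64-bit VT processor requirement are checked.
- The Core 2 Duo processors in the lab are assumed to be 64-bit capable.

```python
from dataclasses import dataclass

# Minimum RAM (GB) per role, taken from the requirements listed above.
MIN_RAM_GB = {"esxi": 2, "vcenter": 4}


@dataclass
class Machine:
    name: str
    ram_gb: int
    cpu_64bit_vt: bool   # assumed: processor is 64-bit and has VT enabled


def meets_minimum(machine: Machine, role: str) -> bool:
    """Check RAM (and, for ESXi, a 64-bit VT processor) against the listed minimums."""
    if role == "esxi" and not machine.cpu_64bit_vt:
        return False
    return machine.ram_gb >= MIN_RAM_GB[role]


if __name__ == "__main__":
    # Lab machines 1-3 as described under Hardware requirements.
    lab = [
        (Machine("Machine 1", 4, True), "vcenter"),
        (Machine("Machine 2", 6, True), "esxi"),
        (Machine("Machine 3", 6, True), "esxi"),
    ]
    for machine, role in lab:
        print(f"{machine.name} ({role}): {meets_minimum(machine, role)}")
```

All three machines pass. Machine 1 meets vCenter's 4GB minimum exactly, which leaves no headroom.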

Networking Requirements

The VMware cloud was set up directly on network space allocated on the Purdue Computer and Information Technology (CIT) network.

Installing ESXi

The installation of ESXi was relatively straightforward. The ESXi image was installed directly from a USB thumb drive onto local storage on each ESXi node. Due to hardware and performance constraints, local storage on the ESXi nodes was used along with shared storage on the vCloud Director host. For production purposes, a high-performance SAN is recommended.


Installing vCenter

The installation of vCenter was performed by a VMware installation wizard. The wizard verified that all hardware and software prerequisites were met before allowing the installation to start. One important requirement was an Active Directory domain. Because vCenter cannot be installed on a domain controller, a DC was virtualized on one of the ESXi nodes in the lab environment before vCenter was installed. Once all requirements were met, the wizard installed SQL Server on the local machine and prompted for the required service ports. In the lab, the default ports were used.
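After installation, one simple way to confirm that vCenter is listening on the service ports chosen in the wizard is to attempt a TCP connection to each port. The sketch below uses only Python's standard `socket` module. The function name is hypothetical, and the ports checked should be the ones actually entered during installation.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the name and connects; the socket is closed on exit.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

For example, `port_is_open("vcenter.lab.example", 443)` would test HTTPS on a hypothetical host named `vcenter.lab.example`. Ports 80 and 443 are assumed here to be among vCenter's defaults.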

Installing vCloud Director

vCloud Director required RHEL/CentOS 5 (Update 4 or 5) and a Microsoft SQL Server database. It was decided to host this database on the SQL Server installation created for vCenter. The vCloud Director server also needed two different IP addresses so that it could support two separate SSL certificates: one for the HTTP service and one for the console proxy. vCloud Director was installed by running the vCloud Director installation binary. Once the binary had been installed, a VMware configuration script was run to set the network and database connection details.
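vCloud Director's two-address requirement can be checked before the configuration script is run. The sketch below assumes the two addresses serve the HTTP service and the console proxy service. It uses Python's standard `ipaddress` module to confirm that both addresses are valid and distinct. The function name and the 192.0.2.x documentation addresses are hypothetical.

```python
import ipaddress


def check_vcd_addresses(http_ip: str, console_proxy_ip: str) -> None:
    """Raise ValueError unless both addresses are valid and different."""
    # ip_address() itself raises ValueError for a malformed address.
    http = ipaddress.ip_address(http_ip)
    proxy = ipaddress.ip_address(console_proxy_ip)
    if http == proxy:
        raise ValueError("the HTTP service and console proxy need separate IP addresses")


# Hypothetical addresses from the 192.0.2.0/24 documentation range.
check_vcd_addresses("192.0.2.10", "192.0.2.11")
```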


Results and Conclusions


CLOUD SCALABILITY

There is general consensus in the IT community that scalability is a critical component of cloud computing. With tremendous growth in data storage requirements, the ability of companies to scale their application and data delivery platforms is essential. As a result, scalability was a primary focus of this research.

Can OpenStack Provide Scalability?

The question many have been asking is whether open source cloud technology can provide the same scalability options as proprietary solutions. This research was conducted only in a small-scale environment. Even so, the findings suggest that OpenStack can be scaled within high-capacity data centers. During this research, the installed services consumed, on average, less than 10% of the available resources on each host. With such a small footprint, OpenStack gives IT professionals looking to consolidate existing equipment the ability to do so.
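The resource-footprint figure is an average of per-host ratios. For each host, the resources consumed by the installed services are divided by that host's capacity, and the resulting ratios are averaged. A minimal sketch of that arithmetic follows. The function name and the sample memory figures are hypothetical, not measurements from this research.

```python
from statistics import mean
from typing import Sequence


def average_share(used: Sequence[float], capacity: Sequence[float]) -> float:
    """Average, across hosts, of the fraction of capacity used by the services."""
    if not used or len(used) != len(capacity):
        raise ValueError("need one (used, capacity) pair per host")
    return mean(u / c for u, c in zip(used, capacity))


# Hypothetical memory use (MB) of the installed services on three 4,096 MB hosts.
print(round(average_share([350, 410, 380], [4096, 4096, 4096]), 3))
```

For these hypothetical hosts, the average is about 0.093 (9.3%). This is below the 10% mark on average, even though one host's ratio is slightly above it.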

This research came across multiple organizations that use OpenStack for large-scale deployments. One example is Wikipedia, whose media storage now resides on large OpenStack Object Storage (Swift) clusters. Wikipedia is one of the largest online encyclopedias in the world, with millions of articles published in many languages. According to a recent blog post by the Wikimedia team, the Wikimedia operations group has migrated its existing media storage systems to a production Swift cluster holding almost 100TB of data (Hartshorne, 2012).

With new projects on the horizon, OpenStack is looking to pave the way for cloud computing in the enterprise. As the community expands, new companies will participate and scale their own applications on OpenStack's platform. Companies have also been repackaging the OpenStack source code as their own cloud solutions; examples include Dell's Crowbar and HP's Cloud Services group. This also allows tighter integration between companies and their vendors. By combining internal IT departments with the services provided by cloud vendors, organizations can more easily scale their IT capabilities and make room for future growth.

Can VMware Provide Scalability?

VMware is currently the de facto leader in enterprise virtualization. VMware solutions are widely regarded as providing some of the best scalability and lowest overall resource usage in the industry. Its products have been adopted by many of the world's leading companies, including members of the Fortune 1000, and have delivered nearly 100% uptime for enterprise applications.

Numerous organizations have published white papers highlighting the cost and reliability benefits of VMware. One example is Indiana University, which has virtualized almost 1,000 servers using VMware ESX and vCenter and has even included mission-critical student systems in this effort (VMware, 2010). A major telecommunications provider serving more than 137 countries has used VMware to virtualize more than 10,000 servers, achieving major cost benefits and performance gains (VMware, 2010).

VMware has a proven track record of providing scalable solutions for some of the largest companies in the

world. VMware software is able to grow along with the company and can handle thousands of servers.


CLOUD MANAGEABILITY

When it comes to cloud computing, organizations want to spend less time maintaining and troubleshooting and more time innovating. Cloud technologies can support this goal by changing how IT systems are administered.

How difficult is it to manage an OpenStack Cloud?

The latest OpenStack release, Essex, includes a new dashboard management interface that provides IT administrators with additional functionality. At the moment, however, OpenStack is not a turnkey cloud solution. Building, maintaining, and delivering it requires extensive knowledge of open source technology and experienced IT professionals. The most challenging aspect of the research was implementing a stable OpenStack solution. At the time of this writing, documentation on the Essex release is limited, so a great deal of trial and error was required throughout the build process.

Managing an OpenStack cloud requires a combination of both technical command-line and graphical user

interface skills. Because the installation process is not as straightforward as simply running a setup wizard,

administrators should carefully plan out their deployment. Even after OpenStack has been deployed, the

ability to troubleshoot at various levels of the operating system is critical to maintaining a stable environment.

IT departments considering OpenStack for their private cloud solutions should ensure that their staff possess the knowledge areas described in this paper before deployment. It is recommended that staff attend OpenStack training seminars to learn how to properly develop and maintain their own cloud environments.
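Much of the command-line work involves calling OpenStack's REST APIs directly. As an illustration, the sketch below builds a password-based token request to the Identity service's v2.0 API, which the Essex release uses, but does not send it. The endpoint URL, user name, password, and tenant name are hypothetical.

```python
import json
import urllib.request


def build_token_request(auth_url: str, username: str, password: str,
                        tenant_name: str) -> urllib.request.Request:
    """Build (but do not send) a Keystone v2.0 password-authentication request."""
    body = {
        "auth": {
            "tenantName": tenant_name,
            "passwordCredentials": {"username": username, "password": password},
        }
    }
    return urllib.request.Request(
        url=auth_url.rstrip("/") + "/tokens",      # POST {auth_url}/tokens
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical controller host; 5000 is assumed to be the public Identity port.
req = build_token_request("http://controller:5000/v2.0", "admin", "secret", "demo")
```

Passing the request to `urllib.request.urlopen` would submit it. In a v2.0 response, the token ID is found under `["access"]["token"]["id"]`.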

How difficult is it to manage a VMware Cloud?

VMware vSphere and vCloud Director provide management and reporting capabilities for the entire virtual infrastructure. The vSphere Client can manage all aspects of the virtual environment, while vCloud Director provides automation and client management capabilities. Together, they give VMware a complete, easy-to-use solution for creating cloud environments of all types.

CLOUD INTEROPERABILITY

An important aspect of cloud computing is the ability of different technologies to integrate with one another. OpenStack Compute supports multiple hypervisors, including Linux KVM, VMware's ESX/ESXi, and Citrix's XenServer/Xen Cloud Platform. Organizations that already have VMware and/or Citrix virtualization solutions in place can therefore expand to OpenStack.
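Selecting a hypervisor for OpenStack Compute is a configuration choice in `nova.conf`. The sketch below renders a minimal `[DEFAULT]` section for each hypervisor. The option names (`connection_type`, `libvirt_type`) are assumed from Essex-era configuration and changed in later releases. A real XenServer or ESXi setup also needs connection credentials, which are omitted here.

```python
import configparser
import io

# Assumed Essex-era nova.conf settings per hypervisor; verify against the
# documentation for the release actually deployed.
HYPERVISOR_SETTINGS = {
    "kvm": {"connection_type": "libvirt", "libvirt_type": "kvm"},
    "xenserver": {"connection_type": "xenapi"},
    "esxi": {"connection_type": "vmwareapi"},
}


def render_nova_conf(hypervisor: str) -> str:
    """Render a minimal [DEFAULT] section selecting the compute hypervisor."""
    config = configparser.ConfigParser()
    config["DEFAULT"] = HYPERVISOR_SETTINGS[hypervisor]
    buffer = io.StringIO()
    config.write(buffer)
    return buffer.getvalue()


print(render_nova_conf("kvm"))
```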

VMware solutions are geared towards managing VMware ESX servers. While vCenter supports a variety of

different plugins, most features of both vCenter and vCloud Director are meant for VMware ESX.

IS THE CLOUD A VIABLE SOLUTION?

Because of the pace at which cloud computing has evolved, organizations have been hesitant to adopt it. To answer this question, practicality of implementation was used as the key criterion for whether the cloud is appropriate for the enterprise.


Does OpenStack Make for a Viable Cloud Solution?

After evaluating OpenStack over a three-month period, the team concluded that it is an appropriate solution for companies looking to adopt it. In fact, many companies have already begun to do so. AT&T, one of the largest telecommunications providers, recently announced that it had joined the OpenStack platform as an official contributor (Gallagher, 2012).

Does VMware Make for a Viable Cloud Solution?

VMware has one of the largest and most complete portfolios of products geared towards the creation of clouds of all types. VMware's product portfolio, along with its ease of use and support services, makes it an ideal choice for creating a cloud environment.

WHICH SOLUTION IS BETTER FOR THE GIVEN SCENARIO?

In the Research Methodology section, a mid-size business scenario was given to which both cloud solutions

could be compared. The following categories were examined in order to provide an answer to this question:

- Cost
- Performance
- Ease of Implementation
- Support

OpenStack Case Study Analysis

Cost

With regard to the cost of deploying a cloud solution, OpenStack has an advantage over VMware. Because there are no licensing fees, administrators can deploy OpenStack with minimal additional cost to the business.

Performance

From a performance standpoint, OpenStack appeared comparable to closed-source cloud platforms such as VMware. It has the ability to scale from small business infrastructure to high-capacity data centers.

Ease of Implementation

As mentioned earlier in the report, OpenStack is not a turnkey solution. It requires an extensive amount of

planning and a robust skillset in order to implement. The platform is also in its infancy compared to VMware,

and as a result, much of the community has yet to evaluate it in their own environments.

Support

OpenStack brings with it a well-established community of developers and IT professionals who are dedicated

to sharing their knowledge on the platform. As OpenStack matures, it will provide additional documentation

and improved support.


VMware Case Study Analysis

Cost

VMware ESXi is free but requires a license for advanced features. vCenter is licensed per physical server, and vCloud Director is licensed per virtual machine instance. Customers attempting to create a private infrastructure solution with VMware products will most likely incur significant costs. From a cost perspective, OpenStack is much more affordable than VMware.
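The licensing model described above reduces to a per-server term plus a per-VM term. The sketch below is a simplified illustration with two limitations:

- All prices are caller-supplied placeholders, not VMware list prices.
- It models licensing only. OpenStack's licensing cost is simply zero, and hardware and staffing costs are ignored for both platforms.

The function name is hypothetical.

```python
def vmware_license_cost(physical_servers: int, vm_instances: int,
                        vcenter_per_server: float, vcd_per_vm: float,
                        esxi_advanced_per_server: float = 0.0) -> float:
    """Licensing cost: per-server charges (vCenter, optional ESXi features) plus per-VM vCloud Director charges."""
    per_server = vcenter_per_server + esxi_advanced_per_server
    return physical_servers * per_server + vm_instances * vcd_per_vm


# Placeholder prices only: 3 servers at 1000 each plus 40 VMs at 50 each.
print(vmware_license_cost(3, 40, vcenter_per_server=1000, vcd_per_vm=50))
```

Because the per-VM term grows with every instance deployed, the gap between the two platforms widens as a VMware-based cloud scales.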

Performance

VMware has the advantage of providing drivers that are more tightly integrated with modern operating systems. However, no significant performance differences between the two solutions were observed, although a lack of hardware or tools for measuring performance accurately may have limited research in this area.

Ease of Implementation

VMware still requires significant planning to set up an effective solution. However, extensive documentation and an active community make it easier for administrators to turn their plans into action. VMware has a clear advantage over OpenStack with regard to deployment.

Support

VMware has an extensive and active community, which can help with most problems. VMware also offers

professional support and consulting services which can be utilized to provide design and implementation

guidance.

CONCLUSIONS DRAWN FROM THIS RESEARCH

Both OpenStack and VMware are changing the infrastructure-as-a-service (IaaS) landscape. They offer a tremendous amount of capability and many management options. The research conducted between January and March 2012 has shown that both solutions are effective IaaS options for enterprises looking to the cloud for answers. The platform chosen depends entirely on the organization's needs and available resources.

Companies looking to deploy production-level private clouds should consult not only the research presented in this report but also their own internal expertise. The IT landscape has been changing at an increasingly rapid rate over the last few years. As a result, administrators, IT professionals, and information officers should be willing to adapt their own environments as necessary.

WHERE TO FROM HERE?

This research only represents a small portion of cloud computing offerings available. Having had only a

limited amount of time to explore these platforms, the team was unable to look further into the following

areas:

- Security
- Performance

As both technologies are adopted by more IT organizations, their security and performance capabilities will

need to be evaluated further. OpenStack, in particular, features an Academic Initiative Community that looks

to expand upon knowledge in many of the topics mentioned in this report.


GLOSSARY

API: An API, or application programming interface, is a set of specifications that developers use to enable communication with other applications and services.

Bridge interface: A Layer 2 networking interface that forwards Ethernet frames between different network

segments.

Domain controller (DC): A host that is responsible for managing clients, devices, and resources in a domain

environment.

Hypervisor: A hypervisor is software that implements hardware virtualization, allowing multiple guest operating systems to run on a single host machine.

KVM: KVM, or kernel-based virtual machine, is a virtualization technique designed for Linux.

Reverse proxy: A reverse proxy takes requests from Internet hosts and forwards them to internal servers.

Storage area network (SAN): A network of storage devices interconnected via Layer 2 technologies.

Virtual machine: An emulated guest operating system that runs on top of a physical host machine.

VDC: A VDC is a virtual data center concept created by VMware.

VT: VT is Intel's virtualization technology, built into its processors to support true hardware virtualization.


REFERENCES

Chen, G. (2010, January). Virtualizing Tier I Applications: A Critical Step on the Journey Towards the Private

Cloud. IDC.

Gallagher, S. (2012, January). AT&T joins OpenStack as it launches cloud for developers. Retrieved March 25,

2012, from Ars Technica: http://arstechnica.com/business/news/2012/01/att-joins-openstack-as-it-launchescloud-for-developers.ars

Hartshorne, B. (2012, February 9). Scaling media storage at Wikimedia with Swift. Retrieved March 25, 2012,

from Wikimedia Foundation: http://blog.wikimedia.org/2012/02/09/scaling-media-storage-at-wikimediawith-swift/

Henderson, T. (2012, January 18). Cloud activity to explode in 2012. Retrieved January 30, 2012, from

Network World: http://www.networkworld.com/news/2012/122011-outlook-test-254278.html

VMware. (2010). Indiana University Virtualizes Mission-Critical Oracle Database. Palo Alto, CA: VMware.

VMware. (2010, January). Major Telecom Provider Uses Virtualization to Give Customers Greater Flexibility

and Lower Costs in Outsourced Datacenters. Palo Alto, CA: VMware.
