Академический Документы

Профессиональный Документы

Культура Документы

Ethical and Social Challenges For Machine Learning

Загружено:

Ali Bhutta0 оценок0% нашли этот документ полезным (0 голосов)

21 просмотров1 страницаEthical and Social Challenges for Machine Learning

Оригинальное название

Ethical and Social Challenges for Machine Learning

Авторское право

© © All Rights Reserved

Доступные форматы

DOCX, PDF, TXT или читайте онлайн в Scribd

Поделиться этим документом

Поделиться или встроить документ

Этот документ был вам полезен?

Это неприемлемый материал?

Пожаловаться на этот документEthical and Social Challenges for Machine Learning

Авторское право:

© All Rights Reserved

Доступные форматы

Скачайте в формате DOCX, PDF, TXT или читайте онлайн в Scribd

0 оценок0% нашли этот документ полезным (0 голосов)

21 просмотров1 страницаEthical and Social Challenges For Machine Learning

Загружено:

Ali BhuttaEthical and Social Challenges for Machine Learning

Авторское право:

© All Rights Reserved

Доступные форматы

Скачайте в формате DOCX, PDF, TXT или читайте онлайн в Scribd

Вы находитесь на странице: 1из 1

Ethical and Social Challenges for Machine Learning

The possibility of creating thinking machines raises a host of ethical issues. These

questions relate to the following

I.

II.

machines do not harm humans

machines also do not harm themselves

Imagine, in the near future, a bank using a machine learning algorithm to

recommend mortgage applications for approval. A rejected applicant brings a

lawsuit against the bank, alleging that the algorithm is discriminating racially

against mortgage applicants. The bank replies that this is impossible, since the

algorithm is deliberately blinded to the race of the applicants. Indeed, that was part

of the banks rationale for implementing the system. Even so, statistics show that

the banks approval rate for black applicants has been steadily dropping. Submitting

ten apparently equally qualified genuine applicants (as determined by a separate

panel of human judges) shows that the algorithm accepts white applicants and

rejects black applicants. What could possibly be happening?

Finding an answer may not be easy. If the machine learning algorithm is based on a

complicated neural network, or a genetic algorithm produced by directed evolution,

then it may prove nearly impossible to understand why, or even how, the algorithm

is judging applicants based on their race. e. On the other hand, a machine learner

based on decision trees or Bayesian networks is much more transparent to

programmer inspection (Hastie, Tibshirani, and Friedman 2001), which may enable

an auditor to discover that the AI algorithm uses the address information of

applicants who were born or previously resided in predominantly poverty-stricken

areas.

Although current AI offers us few ethical issues that are not already present in the

design of cars or power plants, the approach of AI algorithms toward more

humanlike thought portends predictable complications.

Social roles may be filled by AI algorithms, suggesting new design requirements like

transparency and predictability. Sufficiently general AI algorithms may no longer

execute in predictable contexts, requiring new kinds of safety assurance and the

engineering of artificial ethical considerations.

AIs with suffi- ciently advanced mental states, or the right kind of states, will have

moral status, and some may count as personsthough perhaps persons very much

unlike the sort that exist now, perhaps governed by different rules. And finally, the

prospect of AIs with superhuman intelligence and superhuman abilities presents us

with the extraordinary challenge of stating an algorithm that outputs superethical

behavior. These challenges may seem visionary, but it seems predictable that we

will encounter them; and they are not devoid of suggestions for present-day

research directions.

Вам также может понравиться

- Abstract ProjectДокумент2 страницыAbstract ProjectAli BhuttaОценок пока нет

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeОт EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeРейтинг: 4 из 5 звезд4/5 (5794)

- Science Fair Research Report TEMPLATEДокумент9 страницScience Fair Research Report TEMPLATEAli BhuttaОценок пока нет

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceОт EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceРейтинг: 4 из 5 звезд4/5 (895)

- Lecture 2.1: Vector Calculus CSC 84020 - Machine Learning: Andrew RosenbergДокумент46 страницLecture 2.1: Vector Calculus CSC 84020 - Machine Learning: Andrew RosenbergAli BhuttaОценок пока нет

- The Yellow House: A Memoir (2019 National Book Award Winner)От EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Рейтинг: 4 из 5 звезд4/5 (98)

- A Presentation On The Implementation of Decision Trees in MatlabДокумент9 страницA Presentation On The Implementation of Decision Trees in MatlabAli BhuttaОценок пока нет

- Name of SolutionДокумент2 страницыName of SolutionAli BhuttaОценок пока нет

- The Little Book of Hygge: Danish Secrets to Happy LivingОт EverandThe Little Book of Hygge: Danish Secrets to Happy LivingРейтинг: 3.5 из 5 звезд3.5/5 (400)

- (International Kierkegaard Commentary) Robert L. Perkins (Ed) - International Kierkegaard Commentary, Vol 4 Either - or Part II-Mercer University Press (1995) PDFДокумент317 страниц(International Kierkegaard Commentary) Robert L. Perkins (Ed) - International Kierkegaard Commentary, Vol 4 Either - or Part II-Mercer University Press (1995) PDFUn om Intamplat100% (1)

- The Emperor of All Maladies: A Biography of CancerОт EverandThe Emperor of All Maladies: A Biography of CancerРейтинг: 4.5 из 5 звезд4.5/5 (271)

- Information Privacy Corporate Management and National RegulationДокумент24 страницыInformation Privacy Corporate Management and National RegulationSehrish TariqОценок пока нет

- Never Split the Difference: Negotiating As If Your Life Depended On ItОт EverandNever Split the Difference: Negotiating As If Your Life Depended On ItРейтинг: 4.5 из 5 звезд4.5/5 (838)

- Jurisprudence Class Notes FullДокумент68 страницJurisprudence Class Notes Fulladityatnnls100% (5)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyОт EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyРейтинг: 3.5 из 5 звезд3.5/5 (2259)

- Pale Case DoctrinesДокумент28 страницPale Case DoctrinesSheena MarieОценок пока нет

- Zhuran You - A G Rud - Yingzi Hu - The Philosophy of Chinese Moral Education - A History-Palgrave MacMillan (2018)Документ315 страницZhuran You - A G Rud - Yingzi Hu - The Philosophy of Chinese Moral Education - A History-Palgrave MacMillan (2018)Willy A. WengОценок пока нет

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureОт EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureРейтинг: 4.5 из 5 звезд4.5/5 (474)

- Review On Ashwini Sukthankar's Queering Approaches To Sex, Gender, and Labor in India - Examining Paths To Sex Worker UnionismДокумент8 страницReview On Ashwini Sukthankar's Queering Approaches To Sex, Gender, and Labor in India - Examining Paths To Sex Worker UnionismNuzhat Ferdous IslamОценок пока нет

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryОт EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryРейтинг: 3.5 из 5 звезд3.5/5 (231)

- Curriculum Map Grade 10Документ45 страницCurriculum Map Grade 10Yeye Lo CordovaОценок пока нет

- Team of Rivals: The Political Genius of Abraham LincolnОт EverandTeam of Rivals: The Political Genius of Abraham LincolnРейтинг: 4.5 из 5 звезд4.5/5 (234)

- THAILAND: Human Rights Organizations Urge Thai Government To Criminalize Torture As A CrimeДокумент2 страницыTHAILAND: Human Rights Organizations Urge Thai Government To Criminalize Torture As A CrimePugh JuttaОценок пока нет

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaОт EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaРейтинг: 4.5 из 5 звезд4.5/5 (266)

- 0.1 COURSE SYLLABUS GE 8 Ethics Presentation FinalДокумент30 страниц0.1 COURSE SYLLABUS GE 8 Ethics Presentation FinalJames Isaac CancinoОценок пока нет

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersОт EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersРейтинг: 4.5 из 5 звезд4.5/5 (345)

- Case StudyДокумент2 страницыCase StudyJuztine Raboy100% (1)

- Legprof PaperДокумент21 страницаLegprof PaperJohn Paul MacababbadОценок пока нет

- The Unwinding: An Inner History of the New AmericaОт EverandThe Unwinding: An Inner History of the New AmericaРейтинг: 4 из 5 звезд4/5 (45)

- Ethics Week 6 Lacno, Jeremiah B. BSCE-3BДокумент2 страницыEthics Week 6 Lacno, Jeremiah B. BSCE-3BMayaОценок пока нет

- STS Instructional Modules Midterm CoverageДокумент58 страницSTS Instructional Modules Midterm CoverageJamaica DavidОценок пока нет

- Samyakgyan Magazine August 2020. Pls SeeДокумент76 страницSamyakgyan Magazine August 2020. Pls SeejambudweephastinapurОценок пока нет

- 0041 Flat Maslows Hierarchy Needs Powerpoint Template 16 9Документ9 страниц0041 Flat Maslows Hierarchy Needs Powerpoint Template 16 9ASPIRAformance ProGigInОценок пока нет

- Civil Society PDFДокумент13 страницCivil Society PDFK.M.SEETHI100% (5)

- Criminology Project MaheshДокумент23 страницыCriminology Project MaheshMahesh RawatОценок пока нет

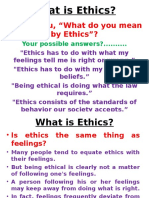

- What Is Ethics LectureДокумент33 страницыWhat Is Ethics LectureRakibZWani100% (1)

- GEC-108 Activity-4 InfographicДокумент2 страницыGEC-108 Activity-4 InfographicRafael Cortez100% (1)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreОт EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreРейтинг: 4 из 5 звезд4/5 (1090)

- Exploring Management 5th Edition by Schermerhorn Bachrach ISBN Test BankДокумент33 страницыExploring Management 5th Edition by Schermerhorn Bachrach ISBN Test Bankmartha100% (20)

- The Six ParamitasДокумент3 страницыThe Six Paramitasleo9122Оценок пока нет

- WGU C483 - Principles of ManagementДокумент17 страницWGU C483 - Principles of ManagementAndreeОценок пока нет

- Module Understanding The SelfДокумент47 страницModule Understanding The SelfJoy Asahib MelvaОценок пока нет

- Right To Life EssayДокумент6 страницRight To Life Essayovexvenbf100% (2)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)От EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Рейтинг: 4.5 из 5 звезд4.5/5 (121)

- BioethicsДокумент30 страницBioethicsM Manzoor MalikОценок пока нет

- Professional Work Ethics of PharmacistsДокумент32 страницыProfessional Work Ethics of PharmacistsNamoAmitofouОценок пока нет

- CRITERION - 3: Course Outcomes and Program Outcomes 3. Course Outcomes and Program OutcomesДокумент2 страницыCRITERION - 3: Course Outcomes and Program Outcomes 3. Course Outcomes and Program OutcomesUdaysingh PatilОценок пока нет

- Richard Anerson - Equality and Equal Opportunity For WelfareДокумент18 страницRichard Anerson - Equality and Equal Opportunity For WelfareSeung Yoon LeeОценок пока нет

- Zwolinski - A Brief History of LibertarianismДокумент66 страницZwolinski - A Brief History of LibertarianismRyan Hayes100% (1)

- Why Should I Be A TeacherДокумент13 страницWhy Should I Be A TeacherVerónica Ivone OrmeñoОценок пока нет