2inceptez Hadoop Processing

Uploaded by: Mani Vijay

HADOOP PROCESSING LAYER

MAP REDUCE

Introduction

MapReduce is a programming model and an associated implementation for processing and generating large data sets with a parallel, distributed algorithm on a cluster. MapReduce provides automatic parallelization and distribution, fault tolerance, I/O scheduling, and status updates.

To distribute input data and collate results, MapReduce operates in parallel across massive cluster sizes.

Because cluster size doesn't affect a processing job's final results, jobs can be split across almost any

number of servers. Therefore, MapReduce and the overall Hadoop framework simplify software

development.

MapReduce is also fault-tolerant, with each node periodically reporting its status to a master node. If a

node doesn't respond as expected, the master node re-assigns that piece of the job to other available

nodes in the cluster. This creates resiliency and makes it practical for MapReduce to run on inexpensive

commodity servers.

Inceptez Technologies Page 1

Map Stage: The mapper's job is to process the input data. The input is generally a file or directory stored in the Hadoop Distributed File System (HDFS). The input file is passed to the mapper function line by line. The mapper processes each line and emits intermediate data in the form of key-value pairs.

Reduce Stage: This stage combines the Shuffle stage and the Reduce stage. The shuffle groups the mapper output by key, and the reducer then processes each group, for example by consolidating, aggregating, or sorting it. The reducer produces a new set of output, which is stored in HDFS.
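The two stages can be illustrated with a small in-memory word count. This is only a sketch in plain Python, not Hadoop code: the function names `mapper`, `shuffle`, `reducer`, and `run_job` are made up for the illustration, and everything runs in one process instead of across a cluster.

```python
from collections import defaultdict


def mapper(line):
    """Map stage: turn one input line into (key, value) pairs."""
    for word in line.split():
        yield word.lower(), 1


def shuffle(pairs):
    """Shuffle: group all values by key and sort the keys."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reducer(key, values):
    """Reduce stage: aggregate the values collected for one key."""
    return key, sum(values)


def run_job(lines):
    """Run map, shuffle, and reduce in sequence on a list of lines."""
    mapped = [pair for line in lines for pair in mapper(line)]
    return dict(reducer(key, values) for key, values in shuffle(mapped).items())


if __name__ == "__main__":
    print(run_job(["the quick brown fox", "the lazy dog", "The fox"]))
```

In a real job the mapper calls run in parallel on different nodes, and the shuffle moves data over the network so that all values for one key reach the same reducer.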

Input splits

The way HDFS has been set up, it breaks down very large files into large blocks (for example, measuring

128MB), and stores three copies of these blocks on different nodes in the cluster.

MapReduce data processing is driven by the concept of input splits. The number of input splits calculated for a specific application determines the number of mapper tasks. Each mapper task is assigned, where possible, to a slave node where its input split is stored. Suppose that one line represents one record and that the block size for the Hadoop cluster is 64MB (the Hadoop 1 default; Hadoop 2 defaults to 128MB), which means that the data files are broken into chunks of exactly 64MB.

Do you see the problem? If each map task processes all records in a specific data block, what happens to those records that span block boundaries? File blocks are exactly 64MB (or whatever you set the block size to be), and because HDFS has no concept of what's inside the file blocks, it can't gauge when a record might spill over into another block.

To solve this problem, Hadoop uses a logical representation of the data stored in file blocks, known as input splits. When a MapReduce job client calculates the input splits, it figures out where the first whole record in a block begins and where the last record in the block ends.

In cases where the last record in a block is incomplete, the input split includes location information for the next block and the byte offset of the data needed to complete the record. The figure below shows this relationship between data blocks and input splits.

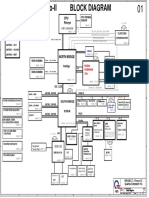
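The boundary rule can be sketched in a few lines of Python. This is a simplified model rather than Hadoop's actual reader: `compute_splits` and `read_split` are hypothetical names, records are newline-terminated, and the whole file is held in memory. The convention assumed here is that a reader skips the first (possibly partial) line unless its split starts at offset 0, and reads past the end of its split to finish the last record.

```python
def compute_splits(file_len, block_size):
    """Return (start, length) pairs, one per block-sized split."""
    return [(start, min(block_size, file_len - start))
            for start in range(0, file_len, block_size)]


def read_split(data, start, length):
    """Return the whole records that belong to the split [start, start + length)."""
    end = start + length
    pos = start
    if start != 0:
        # The previous split's reader finishes the line that crosses the
        # boundary, so skip ahead to the first line that begins after `start`.
        newline = data.find(b"\n", start)
        if newline == -1:
            return []
        pos = newline + 1
    records = []
    # A line that begins at or before `end` belongs to this split, even if
    # its bytes continue into the next block.
    while pos <= end and pos < len(data):
        newline = data.find(b"\n", pos)
        stop = len(data) if newline == -1 else newline
        records.append(data[pos:stop])
        pos = stop + 1
    return records


if __name__ == "__main__":
    data = b"aaaa\nbbbbbbbb\ncc\ndddd\n"
    for start, length in compute_splits(len(data), 8):
        print(start, length, read_split(data, start, length))
```

Every record is read exactly once, even though the second record straddles the first block boundary.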

Hadoop Version1 Storage & Processing Daemons

Below flow is self-explanatory.

Hadoop Version 1 Processing Daemons:

Job Tracker (Per Cluster) :

The JobTracker is the master processing daemon. It is responsible for resource allocation, resource administration, scheduling, and monitoring of jobs: it takes requests from a client and assigns tasks to TaskTrackers.

The JobTracker tries to assign each task to the TaskTracker on the DataNode where the data is locally present (data locality).

Roles of Job Tracker:

The JobTracker is the daemon service for submitting and tracking MapReduce jobs in Hadoop. Only one JobTracker process runs on a Hadoop cluster, in its own JVM. In a typical production cluster it runs on a separate machine, and each slave node is configured with the JobTracker node's location. The JobTracker is a single point of failure for the Hadoop MapReduce service: if it goes down, all running jobs are halted.

The JobTracker farms out MapReduce tasks to specific nodes in the cluster, ideally the nodes that have the data, or at least nodes in the same rack. It performs the following actions:

1. Client applications submit jobs to the Job tracker.

2. The JobTracker talks to the NameNode to determine the location of the data.

3. The JobTracker locates TaskTracker nodes with available slots at or near the data.

4. The JobTracker submits the work to the chosen TaskTracker nodes.

5. The TaskTracker nodes are monitored. If they do not submit heartbeat signals often enough,

they are deemed to have failed and the work is scheduled on a different TaskTracker.

6. A TaskTracker will notify the JobTracker when a task fails. The JobTracker decides what to do then: it may resubmit the task elsewhere, it may mark that specific record as something to avoid, and it may even blacklist the TaskTracker as unreliable.

7. When the work is completed, the JobTracker updates its status.

8. Client applications can poll the JobTracker for information.
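Step 3 above (finding a TaskTracker with a free slot at or near the data) can be sketched as a three-level preference: same node, then same rack, then anywhere. The `Tracker` class, the `pick_tracker` function, and the host and rack names are all hypothetical; the real JobTracker scheduler is considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class Tracker:
    name: str        # host the TaskTracker runs on
    rack: str        # rack that host belongs to
    free_slots: int  # slots currently available


def pick_tracker(trackers, replica_hosts, host_racks):
    """Pick a tracker for a task whose input block lives on `replica_hosts`.

    Preference order: node-local (0), rack-local (1), off-rack (2).
    Returns the chosen tracker name, or None if no slot is free.
    """
    candidates = [t for t in trackers if t.free_slots > 0]
    if not candidates:
        return None
    replica_racks = {host_racks[host] for host in replica_hosts}

    def distance(tracker):
        if tracker.name in replica_hosts:
            return 0
        if tracker.rack in replica_racks:
            return 1
        return 2

    best = min(candidates, key=distance)
    best.free_slots -= 1  # the task now occupies one slot
    return best.name


if __name__ == "__main__":
    racks = {"n1": "r1", "n2": "r1", "n3": "r2"}
    cluster = [Tracker("n1", "r1", 0), Tracker("n2", "r1", 1), Tracker("n3", "r2", 1)]
    print(pick_tracker(cluster, {"n1"}, racks))
```

Here the node holding the data has no free slot, so the task falls back to a tracker in the same rack before going off-rack.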

TaskTracker (Per Node)

1. A TaskTracker is a slave node daemon in the cluster that accepts tasks (Map, Reduce, and Shuffle operations) from a JobTracker.

2. Only one TaskTracker process runs on each Hadoop data node.

3. The TaskTracker runs in its own JVM process.

4. Every TaskTracker is configured with a set of slots; these indicate the number of tasks that it can accept. The TaskTracker starts a separate JVM process (called a Task Instance) to do the actual work, which ensures that a process failure does not take down the TaskTracker.

5. The TaskTracker monitors these task instances, capturing the output and exit codes. When the task instances finish, successfully or not, the TaskTracker notifies the JobTracker.

6. The TaskTrackers also send heartbeat messages to the JobTracker, usually every few seconds, to reassure the JobTracker that they are still alive. These messages also inform the JobTracker of the number of available slots, so the JobTracker can stay up to date with where in the cluster work can be delegated.
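The heartbeat bookkeeping on the JobTracker side might look roughly like the sketch below. The class name `HeartbeatMonitor` and the 30-second timeout are assumptions chosen for the example, not Hadoop defaults; timestamps are passed in explicitly so the behaviour is easy to follow.

```python
class HeartbeatMonitor:
    """Tracks the last heartbeat and free slots reported by each TaskTracker."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}   # tracker name -> time of last heartbeat
        self.free_slots = {}  # tracker name -> slots reported as free

    def heartbeat(self, tracker, free_slots, now):
        """Record a heartbeat that arrived at time `now` (in seconds)."""
        self.last_seen[tracker] = now
        self.free_slots[tracker] = free_slots

    def expired(self, now):
        """Return trackers whose last heartbeat is older than the timeout."""
        return sorted(name for name, seen in self.last_seen.items()
                      if now - seen > self.timeout_s)


if __name__ == "__main__":
    monitor = HeartbeatMonitor(timeout_s=30)
    monitor.heartbeat("tt1", free_slots=2, now=0)
    monitor.heartbeat("tt2", free_slots=1, now=0)
    monitor.heartbeat("tt1", free_slots=2, now=25)
    print(monitor.expired(now=40))  # tt2 has been silent for 40 seconds
```

Tasks that were running on an expired tracker would then be rescheduled on another TaskTracker.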

Limitations in Version 1 with respect to processing

JobTracker is overburdened

The JobTracker runs on a single machine and performs several tasks:

Resource allocation and administration.

Job and task scheduling.

Monitoring.

Although many machines (DataNodes) are available, none of them share this work. This limits the scalability of the JobTracker.

Responsibilities of the JobTracker (Hadoop 1.0) mainly included the following:

Managing the computational resources in terms of map and reduce slots

Scheduling submitted jobs

Monitoring the executions of the TaskTrackers

Restarting failed tasks

Performing a speculative execution of tasks

Calculating the Job Counters

Clearly, the JobTracker alone does a lot of tasks together and is overloaded with lots of work.

Job tracker is single point of failure

In Hadoop 1.0, the JobTracker is a single point of failure. If the JobTracker fails, all jobs must restart, because there is no mechanism to store the interim state of the JobTracker and resume jobs from where they left off.

Fixed slots for Map & Reduce tasks

In Hadoop 1.0, each TaskTracker has a predefined number of map slots and reduce slots. Resource utilization issues occur because map slots might be full while reduce slots are empty (and vice versa). Compute resources on a DataNode that are reserved for reduce slots can sit idle even when there is an immediate need for them as map slots. Partly because of such limitations, version 1 scales to about 4,000 nodes or 40,000 tasks.

In MapReduce v1, mapreduce.tasktracker.map.tasks.maximum and mapreduce.tasktracker.reduce.tasks.maximum (named mapred.tasktracker.map.tasks.maximum and mapred.tasktracker.reduce.tasks.maximum in Hadoop 1.x releases) configure the number of map slots and reduce slots, respectively, in mapred-site.xml.
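The utilization problem is easy to see with a little arithmetic. The sketch below compares fixed map/reduce slots with a single shared pool; both function names and the numbers are illustrative, and it assumes every task needs the same amount of resources.

```python
def running_with_fixed_slots(map_slots, reduce_slots, pending_maps, pending_reduces):
    """Tasks that can run when map and reduce slots are not interchangeable."""
    return min(map_slots, pending_maps) + min(reduce_slots, pending_reduces)


def running_with_shared_pool(total_slots, pending_maps, pending_reduces):
    """Tasks that can run when any free slot can host any task."""
    return min(total_slots, pending_maps + pending_reduces)


if __name__ == "__main__":
    # A node with 8 map slots and 4 reduce slots during a map-heavy phase.
    print(running_with_fixed_slots(8, 4, pending_maps=20, pending_reduces=0))
    print(running_with_shared_pool(12, pending_maps=20, pending_reduces=0))
```

With fixed slots the four reduce slots stay idle while map tasks queue up; a shared pool would keep all twelve busy.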

MapReduce is the only supported framework

In Hadoop 1.0, the JobTracker was tightly integrated with MapReduce, so only applications that follow the MapReduce programming framework can run on Hadoop.

Let's look at this fourth limitation in more detail.

MapReduce works on batch-driven data analysis, where the input data is partitioned into smaller batches that can be processed in parallel across many machines in the Hadoop cluster. But MapReduce, while powerful enough to express many data analysis algorithms, is not always the optimal choice of programming paradigm. It's often desirable to run other computation paradigms in the Hadoop cluster. Here are some examples.

Problem in performing real-time analysis: MapReduce is batch driven. What if you want to perform real-time analysis instead of batch processing, where results may only be available after several hours? Many applications, such as fraud detection, need results in real time. Real-time engines like Apache Storm work better in this case, but in Hadoop 1.0, due to the tight coupling, these engines cannot run independently on the cluster.

Problem in running a message-passing approach: Message passing uses a stateful process that runs on each node of a distributed network. The processes communicate with each other by sending messages, and alter their state based on the messages they receive. This is not possible in MapReduce.

Problem in running ad-hoc queries: Many users like to query their big data using SQL. Apache Hive can execute a SQL query as a series of MapReduce jobs, but it has shortcomings in terms of performance. Newer approaches such as Apache Tajo, Facebook's Presto, and Cloudera's Impala drastically improve performance, but they need to run services in a form other than MapReduce.

It is not possible to run all such non Map Reduce jobs on Hadoop Cluster. Such jobs have to "disguise"

themselves as mappers and reducers in order to be able to run on Hadoop 1.0.

Backward compatibility issue

Jobs written for older MapReduce (MR1) releases could not always run on the latest MR1 versions, whereas YARN is backward compatible and can run applications written for older versions.

Transition of Hadoop Version 1 to 2

YARN – Yet Another Resource Negotiator is a resource manager that was created by separating the

processing engine and resource management capabilities of MapReduce as it was implemented in

Hadoop 1. YARN is often called the Data operating system of Hadoop because it is responsible for

managing and monitoring workloads, maintaining a multi-tenant environment, implementing security

controls, and managing high availability features of Hadoop.

Like an operating system on a server, YARN is designed to allow multiple, diverse user applications to

run on a multi-tenant platform. In Hadoop 1, users had the option of writing MapReduce programs in

Java, in Python, Ruby or other scripting languages using streaming, or using Pig, a data transformation

language. Regardless of which method was used, all fundamentally relied on the MapReduce processing

model to run.

YARN supports multiple processing models in addition to MapReduce. One of the most significant benefits of this is that we are no longer limited to working with the often I/O-intensive, high-latency MapReduce framework. This advance means Hadoop users should be familiar with the pros and cons of the new processing models and understand when to apply them to particular use cases.

Architectural Changes

The following two figures represent the architectural changes: the version 1 daemons (JobTracker and TaskTracker) are replaced by the ResourceManager, ApplicationMaster, NodeManager, and containers.

In the YARN architecture, the JobTracker's responsibilities are split between a global ResourceManager and a per-application ApplicationMaster, while the TaskTracker is replaced by a per-node NodeManager that runs tasks in containers. The ResourceManager only manages the allocation of resources to the submitted applications. Its scheduler takes care of scheduling without worrying about monitoring or status updates. Resources such as memory, CPU time, and network bandwidth are grouped into one unit called the container. The NodeManager manages all containers on its node: it launches the container in which the ResourceManager starts an application's ApplicationMaster, and the ApplicationMaster then executes the actual tasks by requesting more containers. Different ApplicationMasters run on different nodes; each one tracks the containers of its own application and handles their monitoring and status details.

YARN Components

I) ResourceManager (RM), made up of the Scheduler and the ApplicationsManager

II) NodeManager (NM)

III) ApplicationMaster (AM)

IV) Container

I) ResourceManager (RM)

The ResourceManager (RM) is the key service offered in YARN. Clients can interact with the framework

using ResourceManager. ResourceManager is the master for all other daemons available in the

framework.

ResourceManager has two major components, Scheduler and ApplicationsManager

a) Scheduler

1. The Scheduler is responsible for allocating resources to the various running applications, subject to familiar constraints of capacities, queues, etc.

2. It is a pure scheduler: it does not monitor or track the status of applications, and performs its scheduling function based purely on the resource requirements of the applications.

3. It schedules resources in units of the resource “Container”.

4. The Scheduler has a pluggable policy plug-in, which is responsible for partitioning the cluster resources among the various queues, applications, etc. Examples are a) CapacityScheduler and b) FairScheduler.

b) ApplicationsManager

1. Accepts job submissions.

2. Negotiates the first container for executing the application-specific ApplicationMaster.

3. Provides the service for restarting the ApplicationMaster container on failure.

II) NodeManager (NM)

1. Node-Manager is responsible for Container management.

2. Monitoring container resource usage (like cpu, memory, disk, network).

3. Reporting to the Resource-Manager/Scheduler.

III) ApplicationMaster (AM)

1. The ApplicationMaster is responsible for negotiating appropriate resource containers from the ResourceManager's Scheduler and for working with the NodeManagers to start the containers.

2. It tracks the status and monitors the progress of the application's tasks running in each container.

IV) Container

Resource Container incorporates elements such as memory, cpu, disk, network, Command line to launch

the process within the container, Environment variables and Local resources necessary on the machine

prior to launch, such as jars, shared-objects, auxiliary data files, Security-related tokens etc.

Schedulers:

Three schedulers are available in YARN: the FIFO, Capacity, and Fair Schedulers. The FIFO Scheduler places applications in a queue and runs them in the order of submission (first in, first out). Requests for the first application in the queue are allocated first; once its requests have been satisfied, the next application in the queue is served, and so on.

The FIFO Scheduler has the merit of being simple to understand and not needing any configuration, but it is not suitable for shared clusters: critical applications cannot be prioritized, which can result in missed SLAs. A large application will use all the resources in a cluster, so every other application has to wait its turn. On a shared cluster it is better to use the Capacity Scheduler or the Fair Scheduler. Both of these allow long-running jobs to complete in a timely manner, while still allowing users who are running concurrent smaller ad hoc queries to get results back in a reasonable time.
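The starvation effect of FIFO scheduling can be shown with a toy allocator. `fifo_allocate` is a hypothetical helper, and it treats the cluster as a simple count of identical containers.

```python
def fifo_allocate(capacity, requests):
    """Grant containers strictly in submission order.

    `requests` is a list of (app_name, containers_wanted) tuples, oldest
    first. Returns a dict of app_name -> containers granted.
    """
    allocation = {}
    for app, wanted in requests:
        granted = min(wanted, capacity)
        allocation[app] = granted
        capacity -= granted
    return allocation


if __name__ == "__main__":
    # A large job submitted first takes the whole cluster.
    print(fifo_allocate(10, [("big-etl", 10), ("small-query", 2)]))
```

The small query receives nothing until the large job releases containers, which is the behaviour the Capacity and Fair Schedulers are designed to avoid.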

CapacityScheduler is designed to allow sharing a large cluster while giving each organization capacity

guarantees. The central idea is that the available resources in the Hadoop cluster are shared among

multiple organizations who collectively fund the cluster based on their computing needs. There is an

added benefit that an organization can access any excess capacity not being used by others. This

provides elasticity for the organizations in a cost-effective manner. The primary abstraction provided by

the CapacityScheduler is the concept of queues. These queues are typically setup by administrators to

reflect the economics of the shared cluster. To provide further control and predictability on sharing of

resources, the CapacityScheduler supports hierarchical queues to ensure resources are shared among

the sub-queues of an organization before other queues are allowed to use free resources, there-by

providing affinity for sharing free resources among applications of a given organization.

Sharing clusters across organizations necessitates strong support for multi-tenancy, since each organization must be guaranteed capacity and safeguards to ensure the shared cluster is impervious to a single rogue application or user, or sets thereof. The CapacityScheduler provides a stringent set of limits to ensure that a single application, user, or queue cannot consume a disproportionate amount of resources in the cluster. Also, the CapacityScheduler provides limits on initialized/pending applications from a single user and queue to ensure fairness and stability of the cluster.
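The combination of guaranteed capacity and elasticity can be sketched as a two-step allocation. This is a deliberately simplified model, not the CapacityScheduler's algorithm: `capacity_allocate` is a hypothetical function, capacities are whole containers, there are no per-queue maximums or user limits, and spare containers go one at a time to the queue that is furthest below its guarantee.

```python
def capacity_allocate(total, guaranteed, demand):
    """Allocate `total` containers across queues.

    `guaranteed` and `demand` map queue name -> containers. Assumes the
    guarantees are positive and sum to at most `total`.
    """
    # Step 1: every queue gets what it asks for, up to its guarantee.
    alloc = {queue: min(demand[queue], guaranteed[queue]) for queue in guaranteed}
    spare = total - sum(alloc.values())
    # Step 2: hand unused capacity to queues that still want more.
    while spare > 0:
        hungry = [queue for queue in alloc if alloc[queue] < demand[queue]]
        if not hungry:
            break
        neediest = min(hungry, key=lambda queue: alloc[queue] / guaranteed[queue])
        alloc[neediest] += 1
        spare -= 1
    return alloc


if __name__ == "__main__":
    guaranteed = {"engineering": 60, "sales": 40}
    # Sales is mostly idle, so engineering can borrow its unused capacity.
    print(capacity_allocate(100, guaranteed, {"engineering": 90, "sales": 10}))
```

When sales later needs its full share, a real scheduler reclaims the borrowed capacity as containers finish, or through preemption if that is configured.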

The Fair Scheduler is a reasonable way to share a cluster between a number of users. Fair sharing can also work with app priorities: the priorities are used as weights to determine the fraction of total resources that each app should get.

The scheduler organizes apps further into “queues”, and shares resources fairly between these queues.

By default, all users share a single queue, named “default”. If an app specifically lists a queue in a

container resource request, the request is submitted to that queue. It is also possible to assign queues

based on the user name included with the request through configuration. Within each queue, a

scheduling policy is used to share resources between the running apps. The default is memory-based

fair sharing, but FIFO and multi-resource with Dominant Resource Fairness can also be configured.

Queues can be arranged in a hierarchy to divide resources and configured with weights to share the

cluster in specific proportions.

In addition to providing fair sharing, the Fair Scheduler allows assigning guaranteed minimum shares to

queues, which is useful for ensuring that certain users, groups or production applications always get

sufficient resources. When a queue contains apps, it gets at least its minimum share, but when the

queue does not need its full guaranteed share, the excess is split between other running apps. This lets

the scheduler guarantee capacity for queues while utilizing resources efficiently when these queues

don’t contain applications. The Fair Scheduler lets all apps run by default, but it is also possible to limit

the number of running apps per user and per queue through the config file. This can be useful when a

user must submit hundreds of apps at once or in general to improve performance if running too many

apps at once would cause too much intermediate data to be created or too much context-switching.

Limiting the apps does not cause any subsequently submitted apps to fail, only to wait in the scheduler’s

queue until some of the user’s earlier apps finish.
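Weighted fair sharing can be illustrated with a small "water-filling" calculation: each app is offered a slice of the remaining capacity in proportion to its weight, apps that need less than their slice keep only what they need, and the surplus is re-divided among the rest. The function `fair_shares` is a hypothetical sketch over a single resource; it ignores queues, minimum shares, and Dominant Resource Fairness.

```python
def fair_shares(capacity, demands, weights):
    """Compute weighted max-min fair shares of one resource.

    `demands` and `weights` map app name -> number. Returns app -> share.
    """
    shares = {app: 0.0 for app in demands}
    active = set(demands)
    remaining = float(capacity)
    while active and remaining > 1e-9:
        total_weight = sum(weights[app] for app in active)
        # Apps whose unmet demand fits inside their weighted slice.
        satisfied = {
            app for app in active
            if demands[app] - shares[app]
            <= remaining * weights[app] / total_weight + 1e-9
        }
        if not satisfied:
            # Everyone wants more than their slice: split what is left by weight.
            for app in active:
                shares[app] += remaining * weights[app] / total_weight
            remaining = 0.0
            break
        for app in satisfied:
            remaining -= demands[app] - shares[app]
            shares[app] = float(demands[app])
        active -= satisfied
    return shares


if __name__ == "__main__":
    print(fair_shares(100, {"a": 20, "b": 100, "c": 100}, {"a": 1, "b": 1, "c": 2}))
```

App `a` needs less than its slice, so the unused part is shared between `b` and `c` in a 1:2 ratio.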

YARN Architecture

Let’s walk through an application execution sequence (steps are illustrated in the diagram):

1. A client program submits the application, including the necessary specifications to launch the

application-specific ApplicationMaster itself.

2. The ResourceManager assumes the responsibility to negotiate a specified container in which to start

the ApplicationMaster and then launches the ApplicationMaster.

3. The ApplicationMaster, on boot-up, registers with the ResourceManager – the registration allows

the client program to query the ResourceManager for details, which allow it to directly

communicate with its own ApplicationMaster.

4. During normal operation the ApplicationMaster negotiates appropriate resource containers with the ResourceManager.

5. On successful container allocations, the ApplicationMaster launches the container by providing the

container launch specification to the NodeManager. The launch specification, typically, includes the

necessary information to allow the container to communicate with the ApplicationMaster itself.

6. The application code executing within the container then provides necessary information (progress,

status etc.) to its ApplicationMaster via an application-specific protocol.

7. During the application execution, the client that submitted the program communicates directly with

the ApplicationMaster to get status, progress updates etc. via an application-specific protocol.

8. Once the application is complete, and all necessary work has been finished, the ApplicationMaster

deregisters with the ResourceManager and shuts down, allowing its own container to be

repurposed.

UBER mode

Normally, the ApplicationMaster (AM) asks the ResourceManager (RM) for a separate container for each mapper and reducer. With the uber configuration, the mappers and reducers run in the same process as the AM, inside the AM's own container. Uber jobs are jobs that are executed within the MapReduce ApplicationMaster: rather than communicating with the RM to create the mapper and reducer containers, the AM runs the map and reduce tasks within its own process and avoids the overhead of launching and communicating with remote containers. If you want to run MapReduce on a small amount of data, the uber configuration helps by cutting the extra time that MapReduce normally spends launching the mapper and reducer containers.
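Whether a job is small enough to run in uber mode comes down to a few threshold checks. The sketch below is an assumption-laden illustration: `is_uber_eligible` is a hypothetical function, and the default values are meant to mirror the `mapreduce.job.ubertask.enable`, `mapreduce.job.ubertask.maxmaps`, `mapreduce.job.ubertask.maxreduces`, and `mapreduce.job.ubertask.maxbytes` settings as commonly documented, so verify them against your Hadoop version. The real check also compares the tasks' memory and CPU needs with the AM container, which is omitted here.

```python
DEFAULT_MAX_BYTES = 128 * 1024 * 1024  # assumed to equal one HDFS block


def is_uber_eligible(num_maps, num_reduces, input_bytes,
                     enabled=False, max_maps=9, max_reduces=1,
                     max_bytes=DEFAULT_MAX_BYTES):
    """Return True if a job is small enough to run inside the AM's JVM."""
    return (enabled
            and num_maps <= max_maps
            and num_reduces <= max_reduces
            and input_bytes <= max_bytes)


if __name__ == "__main__":
    ten_mb = 10 * 1024 * 1024
    print(is_uber_eligible(2, 1, ten_mb, enabled=True))   # small job
    print(is_uber_eligible(20, 1, ten_mb, enabled=True))  # too many map tasks
```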

YARN Failure Handling

Container or Task Failure

The NodeManager detects container failures. When a container fails or dies, the NodeManager detects the failure event and reports it, so that the ApplicationMaster learns of the failure and can restart the task execution in a new container.

The replacement container need not be on the same node. If no container resource is available on that node, the ApplicationMaster requests a container from the ResourceManager and reschedules the task on a different node.

A task can also fail because of a runtime exception in user code or a bug in the JVM. The failure is communicated to the ApplicationMaster, which then requests a new container from the ResourceManager to relaunch the failed task.
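The retry behaviour can be sketched as a loop that re-runs a failed task until an attempt limit is reached. `run_with_retries` and `flaky_task` are hypothetical names; the limit of 4 matches the usual default for `mapreduce.map.maxattempts`, and in a real cluster each attempt would run in a freshly requested container, possibly on another node.

```python
def run_with_retries(task, max_attempts=4):
    """Run `task(attempt)` until it succeeds or the attempt limit is hit.

    Returns (attempt_number, result). Raises RuntimeError when every
    attempt fails, which corresponds to the job being marked as failed.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return attempt, task(attempt)
        except Exception as error:  # a task failure reported to the AM
            last_error = error
    raise RuntimeError(f"task failed after {max_attempts} attempts") from last_error


def flaky_task(attempt):
    """Simulated task that fails on its first two attempts."""
    if attempt < 3:
        raise ValueError("simulated runtime exception in user code")
    return "done"


if __name__ == "__main__":
    print(run_with_retries(flaky_task))
```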

Application Master Failure

In this case the ResourceManager starts a new ApplicationMaster in a container under a NodeManager.

Whether completed tasks need to be re-run depends on the ApplicationMaster implementation, because it determines the ability to recover the associated task state. The MapReduce ApplicationMaster has the ability to recover this state but, depending on the release, it may not be enabled by default.

When the job client polls the failed ApplicationMaster, it gets a timeout exception because it has cached the old ApplicationMaster's address. It then needs to go to the ResourceManager and get the address of the new ApplicationMaster.

Node Manager Failure

In this case the tasks that were running in containers on the failed node need to be rescheduled.

The ApplicationMaster gets another container for each of those tasks and runs it on a different machine under another NodeManager.

The ApplicationMaster should recover the tasks that ran on the failing NodeManager, but this depends on the ApplicationMaster implementation. The MapReduce ApplicationMaster has the additional capability to recover the failing tasks and to blacklist NodeManagers that fail often.

Resource Manager Failure

This is a serious failure.

No job can run until a new ResourceManager is brought up.

The good thing is that the ResourceManager keeps storing its state in persistent storage at checkpoints, so a new ResourceManager can start from there.

ResourceManager failover switches the ResourceManager from one instance to another, potentially running on a different machine, for high availability. It involves leader election, transfer of resource-management authority to a newly elected leader, and client redirection to the new leader. Refer to the following figure for the HA implementation of the RM.

Since all the necessary information is stored in persistent storage, it is possible to have more than one ResourceManager running at the same time. All of the ResourceManagers share the information through the storage, and none of them holds it only in memory. When a ResourceManager fails, another ResourceManager can easily take over, since all the needed state is in the storage. Clients, NodeManagers, and ApplicationMasters must be set up so that they can point to the new ResourceManager upon failure.

JOB SUBMISSION IN YARN

1. The client submits a MapReduce job by interacting with Job objects; the client runs in its own JVM.

2. The Job's code interacts with the ResourceManager to acquire application metadata such as the application ID.

3. The Job's code moves all job-related resources to HDFS to make them available for the rest of the job.

4. The Job's code submits the application to the ResourceManager.

5. Resource Manager chooses a Node manager with available resources and requests a

container for MRAppMaster.

6. Node manager allocates container for MRAppMaster; MRAppMaster will execute and co-

ordinate Map Reduce Job.

7. MRAppMaster grabs required resources from HDFS, such as input splits; these resources

were copied in step 3.

8. MRAppMaster negotiates with Resource Manager for available resources; Resource Manager

will select Node Manager that has the most resources and the data blocks.

9. MRAppMaster tells selected Node Manager to start Map and Reduce tasks.

10. The NodeManager creates YarnChild containers that will coordinate and run the tasks.

11. YarnChild acquires the job resources from HDFS that are required to execute the Map and Reduce tasks.

12. YarnChild executes the Map and Reduce tasks.


- Hadoop 2.0Документ20 страницHadoop 2.0krishan GoyalОценок пока нет

- Map RedДокумент6 страницMap Red20261A6757 VIJAYAGIRI ANIL KUMARОценок пока нет

- Shortnotes For CloudДокумент22 страницыShortnotes For CloudMahi MahiОценок пока нет

- Bda - 3 UnitДокумент18 страницBda - 3 UnitASMA UL HUSNAОценок пока нет

- Map ReduceДокумент10 страницMap ReduceVikas SinhaОценок пока нет
