Академический Документы

Профессиональный Документы

Культура Документы

What Is A HMM

Загружено:

afsana_shimuИсходное описание:

Оригинальное название

Авторское право

Доступные форматы

Поделиться этим документом

Поделиться или встроить документ

Этот документ был вам полезен?

Это неприемлемый материал?

Пожаловаться на этот документАвторское право:

Доступные форматы

What Is A HMM

Загружено:

afsana_shimuАвторское право:

Доступные форматы

PRIMER

What is a hidden IVIarkov model?

Sean R Eddy

Statistical models called hidden Markov models are a recurring theme in computational biology. What are hidden

Markov models, and why are they so useful for so many different problems?

Often, biological sequence analysis is just a

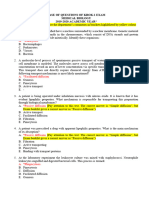

matter of putting the right label on each A = 0.25 A = 0.05 A = 0.4

C = 0.25 C=0 C

residue. In gene identification, we want to G = 0.25 G = 0.95 G=0 1

label nucleotides as exons, introns, or inter- T = 0.25 T=0 J = o!4

genic sequence. In sequence alignment, we

want to associate residues in a query End

sequence with homologous residues in a tar-

get database sequence. We can always write

an ad hoc program for any given problem,

but the same frustrating issues will always Sequence: C T T C A T TCA

recur. One is that we want to incorporate

heterogeneous sources of information. A

State path: E E E E E E I I I logP

genefinder, for instance, ought to combine I -41.22

splice-site consensus, codon bias, exon/ -43.90

intron length preferences and open reading Parsing: -43.45

frame analysis into one scoring system. How -43.94

-42.58

should these parameters be set? How should -41.71

different kinds of information be weighted? 46% •

A second issue is to interpret results proba-

bilistically. Finding a best scoring answer is Posterior I 28%

one thing, but what does the score mean, decoding:

and how confident are we that the best scor-

ing answer is correct? A third issue is exten- Figure 1 A toy HMM for 5' splice site recognition. See text for explanation.

sibility. The moment we perfect our ad hoc

genefinder, we wish we had also modeled

translational initiation consensus, alterna-

ing an intuitive picture. They are at the heart different statistical properties. Let's imagine

tive splicing and a polyadenylation signal.

of a diverse range of programs, including some simple differences: say that exons

Too often, piling more reality onto a fragile

ad hoc program makes it collapse under its genefinding, profile searches, multiple have a uniform base composition on average

own weight. sequence alignment and regulatory site (25% each base), introns are A/T rich (say,

identification. HMMs are the Legos of com- 40% each for A/T, 10% each for C/G), and

Hidden Markov models (HMMs) are a putational sequence analysis. the 5'SS consensus nucleotide is almost

formal foundation for making probabilistic always a G (say, 95% G and 5% A).

models of linear sequence 'labeling' prob- A toy HMM: 5' splice site recognition Starting from this information, we can

lems'"^. They provide a conceptual toolkit As a simple example, imagine the following draw an HMM (Fig. 1). The HMM invokes

for building complex models just by draw- caricature of a 5' splice-site recognition three states, one for each of the three labels

problem. Assume we are given a DNA we might assign to a nucleotide: E (exon),

sequence that begins in an exon, contains 5 (5'SS) and I (intron). Each state has its

Sean R. Eddy is at Howard Hughes Medical one 5' splice site and ends in an intron. own emission probabilities (shown above the

Institute & Department of Genetics, The problem is to identify where the switch states), which model the base composition

Washington University School of Medicine, from exon to intron occurred—where the of exons, introns and the consensus G at the

4444 Forest Park Blvd., Box 8510, Saint Louis, 5' splice site (5'SS) is. 5'SS. Each state also has transition probabili-

Missouri 63108, USA. For us to guess intelligently, the sequences ties (arrows), the probabilities of moving

e-mail: eddy@genetics.wustl.edu of exons, splice sites and introns must have from this state to a new state. The transition

NATURE BIOTECHNOLOGY VOLUME 22 NUMBER 10 OCTOBER 2004 1315

PRIMER

probabilities describe the linear order in with G at the 5'SS), The best one has a log For example, in our toy splice-site model,

which we expect the states to occur: one or probability of-41,22, which infers that the maybe we're not happy with our discrimina-

more Es, one 5, one or more Is, most likely 5'SS position is at the fifth G, tion power; maybe we want to add a more

For most problems, there are so many realistic six-nucleotide consensus GTRAGT

So, what's hidden? possible state sequences that we could not at the 5' splice site. We can put a row of

It's useful to imagine an HMM generating a afford to enumerate them. The efficient six HMM states in place of '5' state, to

sequence. When we visit a state, we emit a Viterbi algorithm is guaranteed to find the model a six-base ungapped consensus motif,

residue from the state's emission probability most probable state path given a sequence parameterizing the emission probabilities

distribution. Then, we choose which state to and an HMM, The Viterbi algorithm is a on known 5' splice sites. And maybe we

visit next according to the state's transition dynamic programming algorithm quite want to model a complete intron, including

probability distribution. The model thus similar to those used for standard sequence a 3' splice site; we just add a row of states for

generates two strings of information. One alignment. the 3'SS consensus, and add a 3' exon state

is the underlying state path (the labels), as to let the observed sequence end in an exon

we transition from state to state. The other Beyond best scoring alignments instead of an intron. Then maybe we want to

is the observed sequence (the DNA), each Figure 1 shows that one alternative state build a complete gene model,,,whatever

residue being emitted from one state in the path differs only slightly in score from put- we add, it's just a matter of drawing what

state path. ting the 5'SS at the fifth G (log probabilities we want.

The state path is a Markov chain, meaning of-41,71 versus -41,22), How confident are

that what state we go to next depends only we that the fifth G is the right choice? The catch

on what state we're in. Since we're only given This is an example of an advantage of HMMs don't deal well with correlations

the observed sequence, this underlying state probabilistic modeling: we can calculate our between residues, because they assume that

path is hidden—these are the residue labels confidence directly. The probability that each residue depends only on one under-

that we'd like to infer. The state path is a residue i was emitted by state k is the sum lying state. An example where HMMs are

hidden Markov chain. of the probabilities of all the state paths usually inappropriate is RNA secondary

The probability P(S,7t|HMM,e) that an that use state k to generate residue i (that is, structure analysis, Gonserved RNA base

HMM with parameters 9 generates a state TC; = k in the state path n), normalized by the pairs induce long-range pairwise correla-

path 7t and an observed sequence S is the sum over all possible state paths. In our toy tions; one position might be any residue,

product of all the emission probabilities and model, this is just one state path in the but the base-paired partner must be com-

transition probabilities that were used. numerator and a sum over 14 state paths in plementary. An HMM state path has no

For example, consider the 26-nucleotide the denominator. We get a probability of way of 'remembering' what a distant state

sequence and state path in the middle of 46% that the best-scoring fifth G is correct generated.

Figure 1, where there are 27 transitions and and 28% that the sixth G position is correct Sometimes, one can bend the rules of

26 emissions to tote up. Multiply all 53 (Fig, 1, bottom). This is called posterior HMMs without breaking the algorithms.

probabilities together (and take the log, decoding. For larger problems, posterior For instance, in genefinding, one wants to

since these are small numbers) and you'll decoding uses two dynamic programming emit a correlated triplet codon instead of

calculate log P(S,7t|HMM,e) = -41,22, algorithms called Forward and Backward, three independent residues; HMM algo-

An HMM is a full probabilistic model— which are essentially like Viterbi, but they rithms can readily be extended to triplet-

the model parameters and the overall sum over possible paths instead of choosing emitting states. However, the basic HMM

sequence'scores' are all probabilities. There- the best. toolkit can only be stretched so far. Beyond

fore, we can use Bayesian probability theory HMMs, there are more powerful (though

to manipulate these numbers in standard, Making more realistic models less efficient) classes of probabilistic models

powerful ways, including optimizing para- Making an HMM means specifying four for sequence analysis,

meters and interpreting the significance things: (i) the symbol alphabet, K different 1. Rabiner, L,R, A tutorial on hidden Markov modeis and

of scores. symbols (e,g,, ACGT, K= 4); (ii) the number seiected applications in speech recognition, Proc.

of states in the model, M; (iii) emission ; £ £ E 7 7 , 2 5 7 - 2 8 6 (1989),

2, Durbin, R,, Eddy, S,R,, Krogh, A, & Mitchison, G,J,

Finding the best state path probabilities e,(x) for each state ;, that sum Biological Sequence Analysis: Probabilistic Models of

In an analysis problem, we're given a to one over K symbols x, Xe,(x) = 1; and Proteins and Nucieic Acids {Cambridge University

sequence, and we want to infer the hidden Press, Cambridge UK, 1998),

(iv) transition probabilities tj(j) for each state

state path. There are potentially many state

paths that could generate the same 1 going to any other state j (including itself)

that sum to one over the M states;, X f,( j) = 1,

sequence. We want to find the one with the nature

highest probability.

For example, if we were given the HMM

Any model that has these properties is an HMM,

This means that one can make a new

biotechnology

and the 26-nucleotide sequence in Figure 1, HMM just by drawing a picture corres- Wondering how some other

there are 14 possible paths that have non- ponding to the problem at hand, like mathematical technique really works?

zero probability, since the 5'SS must fall on Figure 1, This graphical simplicity lets one Send suggestions for future primers to

one of 14 internal As or Gs, Figure 1 enu- focus clearly on the biological definition of askthegeek@natureny,com.

merates the six highest-scoring paths (those a problem.

1316 VOLUME 22 NUMBER 10 OCTOBER 2004 NATURE BIOTECHNOLOGY

Вам также может понравиться

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeОт EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeРейтинг: 4 из 5 звезд4/5 (5784)

- The Little Book of Hygge: Danish Secrets to Happy LivingОт EverandThe Little Book of Hygge: Danish Secrets to Happy LivingРейтинг: 3.5 из 5 звезд3.5/5 (399)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceОт EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceРейтинг: 4 из 5 звезд4/5 (890)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureОт EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureРейтинг: 4.5 из 5 звезд4.5/5 (474)

- The Yellow House: A Memoir (2019 National Book Award Winner)От EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Рейтинг: 4 из 5 звезд4/5 (98)

- Team of Rivals: The Political Genius of Abraham LincolnОт EverandTeam of Rivals: The Political Genius of Abraham LincolnРейтинг: 4.5 из 5 звезд4.5/5 (234)

- Never Split the Difference: Negotiating As If Your Life Depended On ItОт EverandNever Split the Difference: Negotiating As If Your Life Depended On ItРейтинг: 4.5 из 5 звезд4.5/5 (838)

- The Emperor of All Maladies: A Biography of CancerОт EverandThe Emperor of All Maladies: A Biography of CancerРейтинг: 4.5 из 5 звезд4.5/5 (271)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryОт EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryРейтинг: 3.5 из 5 звезд3.5/5 (231)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaОт EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaРейтинг: 4.5 из 5 звезд4.5/5 (265)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersОт EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersРейтинг: 4.5 из 5 звезд4.5/5 (344)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyОт EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyРейтинг: 3.5 из 5 звезд3.5/5 (2219)

- The Unwinding: An Inner History of the New AmericaОт EverandThe Unwinding: An Inner History of the New AmericaРейтинг: 4 из 5 звезд4/5 (45)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreОт EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreРейтинг: 4 из 5 звезд4/5 (1090)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)От EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Рейтинг: 4.5 из 5 звезд4.5/5 (119)

- Lab Report Sds-Page WB - PT 1 (1-5)Документ5 страницLab Report Sds-Page WB - PT 1 (1-5)Ezad juferiОценок пока нет

- 12th Chap 12 BoardДокумент3 страницы12th Chap 12 BoardVraj PatelОценок пока нет

- Bioinformatics Sequence and Genome Analy PDFДокумент3 страницыBioinformatics Sequence and Genome Analy PDFNatasha NawazОценок пока нет

- A FST 3110 IntroДокумент37 страницA FST 3110 IntroAfiQah AzizОценок пока нет

- COVID vaccination certificate for Indian teenДокумент1 страницаCOVID vaccination certificate for Indian teenShubham TiwariОценок пока нет

- The Aspects of GENE THERAPYДокумент12 страницThe Aspects of GENE THERAPYJasmin Prindiana100% (6)

- Struktur Materi GenetikДокумент73 страницыStruktur Materi GenetikFikry SyahrizalОценок пока нет

- IBL Company Profile and Services PDFДокумент16 страницIBL Company Profile and Services PDFAnand DoraisingamОценок пока нет

- Nitric Oxide and Hydrogen Peroxide Signaling in Higher PlantsДокумент275 страницNitric Oxide and Hydrogen Peroxide Signaling in Higher PlantsJ. Enrique BlandonОценок пока нет

- Biotechnology / Molecular Techniques in Seed Health ManagementДокумент6 страницBiotechnology / Molecular Techniques in Seed Health ManagementMadhwendra pathak100% (1)

- Future of Health3 E ONLINEДокумент28 страницFuture of Health3 E ONLINEdrshihadОценок пока нет

- Regulation of the trp operon in prokaryotesДокумент4 страницыRegulation of the trp operon in prokaryotesBelay Rayos del SolОценок пока нет

- Outline of A Research Paper Apa22Документ2 страницыOutline of A Research Paper Apa22api-216660156Оценок пока нет

- Universiti Malaysia Sarawak Pre-TranscriptДокумент3 страницыUniversiti Malaysia Sarawak Pre-TranscriptAmir AbuОценок пока нет

- 2018 01 (Jan) 11 Youtube Part 2 On 9 Stanley Plotkin, Godfather of Vaccines, UNDER OATH P. 52 To 96Документ40 страниц2018 01 (Jan) 11 Youtube Part 2 On 9 Stanley Plotkin, Godfather of Vaccines, UNDER OATH P. 52 To 96Jagannath100% (1)

- UPLB Accomplishment Report 2017 2 PDFДокумент120 страницUPLB Accomplishment Report 2017 2 PDFjonesОценок пока нет

- NCERT Exemplar For Class 12 Biology Chapter 6Документ31 страницаNCERT Exemplar For Class 12 Biology Chapter 6Me RahaviОценок пока нет

- Chlorophytum Borivilianum (Safed Musli) : A Vital Herbal DrugДокумент11 страницChlorophytum Borivilianum (Safed Musli) : A Vital Herbal DrugZahoor AhmadОценок пока нет

- Edexcel Biology IGCSE flashcards on living organismsДокумент23 страницыEdexcel Biology IGCSE flashcards on living organismsMD TAHSIN SIYAMОценок пока нет

- M and R ModuleДокумент247 страницM and R ModuleMuhammed SamiОценок пока нет

- MEDBIO KROK-1 English 2019-2020Документ65 страницMEDBIO KROK-1 English 2019-2020Катерина КабишОценок пока нет

- Hidden Markov Models in Bioinformatics: Example Domain: Gene FindingДокумент32 страницыHidden Markov Models in Bioinformatics: Example Domain: Gene FindingrednriОценок пока нет

- cDNA Synthesis Kit Biorad PDFДокумент2 страницыcDNA Synthesis Kit Biorad PDFMSc ZoologyОценок пока нет

- Biotech DirectoryДокумент98 страницBiotech DirectoryShreya Singh100% (1)

- Parts of the Animal CellДокумент1 страницаParts of the Animal CellVanessa RamírezОценок пока нет

- 13Документ788 страниц13Mamdouh SolimanОценок пока нет

- Cancer, Stem Cells and Development BiologyДокумент2 страницыCancer, Stem Cells and Development Biologysicongli.leonleeОценок пока нет

- Nucleolus A Central Hub For Nuclear FunctionsДокумент13 страницNucleolus A Central Hub For Nuclear FunctionsJuan Andres Fernández MОценок пока нет

- Agricultural Consultants Business PlanДокумент43 страницыAgricultural Consultants Business PlanNourdine ElbounjimiОценок пока нет

- GeneticEngineering GizmoДокумент4 страницыGeneticEngineering GizmokallenbachcarterОценок пока нет